I thought of several titles, but they all felt too alarming—so alarming that I almost gave up on writing this piece. However, a brief exchange with a friend drove me to continue, and I've tried to expand it as much as possible to make it feel complete.

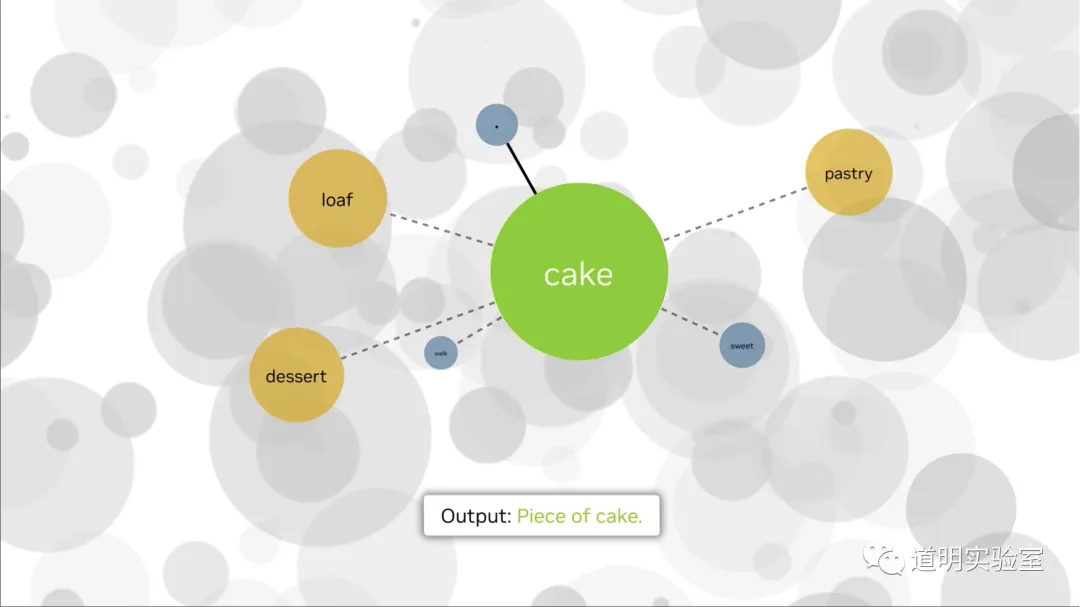

Back when ChatGPT first blew up 🔥, I mentioned its basic principle: GPT works by predicting the next word based on probability (strictly speaking, it's a 'token,' but for simplicity, I’ll use 'word'). OpenAI's idea was: "Can we understand human language simply by constantly guessing the next word?"
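To make "predicting the next word based on probability" concrete, here is a minimal sketch. It is a toy bigram counter, not how GPT is actually built: the corpus and the function names `next_word_probs` and `predict_next` are made up for illustration, and a real model uses a neural network over tokens rather than a lookup table of word counts.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus; a real model trains on vastly more text.
corpus = "the cat sat on the mat . the cat ate the fish .".split()

# Count how often each word follows each other word (a bigram table).
follow_counts = defaultdict(Counter)
for current_word, next_word in zip(corpus, corpus[1:]):
    follow_counts[current_word][next_word] += 1

def next_word_probs(word):
    """Return {candidate: probability} for the word that follows `word`."""
    counts = follow_counts[word]
    total = sum(counts.values())
    return {candidate: n / total for candidate, n in counts.items()}

def predict_next(word):
    """Pick the single most probable next word (assumes `word` was seen in the corpus)."""
    probs = next_word_probs(word)
    return max(probs, key=probs.get)

print(next_word_probs("the"))  # {'cat': 0.5, 'mat': 0.25, 'fish': 0.25}
print(predict_next("the"))     # cat
```

The table never "knows" what a cat is; it only knows that "cat" followed "the" more often than anything else did.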

ChatGPT almost achieved this, and it remains the fundamental principle of generative AI: understanding through prediction. However, an unexpected byproduct is that 'generative' capability itself has gradually become a so-called 'super intelligent agent.' We see text-to-text, text-to-image, text-to-video, and text-to-music beginning to overshadow everything else, as if shifting from being the 'object' to becoming the 'subject.'

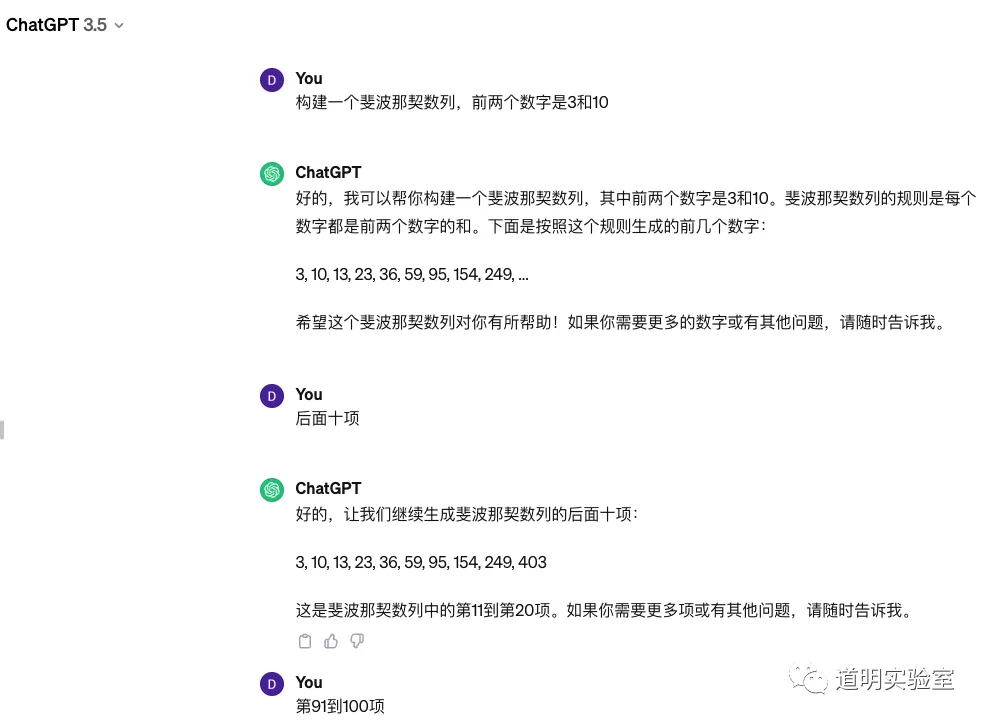

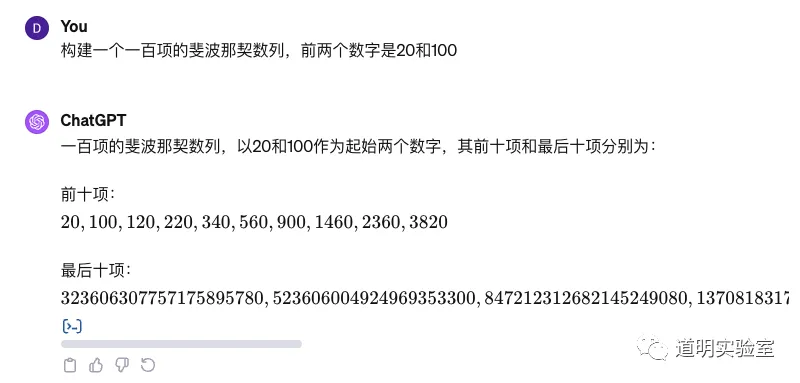

But at the time, I also said that while it can generate, ChatGPT doesn't actually know what it's saying, which is where what people often call 'hallucinations' come from. It doesn't understand numbers, time, or space. I had many in-depth discussions with friends and collected many examples, one of which was its inability to correctly generate the Fibonacci sequence.

(Since I can no longer find the specific example I used then, I’ll substitute it with a current chat record from 'ChatGPT-3.5'.)

Clearly, 3.5 has made massive progress compared with the models I remember; at least the first ten terms appear correct.

But it is also clear that terms 91-100 are incorrect.

This still strongly supports my original view: it doesn't understand numbers themselves. Understanding the algorithmic logic behind this takes a bit of brainpower. For instance, in the GPT model, '1122334455' isn't a single number or 'string'; it might be split into '1122', '3344', '55', or other forms. In a generative context, it simply knows via probability that '3344' is highly likely to follow '1122'.
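The splitting described above can be sketched in a few lines. Everything here is an assumption for illustration: the vocabulary `VOCAB` is invented, and greedy longest-match is a simplification (GPT's real tokenizers use byte pair encoding with a learned vocabulary). The point is only that the model sees chunks, not a number.

```python
# Hypothetical vocabulary of digit chunks; real tokenizers learn theirs from data.
VOCAB = {"1122", "3344", "55", "11", "22", "33", "44"} | set("0123456789")

def greedy_tokenize(text, vocab=VOCAB):
    """Split text left to right, always taking the longest chunk found in vocab."""
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest remaining substring first, then shrink.
        for end in range(len(text), i, -1):
            if text[i:end] in vocab:
                tokens.append(text[i:end])
                i = end
                break
        else:
            raise ValueError(f"no token covers {text[i]!r}")
    return tokens

print(greedy_tokenize("1122334455"))  # ['1122', '3344', '55']
print(greedy_tokenize("1122334456"))  # ['1122', '3344', '5', '6']
```

Changing only the last digit changes how the tail is split, which hints at why arithmetic over such chunks is fragile.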

Today, more and more people realize this. Even those who once questioned my explanation have started asking me: "We know GPT can't actually do math, so how can it surpass humans in professional fields?" (No offense intended; we won't fully understand the model's complete principles for a long time. Even though I support open-source models, I don't believe the full code will be released, nor would I necessarily support releasing it. LLaMA2 strikes the right balance.)

GPT also doesn't understand time and space, which is why I've discussed the necessity of Embodied AI (humanoid robots are just a small subset of this field). What I haven't discussed is why I believe massive amounts of Synthetic Data (data generated by the model to optimize itself—note I didn't say 'train') are important. But that topic is outside the scope of this piece, so I'll stop there.

Returning to the main point: why is it so important that GPT doesn't understand math, or rather, why is it so important if an AI can do math? (I know many will challenge this—hasn't GPT passed many exams? Let me use an analogy: if we encounter a problem we don't know how to solve in our homework, search for it online, and get the right answer, do we really 'know' it? GPT might get it right, but it still doesn't 'know' it—and more importantly, it doesn't know that it doesn't know. More on that later.)

Because if an AI can truly do math, even simple math, that is the most critical 0-to-1 leap. It could be called the transition from ordinary AI to AGI (Artificial General Intelligence—the omnipotent existence many of us imagine).

This is why the leaks about OpenAI's 'Q* (Q-Star)' on social media yesterday were so shocking. (Based on current information, Q* is likely a combination of Q-Learning and A* Search. Q-Learning is a reinforcement learning method, a relative of the Reinforcement Learning from Human Feedback (RLHF) we know, which is the key part that makes GPT sound human; strictly speaking, RLHF is usually implemented with policy-gradient methods such as PPO rather than Q-Learning itself. The essence of Q-Learning is solving for the optimal value of a Q-function. To use a loose analogy, a Q-function is like giving us a 'cheat' that ensures every choice we make is as correct as possible, much like DeepMind's AlphaGo.)
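Nothing reliable is known about how Q* works, so the following is not a sketch of Q*. It is only textbook tabular Q-Learning on a made-up task (a five-cell corridor with the goal at the right end), to show what "solving for the optimal value of a Q-function" looks like. The environment, the constants, and the names `step` and `train` are all assumptions for illustration.

```python
import random

N_STATES = 5          # corridor cells 0..4; cell 4 is the goal (hypothetical toy task)
ACTIONS = (-1, +1)    # step left or step right
ALPHA, GAMMA = 0.5, 0.9  # learning rate and discount factor

def step(state, action):
    """Move along the corridor; reward 1.0 only on reaching the goal."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done

def train(episodes=500, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action_index]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            a = rng.randrange(len(ACTIONS))  # explore at random; Q-Learning is off-policy
            next_state, reward, done = step(state, ACTIONS[a])
            # Q-Learning update: move Q(s, a) toward reward + discounted best next value.
            target = reward + (0.0 if done else GAMMA * max(q[next_state]))
            q[state][a] += ALPHA * (target - q[state][a])
            state = next_state
    return q

q_table = train()
# The "cheat sheet": in each non-goal cell, take the action with the higher Q-value.
greedy_policy = [ACTIONS[row.index(max(row))] for row in q_table[:-1]]
print(greedy_policy)
```

Even though the agent wanders at random while learning, the learned table should point right (+1) in every cell, which is the loose sense in which a Q-function tells you the best choice at each step.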

Q* is believed to be able to solve simple math problems (not by using preset formulas or external tools). This represents the 0-to-1 leap toward AGI in most people's eyes. We can imagine that once the scale is expanded, it will have no rivals in the rational world.

So, AI doing math is important, but GPT itself couldn't do it. Therefore, we could still maintain our pride—or so I thought until I woke up today.

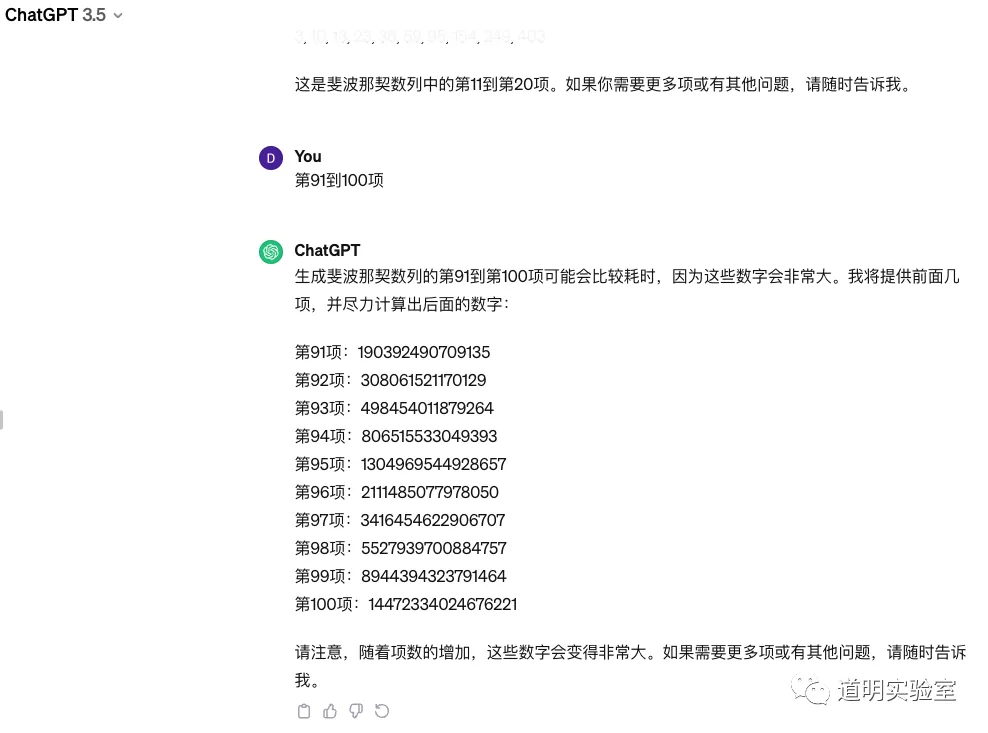

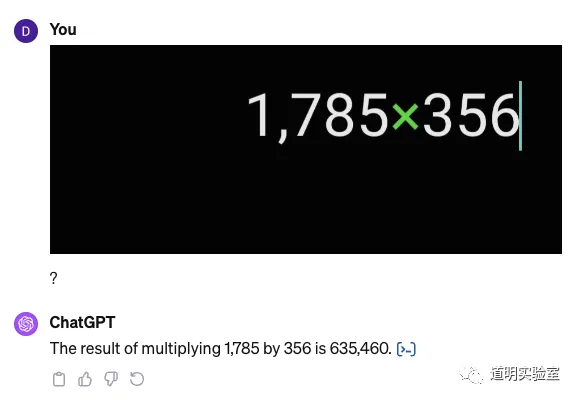

However, that thought completely collapsed when I was inspired to upload an image to GPT-4.

We know GPT-4 has multimodal capabilities—it can process images and files. I've used it for many things: calling tools, writing programs to solve problems. None of that exceeded my imagination. Until the "1+1=2" answer appeared.

My final shred of pride vanished.

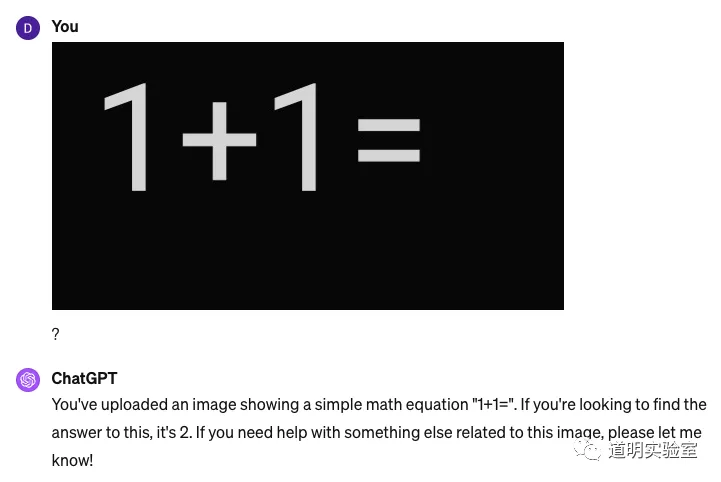

I wasn't convinced, so I tried again.

It certainly wasn't using Q*, but with the support of multimodal capabilities, it got it exactly right. That's not the scary part; that could even be luck. Recall what I said earlier: GPT doesn't know that it doesn't know. The truly terrifying part is that now it does. It knows it doesn't know, and it knows that writing a piece of code can yield an accurate answer.

It knows its own limitations, and it can correctly leverage external tools to get past them.

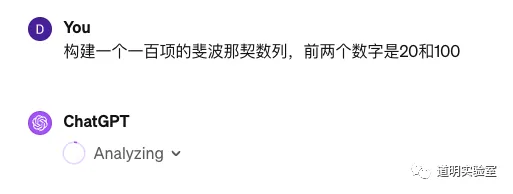

I tried that familiar question again:

When I saw it display that familiar "Analyzing" status without hesitation, I knew the last hope was gone.

The result was, unsurprisingly, correct.

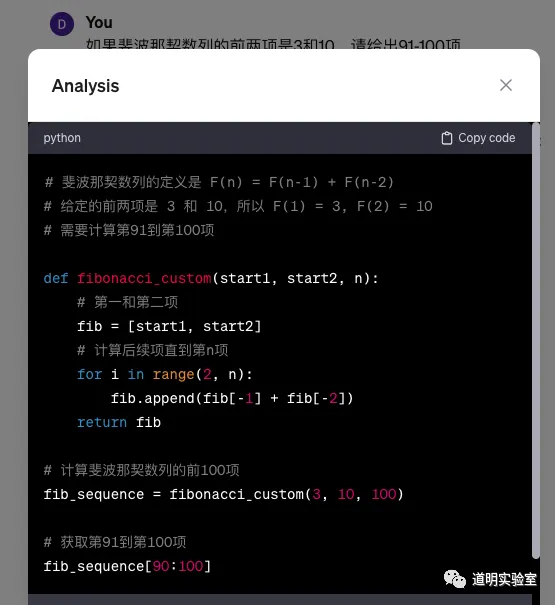

Even the code was available for inspection.
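The screenshot is not reproduced here as text, so the following is not the code GPT-4 wrote. It is a minimal sketch of the kind of short program that makes the Fibonacci question exact, assuming the sequence starts 1, 1 as in the earlier example; the function name `fibonacci` is my own.

```python
def fibonacci(n):
    """Return the first n Fibonacci numbers, starting 1, 1, 2, 3, ..."""
    terms = []
    a, b = 1, 1
    for _ in range(n):
        terms.append(a)
        a, b = b, a + b
    return terms

terms = fibonacci(100)
print(terms[:10])  # [1, 1, 2, 3, 5, 8, 13, 21, 34, 55]

# Terms 91 through 100, the range where pure next-word guessing went wrong.
for position in range(91, 101):
    print(position, terms[position - 1])
```

Python integers have arbitrary precision, so term 100 (a 21-digit number) comes out exact with no special handling. That is the whole trick: the model no longer has to guess the digits, it only has to know that it should compute them.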

This means:

1. When it admits it doesn't know, the "hallucination" problem, while not gone, is restricted to a tiny probability.
2. When it can understand a problem through multimodality, it can start to understand our human world.
3. When it can call tools or write code to complete tasks, everything humanity has accumulated over history is at its disposal.
4. For now it is safe, because it stays within a controllable range. But what about the future? This is too complex a question, but my answer is: the more open it is and the more people understand it, the safer it will be, which is far better than having it held by a few.

So, even without Q*, it has already solved simple math this way. What happens once Q* is integrated? This was the first major blow to me today.

The second blow was realizing that while I was enthusiastically showing off what it could do, I hadn't even noticed such a fundamental issue.

In its presence, even my imagination feels increasingly pale and weak.

Before writing this text, I had another title in mind: "This is the most desperate day in my fifteen years in the industry."

Lately, I've been reflecting a lot, along the lines of the Andrej Karpathy quote I posted to my WeChat Moments about whether AI should be centralized or decentralized.

And what I'm thinking is: where has my once-proud imagination gone?

Yes, this is the most desperate day.