Pitfalls of DIYing a Top-Tier 3D Imaging System (Part I)

For the past year or so, while I've been working on 3D reconstruction, my daughter has kept challenging me: "Google has already done all this, what's the point of you doing it?"

It's true: Google's Immersive View already covers many cities. But, as projects like Cesium also demonstrate, that kind of city-scale 3D is a great tool and nothing more. As a way to lift 2D photography into three dimensions, it is practically unusable for high-end needs.

My goal for 3D reconstruction is clear: to generate the highest quality results—results that can rival my photography both technically and artistically. Consequently, for over a year, I have been continuously searching for algorithms, optimizing workflows, and hunting for the right hardware.

I recently received the Revopoint Miraco, which I backed on crowdfunding last year. It's excellent: about the size of a Makina 670 folding camera, it handles just like a camera, does its modeling on the device, and is both fast and high quality.

However, its drawbacks are obvious: the shooting distance is limited to within one meter, and it doesn't adapt well to outdoor lighting environments. Thus, while it's a high-precision tool that fits into my workflow, it isn't suitable for the architectural subjects I want to capture.

There are many outdoor mapping solutions, but their results are often on par with a household robot vacuum scanning a room. The reasons are twofold:

1. Poor lens quality. Although many products now use multiple lenses, including RGB-D (depth-sensing) and infrared, and some even feature LiDAR, resolutions are often capped at 640x480 due to cost.

2. Limited computing power. Image processing, especially 3D reconstruction, is incredibly demanding. Low-cost edge chips cannot support even 1080P HD reconstruction.

This explains why Apple's Vision Pro is so expensive: the imaging components and processing chips required to achieve even an "acceptable" result in an integrated device are astronomically expensive, and that's with Apple Silicon being the strongest edge-side chip on Earth.

Even so, that imaging quality won't surpass the result of running 86 of my 24-megapixel photos through RealityCapture on an Nvidia 4090 card.

And this is only the beginning.

Upstream of that reconstruction step lies the whole capture process; downstream lie the models and the post-processing adjustments.

Currently, there is much more room for optimization at the capture end. The final quality is determined by the capture stage: the quality of the images, the number of images, and the information overlap between them.
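To make the link between overlap and image count concrete, here is a minimal sketch of the arithmetic. It assumes a simplified setup that is not from my actual workflow: the camera faces a flat facade head-on and moves sideways, parallel to it. The function names and the example numbers are purely illustrative.

```python
import math


def footprint_width(distance_m: float, hfov_deg: float) -> float:
    """Width of facade covered by one frame at the given distance."""
    return 2.0 * distance_m * math.tan(math.radians(hfov_deg) / 2.0)


def step_for_overlap(distance_m: float, hfov_deg: float, overlap: float) -> float:
    """Sideways distance between consecutive shots for a given overlap ratio."""
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in [0, 1)")
    return footprint_width(distance_m, hfov_deg) * (1.0 - overlap)


def shots_needed(facade_m: float, distance_m: float,
                 hfov_deg: float, overlap: float) -> int:
    """Number of frames needed to cover a facade of the given width."""
    width = footprint_width(distance_m, hfov_deg)
    if facade_m <= width:
        return 1
    step = step_for_overlap(distance_m, hfov_deg, overlap)
    # Small epsilon so floating-point noise does not add a spurious frame.
    return 1 + math.ceil((facade_m - width) / step - 1e-9)


if __name__ == "__main__":
    # Hypothetical numbers: 65 m facade, shot from 10 m with a 90 degree lens.
    for overlap in (0.5, 0.8):
        print(overlap, shots_needed(65.0, 10.0, 90.0, overlap))
```

The point of the sketch is only that raising the overlap shrinks the step between shots, so the image count climbs quickly. Real capture planning also has to handle vertical coverage, oblique angles, and occlusion.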

There are systems that meet these requirements, such as those from my partner brand, Phase One. They offer 150-megapixel digital backs, specialized high-definition digital lenses, global control modules, navigation modules, and IMU modules. These come at a price, but the real barrier is logistics: you need a full-sized aircraft (not a drone) and a large team.

Even as a Phase One ambassador, I can't manage a system like that right now. However, these world-class solutions, combined with the maturing robotics ecosystem, gave me an idea: I could build a small-scale ground version. By controlling size and weight, I could drastically improve capture efficiency and achieve top-tier image quality using cloud computing and model matrices.

But this is a massive undertaking filled with pitfalls. For the hardware, I'd need at least a medium-format camera like the Fuji GFX (Phase One backs are better, but Fuji is more suitable in terms of system weight and capture efficiency).

I'd also need an Nvidia Jetson Orin Developer Kit (256 TOPS, 64GB unified memory, supporting CUDA/cuDNN, 12V/35W power via Type-C) and a small touchscreen.

Plus a LiDAR unit, like the Unitree L1.

And mounts, IMUs, GNSS/RTK support, and perhaps a depth camera array. The hardware alone is a rabbit hole, not to mention the unmanned rover I'm preparing...

I originally thought this was just a matter of selecting and pairing hardware, especially since Large Language Models have ushered in an era of low-code or no-code development.

But when I actually started the hands-on phase in early 2024, I realized I was far too naive.

The above was just context. Now for the real "pitfalls": I am currently stuck in a code abyss with no exit in sight. After a week of torment, I've finally taken a step or two forward. Thus, this "Pitfall Diary (Part I)".

Conceptually, the system should:

- Suit most outdoor environments, be portable, and be able to climb stairs.

- Provide real-time preliminary 3D results during shooting for on-site adjustments.

- Automatically align and fuse camera photos with LiDAR, IMU, high-precision navigation, and depth data.

- Feature a global control interface for rapid modeling and live preview.

This is why I need the Orin, the screen, the LiDAR, and a reliable power system.
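The alignment requirement in the list above starts with something mundane: working out which LiDAR or IMU sample belongs to which photo. A minimal sketch of nearest-timestamp matching follows. It assumes all devices already share a common clock, which in practice is a problem of its own, and the function names and the 50 ms tolerance are hypothetical.

```python
from bisect import bisect_left
from typing import List, Optional, Sequence


def nearest_sample(sample_times: Sequence[float], t: float) -> int:
    """Index of the entry in the sorted sample_times that is closest to t."""
    if not sample_times:
        raise ValueError("sample_times must not be empty")
    i = bisect_left(sample_times, t)
    if i == 0:
        return 0
    if i == len(sample_times):
        return len(sample_times) - 1
    before, after = sample_times[i - 1], sample_times[i]
    # Prefer the earlier sample when the two are equally close.
    return i if (after - t) < (t - before) else i - 1


def match_photos(photo_times: Sequence[float],
                 sample_times: Sequence[float],
                 max_gap_s: float = 0.05) -> List[Optional[int]]:
    """For each photo, the index of the nearest sensor sample,
    or None when that sample is further away than max_gap_s."""
    matches: List[Optional[int]] = []
    for t in photo_times:
        j = nearest_sample(sample_times, t)
        matches.append(j if abs(sample_times[j] - t) <= max_gap_s else None)
    return matches


if __name__ == "__main__":
    imu_times = [0.0, 0.1, 0.2, 0.3]   # seconds, sorted
    photo_times = [0.04, 0.26, 1.0]    # the last photo has no nearby sample
    print(match_photos(photo_times, imu_times))
```

A real pipeline would interpolate poses between samples instead of snapping to the nearest one, but even this crude pairing shows why every sensor needs a trustworthy timestamp.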

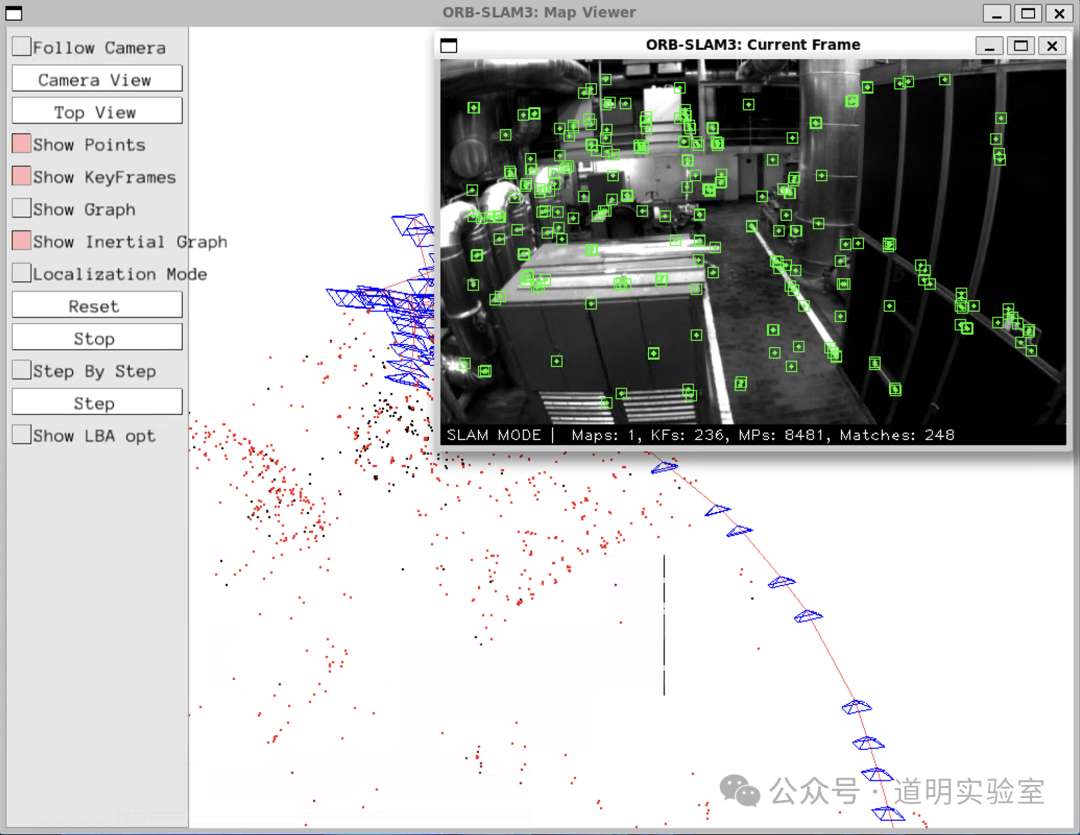

The first step was installing tools on the Orin: gphoto2 for camera control, orb_slam for real-time mapping, colmap for alignment, real-time NeRF algorithms...
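For the camera-control piece, the simplest route is to drive the gphoto2 command-line tool from a script. The sketch below is not my actual capture code: it only wraps the `--capture-image-and-download` and `--filename` options of the gphoto2 CLI, and the filename pattern and function names are illustrative. Whether a given camera supports tethered capture this way depends on the camera and the libgphoto2 build.

```python
import shutil
import subprocess
from typing import List


def build_capture_command(pattern: str = "capture_%Y%m%d-%H%M%S.%C") -> List[str]:
    """Argument list for a single tethered capture.

    The pattern is a hypothetical default: gphoto2 expands strftime-style
    fields, and %C stands for the file suffix reported by the camera.
    """
    return ["gphoto2", "--capture-image-and-download", "--filename", pattern]


def capture_once(pattern: str = "capture_%Y%m%d-%H%M%S.%C") -> subprocess.CompletedProcess:
    """Trigger one capture and download the file into the working directory."""
    if shutil.which("gphoto2") is None:
        raise RuntimeError("gphoto2 is not installed or not on PATH")
    return subprocess.run(
        build_capture_command(pattern),
        capture_output=True,
        text=True,
        check=False,  # leave error handling to the caller
    )
```

Calling `capture_once()` with a camera attached returns a `CompletedProcess`. A non-zero `returncode` with a message in `stderr` is usually the first hint of the permission problems described below.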

Getting these to work has been my nightmare for the past week. I'll summarize the technical hurdles briefly for future reference and for any other "crazy" souls out there:

- Architecture Pitfalls: Most libraries are built for x86_64, while the Orin is ARM (aarch64). Almost every library required recompilation. I haven't touched C++ in years, and modern Makefiles have become incredibly convoluted.

- Dependency Hell: Low-level libraries often hadn't been updated since 2021 or earlier. Modern compilers like GCC 14 are out, but this code is stuck in the GCC 11 era. It’s not just about specifying a version; it's about compatibility.

- System Version Issues: I spent an entire night upgrading the Jetson to JetPack 6 (Developer Preview), which uses Ubuntu 22.04. Consequently, I had to deploy a cross-compilation environment for aarch64 on other devices running older Ubuntu versions.

- CUDA Frustrations: CUDA is a 15-year-old cornerstone of the Nvidia ecosystem, yet I don't remember a single time a CUDA deployment went smoothly. Many third-party libraries treat CUDA on an SoC and CUDA on a discrete GPU very differently. I must praise Apple here; although they reinvented the wheel with MLX, the developer experience on Apple Silicon is seamless compared to this.

- Driver & Permission Issues: Professional cameras lack Linux software. I had to use `gphoto2` (and `libgphoto2`), which required full recompilation for aarch64, dealing with vague documentation, library linking, and, most unexpectedly, USB port permissions.

- Display & X Forwarding: OpenGL support, Qt installation, and X Forwarding were a mess. Since macOS has deprecated OpenGL, I couldn't forward Qt windows back to my Mac. Since server-grade A100/H100s lack graphics capabilities, they don't support OpenGL either. I finally settled it using WSL on a Windows laptop.

- Code Compatibility: Some code relied on the Boost library, which underwent a major architectural change recently. I had to manually fix hundreds of errors, but luckily, it finally compiled.
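Several of the hurdles above can at least be detected before a long build or a failed capture. Below is a minimal preflight sketch, not a fix. It checks the CPU architecture, looks for USB device nodes the current user cannot open, and checks whether an X display is configured. The `/dev/bus/usb` layout is the usual one on Linux, but the function names are my own and the checks are deliberately shallow.

```python
import os
import platform
from pathlib import Path
from typing import List, Mapping, Optional


def is_aarch64(machine: Optional[str] = None) -> bool:
    """True when running on a 64-bit ARM machine such as the Orin."""
    machine = machine or platform.machine()
    return machine.lower() in {"aarch64", "arm64"}


def inaccessible_usb_nodes(root: str = "/dev/bus/usb") -> List[str]:
    """USB device nodes that the current user cannot both read and write."""
    root_path = Path(root)
    if not root_path.is_dir():
        return []
    return sorted(
        str(node)
        for node in root_path.glob("*/*")
        if not os.access(node, os.R_OK | os.W_OK)
    )


def has_x_display(env: Optional[Mapping[str, str]] = None) -> bool:
    """True when DISPLAY is set, i.e. an X server or forwarding is configured."""
    env = os.environ if env is None else env
    return bool(env.get("DISPLAY"))


if __name__ == "__main__":
    print("aarch64:", is_aarch64())
    print("USB nodes without read/write access:", inaccessible_usb_nodes())
    print("X display configured:", has_x_display())
```

If the camera's node shows up in the inaccessible list, the usual remedy is a udev rule or a group membership change. A set `DISPLAY` only says that forwarding is configured, not that OpenGL will actually render through it.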

Seeing that window finally appear on my screen felt like a true first step. Now that I can process the image data stream, the puzzle is coming together, even though a massive amount of work remains.

PS: The hardware and libraries I'm using are the same ones powering modern autonomous driving and robotics. Beneath the futuristic, dreamlike exterior of these technologies lies a foundation of aging, almost obsolete low-level code.

Yesterday, I posted this: "Those making money have no time to rewrite the low-level code; those not making money have no motivation to do so."

I don't have the motivation to rewrite it either; perhaps I'll just look for a way to bypass it altogether.