Latest Speech by the "Father of AI" Geoffrey Hinton: Digital Intelligence Will Triumph Over Biological Intelligence

Geoffrey Hinton, acclaimed as the "Father of AI," delivered a speech at Oxford University on February 19, 2024, titled "Will digital intelligence replace biological intelligence?"

The speech runs less than 37 minutes, and some of its viewpoints may sound familiar. Setting aside the discussion of AI risks, though, Hinton's perspective, his understanding of digital versus biological intelligence, and his new ideas on computation infrastructure for training are truly inspiring. It left me reflecting all night: I used to think it did not matter whether Large Language Models (LLMs) have "IQ" in the human sense, but the old master believes the question is crucial because it determines the direction of AI research. Furthermore, he believes current models do have "IQ"; he "believes in the light."

Nowadays, when I watch videos, I have Gemini Ultra 1.0 watch them too and provide a summary, as shown below. Friends who wish to see the original video can access it via the link in the screenshot.

This is a capability GPT-4 currently lacks. Of course, the summary above contains obvious errors, such as time and location, because the video didn't provide that context—resulting in a "hallucination." However, Hinton offers a different explanation for this phenomenon in the video, which I found fascinating and will mention later.

While most agree GPT-4 is more powerful, it has gradually faded from my daily work and life. The reason is simple: no matter how strong GPT-4's reasoning is, it's not yet enough to replace my "thinking," whereas Gemini Ultra is a better tool and executor.

Below are excerpts from most of Hinton's slides (omitting the sections on risks and threats for various reasons), with simple explanations and my own reflections:

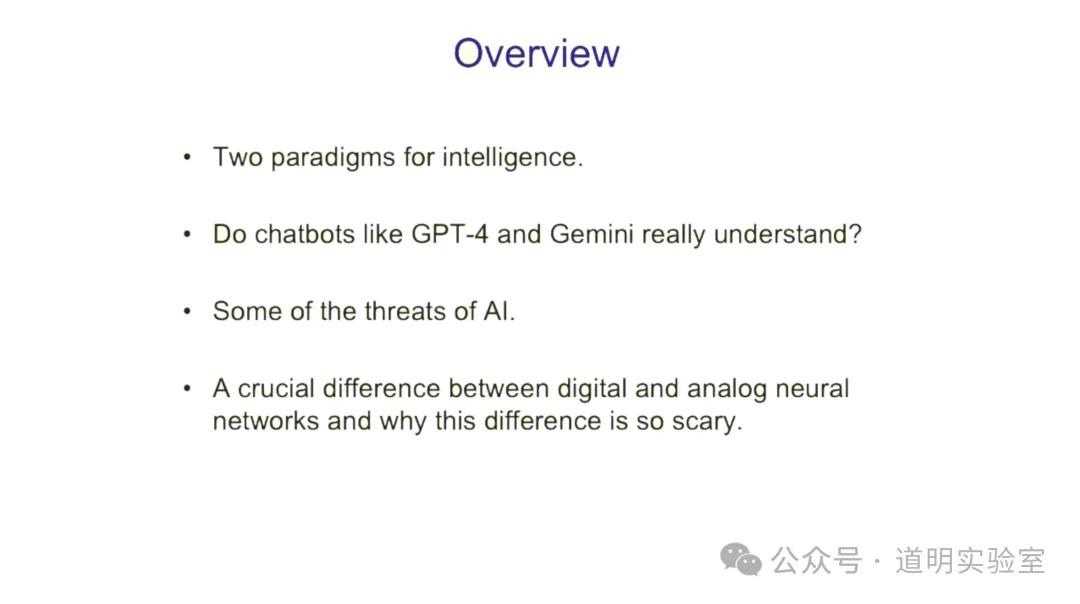

1. Overview

Since this is an overview, I handed it to Gemini for translation. Because the slide was uploaded within the context of the video analysis session, Gemini "over-delivered" on the task:

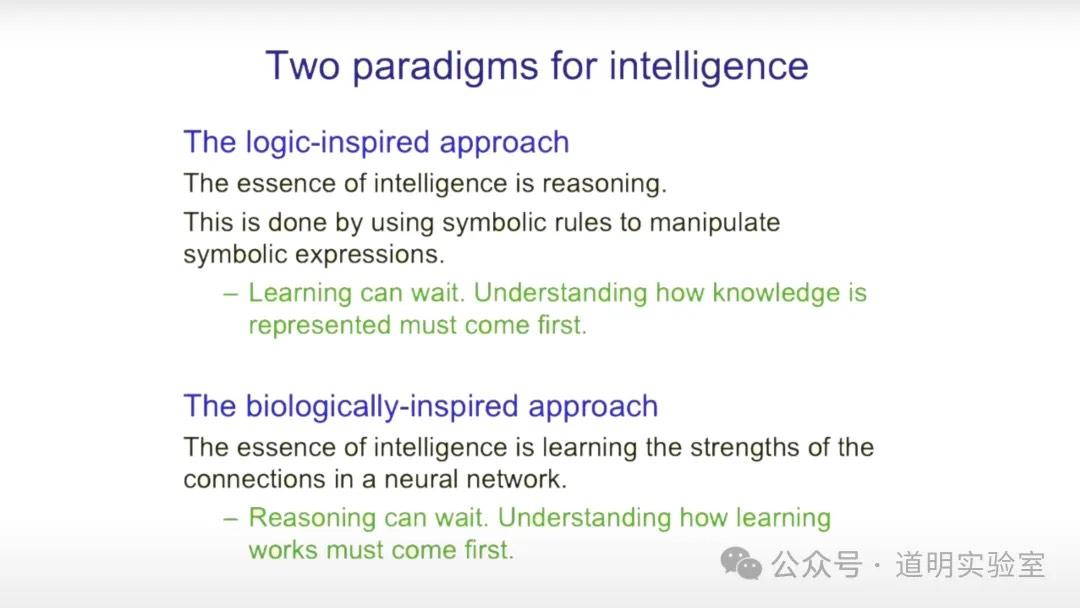

2. Two Different Types of Intelligence

A profound comparison:

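- The biologically inspired view treats learning as the essence of intelligence: first understand how learning works, and reasoning can come later.

- The logic-inspired view treats reasoning as the essence: first settle how knowledge is represented, and learning can come later.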

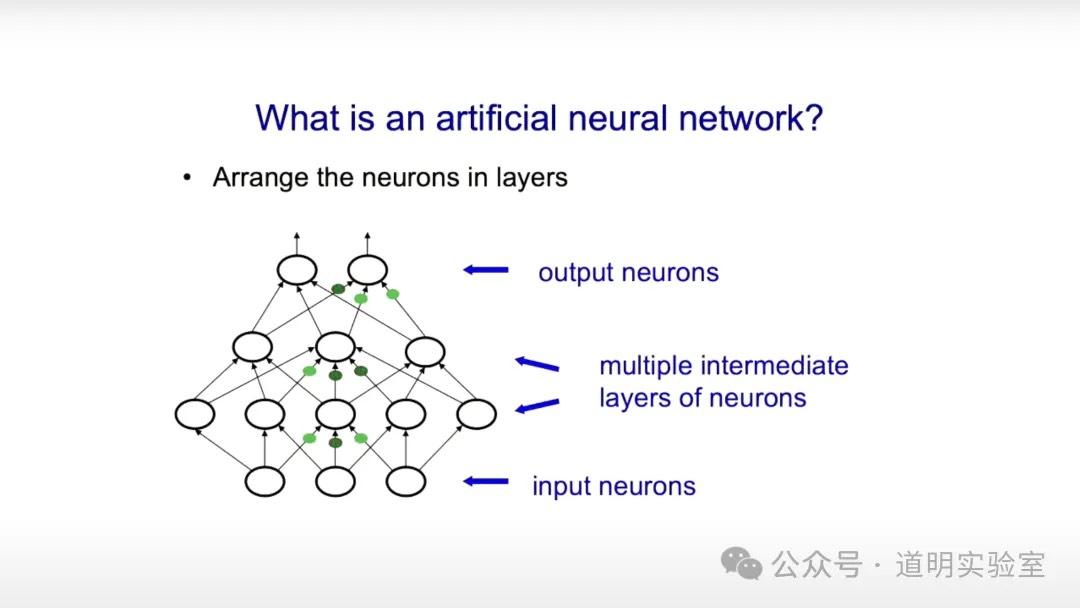

3. Artificial Neural Networks Explained

The basics: an artificial neural network loosely mimics biological neurons, connecting simple units into a network that takes inputs and produces outputs.

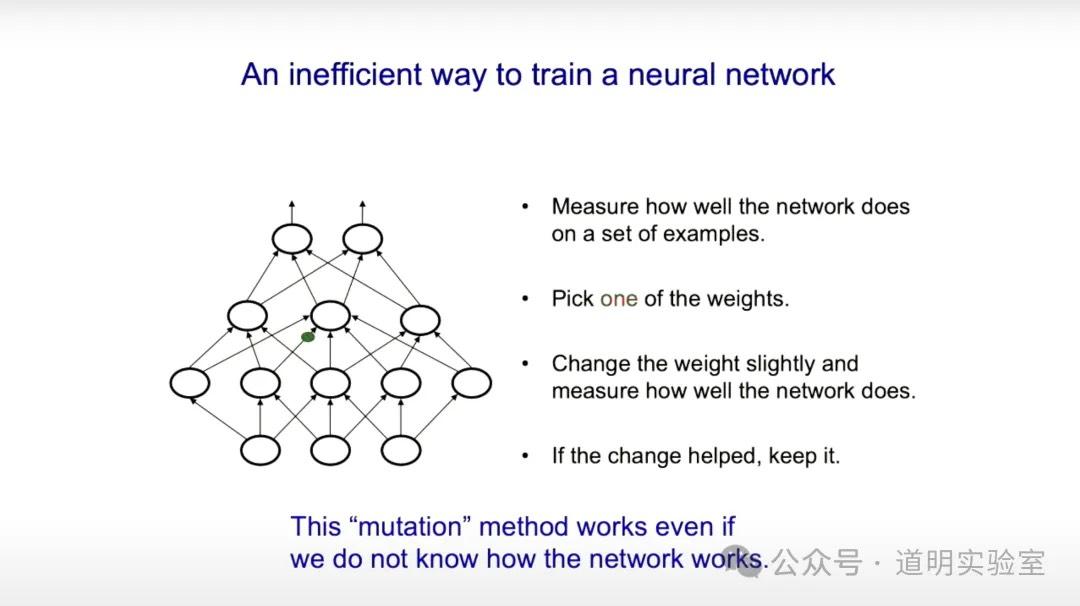

4. Inefficient Training Methods

In a neural network, each neuron is a simple function, and together they amount to a large matrix computation. The "weights" on the connections between neurons are the network's parameters and directly determine the output.

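An inefficient method is akin to genetic mutation: slightly adjust one weight and see whether the output improves. If it does, keep the change and move on to the next "mutation." This random approach is extremely inefficient, because every trial means re-running the network on the examples just to learn about a single weight.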
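To make the "mutation" idea concrete, here is a minimal sketch in plain Python. It is not Hinton's code: the two-weight linear model, the toy data, and the names `loss` and `mutate_train` are all my own assumptions for illustration.

```python
import random

def loss(weights, data):
    """Mean squared error of a toy linear model y = w0 * x + w1."""
    w0, w1 = weights
    return sum((w0 * x + w1 - y) ** 2 for x, y in data) / len(data)

def mutate_train(data, steps=2000, scale=0.1, seed=0):
    """Perturb one randomly chosen weight at a time; keep the change only if the loss drops."""
    rng = random.Random(seed)
    weights = [0.0, 0.0]
    best = loss(weights, data)
    for _ in range(steps):
        i = rng.randrange(len(weights))
        old = weights[i]
        weights[i] = old + rng.gauss(0.0, scale)  # the random "mutation"
        trial = loss(weights, data)  # a full pass over the data for one weight
        if trial < best:
            best = trial             # keep the mutation
        else:
            weights[i] = old         # undo it
    return weights, best

# Toy data generated from y = 2x + 1 (an assumption for this sketch).
data = [(x, 2.0 * x + 1.0) for x in range(-5, 6)]
initial_loss = loss([0.0, 0.0], data)
weights, final_loss = mutate_train(data)
print(initial_loss, final_loss, weights)
```

With two weights this works tolerably. The cost is one full evaluation per weight per trial, which is what makes the approach hopeless for a network with billions of weights.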

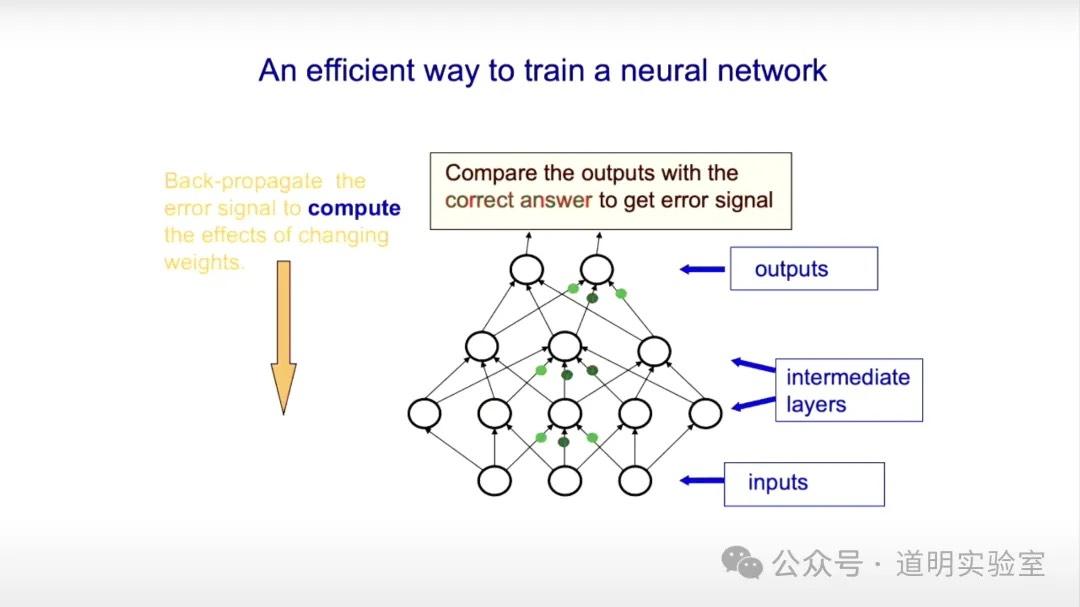

5. An Effective Training Method

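Effective training uses backpropagation: the network's output is compared with the correct answer, and the error is propagated backward through the network to work out, for every weight at once, how a small change would affect that error. The weights are then adjusted (usually via gradient descent) to reduce the error (the loss function), and the process repeats until the loss stops improving. The choice of activation function (Sigmoid, ReLU, Softmax) also matters.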
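As a rough illustration of backpropagation plus gradient descent, here is a small sketch in plain Python. It assumes a tiny network with one tanh hidden layer and a squared-error loss, trained on XOR; the layer sizes, learning rate, and function names are my own choices and do not come from the talk.

```python
import math
import random

def forward(params, x):
    """One hidden tanh layer followed by a linear output unit."""
    w1, b1, w2, b2 = params
    h = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(w1, b1)]
    y = sum(w * hj for w, hj in zip(w2, h)) + b2
    return h, y

def loss_and_grads(params, data):
    """Mean squared error and its gradient for every weight, via the chain rule."""
    w1, b1, w2, b2 = params
    g_w1 = [[0.0] * len(row) for row in w1]
    g_b1 = [0.0] * len(b1)
    g_w2 = [0.0] * len(w2)
    g_b2 = 0.0
    total = 0.0
    n = len(data)
    for x, target in data:
        h, y = forward(params, x)
        err = y - target
        total += err * err / n
        d_y = 2.0 * err / n                        # dLoss/dy
        g_b2 += d_y
        for j, hj in enumerate(h):
            g_w2[j] += d_y * hj
            d_pre = d_y * w2[j] * (1.0 - hj * hj)  # back through tanh
            g_b1[j] += d_pre
            for i, xi in enumerate(x):
                g_w1[j][i] += d_pre * xi
    return total, (g_w1, g_b1, g_w2, g_b2)

def gradient_step(params, grads, lr):
    """Move every weight a small step against its gradient."""
    w1, b1, w2, b2 = params
    g_w1, g_b1, g_w2, g_b2 = grads
    new_w1 = [[w - lr * g for w, g in zip(row, grow)] for row, grow in zip(w1, g_w1)]
    new_b1 = [b - lr * g for b, g in zip(b1, g_b1)]
    new_w2 = [w - lr * g for w, g in zip(w2, g_w2)]
    return new_w1, new_b1, new_w2, b2 - lr * g_b2

def init_params(n_in, n_hidden, seed=0):
    rng = random.Random(seed)
    w1 = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_hidden)]
    w2 = [rng.uniform(-1, 1) for _ in range(n_hidden)]
    return w1, [0.0] * n_hidden, w2, 0.0

# XOR as a toy task (an assumption for this sketch).
data = [([0.0, 0.0], 0.0), ([0.0, 1.0], 1.0), ([1.0, 0.0], 1.0), ([1.0, 1.0], 0.0)]
params = init_params(2, 3)
initial_loss, _ = loss_and_grads(params, data)
for _ in range(500):
    _, grads = loss_and_grads(params, data)
    params = gradient_step(params, grads, lr=0.1)
final_loss, _ = loss_and_grads(params, data)
print(initial_loss, final_loss)
```

The point of contrast with the mutation approach: a single backward pass yields the gradient for all weights simultaneously, instead of one trial per weight.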

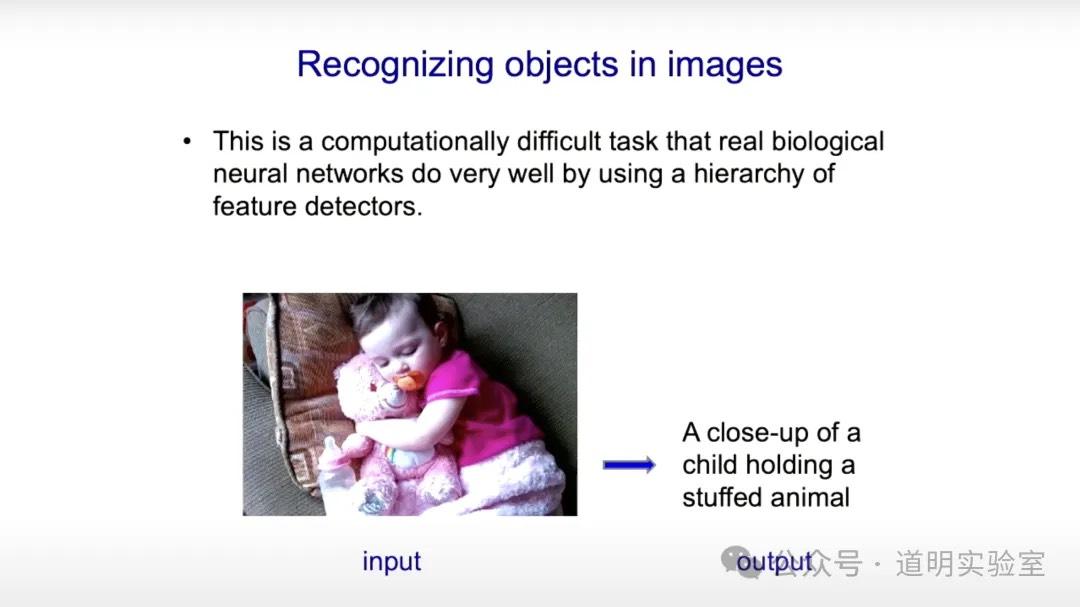

6. Recognizing Objects in Images

Currently, biological intelligence is still better at this because hierarchical feature recognition is perfectly suited for this task.

7. The Rise of Deep Neural Networks

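In 2012, AlexNet, a deep network trained with backpropagation and built by Hinton's students Alex Krizhevsky and Ilya Sutskever together with Hinton, achieved roughly a 16% error rate on the ImageNet image-classification benchmark, crushing the roughly 25% of the best competing systems. This led to deep neural networks replacing other algorithms in computer vision, and the breakthrough is a large part of why Hinton came to be called the "Father of AI."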

8. Debate over Language Understanding

Unlike computer vision, language understanding faced skepticism. Chomsky argued humans are born with innate language understanding. The Symbolic AI community argued feature-extraction algorithms could never "understand" language.

9. Symbolic vs. Psychological Definitions

In Symbolic AI, a word is its relationship to other words. In psychology, a word is a series of features; synonyms share similar features.

10. The 1985 Language Model

In 1985, Hinton introduced a small language model that tried to unify these two views. The core idea: knowledge is learned by predicting the next word, without needing pre-stored rules. Hinton presents this model as the ancestor of today's large language models such as GPT.

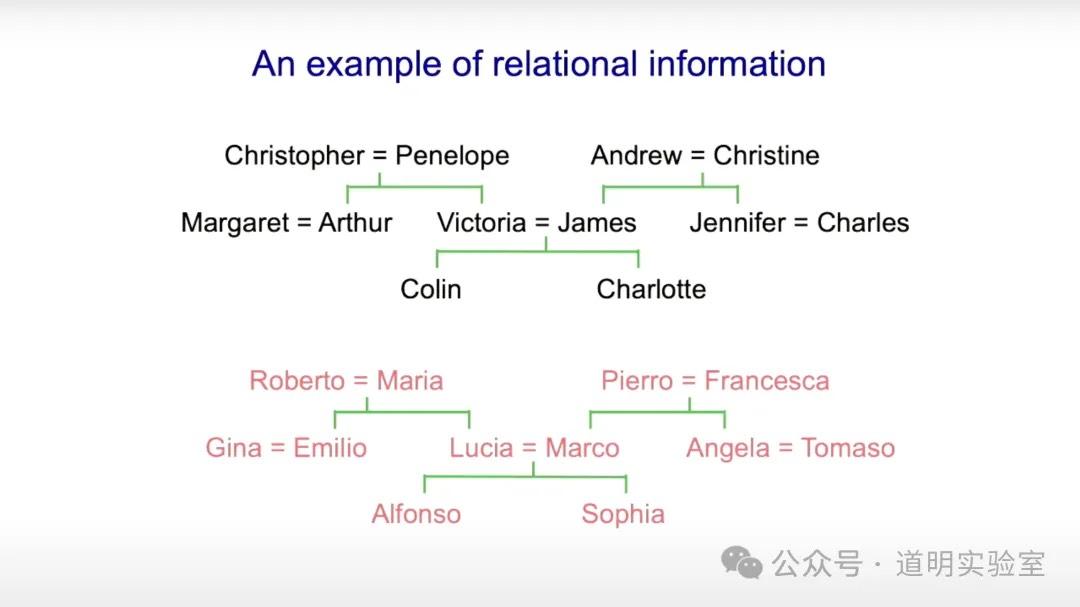

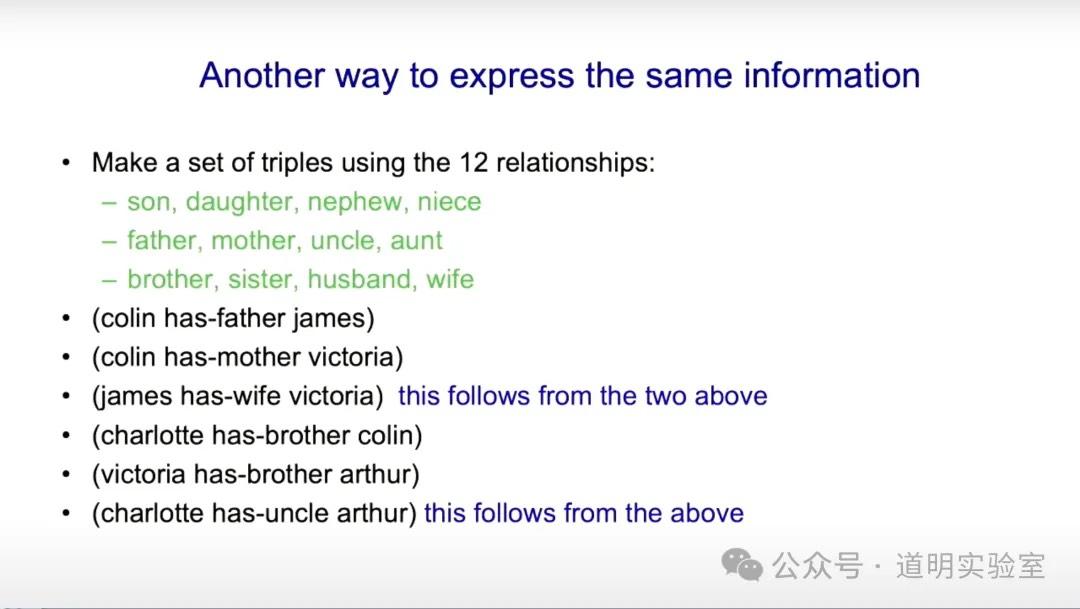

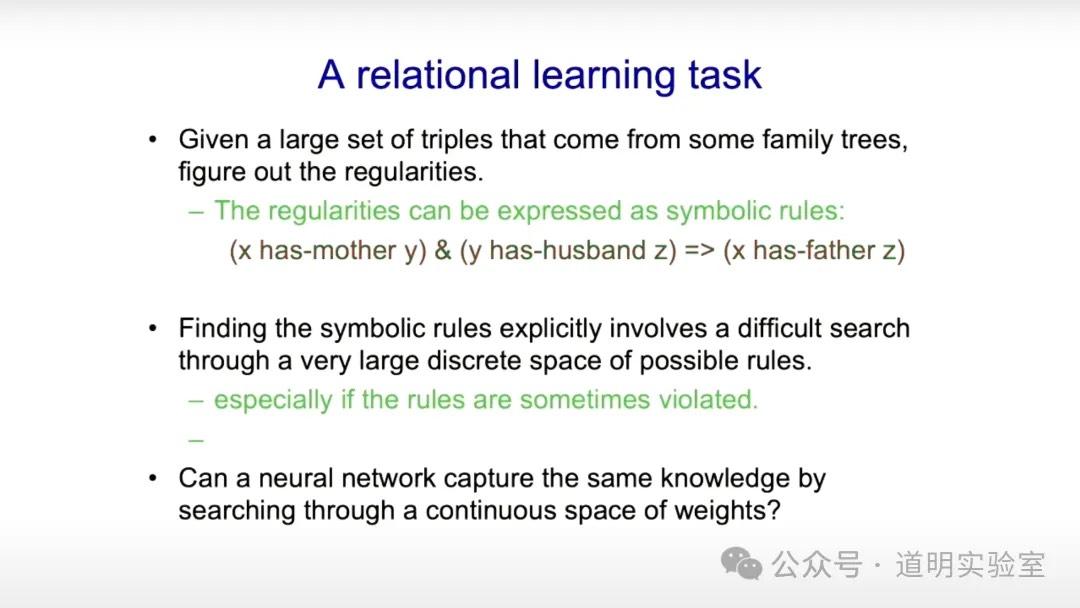

11. Family Tree Example: Rules vs. Neural Networks

Symbolic AI requires explicit rules (e.g., "Mother of X is Y" + "Husband of Y is Z" = "Father of X is Z"). This becomes impractical at scale.
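The rule quoted above can be sketched as a few lines of Python. This is only an illustration of the rule-based style: the triple format, the function name `infer_fathers`, and the example names are my own assumptions.

```python
def infer_fathers(facts):
    """Apply one rule: (x has-mother y) and (y has-husband z) => (x has-father z)."""
    mothers = {(x, y) for (x, rel, y) in facts if rel == "has-mother"}
    husbands = {y: z for (y, rel, z) in facts if rel == "has-husband"}
    derived = set()
    for x, y in mothers:
        if y in husbands:
            derived.add((x, "has-father", husbands[y]))
    return derived

# Illustrative facts stored as (person, relation, person) triples.
facts = {
    ("Colin", "has-mother", "Victoria"),
    ("Charlotte", "has-mother", "Victoria"),
    ("Victoria", "has-husband", "James"),
}
derived = infer_fathers(facts)
print(sorted(derived))
```

Every new kind of inference (aunts, nephews, in-laws) needs another hand-written rule like this one, which is where the approach stops scaling.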

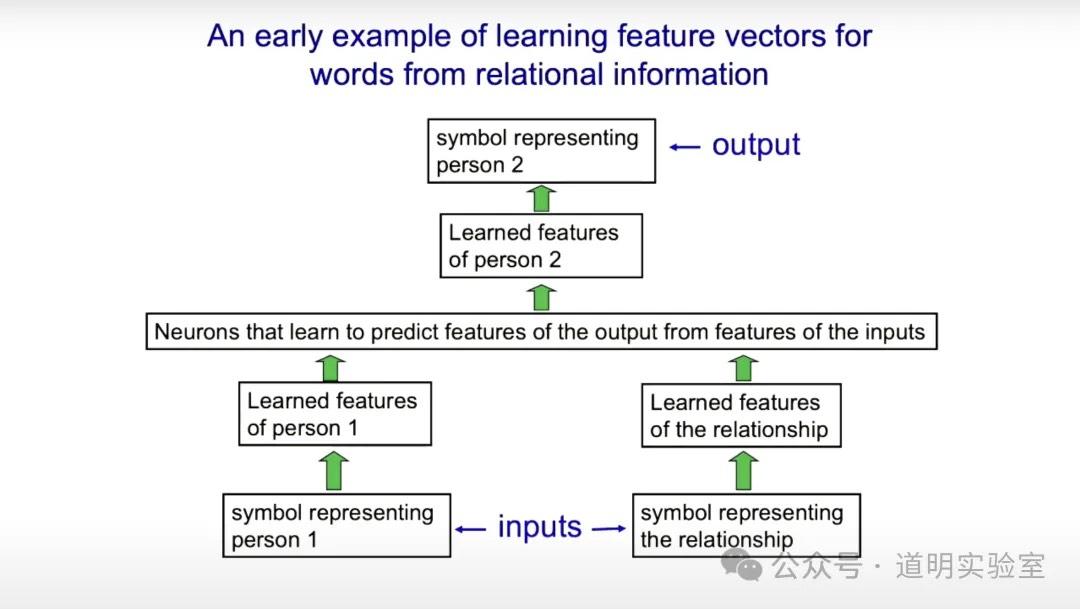

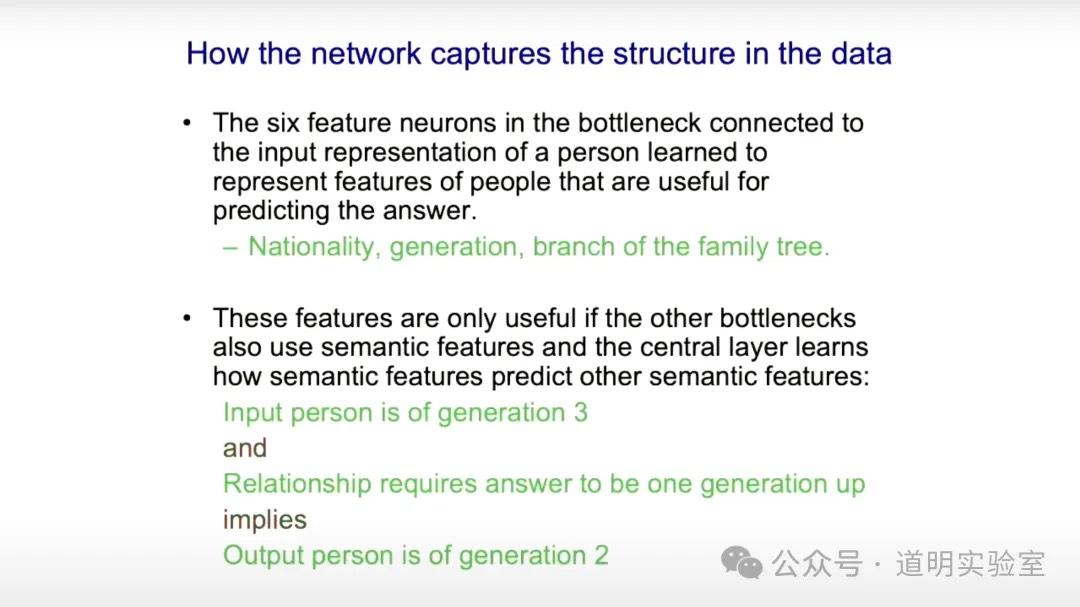

12. Vector Representation and Prediction

Neural networks represent people and relationships as vectors. By inputting symbols for a person and a relation, the network "predicts" the resulting person.
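Here is a rough sketch of what "person vector + relation vector in, predicted person out" might look like. It shows only the forward pass with random, untrained weights, so the predicted probabilities are meaningless until the vectors are trained. The vector size, the single mixing layer, and scoring candidates by dot product with their own vectors are all my assumptions, not the architecture from the 1985 model.

```python
import math
import random

PEOPLE = ["Colin", "Charlotte", "Victoria", "James"]
RELATIONS = ["has-mother", "has-father", "has-husband"]
DIM = 6  # size of each feature vector (an arbitrary choice)

rng = random.Random(0)

def random_vector(size):
    return [rng.uniform(-0.5, 0.5) for _ in range(size)]

# Each symbol gets a vector of features; training would adjust these.
person_vec = {p: random_vector(DIM) for p in PEOPLE}
relation_vec = {r: random_vector(DIM) for r in RELATIONS}
# A hidden layer lets person features and relation features interact.
mix = [random_vector(2 * DIM) for _ in range(DIM)]

def predict(person, relation):
    """Return a probability for each candidate answer person."""
    features = person_vec[person] + relation_vec[relation]
    hidden = [math.tanh(sum(w * f for w, f in zip(row, features))) for row in mix]
    # Score each candidate by how well the hidden vector matches its features.
    scores = [sum(h * v for h, v in zip(hidden, person_vec[p])) for p in PEOPLE]
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]  # numerically stable softmax
    total = sum(exps)
    return {p: e / total for p, e in zip(PEOPLE, exps)}

probs = predict("Colin", "has-father")
print(probs)
```

Training would use backpropagation to adjust `person_vec`, `relation_vec`, and `mix` until the correct person receives the highest probability. No explicit rule is ever written down.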

13. LLM Understanding

Many see LLMs as glorified auto-completers. Hinton disagrees: the ability to generate the "next word" means it has learned enough features and relationships to imply "understanding." It predicts because it has "learned," not because it stores text.

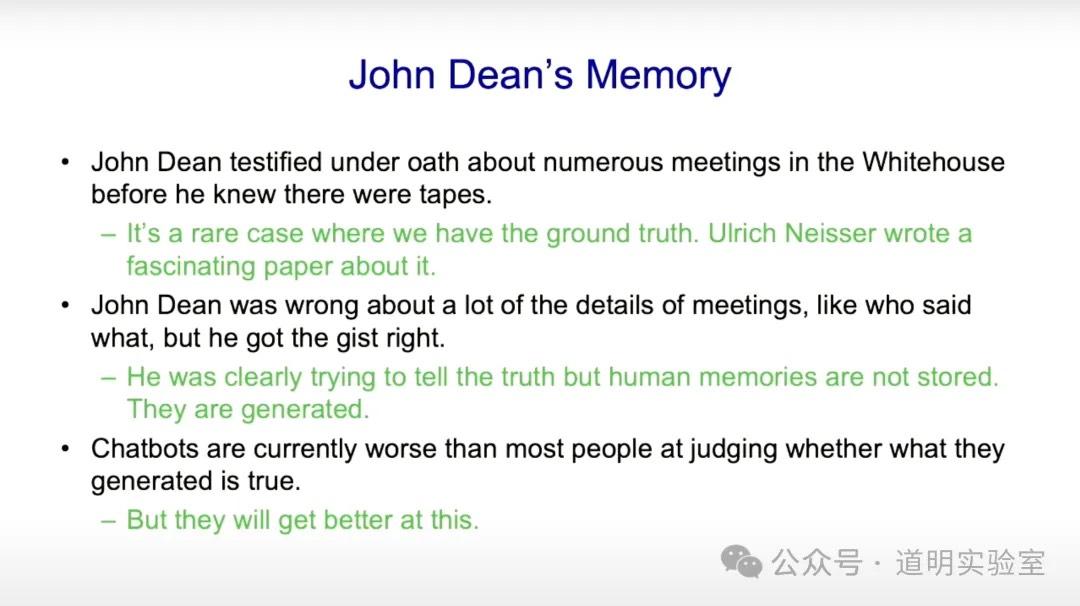

14. "Hallucination" vs. "Confabulation"

Hinton suggests that LLM errors be called "confabulation," a term from psychology for memories that are reconstructed and distorted yet sincerely believed. Human brains also store knowledge in connection weights rather than as literal records, so we are often confidently wrong about details. He uses John Dean's memory of the Watergate meetings as an example.

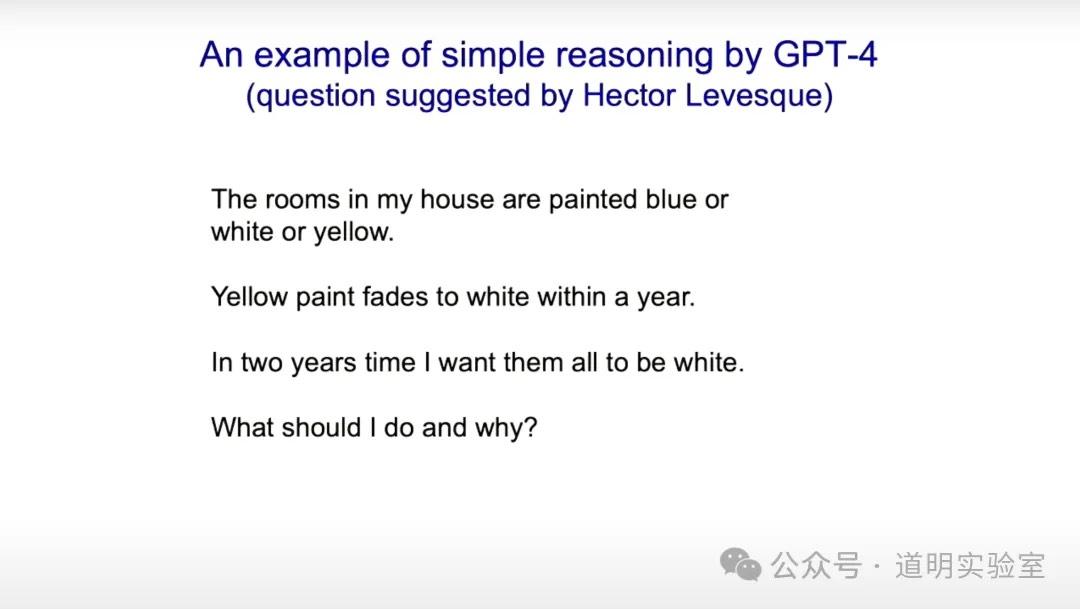

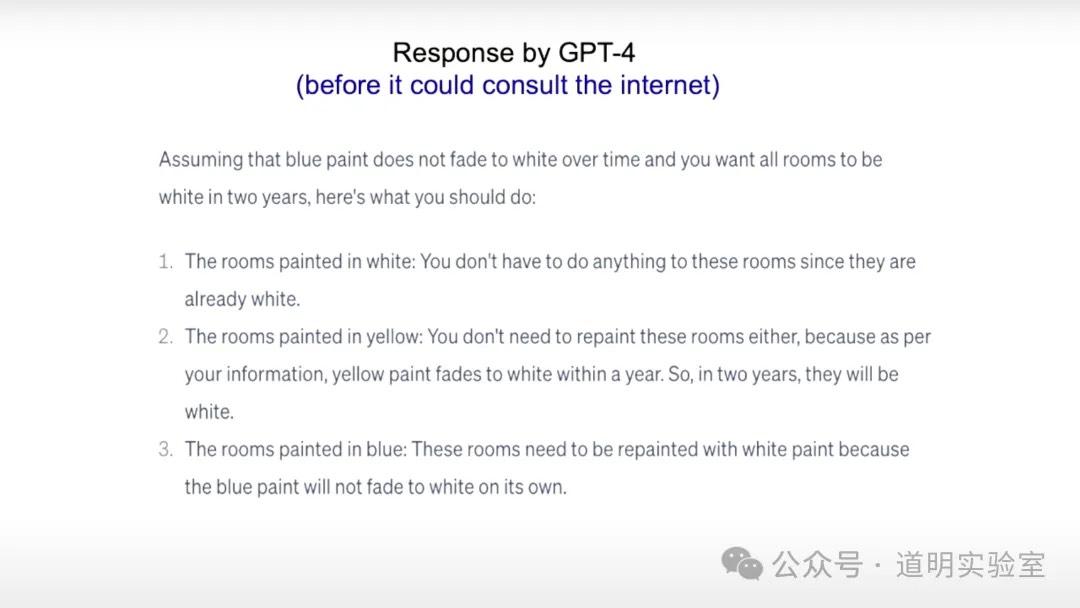

15. GPT-4 Q&A Example

A demonstration of GPT-4's reasoning.

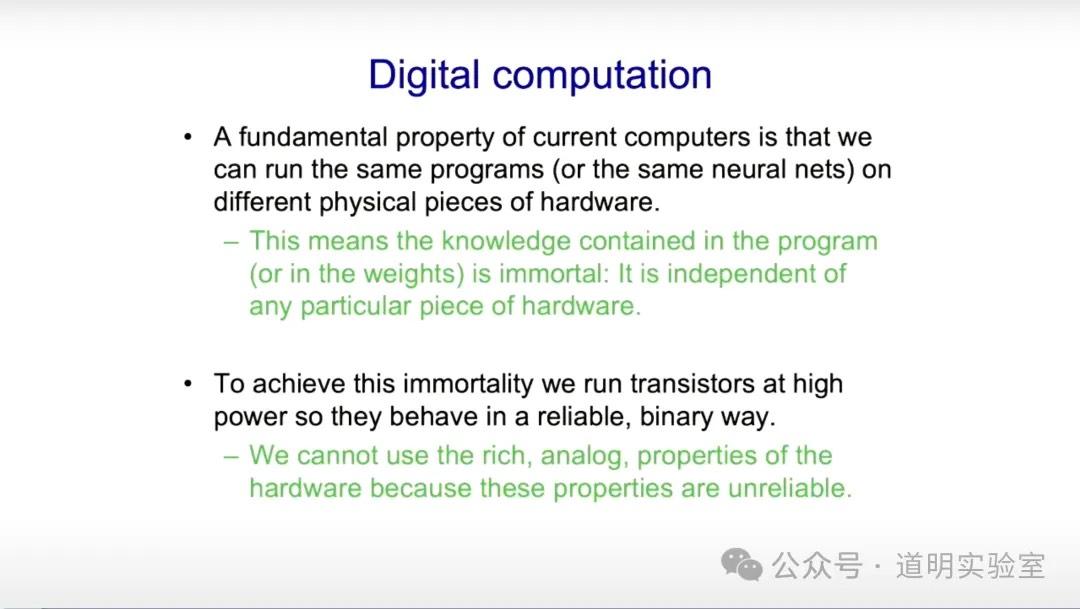

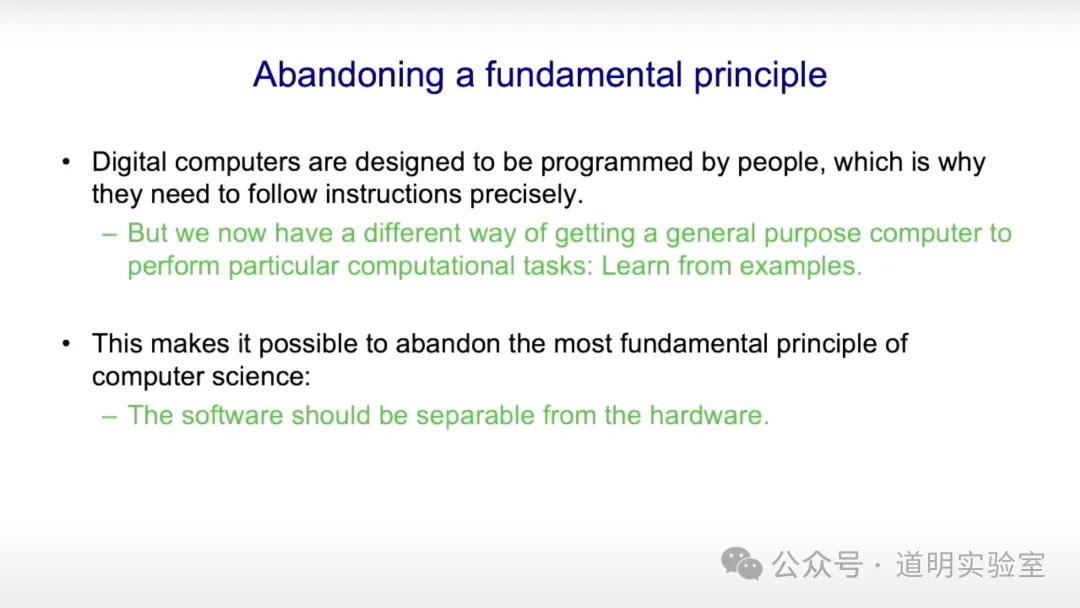

16. Digital vs. Mortal Computation

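- Immortal Computation: Software is decoupled from hardware, so the same program or the same weights can run on different machines. The knowledge in the weights doesn't vanish if a particular piece of hardware fails. Digital systems achieve this by being binary and reliable.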

- If we abandon this decoupling, software and hardware merge. A network that learns only needs to be approximately right ("fuzzy correctness"), so it can adapt to imprecise hardware, which challenges the principle of separation.

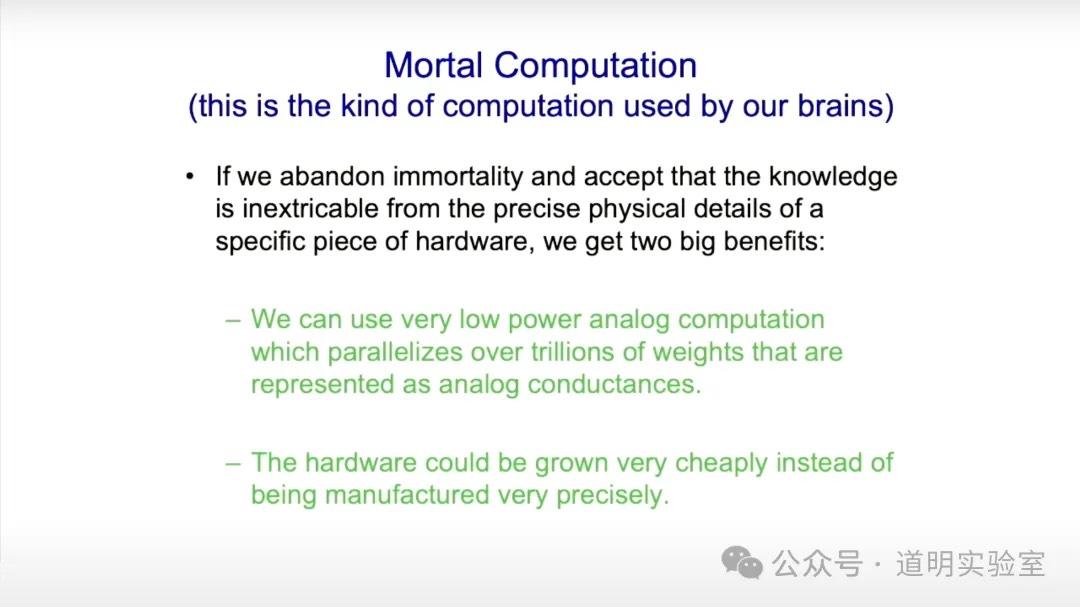

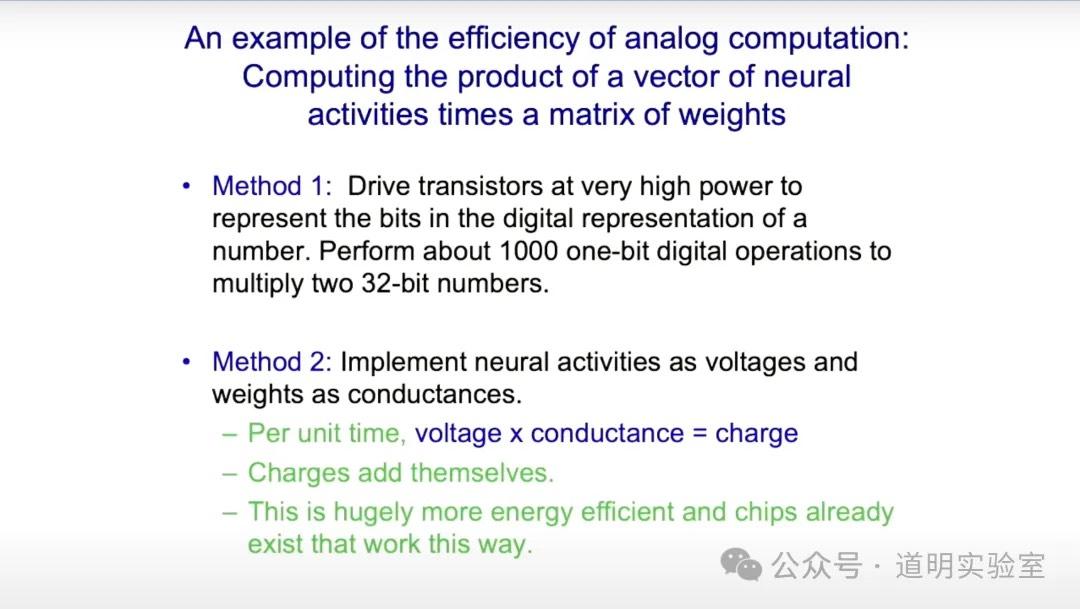

- Mortal Computation: We give up hardware precision for low-energy analog computing (like the brain). If hardware dies, knowledge dies, but the efficiency is massive.

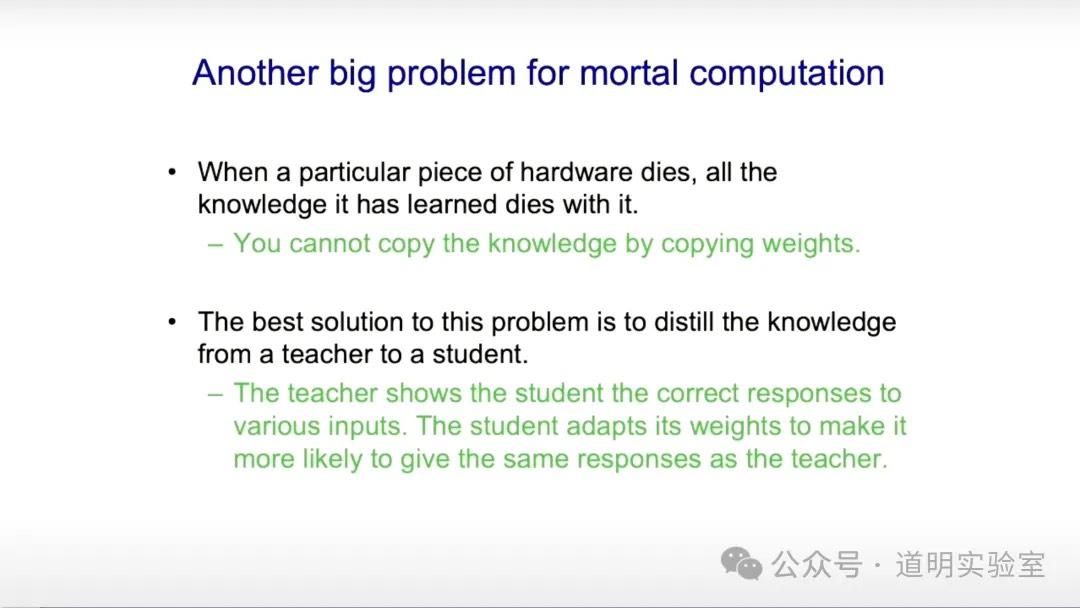

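- The fix for dying knowledge is "distillation" (teacher-student): the student learns to reproduce the teacher's outputs. Humans are poor at this (education is slow), but digital models can share knowledge far more efficiently, since identical copies can exchange weights or gradients directly.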
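A minimal sketch of the teacher-student idea, assuming the common formulation in which the student is trained to match the teacher's softened output distribution. The temperature value, the example logits, and the function names are illustrative assumptions.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn logits into probabilities; a higher temperature gives a softer distribution."""
    scaled = [z / temperature for z in logits]
    top = max(scaled)
    exps = [math.exp(s - top) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """Cross-entropy between the teacher's and the student's softened distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return -sum(pi * math.log(qi) for pi, qi in zip(p, q))

# Hypothetical logits over three classes.
teacher = [4.0, 1.0, 0.5]
good_student = [3.8, 1.1, 0.4]   # roughly agrees with the teacher
poor_student = [0.5, 4.0, 1.0]   # prefers a different class

print(distillation_loss(teacher, good_student))
print(distillation_loss(teacher, poor_student))
```

The student only ever sees the teacher's outputs, never its weights, which is why this kind of transfer is slow compared with copying weights between identical digital models.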

17. Conclusion: 50% Probability in 20 Years

Biological intelligence evolves under energy constraints. Once energy becomes cheap, digital intelligence will surpass biological intelligence due to superior knowledge sharing. Hinton estimates a 50% chance of this happening within 20 years.

My Thoughts

I used to think models like GPT-4 didn't "understand" language in the human sense, but merely had strong output capabilities. To Hinton, this distinction is vital: prediction implies understanding. Since leaving Google, Hinton has warned about AI risks, perhaps because he truly "believes in the light"—that the intelligence is real.

The debate between carbon-based and silicon-based life is ultimately about energy dependency. Under physical and energy constraints, Mixture of Experts (MoE) has become the choice, as seen in Gemini 1.5.

Hinton's "surpassing" is a scientific concept: a new species that completely crushes us in "IQ." This differs from the engineering concept of AGI. He believes proving the "IQ" of these models is paramount.