Cohere released its new closed-source model, Command R+, on April 4th.

Of course, any model daring to remain closed-source today must essentially match or exceed GPT-4, and ideally, its API invocation costs should be lower than GPT-4's.

Cohere has largely achieved this, at least according to its own performance metrics.

The model has three main highlights.

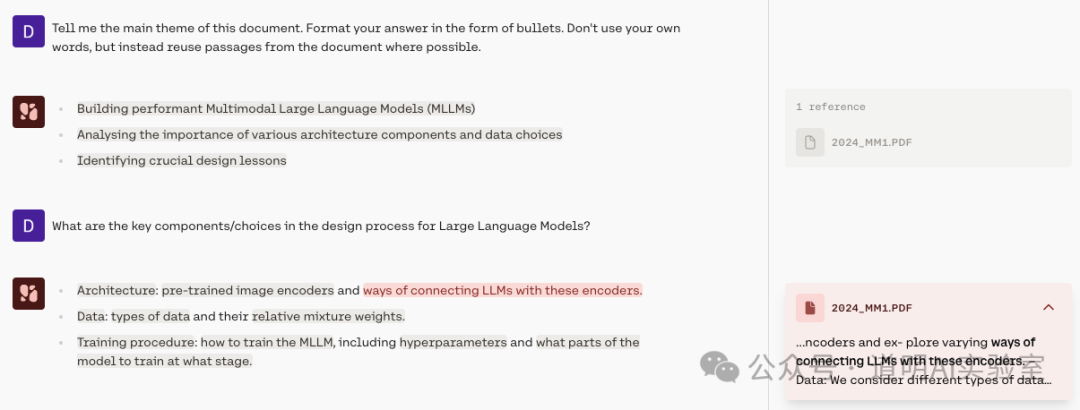

First is an advanced version of RAG (Retrieval-Augmented Generation) with grounded citations, designed to reduce "hallucinations." I tried it out, as shown below: in the dialogue on the left, text with a light gray background marks passages grounded in the retrieved document, and clicking one reveals the source on the right. Honestly, this is no longer a "new" feature for modern RAG. From another perspective, though, AI models have so far made very little headway in document processing, so building such features into the model should accelerate adoption in many applications.

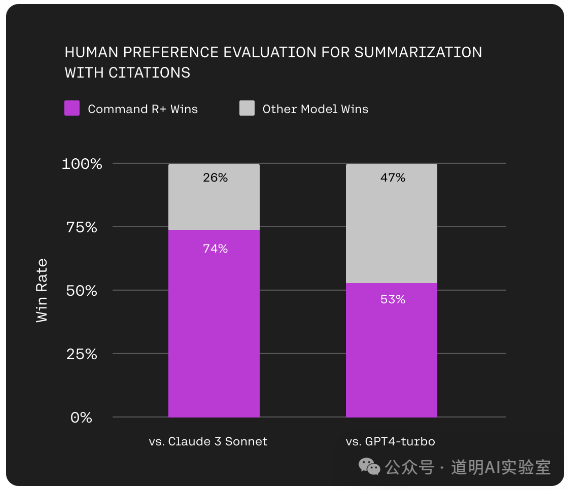
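To make the idea of grounded citations concrete, here is a minimal sketch of how an answer plus a list of citation spans could be rendered so that each cited passage points back to its source. The `Citation` class, its fields, and the `annotate` helper are hypothetical names chosen for illustration; this is not Cohere's actual response schema or SDK.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Citation:
    """Hypothetical citation span: character offsets into the answer text."""
    start: int                 # inclusive offset of the cited passage
    end: int                   # exclusive offset of the cited passage
    doc_ids: tuple[str, ...]   # identifiers of the supporting documents


def annotate(text: str, citations: list[Citation]) -> str:
    """Wrap each cited span as [span](doc ids); assumes spans do not overlap."""
    parts = []
    cursor = 0
    for c in sorted(citations, key=lambda c: c.start):
        parts.append(text[cursor:c.start])               # uncited text before the span
        parts.append(f"[{text[c.start:c.end]}]({', '.join(c.doc_ids)})")
        cursor = c.end
    parts.append(text[cursor:])                          # trailing uncited text
    return "".join(parts)


if __name__ == "__main__":
    answer = "Command R+ was released on April 4th."
    start = answer.index("April 4th")
    spans = [Citation(start, start + len("April 4th"), ("doc_0",))]
    print(annotate(answer, spans))
```

A UI like the one in the screenshot would presumably do the same mapping, but highlight the span and open the source document on click instead of printing a marker.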

In human blind tests, Command R+ slightly outperformed GPT-4 Turbo. Unfortunately, there were no comparisons with the Claude 3 Opus model, which is currently considered the best production-ready model available.

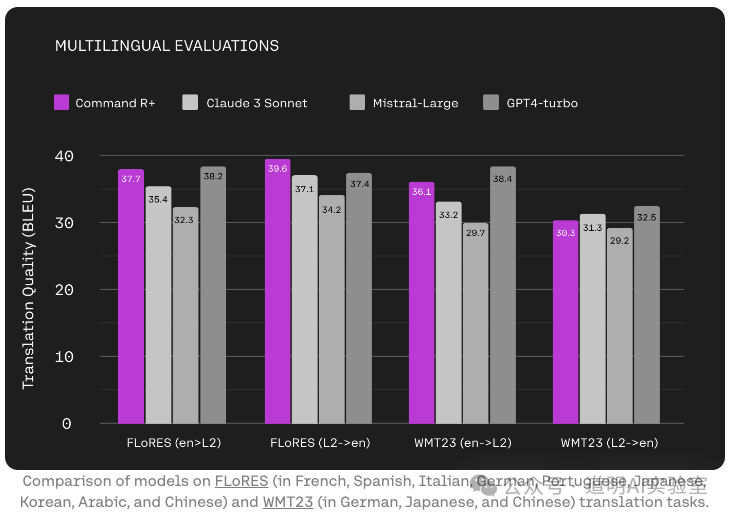

The second major feature of Command R+ is its multilingual capability. In fact, around the time Sora was released, Cohere released an open-source model called Aya, which achieved excellent multilingual performance through the collective efforts of nearly 3,000 independent researchers worldwide. Given this foundation, strong multilingual capabilities in their closed-source model were to be expected.

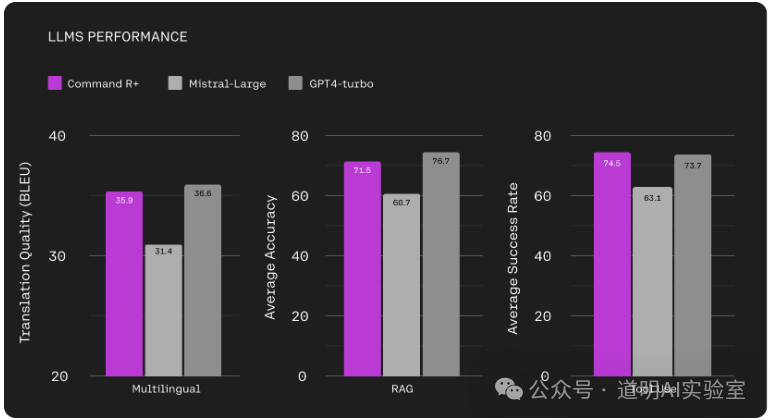

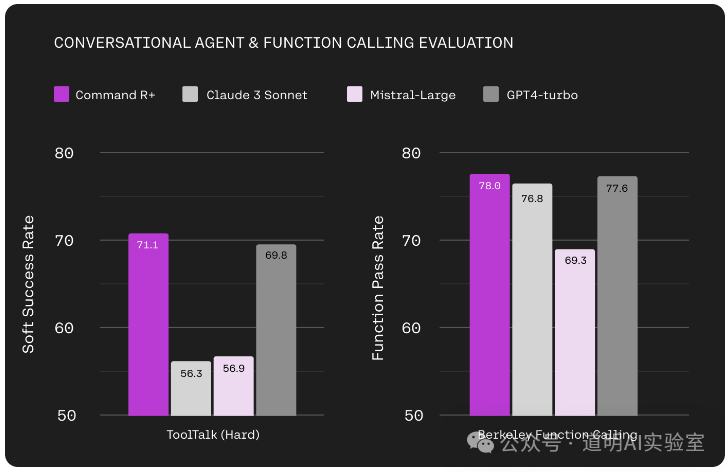

The third feature is complex tool calling suited to business scenarios. Automated workflows and "function calling" have many practical applications and are vital for putting AI into production. That Command R+ outperforms GPT-4 Turbo here is quite impressive.

This is a model built entirely for the enterprise. Naturally, Cohere partnered with Microsoft Azure from day one, allowing Azure users to invoke the model directly.

In this AI wave, the biggest winner is clearly NVIDIA, followed by the cloud service providers. Microsoft seems to have quickly adjusted its strategy after the OpenAI management shake-up last year: partnering with Mistral, absorbing Inflection's team, and now immediately partnering with Cohere. The MaaS (Model-as-a-Service) business model is starting to look clear.

Cohere's Command R+ model actually raises two questions:

- Who is the model for? Enterprises—the answer is clear.

- Once capability is achieved, what matters most? The cost of use.

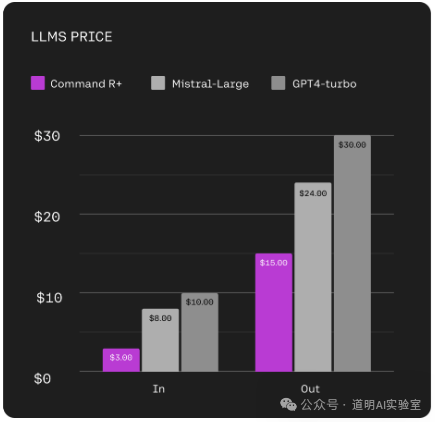

Beyond raw capability, therefore, the price users pay and the actual cost of inference have become critical factors in model competition.

First, looking at the price per million tokens: Command R+'s input price is only 30% of GPT-4 Turbo's, and its output price is 50%. (Since enterprise applications often have long input contexts and comparatively short outputs, the blended cost of a typical request is actually less than 50% of GPT-4 Turbo's.)
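The blended figure depends on the mix of input and output tokens, which a short calculation makes explicit. The sketch below assumes list prices of $3/$15 per million tokens for Command R+ and $10/$30 for GPT-4 Turbo (consistent with the 30% and 50% ratios above) and $15/$75 for Claude 3 Opus; treat these numbers, and the names `Pricing`, `PRICES`, and `cost_ratio`, as illustrative assumptions rather than current pricing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Assumed list prices in USD per million tokens."""
    input_per_million: float
    output_per_million: float

    def cost(self, input_tokens: int, output_tokens: int) -> float:
        """Cost in USD of one request with the given token counts."""
        total = (input_tokens * self.input_per_million
                 + output_tokens * self.output_per_million)
        return total / 1_000_000


# Assumed prices at the time of writing; check each provider's pricing page.
PRICES = {
    "command-r-plus": Pricing(3.0, 15.0),
    "gpt-4-turbo": Pricing(10.0, 30.0),
    "claude-3-opus": Pricing(15.0, 75.0),
}


def cost_ratio(model: str, baseline: str,
               input_tokens: int, output_tokens: int) -> float:
    """Cost of `model` relative to `baseline` for the same request."""
    return (PRICES[model].cost(input_tokens, output_tokens)
            / PRICES[baseline].cost(input_tokens, output_tokens))


if __name__ == "__main__":
    # Token mixes ranging from balanced to input-heavy (hypothetical workloads).
    for inp, out in [(1_000, 1_000), (4_000, 1_000), (10_000, 500)]:
        ratio = cost_ratio("command-r-plus", "gpt-4-turbo", inp, out)
        print(f"{inp:>6} in / {out:>5} out: {ratio:.1%} of GPT-4 Turbo's cost")
```

Under these assumed prices, a request with equal input and output tokens costs 45% of the GPT-4 Turbo price, and the ratio falls toward 30% as the input share grows (about 33% for 10,000 input and 500 output tokens).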

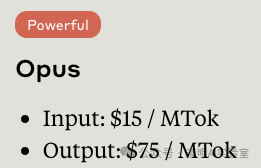

Currently, the most expensive model is no longer GPT-4 Turbo, but Claude 3 Opus, which costs $15 for input and $75 for output per million tokens.

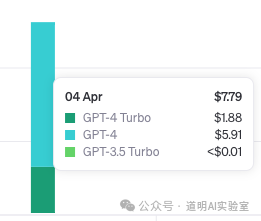

Second, it seems that models are still a very lucrative business. Yesterday, I tried OpenAI's newly open-sourced Transformer Debugger, and running just a few examples cost me nearly $8.

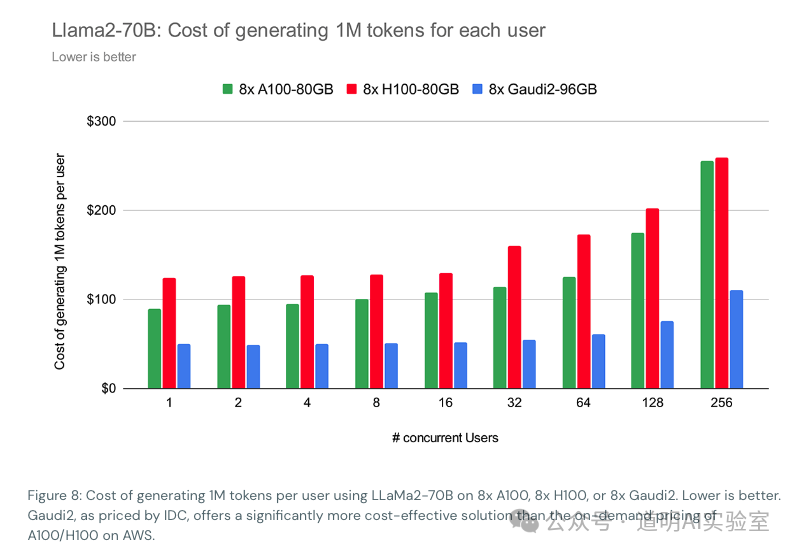

However, according to calculations by Databricks, even when using Llama 2-70B for inference, the cost per million tokens exceeds $100 (based on cloud server rental prices).

I believe large model companies have significantly lower inference costs than this, but in all likelihood, their token fees do not cover the actual inference costs.

The battle over inference costs is just beginning, and this competition involves both model companies and, even more so, chip companies.