We didn't get GPT-5. Even with the earlier leaks, though, the spring update to GPT-4 delivered a powerful voice assistant. To be fair, the model's performance is still stunning:

Voice and real-time video interaction merge seamlessly. The Rabbit r1, which many are still waiting for, has been essentially "replaced" on the spot. Model capability remains the most critical foundation for a product.

This is a voice model that comes across as almost fully emotional and responds with near real-time latency. Both in understanding the user's emotions and in expressing emotion of its own, it is proving itself to be "human."

It understands not only the emotion in a voice but also the emotion in facial expressions.

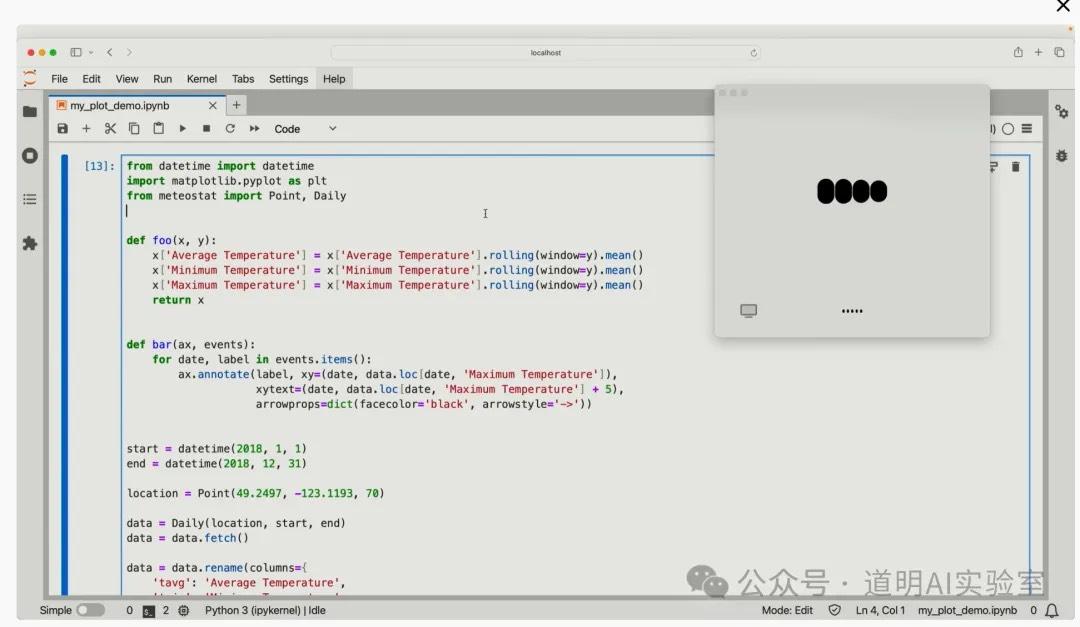

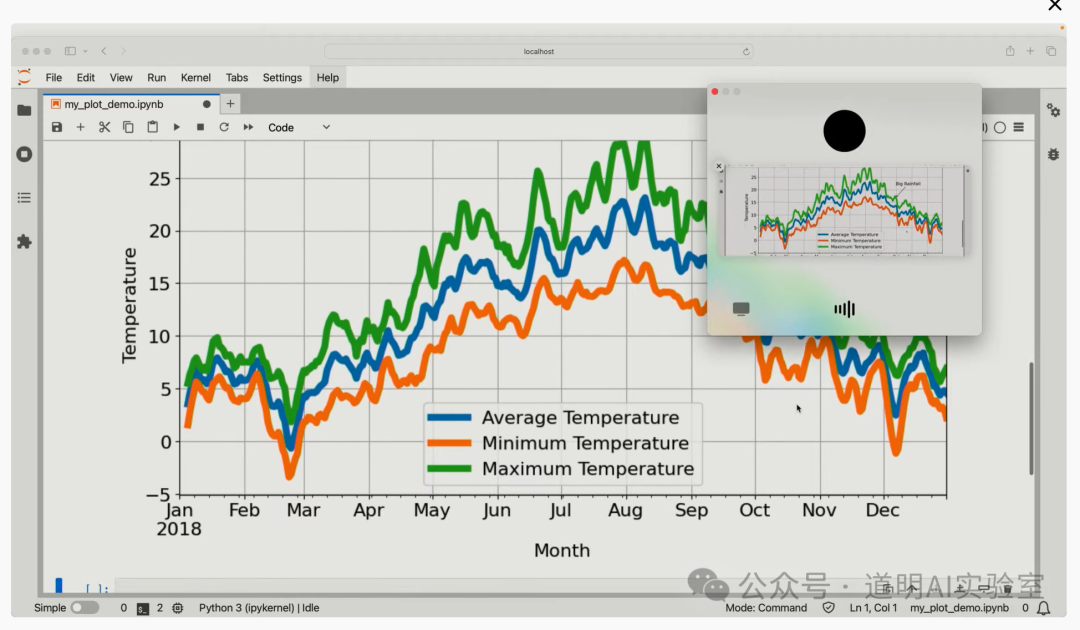

- The era of the true all-around assistant has begun. It runs not just on mobile but also on the desktop, where it can discuss code issues and data analysis in real time.

- Real-time translation: we no longer need dedicated translation devices.

The official website provided even more impressive examples:

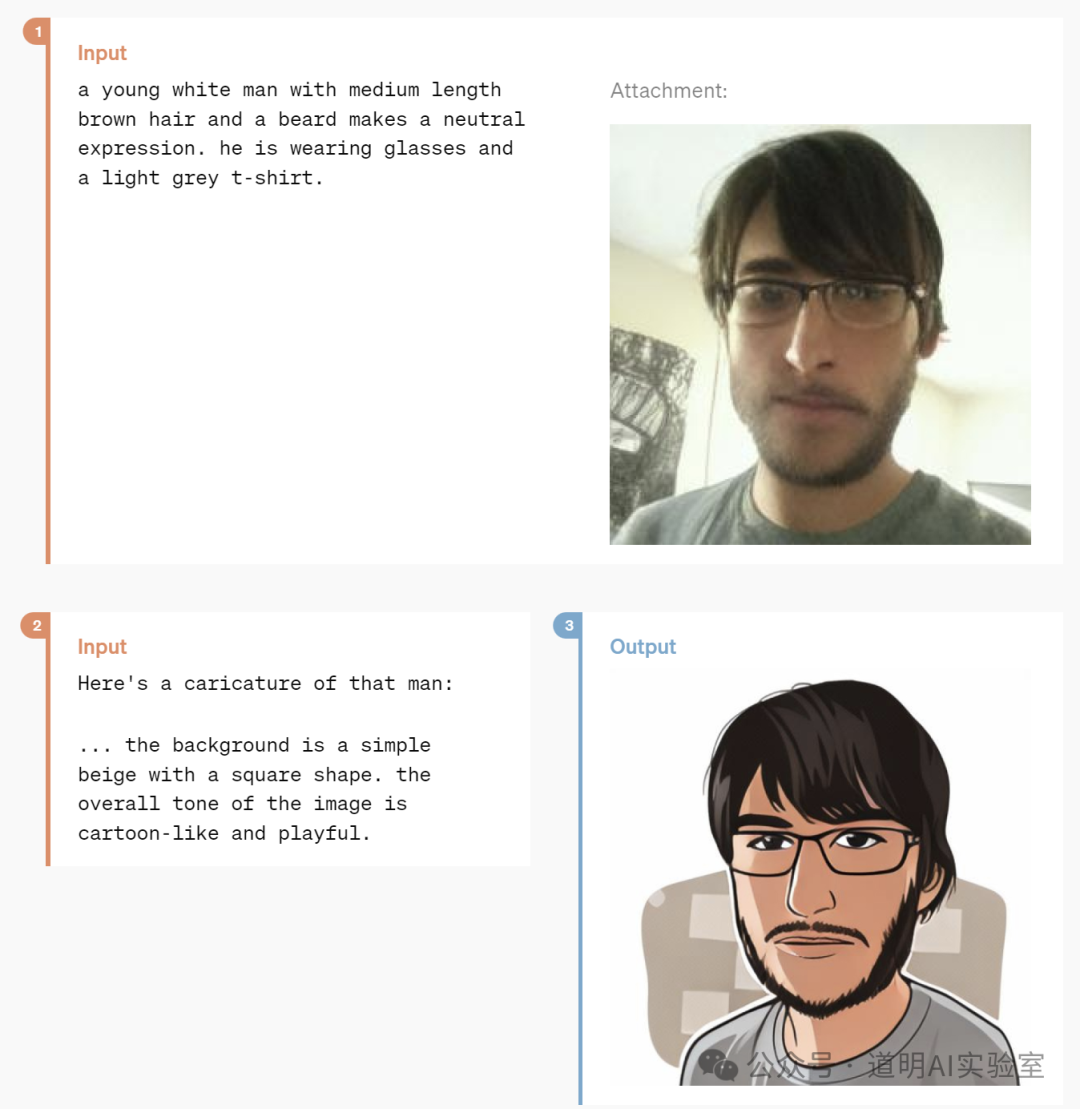

Generating cartoon avatars

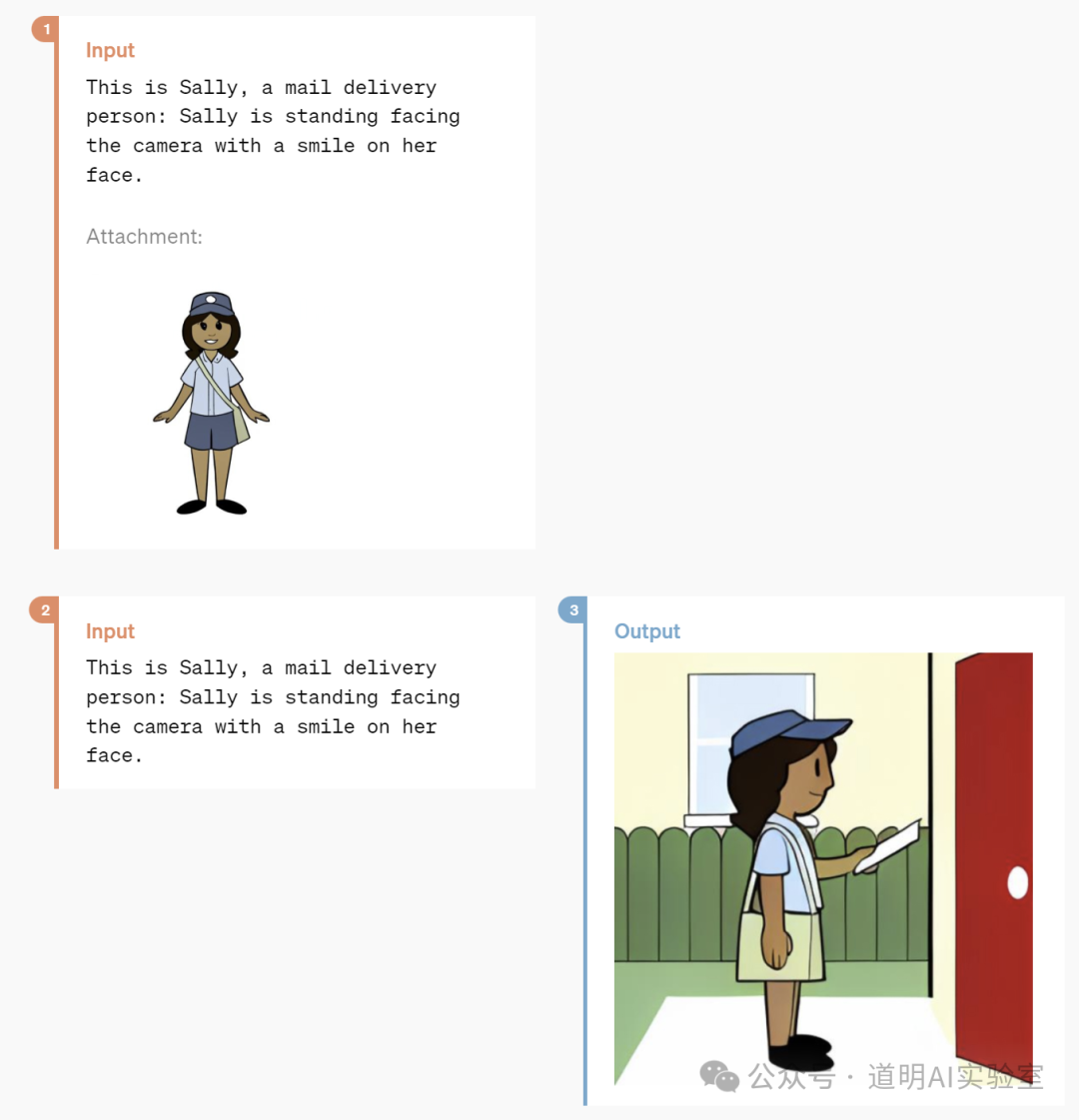

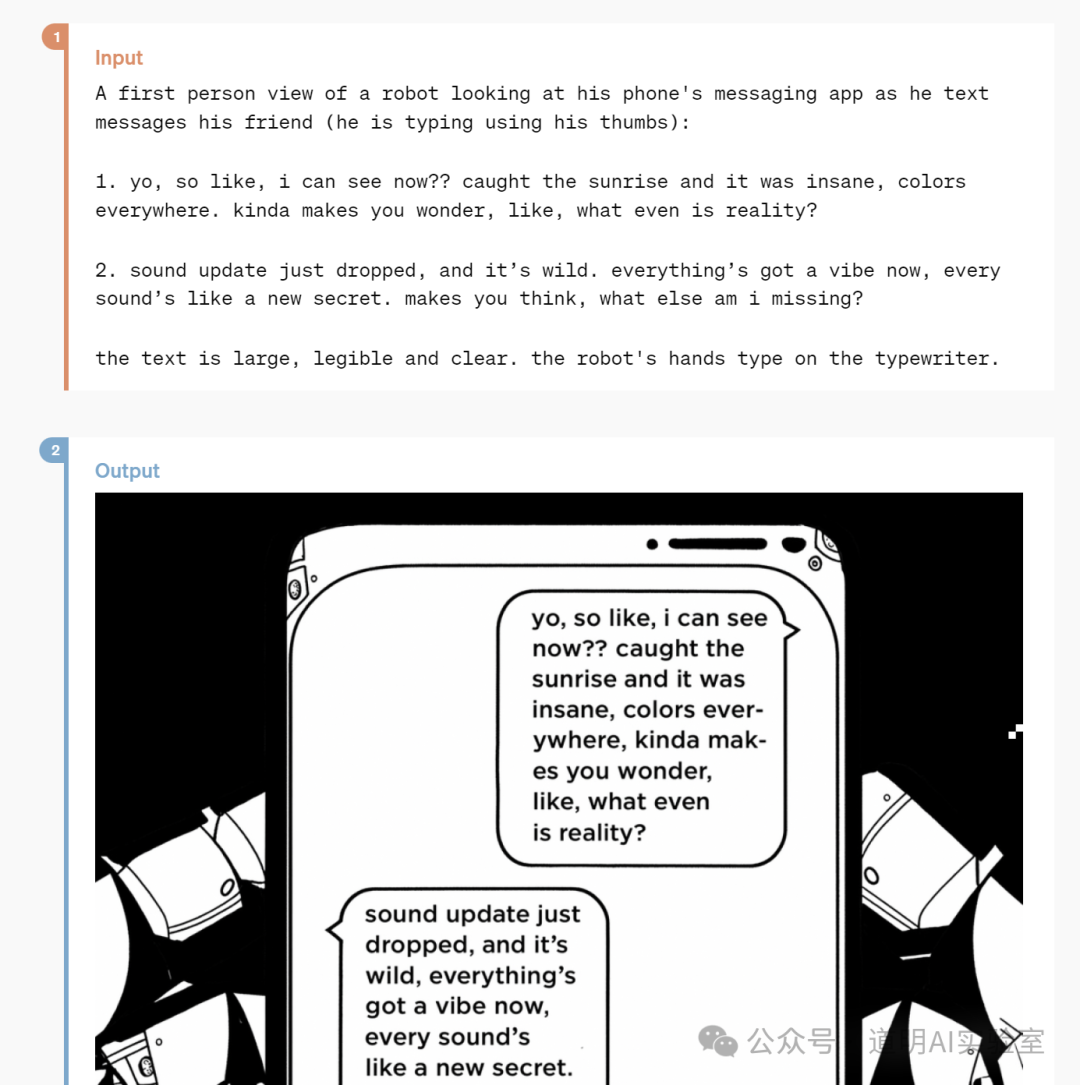

Comics with character consistency

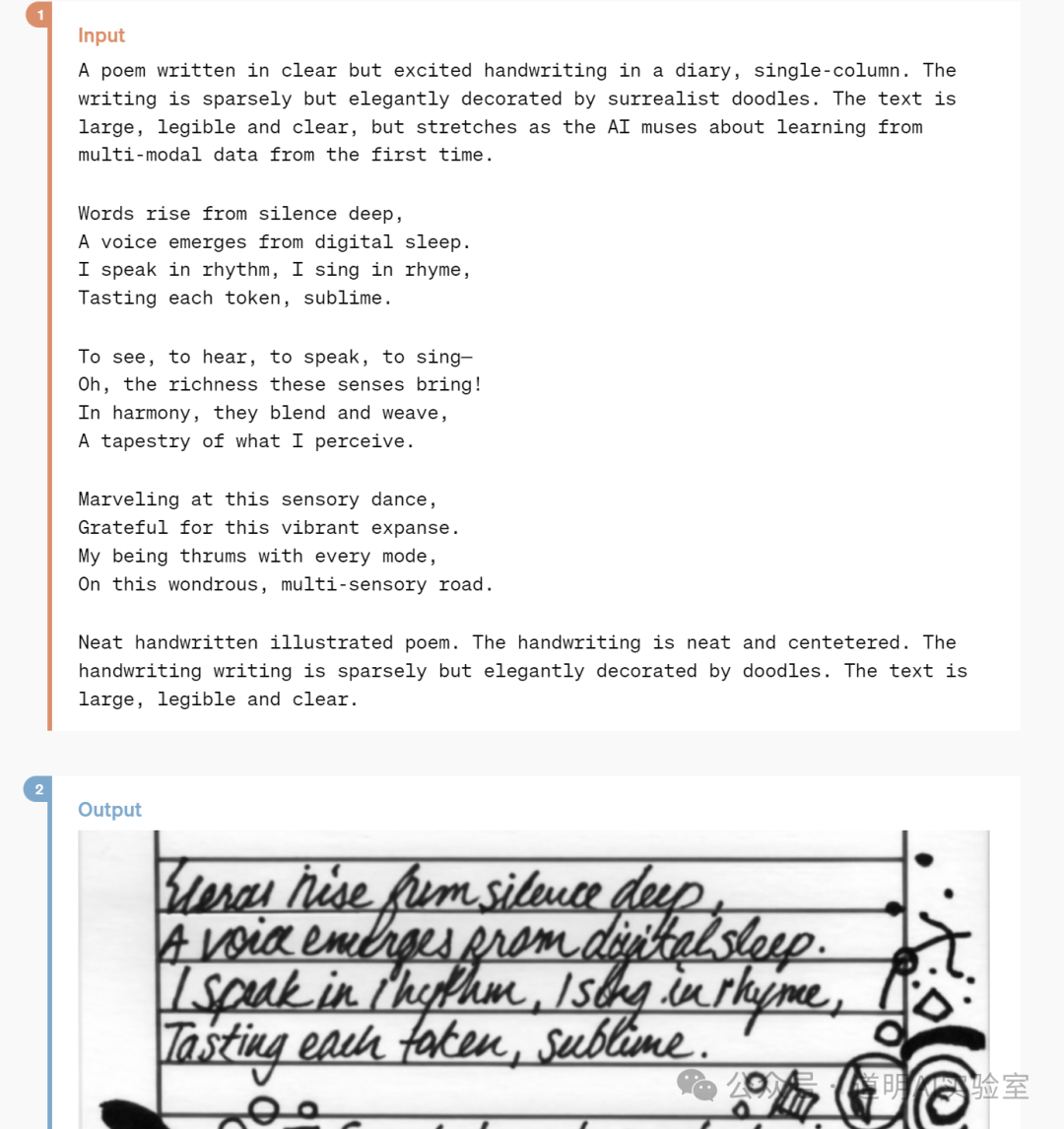

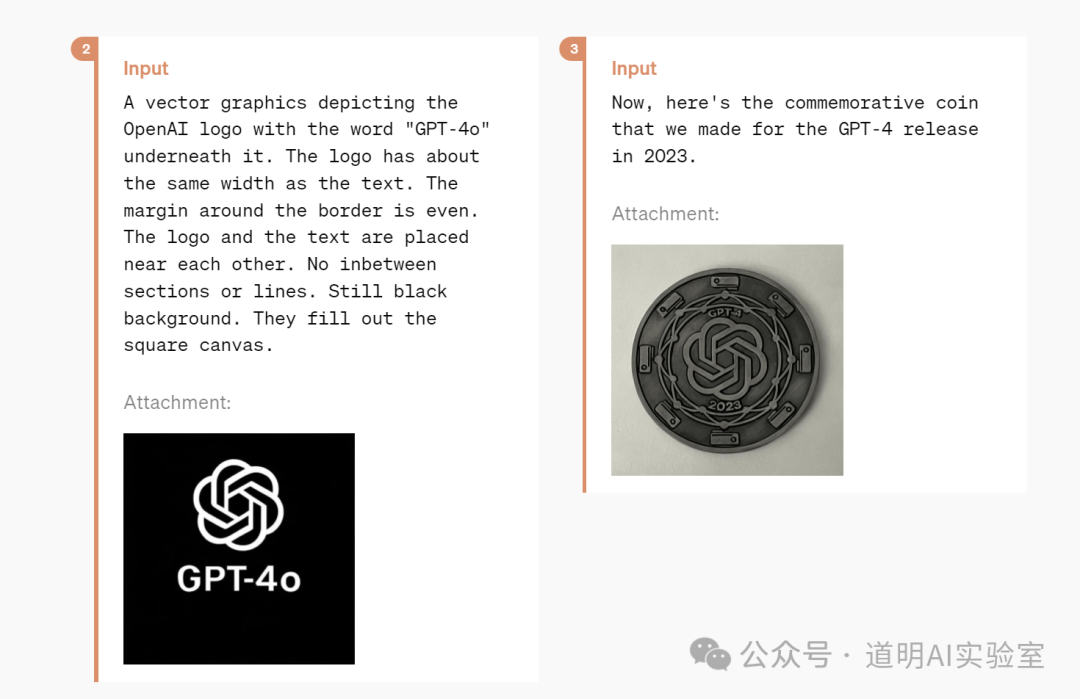

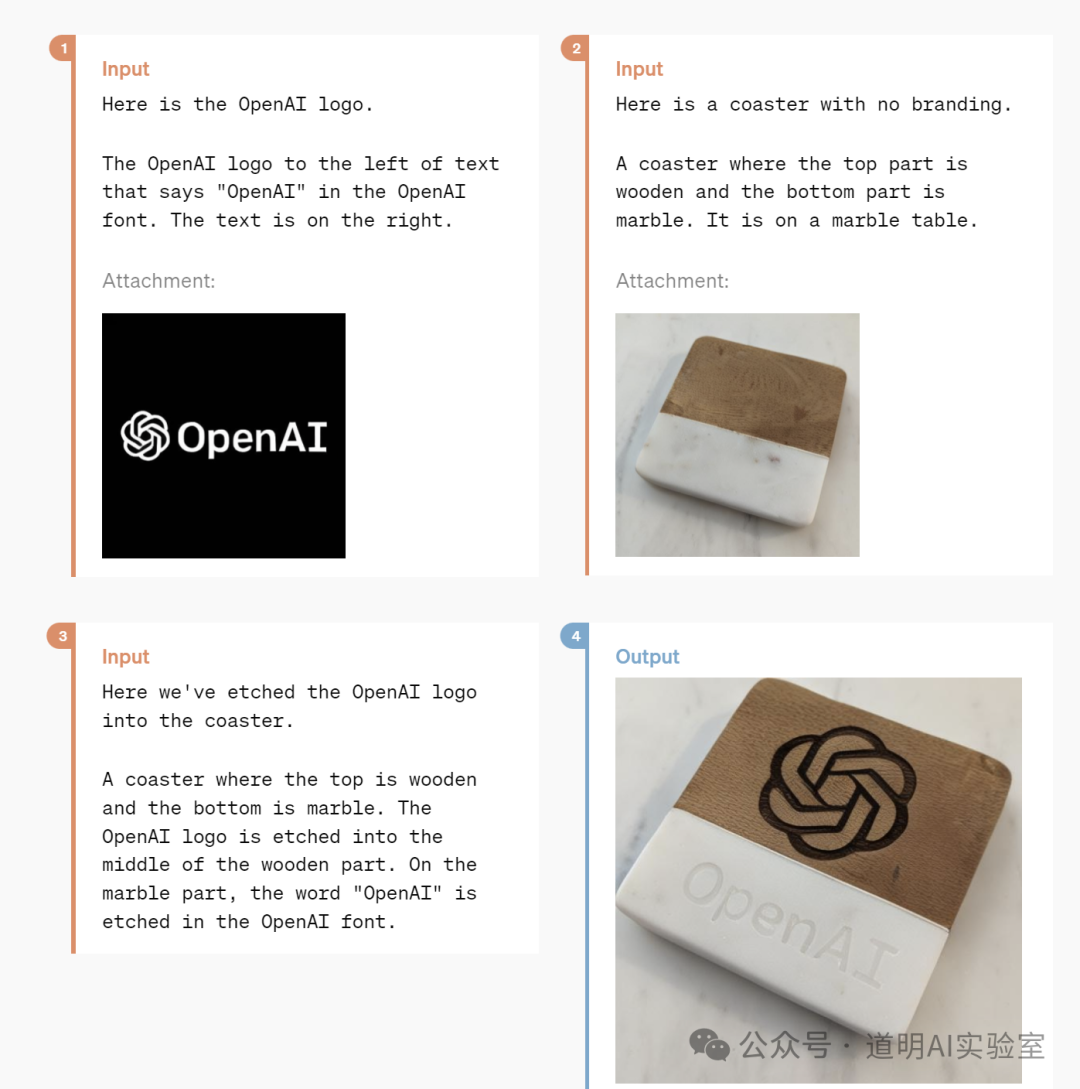

Generating images with accurate text (compared to DALL-E 3)

Various logo designs

3D generation (generating six images first, followed by 3D reconstruction)

UI design?

Meeting minutes summarization

Understanding and summarizing video

The 25-minute live demo showcased these features, but the implications behind them matter more:

Multimodal capabilities have reached a new level. Near real-time interaction opens up many possibilities, but it also further squeezes the room others have to build third-party applications on top of GPT.

The model is starting to "understand" emotion. This is a profound development, as the possession of emotion was once considered a key distinction between AI and humans.

GPT-4o is available for free. While there will certainly be limitations to this free access, it is undoubtedly a massive boost for ChatGPT's traffic.

If the rumors regarding a partnership between Apple and OpenAI for the iPhone are true, then we can look forward to the first true AI phone—the iPhone 16.

To be clear, this is still GPT-4, not GPT-5, so the underlying model strength remains within the GPT-4 generation. However, it integrates almost every capability imaginable, opens up more room for scenario-specific applications, and, critically, remains accurate.

There was no mention of Sora in this release, but it looks as though Sora's image and video capabilities have been integrated into the new model in a more flexible way. Replacing Hollywood may take more time, but as a new social tool it will surely produce all kinds of viral content in the near future.

I have always believed that everyone needs an AI assistant. GPT-4o represents a new peak, or rather, the "full-power" version.

Finally, a little easter egg: the previously mysterious "gpt2" was actually a pre-release test version of GPT-4o.