Ever since the leak a few days ago that Apple would call its AI "Apple Intelligence," this rather uninspired pun has been stuck in my head.

Sure enough, at WWDC 2024 early this morning, Apple's long-awaited AI solution finally arrived.

Simply put, all the features I expected are there, and Apple seems to handle them even better than I anticipated.

For the first time, Apple has fully leveraged its greatest advantage: a unified chip architecture across iPhone, iPad, and Mac, alongside increasingly unified operating systems.

Of course, there is also something everyone might have overlooked before 2023: the "pioneer status" of Apple's users. As every product is rebuilt around AI, only the most pioneering users are likely to "play" with the various possibilities and then keep making them "go viral" across highly developed social networks.

So, as I expected, when Apple presents such an "AI," the first thing we see is the integration of iPhone, iPad, and Mac. We see "Apple Intelligence" being pushed to the iPhone 15 Pro and later models, as well as the entire range of Macs and iPads with M1 through M4 chips. Many might question whether this is meant to drive a wave of upgrades, but that's just Apple, isn't it?

Two hours of presentation covered a lot of ground. Compiling a routine chronological summary has never been my forte, and I believe such summaries will be everywhere today. So, I’ll stick to my habit of picking what I consider the key highlights.

First is the discussion regarding on-device AI and Private Cloud Compute.

As expected, many AI functions run on-device, meaning they are processed locally, primarily for data security and secondarily for efficiency. Think about the controversy Microsoft caused recently with its pre-announced Recall feature (which continually captures screen content for AI processing). While Apple faced its own share of skepticism recently over the "deleted photos unexpectedly restored" incident, users of the closed macOS (or iOS/iPadOS) generally perceive Apple's systems as more secure than Windows, which is often viewed as an "open system" with inconsistent app quality. In consumer businesses, technology is only one part; user perception matters more.

At the same time, I have long maintained that Apple Silicon possesses powerful local AI processing capabilities. While absolute computing power is crucial for running models, the overall optimization of software and hardware is even more important. Even with Microsoft and Qualcomm working closely together, there is still a significant gap between them and the level Apple has reached through years of continuous in-house iteration.

Naturally, when the system determines that local computing power cannot meet the requirements of a model call, it turns to Private Cloud Compute. In plain English, it's like using ChatGPT: you access the cloud, and data is uploaded. However, Apple adds the "Private" part: the data stays private, the cloud model doesn't store it, and the system on your phone or laptop decides which data to send in order to "protect the user."
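To make this hand-off concrete, here is a minimal Python sketch of how such routing could look. It is only an illustration under my own assumptions, not Apple's implementation: the names `Request`, `route`, and `ON_DEVICE_BUDGET`, along with the cost estimate and the `needed_keys` field, are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical budget: the largest request (in arbitrary "compute units")
# that the local chip is assumed to handle. Not a real Apple figure.
ON_DEVICE_BUDGET = 100


@dataclass
class Request:
    task: str
    estimated_cost: int                          # assumed cost estimate for this model call
    context: dict = field(default_factory=dict)  # personal data available on the device
    needed_keys: tuple = ()                      # the fields this task actually requires


def route(request: Request) -> tuple[str, dict]:
    """Return (destination, payload) for a request."""
    if request.estimated_cost <= ON_DEVICE_BUDGET:
        # Local compute is enough: nothing leaves the device.
        return "on_device", request.context
    # Otherwise fall back to the cloud, sending only the fields the task needs.
    payload = {k: v for k, v in request.context.items() if k in request.needed_keys}
    return "private_cloud", payload
```

The point of the sketch is the order of decisions: the device decides first whether it can handle the call, and only when it cannot does it trim the personal context down to what the task needs before anything is uploaded.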

To summarize this section: local processing for models is what everyone wants. Based solely on the demonstration, Apple's solution appears to be the most complete, integrating its own hardware and apps. My only question: I believe I heard that these features will roll out gradually over the coming year, which would mean they might only be fully realized with iOS 19. (This actually aligns with what I expected from offline discussions.)

As for data security, I believe Apple is very serious and working hard, but whether users actually feel safe often remains a matter of subjective perception.

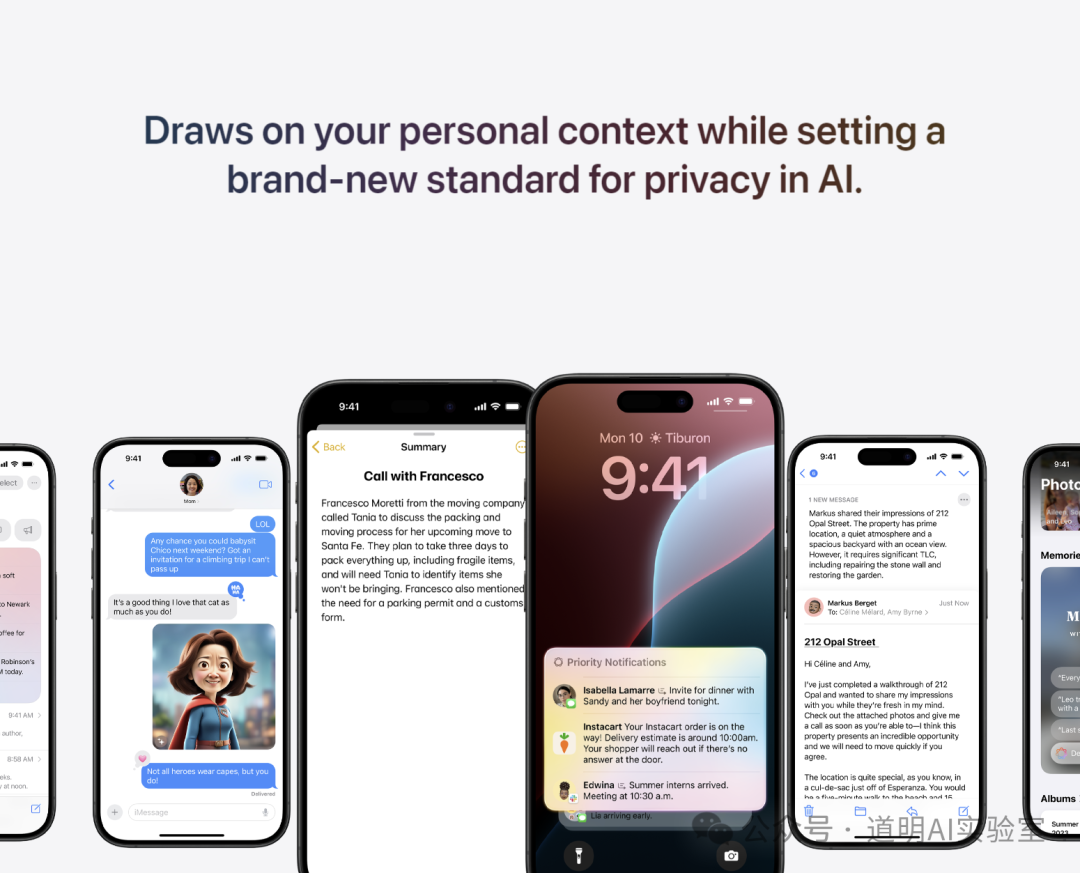

Secondly, while the term "Agent" wasn't specifically emphasized, the products presented based on "Personal Context" are essentially Agents, and they are cross-device.

As AI enters our phones, tablets, and laptops, integrating into our daily lives and work, the foundation of a "fluid experience" is for the AI to "understand us." This is Personal Context: accessing local photo libraries to generate cartoon avatars of contacts in real-time chats (I suspect this feature might have limitations to avoid issues); optimized reminders; replies to emails and messages; automatic form-filling of personal info; and handling files on your phone that were saved on your laptop a few days ago, and so on.

There can be many such features, but they only get better when they are built on an understanding of the user. Yet this raises a controversial question: Are you willing to let a model "formally" take over your data?

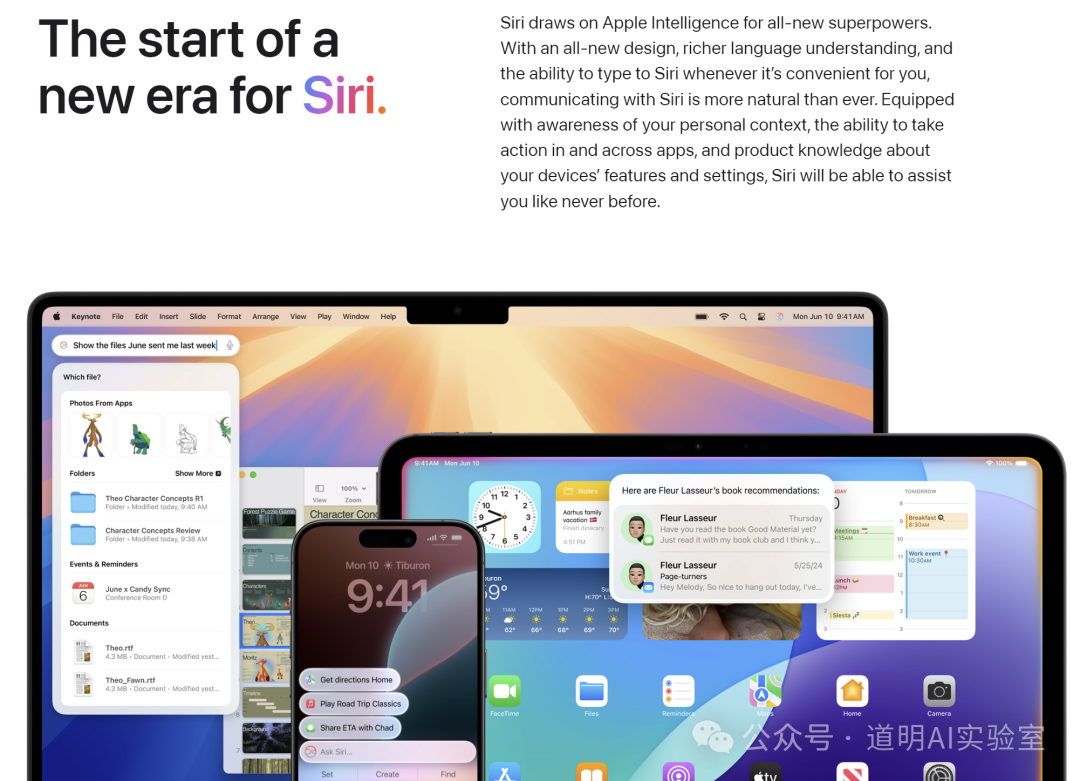

Thirdly, the highly anticipated Siri and ChatGPT integration.

A Siri based on a Large Language Model is finally here: it can handle complex conversations, can be summoned across different screens at any time, supports text input, and seamlessly integrates ChatGPT.

Consequently, Siri is no longer just a voice assistant; it is now a more convenient interaction interface between humans and Agents.

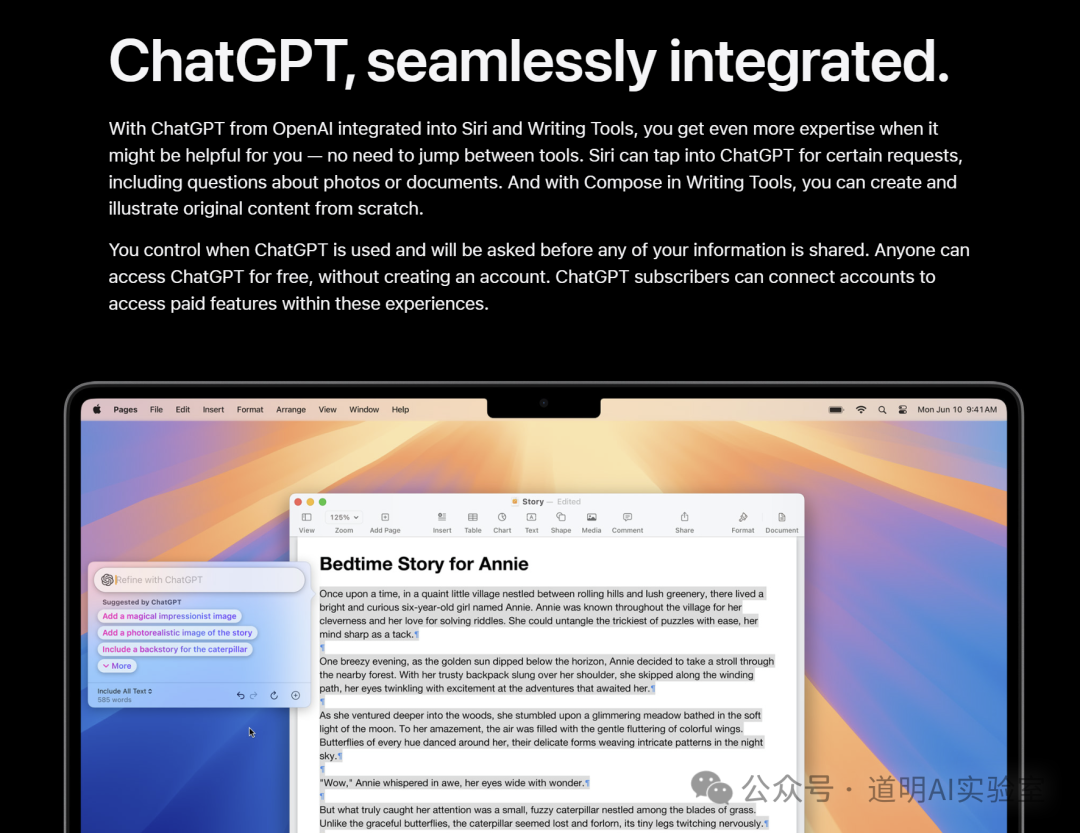

Additionally, the long-confirmed partnership between Apple and OpenAI has been officially announced: ChatGPT is integrated into the system, and users can link their paid ChatGPT accounts. Thinking about it, the biggest beneficiaries might be OpenAI and Azure cloud services, along with Nvidia, which supplies the computing power.

Fourthly, is that it? Yes. If we were to take one step further, we probably wouldn't need these devices anymore. But that won't happen for a few years.

I believe more features will continue to roll out; after all, the AI capabilities for Keynote and Numbers haven't been introduced yet, and the demand for auto-generated presentations and smart spreadsheet processing certainly won't be left unaddressed. However, if we really think through the features in the image above, we would likely agree that this is a suitable boundary for "human-machine cooperation." Theoretically, more powerful and automated models would only reduce the time we spend using devices.

However, this reasoning might not hold in practice: we still need to watch movies, play games, socialize, shop, take photos...

Unless a completely new hardware form factor emerges.

Overall Impressions:

Regarding the report card Apple submitted: the functions met expectations, the fluidity slightly exceeded expectations, and the implementation of cross-platform features was quite aggressive. Indeed, time is running out for them.

It is clear that no one other than Apple can provide such a complete and fluid solution. Microsoft can't, Google can't, and Samsung can't. The reasons have been stated many times: the "all-in-one" synergy of chip + OS + applications, now with the added factor of a higher proportion of "pioneer users."

I never worry that Apple's solutions will fail to meet their own expectations; I only worry that the "hidden entry barrier" of AI might block a large number of consumer users. If I had to pick who would perform best at bringing AI to consumers, I would choose Apple without hesitation. However, I am not entirely confident about how quickly market penetration will occur, for complex underlying reasons I prefer not to discuss publicly.

After some time, people will likely realize that "Apple Intelligence" is a very important milestone. When the world's largest consumer electronics company seriously presents such a complete solution, the trend becomes certain. The only question, as mentioned before, is how fast the penetration will be. We'll have to let the market and the data speak.

We are finally going to hand over our data completely. I realized this twenty years ago in my first job, and my professional habits leave me with little psychological burden about sharing "personal context" data, but I must admit I am not representative. I suspect that those who do feel that burden are the majority.

In that sense, the battle between AI and human nature has only just begun.