Regarding the question in the title, my answer is: though it is difficult, I believe so!

In terms of content, this should be a follow-up to the previous article. ChatGPT "quietly" launched its search feature, and the transformation has officially begun.

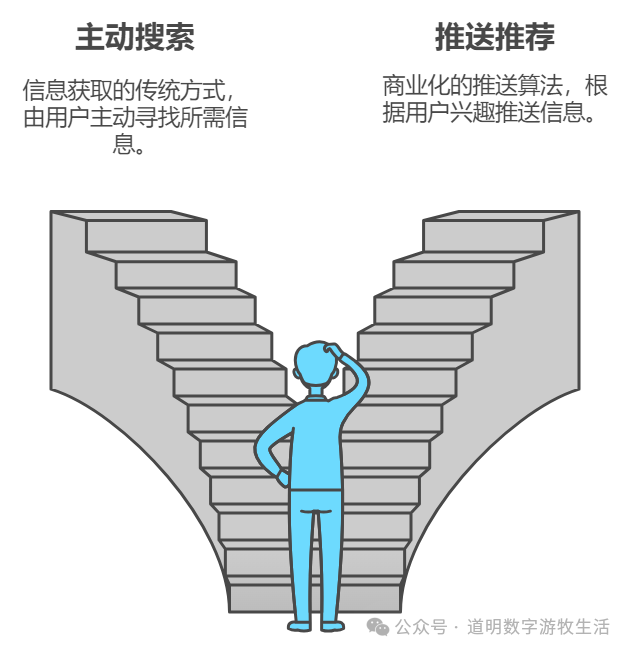

In terms of structure, the search service discussed in the previous article is something the user actively requests. Today's discussion is about the other side: content pushed to the user. The former was the original intent of the Internet; the latter is the foundation of today's commercial empires.

This part is not easy to write. I do not accept the creed now held by many internet researchers, that "users surrender their data ownership to obtain services provided by internet companies," nor am I radical enough to believe that "tech giants all deserve to die."

Lacking a sharp point of view is always a cardinal sin in writing.

However, I still believe that the current situation, or rather the state of things before ChatGPT emerged, is only a brief, unstable phase, because it was never the original intent of the Internet. More and more people are working to return data to the users themselves, and Apple and Google have, at least nominally, expressed the same sentiment.

AI has given us such an opportunity.

Twenty years ago, when I had the honor of participating in the construction of the first version of what now seems like an enormous project, the Internet was flat. Back then, everyone involved saw the value of data, but being too young, we couldn't understand that this had the potential to become a "Utopia." Naturally, we couldn't imagine the massive commercial opportunities behind it.

Being young and ignorant made me miss the fifteen years of BAT (Baidu, Alibaba, Tencent), and even fifteen years of financial industry training didn't correct this stubborn prejudice.

Even now, I believe that you cannot simply make money by repackaging user data and selling it back to users (via advertisers), but recommendation algorithms believe they can, and "tech giants" believe they can.

Recommendation algorithms originated from a story about "Big Data" (though the term didn't exist then) that has been told for over twenty years: Beer and Diapers.

I have told this story many times as well. As long as you have the chance to "see" that much data, reaching the conclusion in the story is effortless. The question is: who does the data belong to?

However, we have become accustomed to a kind of "virus": when we repeatedly search for keywords like "AI" and "ChatGPT," the search engine's homepage recommends AI-related content, and short video apps constantly push "course-selling" videos; when we browse content about new phones, search engines, short videos, shopping apps, and a series of applications surround us with "phone ads" or "shopping links"...

The above is, of course, a well-known fact that people have become numb to; I have no intention of repeating or criticizing it. I am not that cynical, and I firmly believe that change has arrived.

In recommendation algorithms, each of us is a bundle of labels, which are matched in the cloud against the labels attached to content or advertisements. The algorithm then pushes relevant content to us based on advertiser "budget" and label similarity.

The most valuable labels require only simple and straightforward processing: we frequently read football news, obviously because we like football...

We might only read football news on a portal site through a browser, yet almost every app then knows about this "behavior." We have signed a pile of agreements we are not even aware of, which legalize this distribution of our data.

In recent years, Apple introduced some restrictions on data usage. Now, social giants represented by Meta have begun to tout how AI helps them improve advertiser ROI. However, simple statistics and association rules are not the same as the AI we understand.

To some extent, users do receive a large amount of free information services, and recommendation algorithms do help users filter out much of the content they aren't interested in.

The lowest-cost method under this model is batch processing in the cloud: generating and adjusting labels in batches, matching labels in batches, and pushing in batches. The core goal of the algorithm is to be more "precise" at a lower computational cost. The foundation of "a thousand people, a thousand faces" is a single centralized set of algorithms processing everyone's data.

Users have no chance to take back their data because the entire computing network has no chance to be "decentralized."
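The label-matching loop described above can be sketched in a few lines. This is a minimal illustration, not any platform's actual system: the `Ad` class, the Jaccard similarity measure, and the `similarity * budget` score are all assumptions chosen to make "budget and similarity" concrete.

```python
from dataclasses import dataclass


@dataclass
class Ad:
    name: str
    labels: set[str]
    budget: float  # hypothetical advertiser bid, used as a score multiplier


def jaccard(a: set[str], b: set[str]) -> float:
    """Label overlap: size of the intersection divided by size of the union."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def rank_ads(user_labels: set[str], ads: list[Ad]) -> list[str]:
    """Score every ad as similarity * budget and return matching names, best first."""
    scored = [(jaccard(user_labels, ad.labels) * ad.budget, ad.name) for ad in ads]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [name for score, name in scored if score > 0]


def batch_push(users: dict[str, set[str]], ads: list[Ad], top_k: int = 1) -> dict[str, list[str]]:
    """One centralized pass over all users: the 'batch' part of the model."""
    return {uid: rank_ads(labels, ads)[:top_k] for uid, labels in users.items()}


users = {"u1": {"football", "phones"}, "u2": {"ai"}}
ads = [
    Ad("jersey", {"football"}, 1.0),
    Ad("phone", {"phones", "shopping"}, 3.0),
    Ad("course", {"ai", "chatgpt"}, 2.0),
]
pushes = batch_push(users, ads)
```

Note that in this sketch a larger budget can outrank a closer label match, and that every user's labels must sit in one place for the batch pass to run at all.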

Just as we became accustomed to centralized "thousand people, thousand faces," ChatGPT appeared. Over a year ago, so-called generative models were criticized for only being able to provide "a thousand people, one face": when the model's randomness (temperature) is lowered enough, different people who give the same input get the same output. And every single question from every person carries a non-trivial computational cost.
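The effect of temperature can be shown with a toy sampler. This is a sketch under stated assumptions: the three logits are made-up numbers standing in for a model's scores over candidate tokens, and `softmax_with_temperature` and `sample_token` are illustrative names, not any library's API.

```python
import math
import random


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Turn raw scores into probabilities; lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_token(logits: list[float], temperature: float, rng: random.Random) -> int:
    """Sample a token index; temperature 0 is treated as greedy (argmax) decoding."""
    if temperature <= 0:
        return max(range(len(logits)), key=lambda i: logits[i])
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


logits = [2.0, 1.0, 0.5]  # hypothetical scores for three candidate tokens

# Twenty "different people" (different random seeds) asking the same question.
cold = {sample_token(logits, 0.01, random.Random(seed)) for seed in range(20)}
warm_probs = softmax_with_temperature(logits, 1.0)
```

At a temperature of 0.01, nearly all of the probability mass sits on the highest-scoring token, so every seed picks the same index. At a temperature of 1.0 the other tokens keep a meaningful share, which is where the variation between users would come from.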

A model can, of course, be personalized enough, as long as someone pays the computational cost for that "one face."

If we set aside the cost factor for a moment, the idea that everyone could own a large model becomes achievable. The essence of the increasingly well-defined "AI Agent" is to turn the "undifferentiated" person seen by recommendation algorithms back into an individual subject served by a model.

Bringing the cost factor back in: over the past year or so, the thing that has fallen fastest is "model inference cost." For the same output capability, OpenAI's costs have dropped by a factor of at least several dozen. Many domestic model companies have seen even more dramatic cost reductions. Open-source (open-weight) models have given us the opportunity to run models on our own hardware without third-party services or additional fees...

For large enterprises, deploying models like GPT-4 privately in the cloud and loading private enterprise data lets the models serve internal needs only, without any need to "sell data" to tech companies. For individuals with some technical ability, deploying open-source models like Llama on a personal PC gives algorithmic models a path from "thousand people, thousand faces" to "one person, one face." For the vast majority of users, increasingly capable AI on phones and other hardware is slowly moving us toward "one person, one face" as well...

As mentioned in the previous article, we can no longer simply call current models Generative AI; let's call them Agents for now.

Agents can search for the latest information, process our own data, and gradually complete tasks we assign, such as shopping, booking tickets, etc.

Although things like news, shopping, and booking tickets still require access to third-party services, and will still "contribute" some user data just as we do when we use them ourselves, the commercial closed loop of recommend-then-push is being broken:

When an Agent searches on our behalf and processes the results for us, the ads loaded on search pages become ineffective.

When an Agent processes our preference data locally and points directly to valuable news, products, or ticketing information, pushed links become ineffective.
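The two cases above can be sketched as a local post-processing step. This is a hypothetical illustration: the `Result` class, its `sponsored` flag, and the tag-weight preference format are assumptions, and real third-party services will not necessarily mark sponsored results this cleanly.

```python
from dataclasses import dataclass


@dataclass
class Result:
    title: str
    tags: set[str]
    sponsored: bool = False  # assumed to be detectable by the agent


def rank_locally(results: list[Result], preferences: dict[str, float], top_k: int = 3) -> list[str]:
    """Rank third-party results against preferences that never leave the device."""

    def score(result: Result) -> float:
        # Sum the locally stored weights of every tag the result carries.
        return sum(preferences.get(tag, 0.0) for tag in result.tags)

    organic = [r for r in results if not r.sponsored]  # ad slots are dropped
    ranked = sorted(organic, key=score, reverse=True)
    return [r.title for r in ranked[:top_k] if score(r) > 0]


# Preference weights stay in local storage; only the query goes out.
preferences = {"football": 2.0, "phones": 0.5}
results = [
    Result("Buy boots now", {"football"}, sponsored=True),
    Result("Match report", {"football", "news"}),
    Result("Phone review", {"phones"}),
    Result("Celebrity gossip", {"gossip"}),
]
picked = rank_locally(results, preferences)
```

The point of the design is where the matching happens: the service still sees the query, but the label bundle that drives the ranking is held and applied on the user's side.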

Of course, the above conclusions are quite idealized, and all of this is still at a "very early" stage.

But as models have progressed to their current state, the core driving factor has changed: "centralized" computing has become "decentralized" computing. The rapid reduction in costs is moving more and more data from "cloud computing" to "local computing."

This is the opportunity I see for taking back our own data.

Under such an opportunity, "centralization" and "decentralization" may coexist for a long time.

In fact, from the first moment I encountered the Internet, I believed it was "decentralized."