Concerns that the Scaling Law has hit a bottleneck are rapidly intensifying, fueled by both alleged internal leaks from OpenAI and a "definitive word" from Ilya Sutskever.

At the same time, an increasing number of independent media outlets are reporting that Gemini and Claude have also encountered bottlenecks. It seems as if an "AI Winter" has suddenly returned.

However, looking at the major US tech giants this week, while there hasn't exactly been an "unstoppable surge," there certainly hasn't been a "massive collapse" either. Logically, if the market truly believed a winter was coming, we would see a crash of at least ten points, similar to what happened in the summer.

So, where is the problem? Have we really hit a "Data Wall"—a lack of high-quality data?

First of all, data shortage has been the biggest issue since last year. But the shortage of fine-tuning data and evaluation data may be even more severe than the shortage of pre-training data. In essence, it is a shortage of "human labor."

"Synthetic data" is increasingly used to produce fine-tuning and evaluation data, which greatly reduces the workload of human "supervision." Even so, two factors keep the human labor cost of these two types of data very high:

1. As pre-training data has scaled rapidly (by more than ten times) thanks to increased availability, demand for reinforcement learning data has surged, and keeping that data high-quality requires extensive manual intervention.
2. As models cover a broader range of topics, evaluation data has become woefully insufficient. Expanding into new fields and adding more "corner cases" requires significant human participation, and that labor has to come from "experienced experts" who deeply understand the application scenarios.

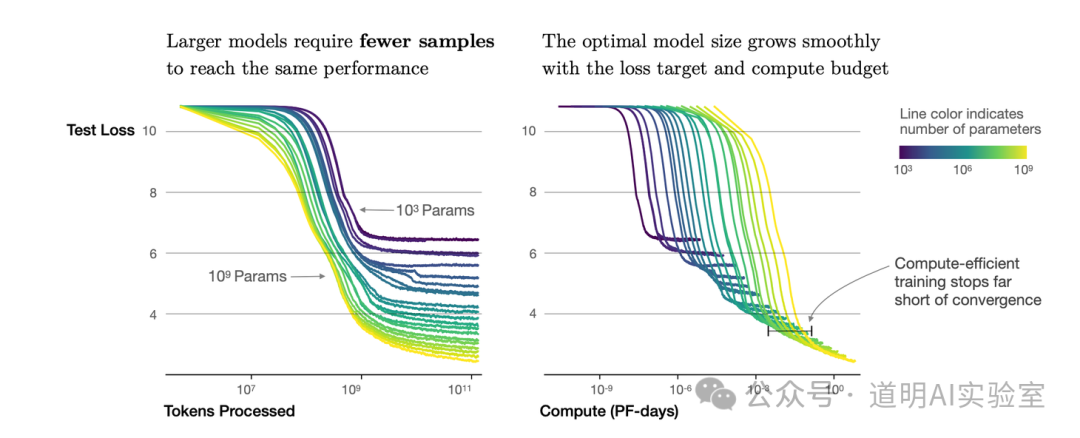

Meanwhile, without matching growth in the latter two types of data, the marginal utility of adding pre-training data alone has diminished rapidly under current compute constraints.
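To make the idea of diminishing marginal utility concrete, here is a minimal sketch. It assumes a Chinchilla-style parametric loss of the form L(N, D) = E + A/N^alpha + B/D^beta, with constants close to the published Chinchilla fit, used purely for illustration. The fixed model size stands in loosely for a compute ceiling, and the function names are hypothetical. It does not model fine-tuning or evaluation data at all; it only shows that each additional tenfold increase in pre-training tokens buys a smaller loss reduction.

```python
# Illustrative sketch only: a Chinchilla-style parametric loss,
#   L(N, D) = E + A / N**ALPHA + B / D**BETA
# The constants approximate the published Chinchilla fit and are an
# assumption here; they say nothing about any specific production model.
E, A, B = 1.69, 406.4, 410.7
ALPHA, BETA = 0.34, 0.28


def loss(n_params: float, n_tokens: float) -> float:
    """Estimated pre-training loss for a model size and token count."""
    return E + A / n_params**ALPHA + B / n_tokens**BETA


def gains_per_10x(n_params: float, start_tokens: float, steps: int) -> list[float]:
    """Loss reduction from each successive 10x increase in tokens,
    holding model size fixed (a rough stand-in for a compute ceiling)."""
    tokens = [start_tokens * 10**i for i in range(steps + 1)]
    losses = [loss(n_params, t) for t in tokens]
    return [losses[i] - losses[i + 1] for i in range(steps)]


# Hypothetical setting: a 70B-parameter model, starting from 1T tokens.
gains = gains_per_10x(n_params=70e9, start_tokens=1e12, steps=3)
for i, g in enumerate(gains, start=1):
    print(f"10x step {i}: loss improves by {g:.4f}")
```

Under these assumed constants, every tenfold increase in data still helps, but each step helps less than the one before it, which is the shape of the argument above.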

Therefore, the truth of the story might be closer to the observations I made in the second quarter:

1. Single-cluster capacity will serve as the ceiling for the Scaling Law until Blackwell is officially deployed.
2. The gap in high-quality fine-tuning and evaluation data might be tenfold or more. Although this is a "labor" shortage, it cannot be solved simply by hiring more people, because experts are not easily found.

Finding effective, cost-controlled "high-quality synthetic data" will become the most important direction forward.

Models may indeed have "hit a wall," namely a "Data Wall," but breaking through it will depend on improvements in compute clusters and a transformation in how data is generated. This might be what OpenAI's Sam Altman, Anthropic's Dario Amodei, and Ilya all actually mean.

In the two years since the debut of ChatGPT, the rapid progress in model capabilities has been obvious to all. More and more people have become inseparable from these models in their daily study and work, and they can now clearly identify the models' strengths and boundaries.

From chip process walls, interconnect walls, memory walls, and network walls, to data walls and application walls—technological progress is a continuous process of breaking down walls.

Although the usual way to break walls is through "indirect routes," in this era of "intelligence" driven by data, data remains the greatest challenge and the only truly vital asset.