Following the anticipated earnings report, which showed a slowdown in cloud growth and an increase in capital expenditure, Google DeepMind has officially released Gemini 2.0.

The biggest difference from OpenAI is the rollout: every time OpenAI releases a model, it is "vaporware" for most people, and even paid users have to wait a significant period before it is gradually rolled out to them.

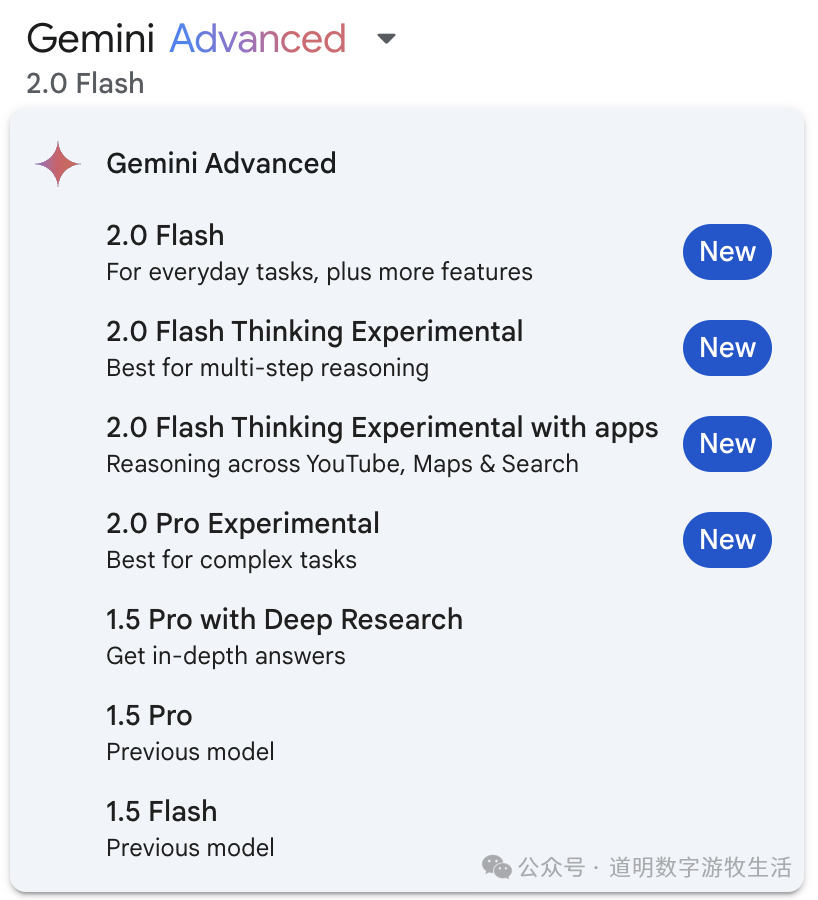

In contrast, whenever Google DeepMind releases a new model, it is open to developers and trusted testers for free on day one, and then opened to all users after a short period. The sign that this has happened shows up in the Gemini web version or app, where more model choices appear: 2.0 Flash without the "Experimental" tag signifies the "official release." However, this is limited to Gemini Advanced users, that is, those who pay for the monthly Google One subscription.

Objectively speaking, both OpenAI's and Google's release practices are somewhat baffling, but each gives users a different thing to "brag" about.

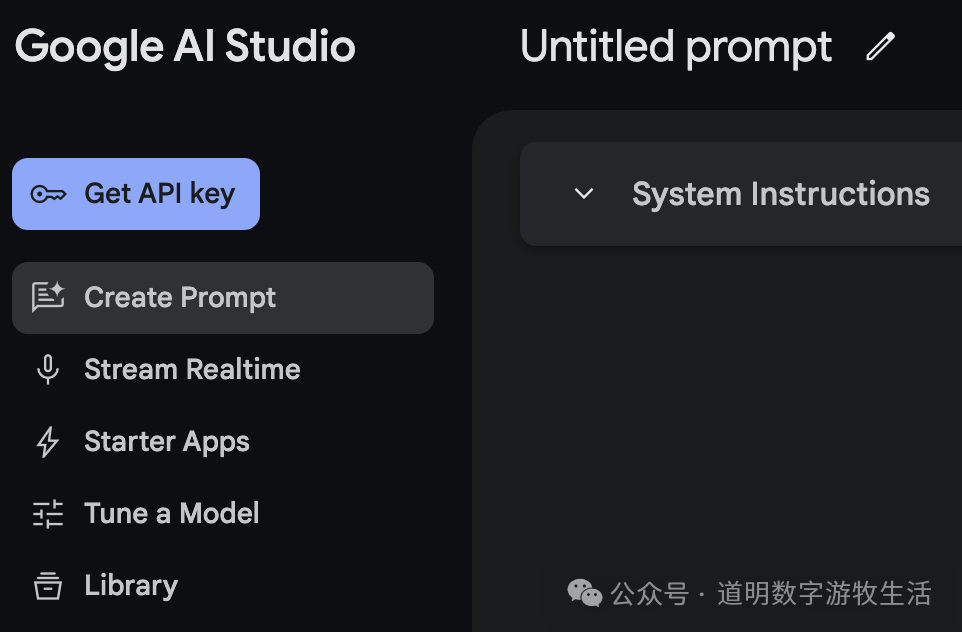

In terms of model usage, I basically use Gemini through two channels: one is Google AI Studio (which requires an application to Google, though approval is very easy now), and the other is via API calls (mainly through Obsidian plugins).

AI Studio might be the best AI model client available today. Previously just web-based, it can now be opened as a standalone Web App window on a computer. In the past, every conversation had to be saved manually; now, they are automatically saved to the Google Drive associated with the account (a sort of commitment to user data protection, if you choose to believe it).

Today's AI Studio also integrates many new features, including direct screen sharing and real-time voice conversations with the model (the very feature OpenAI emphasized during the GPT-4o launch last year but has yet to fully release). The experience is excellent. You can also quickly configure AI-powered apps.

I tested more of these features fairly thoroughly on the day the model was first introduced.

First Look at Gemini 2.0: "Gemini 2.0 Establishes Google's AI Dominance in 2025" (Dao Ming, Public Account: Dao Ming Digital Nomad Life)

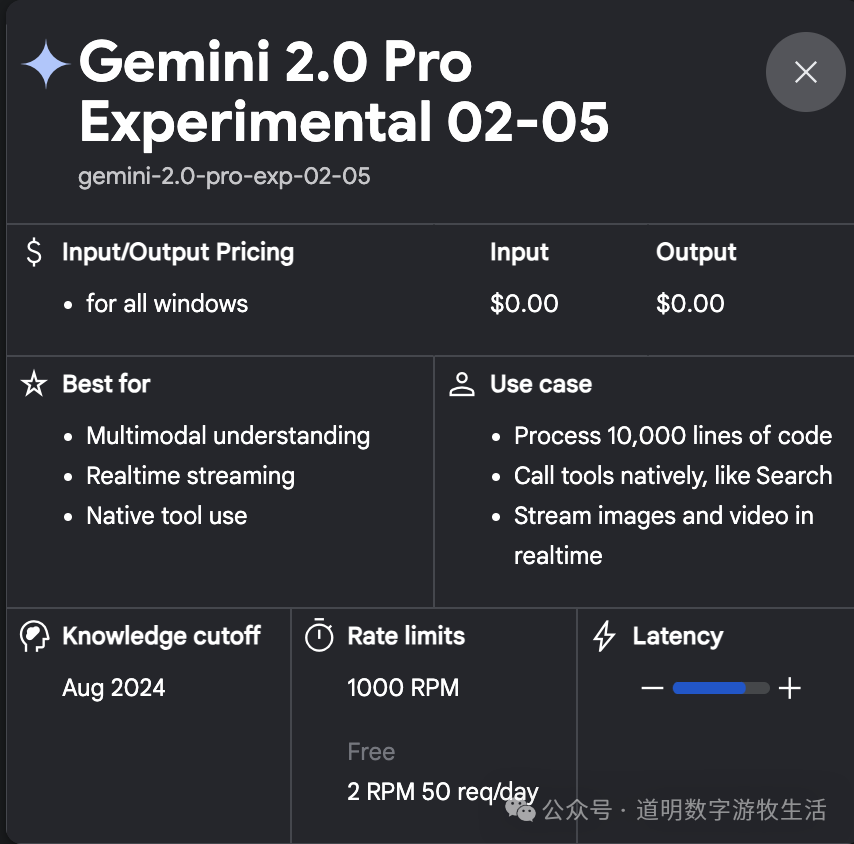

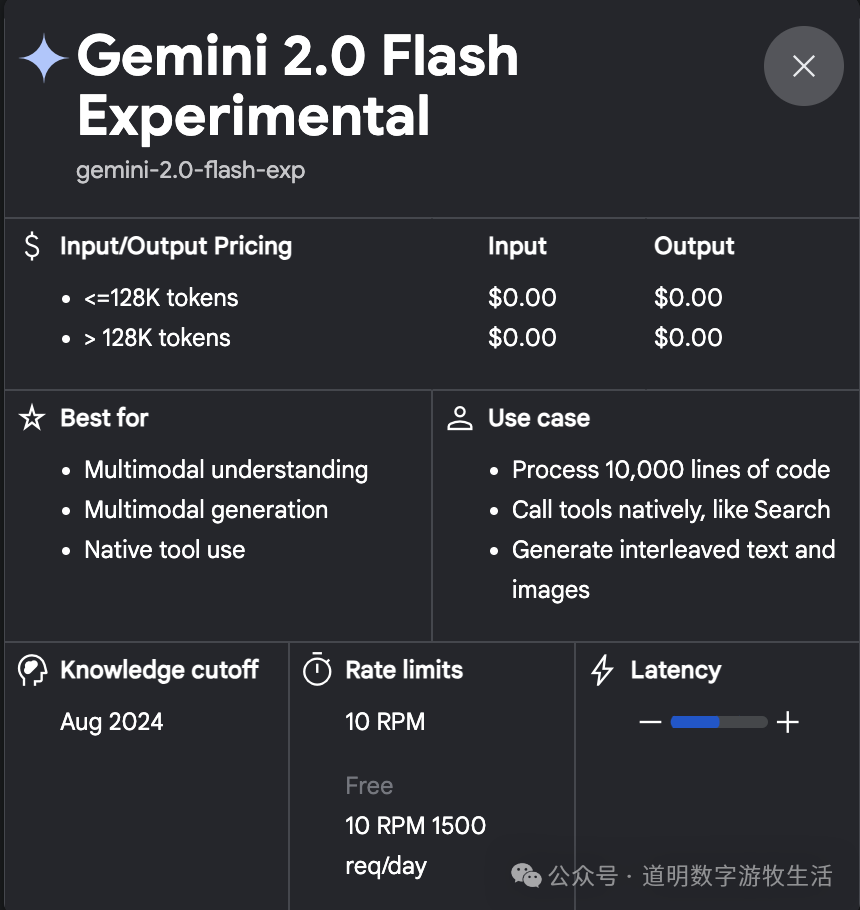

Most importantly, the use of these models is free—not only within AI Studio but also via daily free API quotas.

Taking the newly launched Gemini 2.0 Pro (a superior model to Flash) as an example, you can make 50 calls per day. The more than capable Flash model allows for 1,500 calls per day. For my daily routine tasks, this is more than enough.
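To make those daily limits concrete, here is a minimal sketch of a client-side counter that spends the Pro quota first and falls back to Flash once it runs out. The limits (50 and 1,500 calls per day) are the figures quoted above; the names `DailyQuota` and `pick_tier` are hypothetical, no real Gemini API call is made, and resetting on the local calendar day is an assumption rather than documented behavior.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Dict, Optional

# Daily free-call limits as quoted in this article; real limits may differ or change.
DAILY_LIMITS = {"pro": 50, "flash": 1500}


@dataclass
class DailyQuota:
    """Hypothetical client-side counter of free calls used per model tier."""
    limits: Dict[str, int] = field(default_factory=lambda: dict(DAILY_LIMITS))
    used: Dict[str, int] = field(default_factory=dict)
    day: date = field(default_factory=date.today)

    def _roll_over(self, today: date) -> None:
        # Assumption: counters reset when the local calendar day changes.
        if today != self.day:
            self.day = today
            self.used = {}

    def remaining(self, tier: str, today: Optional[date] = None) -> int:
        self._roll_over(today or date.today())
        return self.limits[tier] - self.used.get(tier, 0)

    def try_consume(self, tier: str, today: Optional[date] = None) -> bool:
        """Record one call if quota remains; return False when exhausted."""
        if self.remaining(tier, today) <= 0:
            return False
        self.used[tier] = self.used.get(tier, 0) + 1
        return True


def pick_tier(quota: DailyQuota, prefer: str = "pro",
              today: Optional[date] = None) -> Optional[str]:
    """Use the preferred tier while it has quota, otherwise fall back to the others."""
    order = [prefer] + [t for t in quota.limits if t != prefer]
    for tier in order:
        if quota.try_consume(tier, today):
            return tier
    return None  # every tier is exhausted for today
```

In use, a plugin or script would call `pick_tier(quota)` before each request and send the request to whichever model tier it returns, skipping the request when it returns `None`.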

For developers, it provides an immense sense of satisfaction: trying out the best models for free, letting one's ideas run wild, and receiving immediate feedback.

Looking back, when Google released Gemini 1.0, it likely intended to charge for it. However, a series of subsequent negative reviews (including frequent failures of its image generation models) led Google to shift its strategy.

Reputation among developers is the most important kind of reputation in the AI field. "Pleasing developers" is a formula Google knows well.

However, this brings up a massive commercial puzzle: where is the AI revenue? Google still has its strong core advertising revenue to lean on, and its search business seems completely unaffected by large language models.

But the lower-than-expected growth in cloud services remains a long-term issue (last year, Gemini-driven Google One subscriptions contributed significantly to cloud outperformance, but under a strategy of free model access, the necessity for users to pay specifically for Gemini is decreasing).

Google has advertising revenue to support it and its self-developed TPUs to continually drive down model usage costs—but what about the others?

What about pure model companies without an ecosystem? From a developer's perspective, I 100% support Google's strategy of free access. But from other perspectives? The trend of "too big to fail" giants bullying the market is only intensifying.

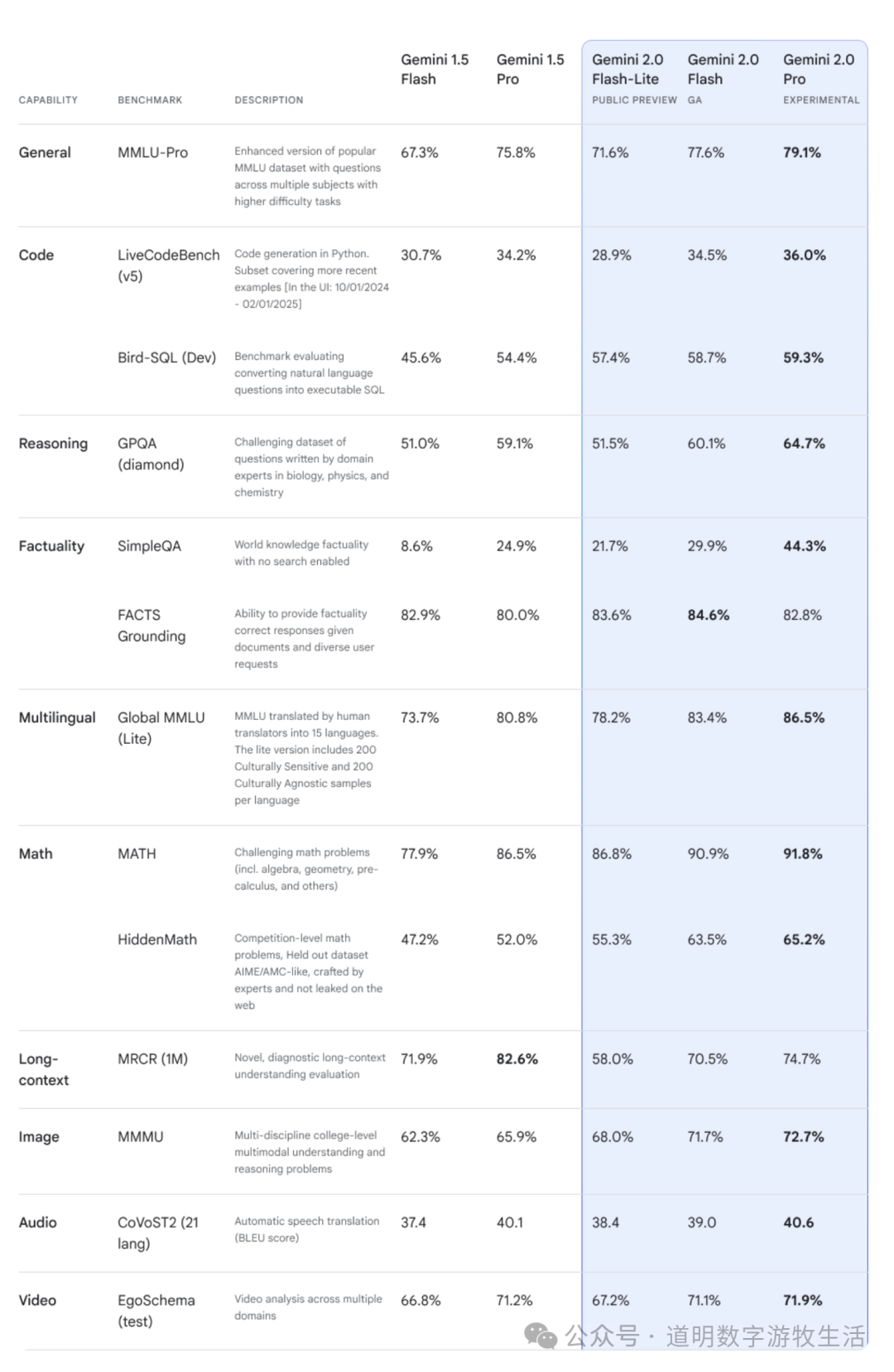

Returning to the model's capabilities: Google DeepMind provided evaluations for different versions of the model.

Gemini 2.0 shows significant performance improvements in text-related domains. However, in terms of multimodality—especially audio and video—there is almost no progress.

This likely has two causes: limitations in model scale and, more importantly, limitations in training data, specifically the volume of labeled data (not just assembly-line labeling by annotation workers, but high-quality labeling by professional model researchers aided by specialized tools).