![[DeepResearch-2]: DeepSeek-R1 and Distilled Models](https://payload-backend.dmquant.workers.dev/api/media/file/2025-02-09-deepresearch-2deepseek-r1与蒸馏模型-1n7rl4-1772017801150-146.png)

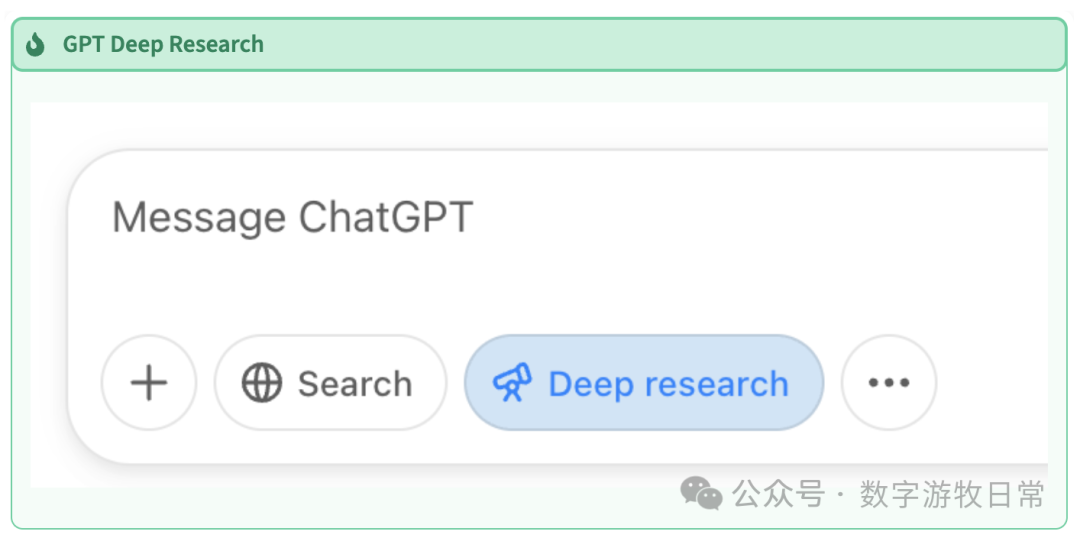

Continuing with Gemini Deep Research, I revised the prompt twice so that the report covers the following points more thoroughly:

- The relationship between DeepSeek-R1 and V3, and where the "thinking ability" comes from;

- The relationship between R1 and the distilled models;

- The lower hardware requirements for inference, which actually come from the distilled models rather than from the full R1;

- Verifiable reference materials for model comparisons.

As mentioned in the previous article, the models are undoubtedly powerful, but the key to a "good report" is "good searching." For known reasons, I cannot get an acceptable report by writing the prompt directly in Chinese; I have to generate the report in English first and then translate it into Chinese. To compare Gemini's and GPT's Deep Research more fairly, I had each of them write its own report.

DeepSeek-R1 vs. DeepSeek-V3: Unleashing the Power of "Thinking"

DeepSeek-R1 and DeepSeek-V3 represent significant advancements in open-source Large Language Models (LLMs). Both perform well across a wide range of tasks, but they differ in how they approach reasoning and problem-solving. DeepSeek-V3 is a Mixture-of-Experts (MoE) model that prioritizes efficiency and speed, which suits it to content generation, translation, and real-time interaction. DeepSeek-R1 is built on the V3 base model, so it shares the same MoE architecture, and adds Reinforcement Learning (RL) training to strengthen its logical reasoning.

The key difference lies in how the two models spend their "thinking" effort. DeepSeek-V3 answers directly: it predicts the response token by token from what it learned in training, without an explicit reasoning phase. This works well for creative writing or FAQs but struggles with complex reasoning. DeepSeek-R1 instead uses Chain-of-Thought (CoT) reasoning, breaking a problem into smaller, manageable steps. It goes through a "thinking" phase before it formulates an answer, which yields more structured and deliberate output. The difference is most visible in math, research, and logic-based tasks.
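To make the "thinking" phase concrete, here is a minimal sketch of separating R1's reasoning from its final answer. It assumes the raw response wraps the reasoning in `<think>...</think>` tags ahead of the answer; the helper name `split_reasoning` and the sample string are made up for illustration, and some serving APIs may return the reasoning in a separate field instead.

```python
import re

# Assumption: the reasoning is wrapped in <think>...</think> and the
# final answer follows the closing tag.
THINK_PATTERN = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_reasoning(response: str) -> tuple[str, str]:
    """Return (reasoning, answer) extracted from a raw model response."""
    match = THINK_PATTERN.search(response)
    if match is None:
        # No reasoning block found: treat the whole response as the answer.
        return "", response.strip()
    reasoning = match.group(1).strip()
    answer = response[match.end():].strip()
    return reasoning, answer


# Hypothetical response text, written by hand for this example.
sample = "<think>17 * 3 = 51, and 51 + 4 = 55.</think>\nThe result is 55."
reasoning, answer = split_reasoning(sample)
print("Reasoning:", reasoning)
print("Answer:", answer)
```

A response without a reasoning block, which is what a direct-answer model like V3 would typically produce, simply comes back with an empty reasoning string.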

DeepSeek-R1 vs. Distilled Models: A Tale of Two Approaches

DeepSeek AI has extended access to advanced reasoning by releasing distilled models based on Qwen and Llama architectures. The original DeepSeek-R1 model boasts 671 billion parameters and, while powerful, requires immense computational resources. Distilled models, ranging from 1.5B to 70B parameters, are smaller, more efficient, and easier to deploy in resource-constrained environments.

The main difference is the training methodology. DeepSeek-R1 underwent a multi-stage process involving RL and Supervised Fine-Tuning (SFT). Distilled models were trained by fine-tuning smaller base models (Qwen and Llama) using reasoning data generated by DeepSeek-R1. This effectively transfers the knowledge and reasoning patterns of the larger model to smaller architectures.
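The data side of this distillation recipe can be sketched roughly as follows: each sample generated by the teacher (R1) becomes one prompt/completion pair for supervised fine-tuning of the smaller student. Everything here is an illustrative assumption rather than DeepSeek's actual pipeline: the `TeacherSample` type, the `<think>` completion layout, and the exact-match filter against a reference answer are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TeacherSample:
    """One response generated by the teacher model (hypothetical schema)."""
    prompt: str
    reasoning: str
    answer: str


def keep_sample(sample: TeacherSample, reference_answer: str) -> bool:
    """Assumed quality filter: keep only samples whose answer matches a reference."""
    return sample.answer.strip() == reference_answer.strip()


def to_sft_example(sample: TeacherSample) -> dict:
    """Format a teacher sample as a prompt/completion pair for student SFT."""
    completion = f"<think>\n{sample.reasoning}\n</think>\n\n{sample.answer}"
    return {"prompt": sample.prompt, "completion": completion}


# Hand-written toy data standing in for teacher generations.
samples = [
    (TeacherSample("What is 2 + 2?", "2 plus 2 equals 4.", "4"), "4"),
    (TeacherSample("What is 3 * 3?", "3 times 3 equals 6.", "6"), "9"),
]
dataset = [to_sft_example(s) for s, ref in samples if keep_sample(s, ref)]
print(len(dataset), "example(s) kept")
print(dataset[0]["completion"])
```

The student never sees the teacher's weights or logits in this setup; it only imitates the teacher's written reasoning, which is why the same data can be used for both Qwen and Llama base models.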

Deployment Costs: Full vs. Distilled Models

Deploying the full DeepSeek-R1 is expensive and requires specialized infrastructure. Distilled models offer a cost-effective alternative. For example, deploying DeepSeek-R1-Distill-Llama-70B on Amazon Bedrock costs approximately $0.1570 per minute, significantly lower than the full model.
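To put the per-minute figure in perspective, the short calculation below converts it into hourly, daily, and monthly costs. It assumes the deployment is kept running and billed for every minute, and it uses a 30-day month; real bills depend on actual usage and on the provider's current pricing.

```python
# Per-minute price quoted above for DeepSeek-R1-Distill-Llama-70B.
PRICE_PER_MINUTE_USD = 0.1570


def cost_for(minutes: float, price_per_minute: float = PRICE_PER_MINUTE_USD) -> float:
    """Cost in USD for a given number of billed minutes, rounded to cents."""
    return round(minutes * price_per_minute, 2)


# Assumption: the model stays up around the clock.
hourly = cost_for(60)
daily = cost_for(60 * 24)
monthly = cost_for(60 * 24 * 30)  # 30-day month assumed

print(f"Per hour:  ${hourly:,.2f}")
print(f"Per day:   ${daily:,.2f}")
print(f"Per month: ${monthly:,.2f}")
```

Under these assumptions the quoted rate works out to about $9.42 per hour, $226.08 per day, and $6,782.40 per month.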

Performance Benchmarking Results

Below is the performance of DeepSeek-R1 and its distilled versions on key benchmarks:

AIME 2024 (Math Competition)

| Model | Pass@1 |
|---|---|
| DeepSeek-R1 | 79.8% |
| DeepSeek-R1-Distill-Qwen-1.5B | 28.9% |
| DeepSeek-R1-Distill-Qwen-7B | 55.5% |
| DeepSeek-R1-Distill-Qwen-14B | 69.7% |
| DeepSeek-R1-Distill-Qwen-32B | 72.6% |
| DeepSeek-R1-Distill-Llama-8B | 50.4% |
| DeepSeek-R1-Distill-Llama-70B | 70.0% |

MATH-500

| Model | Pass@1 |
|---|---|
| DeepSeek-R1 | 97.3% |
| DeepSeek-R1-Distill-Qwen-32B | 94.3% |
| DeepSeek-R1-Distill-Llama-70B | 94.5% |

Codeforces (Coding Rating)

| Model | Rating |
|---|---|
| DeepSeek-R1 | 2029 |
| DeepSeek-R1-Distill-Qwen-32B | 1691 |
| DeepSeek-R1-Distill-Llama-70B | 1633 |

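These results show that distillation transfers much of R1's reasoning capability to smaller architectures: on AIME 2024 the 32B and 70B distilled models reach 72.6% and 70.0% against R1's 79.8%, and on MATH-500 they trail R1 by only about three points. The Qwen-based models are particularly strong in math, with the 14B Qwen model (69.7%) nearly matching the 70B Llama model (70.0%) on AIME 2024.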
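One rough way to read the AIME 2024 table is to express each distilled model's score as a percentage of the full model's score. The sketch below does that with the numbers reported above; the "share of R1's score" metric and the function name `relative_to_teacher` are my own illustration, not something taken from the benchmark reports.

```python
# AIME 2024 Pass@1 scores (percent), copied from the table above.
AIME_2024 = {
    "DeepSeek-R1": 79.8,
    "DeepSeek-R1-Distill-Qwen-1.5B": 28.9,
    "DeepSeek-R1-Distill-Qwen-7B": 55.5,
    "DeepSeek-R1-Distill-Qwen-14B": 69.7,
    "DeepSeek-R1-Distill-Qwen-32B": 72.6,
    "DeepSeek-R1-Distill-Llama-8B": 50.4,
    "DeepSeek-R1-Distill-Llama-70B": 70.0,
}


def relative_to_teacher(scores: dict, teacher: str = "DeepSeek-R1") -> dict:
    """Each model's score as a percentage of the teacher's score."""
    base = scores[teacher]
    return {
        model: round(score / base * 100, 1)
        for model, score in scores.items()
        if model != teacher
    }


relative = relative_to_teacher(AIME_2024)
# Print the distilled models from strongest to weakest.
for model, share in sorted(relative.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{model}: {share}% of R1's score")
```

By this measure the 32B Qwen model retains roughly 91% of R1's AIME 2024 score, while the 1.5B model retains about 36%.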

Conclusion

DeepSeek-R1 and its distilled models compete with top-tier closed models in reasoning performance while offering greater deployment flexibility. If reinforcement learning keeps advancing, DeepSeek-R1 and its distilled versions are well positioned to remain among the leading open-source LLMs.