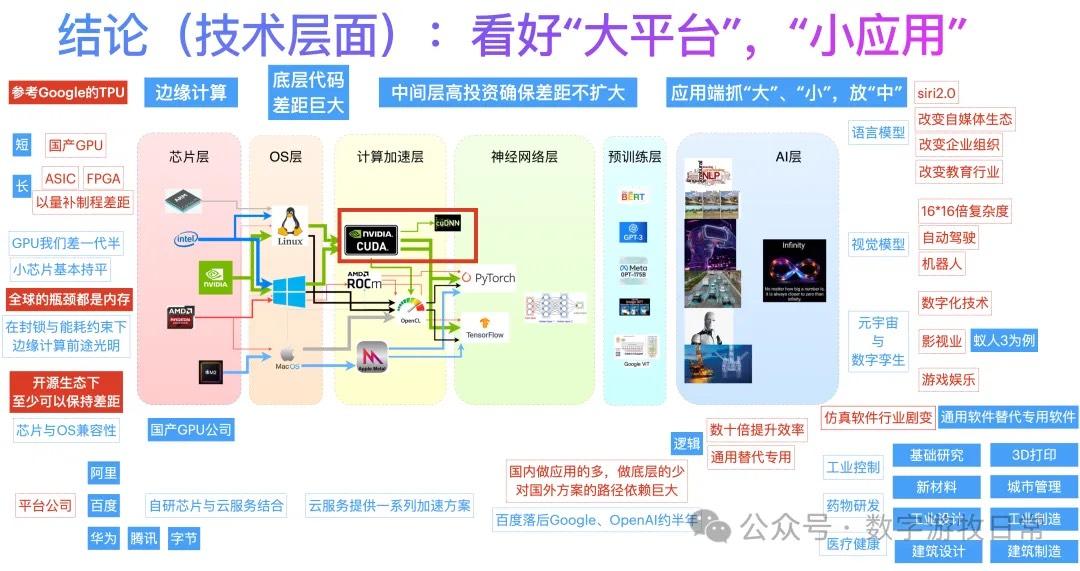

I made the image above exactly two years ago, when ChatGPT first went viral. In in-person discussions during the first half of 2023, it was always the first diagram I showed.

Two years on, the AI industry has changed dramatically. Looking back, though, there are few conclusions I would revise:

I have always believed that the gap between open-source and closed-source models would not keep widening; on the contrary, it was only a matter of time before open-source models caught up. The emergence of DeepSeek (DS) seems to bear this out. Although I might not choose DS for high-stakes use cases because of its higher hallucination rate, the consensus is that its basic capabilities are now close to those of the best models.

In terms of hardware, memory bandwidth, rather than raw chip computing power, is the most critical constraint. This is a key reason major companies keep pushing to develop their own chips.
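To make the bandwidth point concrete, here is a rough back-of-envelope sketch. It assumes a hypothetical 70B-parameter dense model with 16-bit weights running on a hypothetical accelerator with 3 TB/s of memory bandwidth and 1 PFLOP/s of compute; these are illustrative round numbers, not the specs of any real chip, and KV-cache traffic and other overheads are ignored. During token-by-token generation every weight has to be streamed from memory at each decode step, so at small batch sizes the time spent reading memory dwarfs the time spent on arithmetic.

```python
# Back-of-envelope check of why LLM decoding at small batch sizes tends to be
# limited by memory bandwidth rather than by compute.
# All hardware and model numbers below are hypothetical round figures.

PARAMS = 70e9            # hypothetical dense model: 70B parameters
BYTES_PER_PARAM = 2      # 16-bit (FP16/BF16) weights
MEM_BW = 3e12            # hypothetical accelerator: 3 TB/s memory bandwidth
PEAK_FLOPS = 1e15        # hypothetical accelerator: 1 PFLOP/s dense compute


def decode_step_times(batch_size: int) -> tuple[float, float]:
    """Return (memory_time_s, compute_time_s) for one decode step.

    Simplifying assumptions: every weight is read once per step and shared by
    the whole batch, KV-cache reads are ignored, and each generated token
    costs about 2 * PARAMS FLOPs (one multiply-add per weight).
    """
    mem_time = PARAMS * BYTES_PER_PARAM / MEM_BW
    compute_time = 2 * PARAMS * batch_size / PEAK_FLOPS
    return mem_time, compute_time


if __name__ == "__main__":
    for batch in (1, 8, 64, 512):
        mem_t, comp_t = decode_step_times(batch)
        bound = "memory" if mem_t > comp_t else "compute"
        step = max(mem_t, comp_t)  # the slower of the two sets the step time
        print(f"batch={batch:4d}  mem={mem_t * 1e3:6.2f} ms  "
              f"compute={comp_t * 1e3:6.2f} ms  -> {bound}-bound, "
              f"~{batch / step:,.0f} tokens/s")
```

Under these assumptions, decoding stays memory-bound until the batch grows to a few hundred sequences, so adding raw FLOPs alone does little for interactive serving, where bandwidth directly caps tokens per second.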

Eventually the market will not lack models; what it will lack is use cases, and costs will have to keep falling. Cloud providers with strong ecosystems may be the ones who have the last laugh.

For a variety of reasons, the domestic landscape has shifted far more than the overseas one. A certain giant I was optimistic about has indeed "fallen rapidly." It may still have a chance to turn things around, but this was my most mistaken call at the time.

Multimodality is where the bigger future lies.

Exclusive models, an ecosystem, economies of scale, low cost, or flexibility: a player needs at least one or two of these. Being stuck in the middle is a very uncomfortable position.

In practice, deploying models in specific industries has progressed much more slowly than expected. It seems that accumulating data and re-engineering processes for each application cannot be done in a short time.

From where we stand now, a familiar cycle seems to be repeating:

Foundation models are once again the main battleground. If DS marks the breakthrough in catching up on language models, the next breakthrough should come in multimodality.

Of the three factors that determine whether applications get deployed (model capability, low cost, and ecosystem/use cases, including data preparation), the needle seems to have moved toward low cost and ecosystem/use cases.

A major difference from two years ago: back then, the prospects for charging for models looked broad. Today, open-source models are freely available on one side; on the other, the inference cost of serving DS-R1-level capabilities is actually not low, while the ceiling on what can be charged has been pushed down.

Cloud services that simply buy servers and sell inference APIs will likely struggle to cover their costs. There are only two ways to bring costs down: self-developed chips at sufficient volume (at the scale and maturity of Google's TPU, for example) and a sufficiently strong ecosystem.

In the cloud, bigger is better.