It has been almost exactly three months since Google DeepMind released Gemini 2.0 (Gemini 2.0 established Google's AI dominance for 2025). The team hasn't released a major new version yet, but they have accomplished several things, three of which are worth mentioning:

They released the third version of their open-model (open-weight) series, Gemma 3 (Google's sudden release of the open-source Gemma 3 suggests that ~30B might be the sweet spot for open-source models);

A new experimental version of Gemini 2.0 Flash (Gemini2-Flash-Exp) was launched, featuring mind-blowing integrated text-to-image capabilities;

The underlying model for Deep Research was upgraded to Gemini 2.0.

Yes, the first review regarding Gemma 3, as linked above, was completed on day one. To be honest, it is indeed currently the best open-weight multimodal model, and its currently underestimated value will continue to manifest over time.

Regarding the second point, just today, a large number of demonstrations have appeared showcasing powerful automated text-image and image-editing capabilities. Although I know these features aren't a high priority for my specific use case yet, multimodal capabilities are indeed the dream of many creators: with a simple prompt, the model can generate a story, automatically storyboard it, and generate images for each frame—all without human intervention.

I actually tested several stories; here is a demonstration of a new story inspired by "Nezha." Below is a screenshot of the interaction, showing the capability for automated continuous output.

Below is the model's output copied over. I believe it could generate even longer stories and illustrations, but due to Google's unique "content safety filtering mechanism," when the model reached the ninth frame, I was unable to "safely generate" it after countless retries due to safety flags. Google actually provides an editing function, so I could likely continue the work by making modifications. However, I lack the talent of an animation screenwriter, so this will suffice as a demonstration of capabilities.

Visual Story: "Spirit of Flame and Breath of Wind"

Introduction: Inspired by the success of Nezha, this is a brand new story about two youths with elemental powers and how they break prophecies to become their own heroes.

Act One: Omen Appears, Destinies Intertwine

Scene One: The Descent of the Celestial Stones

Shot 1: Wide angle. The night sky is torn open, fiery meteors fall towards the border, forming burning craters—the Flame Spirit Stone descends.

Shot 2: Close-up. In the center of the crater, a crimson crystal radiates scorching light—the Flame Spirit Stone.

Shot 3: Long shot. Deep within the Wind Valley, a beam of cyan light shoots into the sky, forming a powerful atmospheric vortex—the Wind Breath Stone appears.

Shot 4: Close-up. In the center of the vortex, an emerald crystal flows with soft light—the Wind Breath Stone.

Shot 5: Interior. Border military camp. Murong Xue gives birth to an infant surrounded by a faint fiery glow. General Ling Xiao looks at the child with concern.

Shot 6: Exterior. Wind Valley. Tribespeople surround a swaddled baby girl as gentle breezes circle her. The tribal leader looks solemn.

Scene Two: Awakening of Power

Of course, Gemini 2.0 Flash-Exp also features image editing functions, and its image modification capability is quite good.

With multimodal support, Gemini 2.0 Flash-Exp can even automatically generate WeChat Official Account articles. If the platform doesn't take measures, then perhaps...

Third Update: Deep Research powered by Gemini 2.0.

Indeed, Deep Research has become a crucial part of many of my workflows.

To be fair, before this, OpenAI's Deep Research was the only one I considered "usable," so I was very much looking forward to the performance of Gemini 2.0-enhanced version.

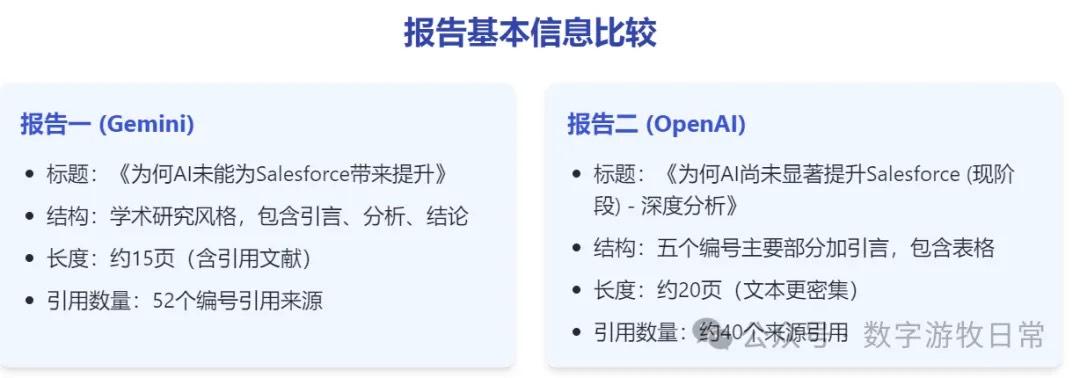

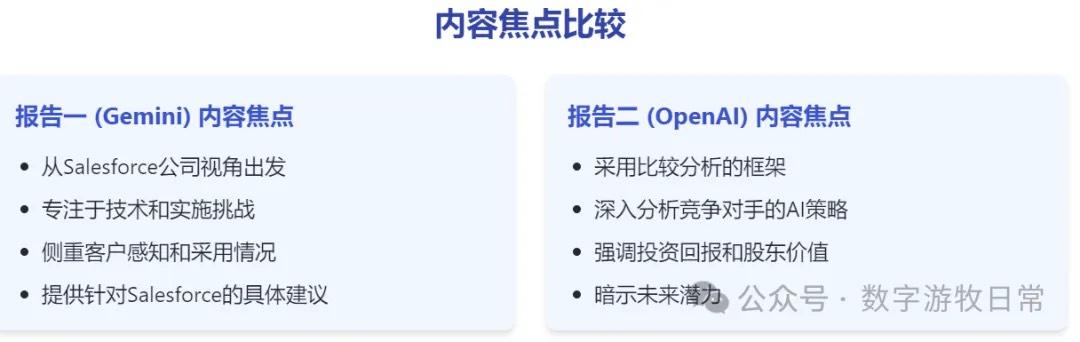

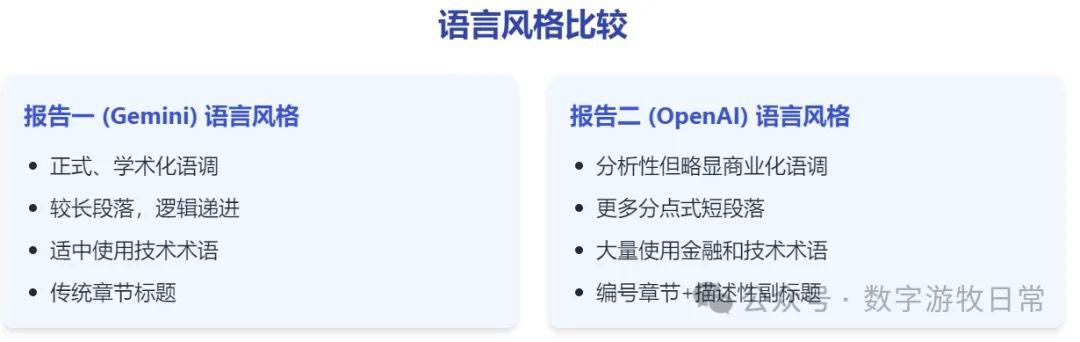

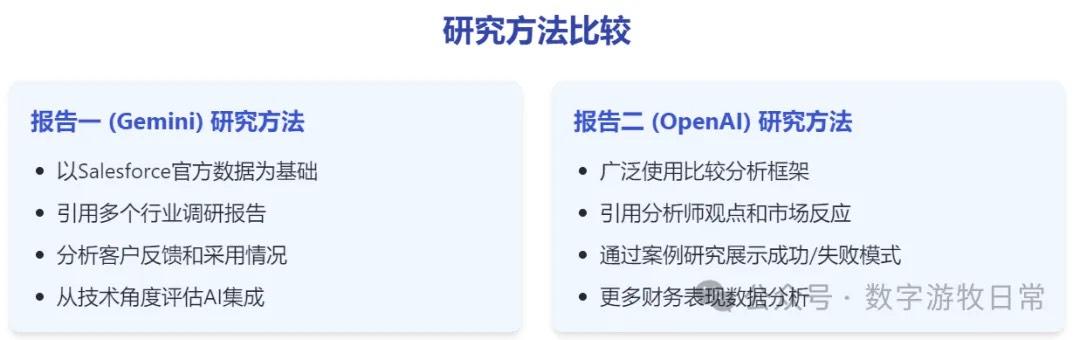

I published an article about Salesforce this morning, and the majority of the source material for the base report was generated by OpenAI's Deep Research. Therefore, I used the same process and prompts to let Gemini 2's Deep Research give it a try.

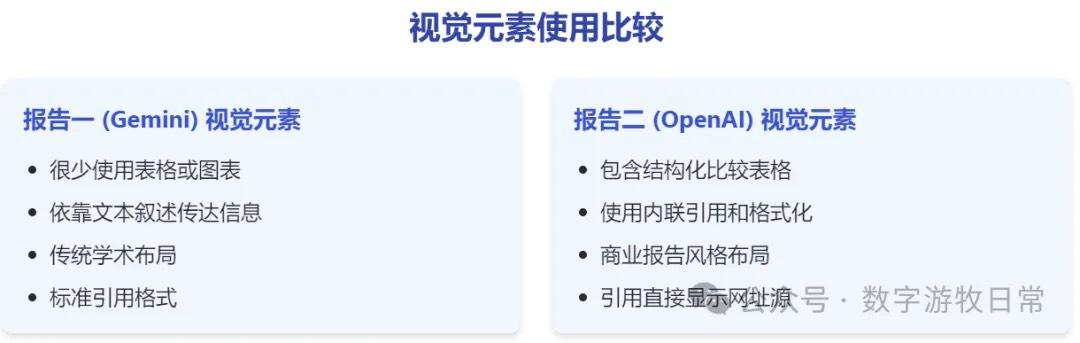

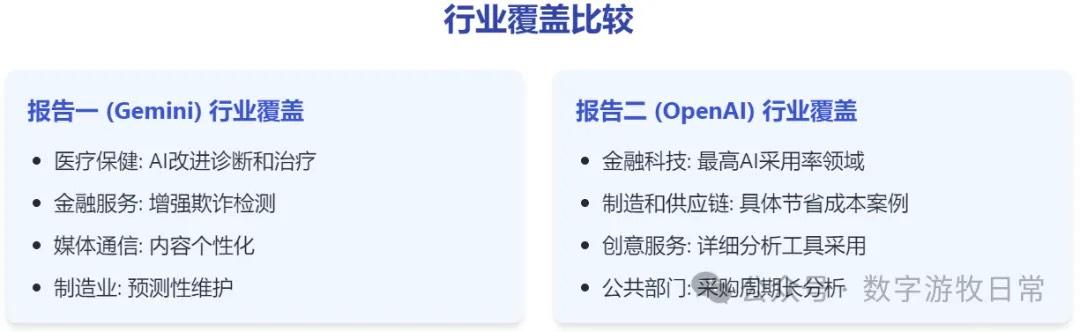

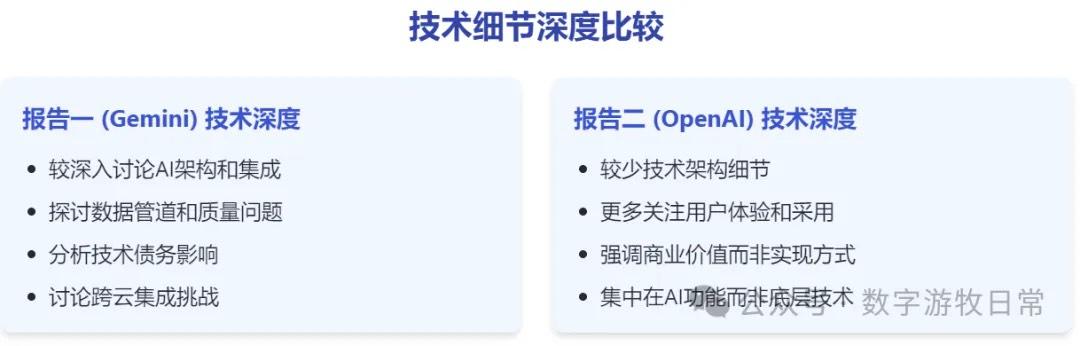

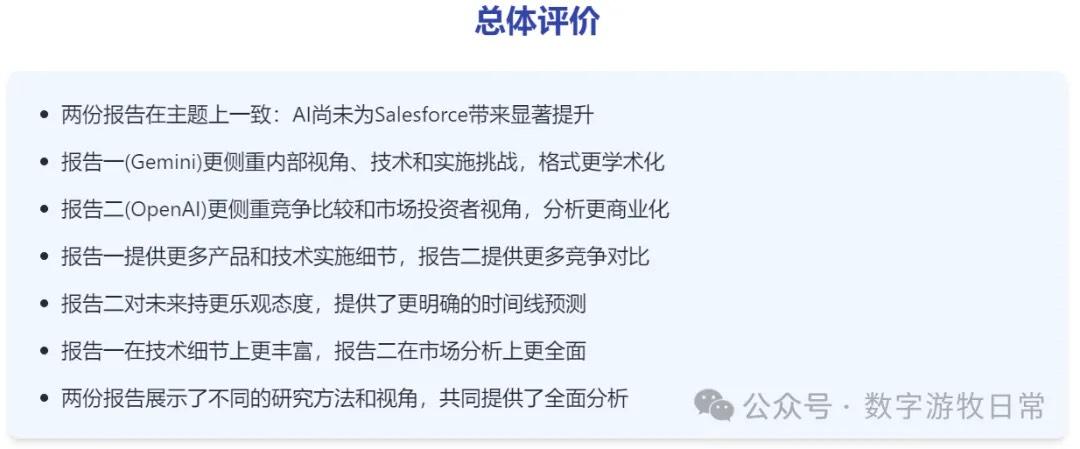

From a subjective assessment, I think the two models are basically on par. OpenAI's output is longer and more detailed, but Gemini 2.0 searches more data sources, and the reasoning process looks more complete. The most significant feature is that the cited data is more up-to-date.

(This point is clearly because Gemini cited newer quarterly financial data.)

Yes, these examples and comparisons reinforce the conclusion I reached late last year: Google DeepMind is demonstrating increasing dominance in the AI field.

Actually, the most important reason for this "come from behind" situation is Google's massive "elephant-level" scale and its enormous first-mover advantage.

But the real deciding factor lies in the choice between two paths: self-developed chips and long-context support (one million to two million tokens of input support). The former allows Google to prepare, train, release, and produce applications at its own pace; the latter is actually the most critical feature for the implementation of generative AI (everyone wants long-context, but only Gemini running on its own chips can afford to be so generous).

While OpenAI is considering launching a higher-priced ($2,000?) monthly subscription service for healthier cash flow, Google can still generously provide a high daily quota of free calls to its latest models. While other model companies struggle to find individual application use cases or go to great lengths to create viral hits, Google only needs to methodically integrate its ecosystem...

By the way, if these text-to-image and image-editing features are integrated into Samsung's Galaxy AI (which I believe is only a matter of time), the AI phone might truly establish its place.

Final thoughts: The whole of 2025 might prove one thing: the best model is the best AI application. The "intricate carvings" we see now are likely just fleeting "ephemera."

Because, if models continue to progress, their capabilities will eventually cover those "carvings"; conversely, if models stop progressing, then no matter how much you carve, it will be in vain.