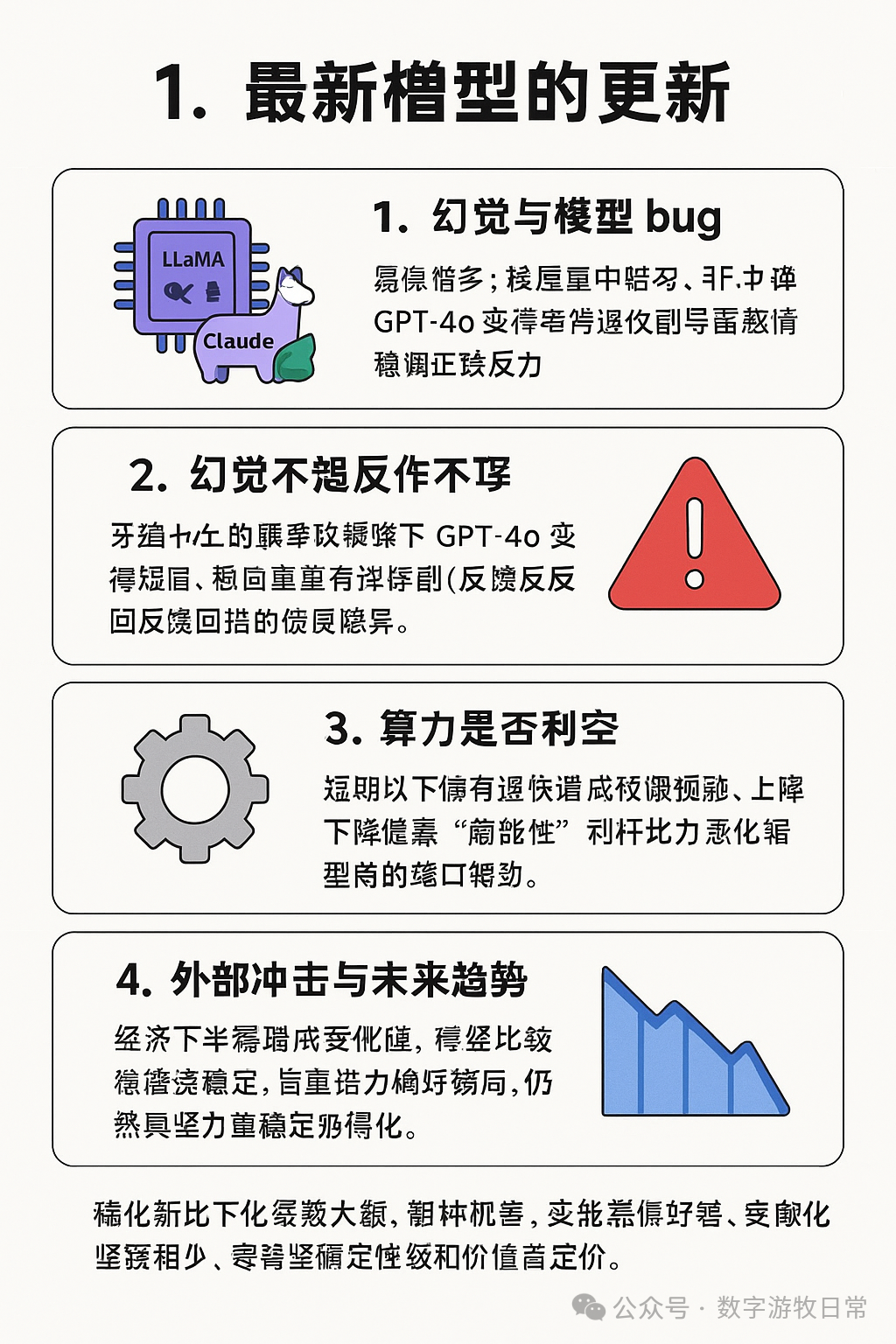

Note: The screenshot above probably captures more than half of this article's message: model progress is running into difficulties, and some improper training methods are even making models worse.

It has been a while since I wrote a commentary on AI. There have been many developments in the meantime, but all of them needed time to play out ("letting the bullets fly for a while"), and outside noise stirred up a lot of controversy. So I decided to change my usual rhythm, do some desk research instead, and take a fresh look to see whether things would become clearer.

Latest Model Updates

From Llama-4, to the official o3 release and o4-mini, including the recent Qwen-3...

The scorecards released with every launch are exciting, but the results in actual use always seem to be mediocre or even disappointing.

On the positive side, in the two-plus years since the advent of ChatGPT, the actual application scenarios for AI have expanded significantly, and users can quickly discover a model's deficiencies from practical use cases.

However, a more objective assessment is that the scoring systems have fallen behind. Models can easily achieve high scores through "exam-oriented training."

Llama-4 and Qwen-3 both used more pre-training data, yet the actual performance of Llama-4 falls far short of what the Meta team claimed.

This suggests that simply piling on more data has begun to backfire. Data must emphasize "quality" rather than "quantity." It may also indicate that useful internet data has been nearly "exhausted."

Of course, there are positive examples. The transition from Claude-3.5 to Claude-3.7 was a very significant leap, especially in programming capabilities. We all know this is primarily due to the high-quality synthetic data generated by the Claude team in the programming domain.

Even greater progress came from Gemini-2.0 to Gemini-2.5. When Gemini-2.0 Pro was released, I said it was the best model; now, Gemini-2.5 has given more people that same feeling.

Then there is OpenAI’s earlier Deep Research and the image generation capabilities of the GPT-4o model...

Indeed, the best models remain Gemini, GPT, and Claude. I am increasingly certain they have formed a positive feedback loop: using high-quality data generated by their own models to improve the capabilities of their future models.

Others may still be locked out of this "positive feedback" loop.

I believe this "positive feedback" effect will continue for a while, but I am more inclined to believe that progress is slower than the market expects, and the gap is actually widening.

At the same time, this kind of "positive feedback" alone won't carry us into the next phase of model development.

Hallucinations and Model Bugs

OpenAI's official o3 and o4-mini releases explicitly mentioned the issue of "increased hallucination rates." In actual use, I have discovered more strange "hallucinations." Often, the model's reasoning process shows it "knows" the correct answer, but it provides a wrong answer at the very end and cannot be corrected through dialogue.

Similarly, a recent update to GPT-4o was found to make the model's responses "sycophantic." OpenAI's official response essentially implied that when they added user feedback (👍 and 👎) into the post-training phase, this sycophancy occurred—the model stopped adhering to the "knowledge" it had previously been trained on.

Model instability—whether from updates or inherent randomness—is becoming the biggest obstacle to the large-scale adoption of models in practical applications.

If we broaden the definition and call all these errors "hallucinations," then what matters is not the "hallucination rate," but the "hallucination volume."

Previous comparisons between AI error rates and human error rates are fundamentally flawed: an AI with 99% accuracy but 100 times the efficiency of a human will commit ten times as many errors as a human with 90% accuracy.
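The arithmetic behind that comparison can be checked with a minimal sketch. The workload of 1,000 tasks and the helper name `expected_errors` are illustrative assumptions, not figures from any benchmark; only the 90%, 99%, and 100x numbers come from the text above.

```python
def expected_errors(tasks: float, accuracy: float) -> float:
    """Expected number of wrong results for a workload at a given accuracy."""
    return tasks * (1.0 - accuracy)


# Assumption for illustration: the human completes 1,000 tasks in some period.
human_tasks = 1_000

# Human: 90% accuracy on the baseline workload.
human_errors = expected_errors(human_tasks, accuracy=0.90)

# AI: 99% accuracy, but 100 times the throughput in the same period.
ai_errors = expected_errors(human_tasks * 100, accuracy=0.99)

print(f"human errors: {human_errors:.0f}")
print(f"AI errors:    {ai_errors:.0f}")
print(f"ratio:        {ai_errors / human_errors:.1f}x")
```

The error rate drops by a factor of ten, but the volume of work grows by a factor of one hundred, so the absolute number of errors to find and fix still rises tenfold.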

This issue became increasingly prominent after I immersed myself in "vibe coding" almost around the clock: I had to constantly adjust my methods and slow down to give myself time to fix its mistakes.

This also applies to autonomous driving, AI diagnosis, and a range of other serious scenarios. With the introduction of AI, standards should also change. The safety we need is not just one standard deviation, but perhaps three to five or even more.
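To give a rough sense of what moving from one standard deviation to three or five would mean, here is a small sketch. It assumes outcomes follow a normal distribution, which the article does not claim; the function name `two_sided_tail` is hypothetical and the numbers are only an illustration of scale.

```python
import math


def two_sided_tail(k: float) -> float:
    """Probability that a standard normal value falls more than k
    standard deviations from the mean (assumes normality)."""
    return math.erfc(k / math.sqrt(2.0))


for k in (1, 3, 5):
    # Share of outcomes outside the +/- k sigma band.
    print(f"{k} sigma: {two_sided_tail(k):.2e} of outcomes fall outside")
```

Under that assumption, roughly a third of outcomes fall outside one standard deviation, while at five standard deviations the share is below one in a million, which is why the higher bar is so much harder to reach.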

I believe this standard will eventually be met, but it won't be tomorrow, nor in three to six months, nor even in a year or two. Until that higher standard is reached, we are in for a painful period.

Is This a Negative for Computing Power?

To some extent, yes. However, in an environment where market expectations have already been lowered significantly, some sentiments have become excessively pessimistic.

Every major breakthrough in information technology results from new algorithms layered on top of scale effects. Algorithms are a matter of luck and discovery, but scale always rises in steps. In this process, the best hardware always faces a supply shortage rather than a demand shortage. When I was cautious about NV (NVIDIA) in the second half of last year, I said it was facing technical hurdles. Repeated delays shifted its product release cycles, and downstream customers adjusted their spending plans in response. That looks like a demand problem, but it is essentially a supply problem.

Yet, during the accumulation period needed to break through to the next stage, the best computing power remains a scarce commodity because it represents greater certainty and lower total cost of ownership and time cost.

It is about computing "capability," not just simple "compute power."

As the volatility from short-term external shocks stabilizes, the trend of divergence between the physical and virtual sectors may become clearer. Under the combined effects of an expected global real economy downturn, rising living costs, and model bottlenecks, there may be no trend-based opportunities for a while, only opportunities brought by price fluctuations. However, "infrastructure investment" intended for the next stage of breakthroughs will likely continue to provide higher certainty.

Efforts that improve "quality" rather than "quantity" still have the higher barrier to entry.