During a Deep Research task, the model searched for and cited a survey from Stack Overflow. Yes, that Stack Overflow: I recently saw an analysis claiming it is essentially "dead." Is its AI-related survey still credible?

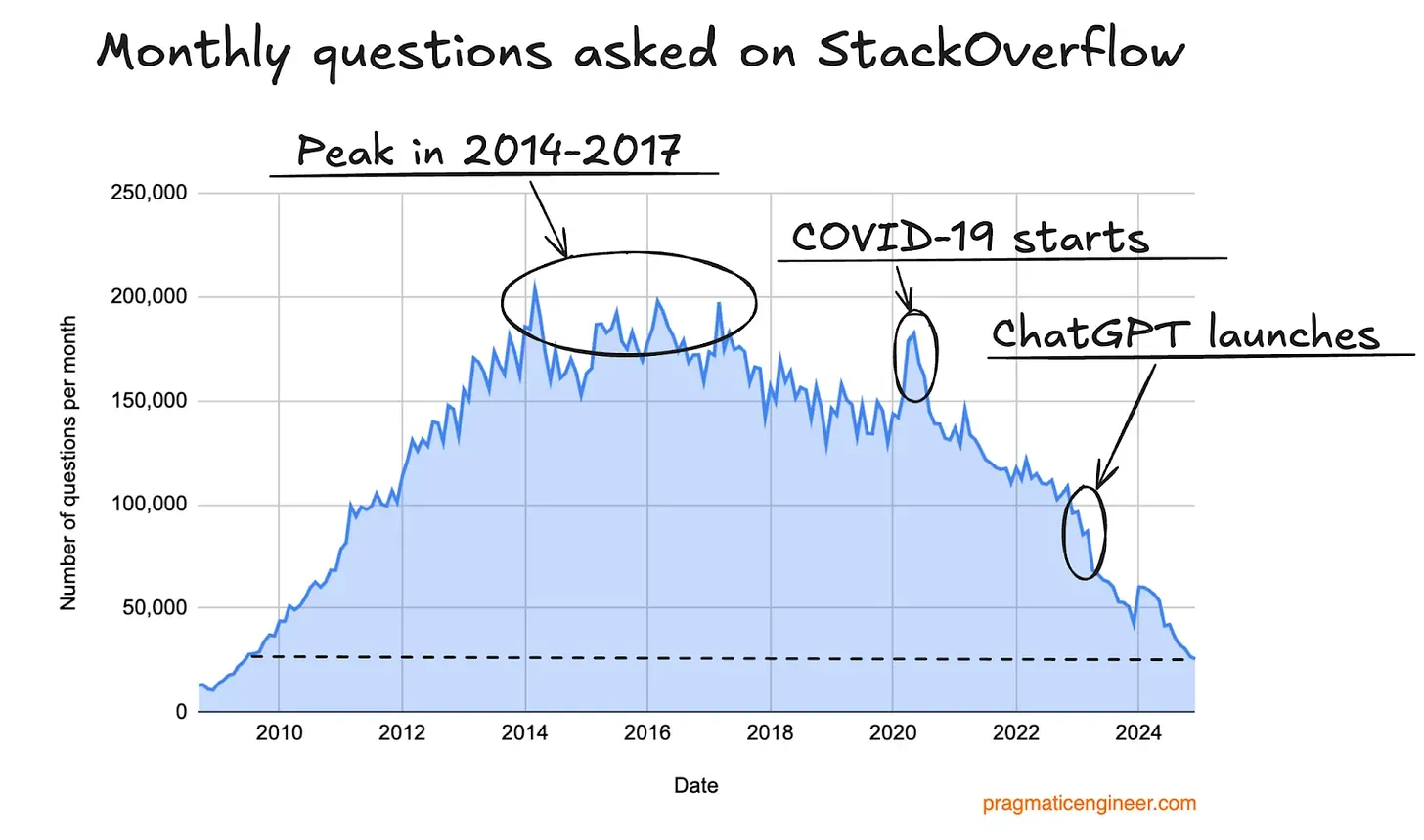

This won't be an article reminiscing about the era when every problem could be solved on Stack Overflow. So I'll gloss over its past and present and post only one screenshot from that "Stack Overflow is dead" post: the one showing the number of questions.

This image lets me propose a hypothesis: the "programmers" in the survey statistics that follow are probably highly experienced, or even older, and sit on the right half of the smile curve. The survey's representativeness needs to be judged with that in mind.

First, the source: this is the Stack Overflow 2025 Annual Survey (https://survey.stackoverflow.co/2025). The survey is conducted every year, and while its representativeness has clearly declined over time, it remains significant: the statistics come from questionnaires answered by over 49,000 programmers across 177 countries and regions.

It covers many aspects, but I’ll focus on AI usage, starting with the general conclusions.

Clearly, these conclusions reflect the "right-skewed" user base discussed above (the sample is mostly long-term programmers). The demographic data on Stack Overflow’s official site also clearly illustrates this.

Why emphasize this? We can clearly see that in this booming year for AI Coding, while adoption rates have risen, trust has decreased, and distrust has significantly increased.

This indicates that relatively senior programmers began using AI coding on a large scale as early as last year, with the release of Claude 3.5 in June as the "inflection point," and were then in a honeymoon phase. This year's rise in usage combined with a drop in trust doesn't mean the models have gotten worse; rather, it suggests that this group has begun applying models to a much wider and more complex range of real tasks.

Their biggest frustrations with the models are that the output looks correct but has many small issues, and that debugging it takes more time (I've been vocal about the former, and I admit the latter, though I've recently realized it stems from improper methods, which I'll discuss later).

The data illustrates another point: looking at the 2024 distribution of respondents' experience below, although the time brackets differ, it's clear that 2025 respondents are indeed "older" than those in 2024. So a bold guess about this year's AI coding craze is that the new user penetration comes mainly from "younger" programmers and non-programmers.

Another discrepancy with our "intuition" is IDE usage: Cursor's adoption rate is significantly lower than I expected, and likely lower than it would be in a larger sample of users. This raises another question, though: are more experienced programmers more "stubborn"?

There are many other interesting details in this survey that I won't list one by one. I encourage those interested to visit https://survey.stackoverflow.co/2025/. When I started coding, the best resources were the heavy "Language Reference" volumes on my desk. Later, during my period of intensive Python use, Stack Overflow helped me solve many problems. While the tide of time is irreversible, it might be interesting and rewarding for more people to take a look at the survey, or even to flip through the data from previous years.

Returning to the beginning: if Deep Research hadn't found this material for me, I would have almost forgotten that the annual survey exists. In fact, I never used to care (who cares what others are doing unless they're writing an article?). The purpose of this piece isn't even to discuss the survey's conclusions; it's that they happened to collide with questions that have been on my mind for a while.

The question is: if we begin to treat current AI as "toP" (to Professionals), where does the problem lie?

I am surrounded by two types of professionals: analysts and programmers. I also know many people like me who have begun using AI extensively in their daily "work."

However, when I deliberately gathered their feedback, I heard many conclusions similar to those in the survey statistics: they use it more and more, yet increasingly feel that "AI is useless" or even a waste of time. It is the frustration of something that "looks right but has many bugs."

I understand what they are saying. Programmers always think AI code isn't as good as what they write themselves and wastes debugging time. Analysts feel the conclusions AI provides are useless—they look complete and professional, but what professional only looks at that?

Perhaps being "conservative" is always right. But more often than not, just like Stack Overflow, while you are still seriously and methodically doing things the old way and looking down on "youngsters," the era has already wrapped you in a "cocoon," leaving you to wither away.

Within this "cocoon" formed by me and my peers, I am confident that I am among the most "rebellious" and the heaviest users of AI. I acknowledge all of the models' shortcomings, but I also see their unimaginable speed of progress. I acknowledge every flaw my colleagues and partners complain about, but I also know it's more likely that we are using the models the wrong way.

We always compare the conclusions and judgments in AI-generated reports with our own experience. But where does our experience come from? It is less an accumulation of time than a distillation of data, and data is clearly AI's greatest advantage! We can still say there is a lot of "private domain" data AI cannot access, but when even core content from the Alaska Summit can be leaked, how much data is truly "private"? Only the data inside ever-smaller "cocoons."

We always say AI code has many bugs, security issues, logical errors in execution, and low efficiency. But think back to a year ago—we were excited that it could generate interactive web pages. Even earlier, we found it "incredible" that it could write a single function. Today, Claude is truly "taking over" our projects!

If a model can organize and analyze all market information from the previous night in half an hour, then what we should consider is how to make it generate output that fits each of our own habits, rather than asking, "What's the use?"

If a model can complete a basically functional software system in a day or two, our primary consideration should always be "is the requirement correct," rather than whether it's robust enough or efficient (if you haven't experienced configuring the files below or optimizing the system's core 640K memory, talking about execution efficiency is a bit laughable).

If AI can build a system in a day or two, and if that timeframe foreseeably shrinks to half a day or an hour next year, then all those ideas that "software engineering" and "The Mythical Man-Month" have long "brainwashed" into us should be discarded.

Only goals, no software. I’ll say it again: only goals, no software.

Although the above sentence might still seem like a fantasy at this point in time, I have indeed always been a bit "rebellious."

I am lucky to be a programmer and an analyst; I am also fortunate that I am not just a programmer or an analyst.

The AI we face is a partner we cannot fully understand, yet one we can walk alongside. Its direction of evolution will increasingly diverge from what we want. What needs to change is us—and those disciplined "bad habits."