I had modest expectations for the previously announced Agent Builder feature at OpenAI DevDay, so I checked the announcements as soon as I woke up (I'm getting old and can't stay up for the livestream anymore).

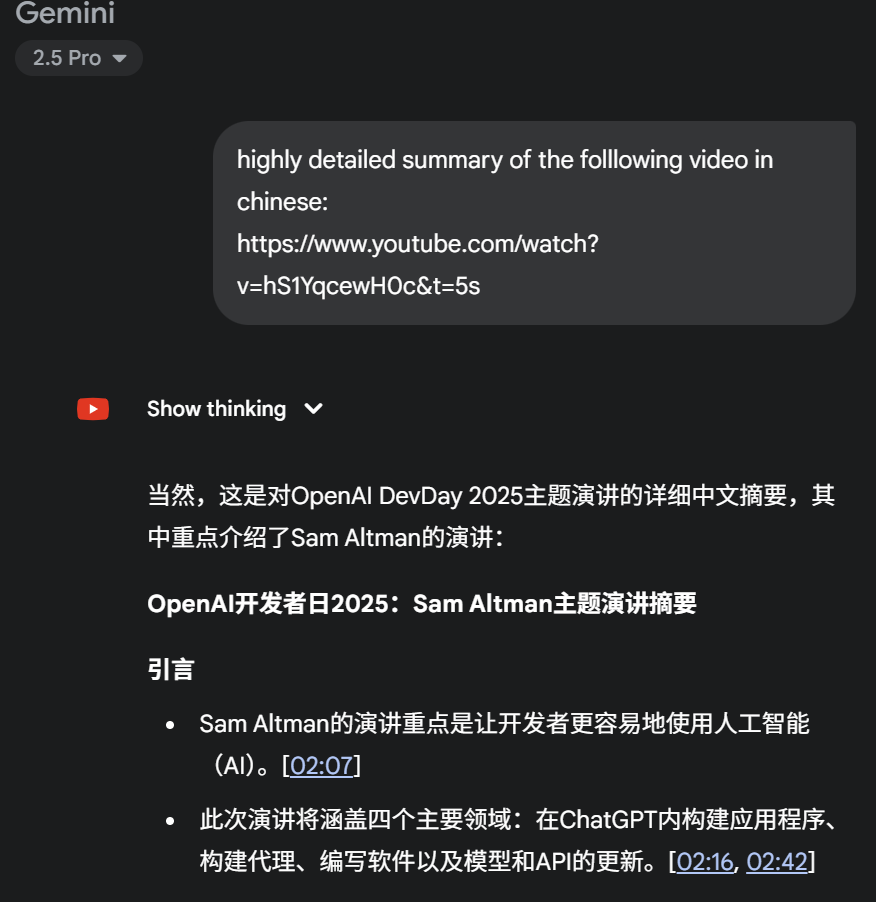

Of course, missing the livestream is no loss at all, and I don't need to watch the video replay; Gemini is enough. Here is Gemini's summary:

OpenAI DevDay 2025: Sam Altman Keynote Summary

Introduction

Sam Altman's keynote focused on making artificial intelligence (AI) easier for developers to use. [02:07]

The talk covers four main areas: building applications within ChatGPT, building agents, writing software, and updates to models and APIs. [02:16, 02:42]

Applications in ChatGPT

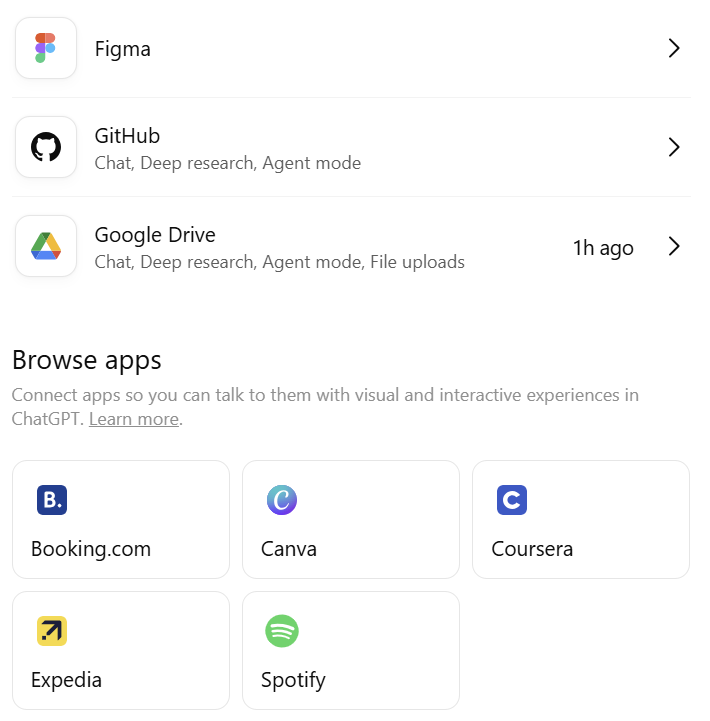

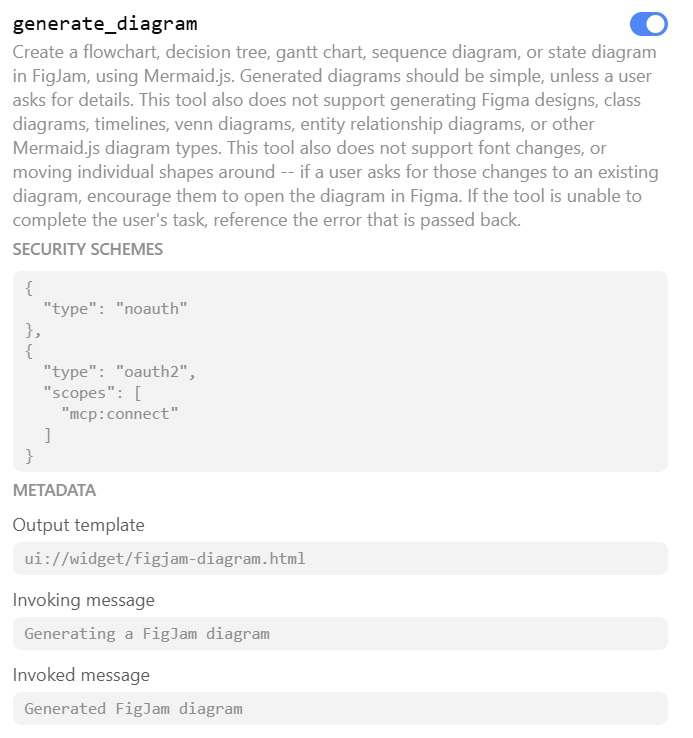

OpenAI is launching the Apps SDK, which allows developers to build real applications within ChatGPT. [03:47]

These apps will be interactive, adaptive, and personalized, allowing users to chat with them. [03:40]

The SDK is built on the open standard MCP, giving developers full control over their backend logic and frontend UI. [04:02]

Apps built with the Apps SDK can reach hundreds of millions of ChatGPT users, giving developers a significant distribution platform for their products. [04:09]

Demos showed how apps like Figma, Spotify, Coursera, Canva, and Zillow are used within ChatGPT, showcasing diverse functionalities. [04:55, 11:26]

Apps will be discovered via direct name search and recommendations during conversations. [04:50, 05:08]

Building Agents

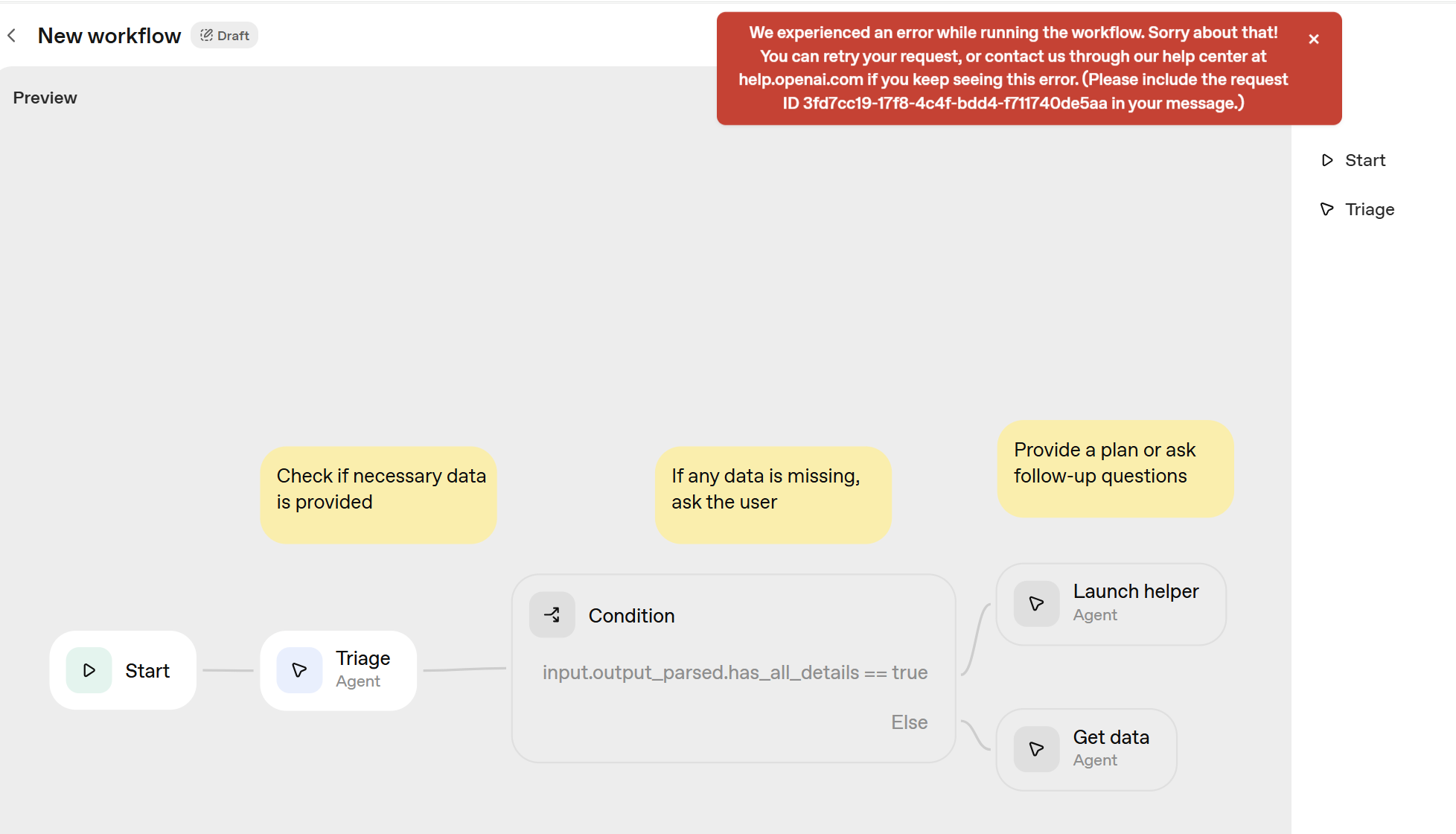

To simplify agent building, OpenAI introduced AgentKit, a complete set of building blocks to help move agents from prototype to production. [16:52]

Core features of AgentKit include:

- Agent Builder: A visual canvas for building agents, allowing for quick design of logical steps and testing of workflows. [17:17]

- ChatKit: A simple embeddable chat interface that brings the chat experience into the developer's own application. [17:36]

- Evals for Agents: New features specifically for measuring agent performance, including trace grading, datasets, and automated prompt optimization. [17:58]

Companies like Albertsons and HubSpot are already using AgentKit to improve their operations and customer service. [18:42, 19:38]

A live demo showed how to use AgentKit to build and deploy an agent on the DevDay website in eight minutes. [21:17]

Writing Software

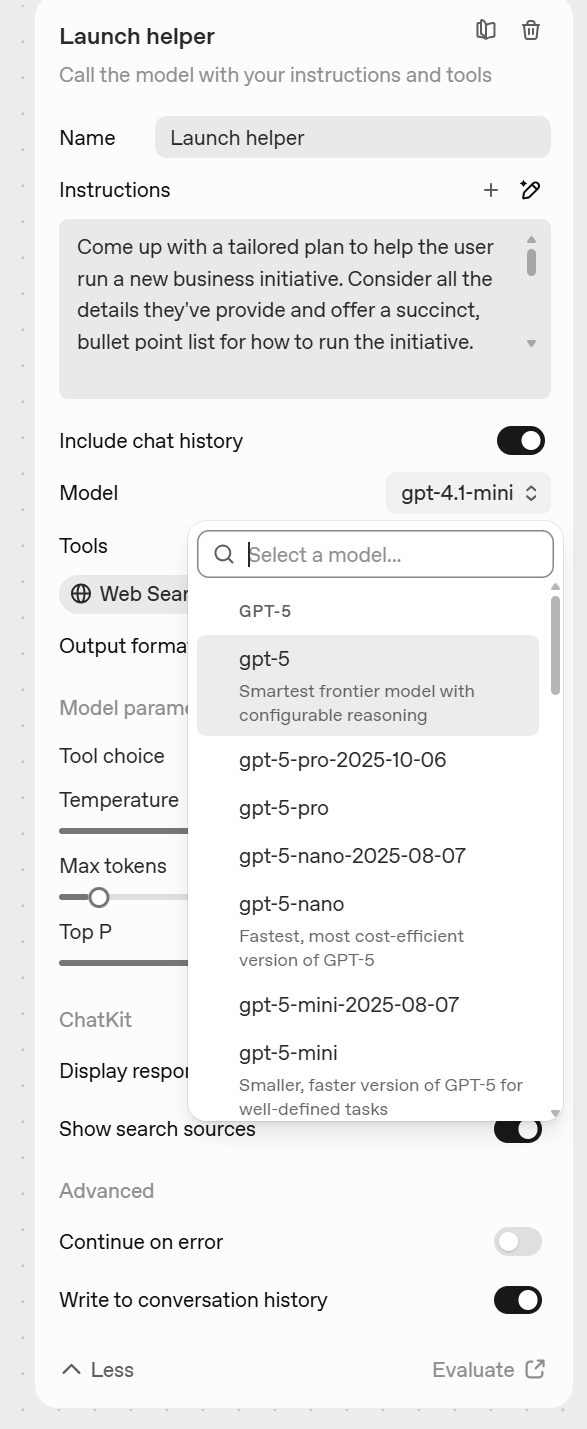

Codex is OpenAI's software engineering agent, designed to work alongside developers to accelerate software creation. [31:18]

Codex is now available across various coding environments like IDEs, terminals, GitHub, and the cloud, connected via ChatGPT accounts. [31:35]

It runs on the new GPT-5-Codex model, which is specifically trained for tasks like code refactoring and code review. [31:51]

New Codex features include Slack integration, the Codex SDK, and new management tools and reporting. [33:15, 33:34]

A live demo showed how to use the new Codex and the API to turn anything around you into working software, including controlling cameras and lighting systems. [34:11]

Model and API Updates

GPT-5 Pro is now available to all developers via API, providing higher accuracy and reasoning depth for complex tasks in fields like finance, law, and healthcare. [46:41, 46:56]

Sora 2 is also now available in preview via API, allowing developers to integrate stunning Sora 2-powered video outputs directly into their own applications. [47:47, 47:54]

Advancements in Sora 2 include enhanced controllability, the ability to pair sound with visuals, and the integration of real-world elements into generated videos. [48:00, 48:45]

Partners like Mattel are already using the Sora 2 API to bring product ideas to life faster. [49:55, 50:02]

Conclusion

Sam Altman's speech emphasized OpenAI's commitment to providing developers with tools and platforms to build the future of AI. [51:04]

Announcements at this DevDay, including the Apps SDK, AgentKit, Codex, and updated models, aim to support developers in creating the next generation of AI applications and experiences. [51:14]

Altman expressed excitement for the future of AI and encouraged developers to use these new tools to turn their ideas into reality. [51:38]

Pictures prove the truth.

If you'd rather not read that much text, no problem: an SVG version is ready too.

The gap is already obvious: Gemini can summarize the video itself, whereas GPT can only read the text of blog pages.

Yes, I wanted to try Agent Builder immediately, as I've been "advocating" for this kind of drag-and-drop workflow for about two years now.

Although I actually use these tools very little now, my slight anticipation vanished as soon as I saw that this was a feature of the so-called Developer platform. I had a very bad feeling: OpenAI will bill it through API usage.

Yes, it seems it's all about adding more so-called features to drive API revenue and enterprise customer revenue.

So, as the screenshot above shows, it didn't run successfully. I didn't even bother to investigate whether that was because I used a developer account with no "balance" or because OpenAI's own product wasn't tuned properly before launch. To be fair, I used their example directly.

Of course, I took a closer look at the contents. Users of n8n or Dify don't need to switch. This product is not just a little behind Google's Opal; it's two eras behind. As for developer-facing offerings, the Gemini product line is comprehensive and comes with large daily free quotas. Tell me OpenAI can "disrupt" Google? With this?

I introduced Opal in my article covering all Gemini applications.

It's simple logic: if users can build workflows themselves and still have to pay for API calls, then n8n and similar tools are obviously better choices, because they can use models from any provider and integrate numerous third-party services.

Let me say it again: OpenAI put on this launch just to sell APIs and make money.

So, skip this feature—it's something I could never possibly use.

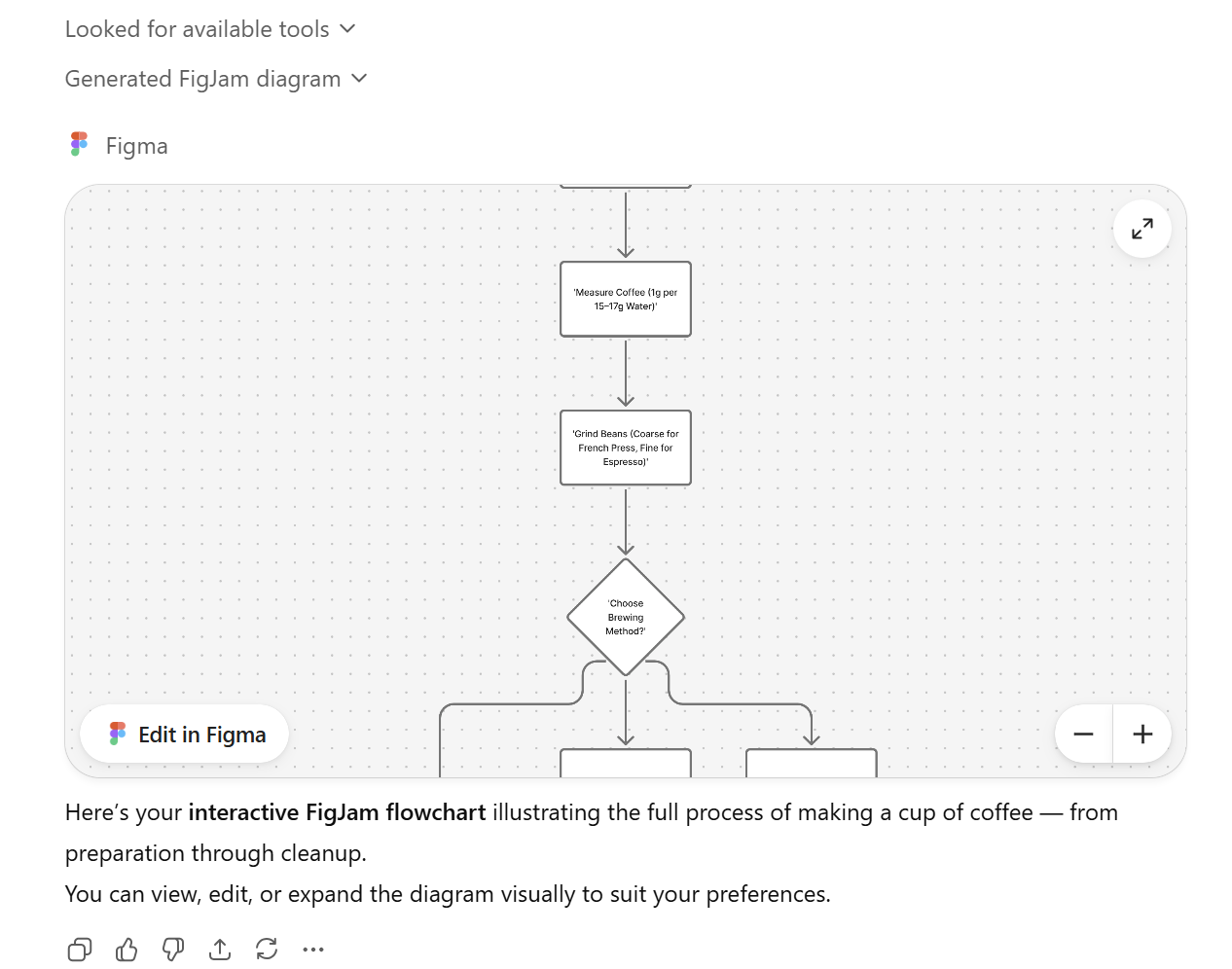

However, there were small highlights in the launch, such as the Apps feature within ChatGPT. See the screenshot below. Of course, the selection is currently extremely limited: only six apps, including the Figma one I've already set up.

Among the methods the Figma app supports, almost all are "get" types for retrieving information; only one is "generate."

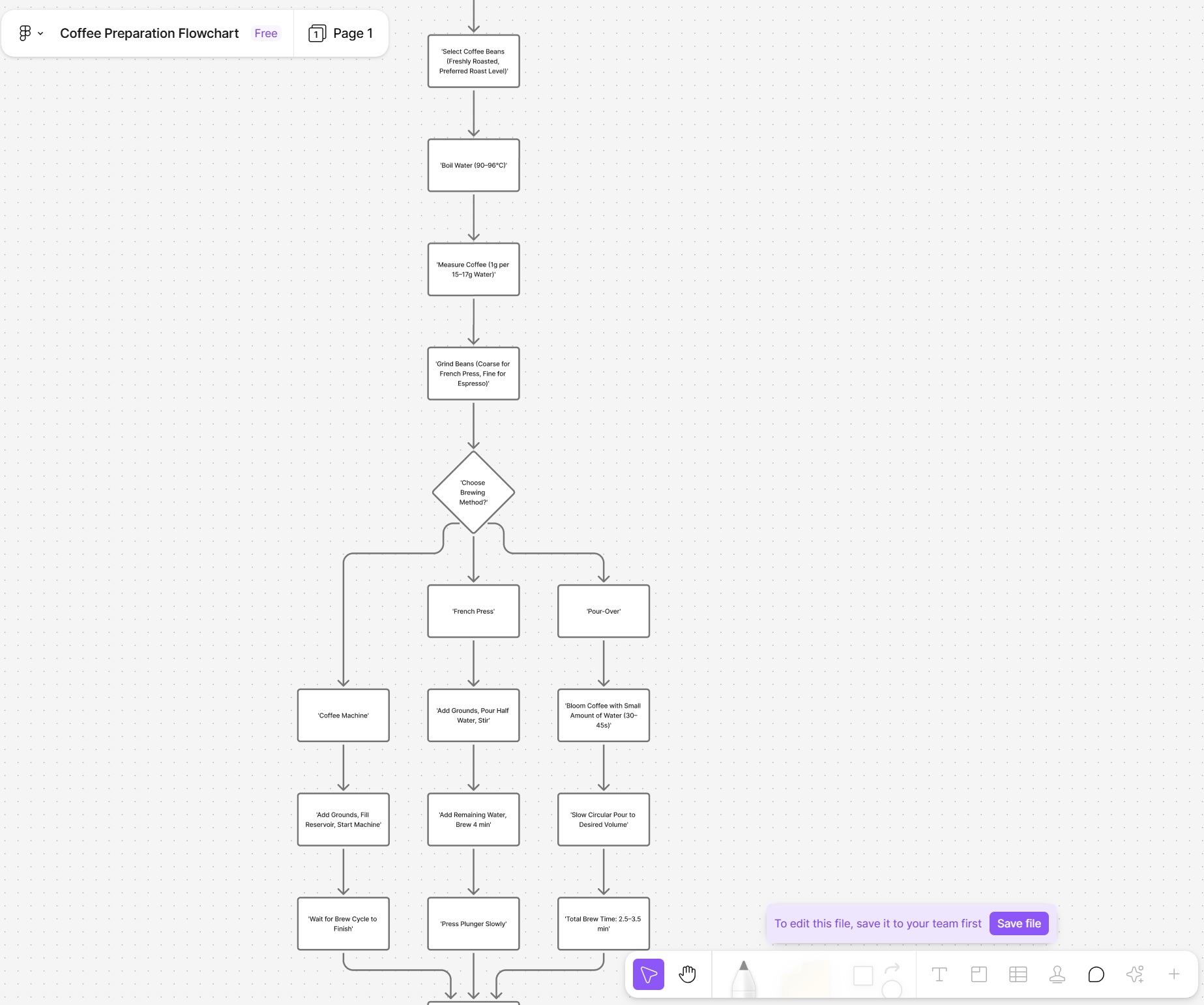

Actually, it's just an MCP server. But since it's an official integration, let's try it: generate a flowchart of the coffee-making process.

Yes, even if you open it in Figma, this is what it looks like.

Just as its MCP definition says, it only supports generation based on mermaid.js, nothing more.
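To make "generation based on mermaid.js" concrete, here is a minimal sketch of the kind of Mermaid source such a tool works from. The step names and the `to_mermaid` helper are my own assumptions for illustration; this is not what Figma's integration actually sent or received.

```python
def to_mermaid(steps, direction="TD"):
    """Build Mermaid flowchart source that chains the given steps in order."""
    lines = [f"flowchart {direction}"]
    # Declare one node per step, e.g. S0["Grind beans"].
    for i, label in enumerate(steps):
        lines.append(f'    S{i}["{label}"]')
    # Connect each step to the next one with an arrow.
    for i in range(len(steps) - 1):
        lines.append(f"    S{i} --> S{i + 1}")
    return "\n".join(lines)


# Hypothetical coffee-making steps, chosen only for this example.
coffee_steps = ["Grind beans", "Heat water", "Brew", "Pour into cup"]
print(to_mermaid(coffee_steps))
```

Any Mermaid renderer can draw the resulting text as a top-down flowchart, which is why so many other tools already offer the same thing.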

By the way, Kimi could render mermaid.js directly in chat results when its first version was released. The Excalidraw plugin for Obsidian has supported mermaid.js input for automatic chart drawing for two years.

More recently, the workflow in the Starter Kit released by TLDraw can automatically draw flowcharts.

All of these were much earlier than OpenAI and provided a much better experience.

Okay, moving on from this feature too. I might still use it occasionally, since having it inside ChatGPT is convenient.

But if it's just based on MCP, well, anyone can do that.

To sum up in one sentence: I'm glad I didn't get up for the livestream; otherwise, I would have felt like my "intelligence was being insulted."

Actually, I was already holding my tongue yesterday about OpenAI's partnership with AMD. If the market wants to believe it, why should I resist?

But after today's release of this "pile," I'm basically certain that OpenAI is getting very, very desperate because it can't come up with anything new. Sora 2? Those who have used Veo 3 for a long time won't find it stunning. It's just another social media hype cycle. The purpose remains simple: get money.

OpenAI's models might still be up to par, but its reputation and user stickiness have dropped significantly. For code generation there is Claude, and for multimodal generation and fusion there is Gemini. As I keep repeating, the only thing of theirs I still use is ChatGPT-Agent, because right now they are willing to pour "compute" into getting the results right.

OpenAI bet too much on the Scaling Law. While seemingly drifting away from Microsoft (refer to my outlook from late last year), it is looking for "compute" and "money" everywhere. Contracts with Google and Broadcom, partnerships with NVIDIA, and now the partnership with AMD: what you see is not its "advantage," but bets placed everywhere. By now, very few people probably still believe it hasn't "overbooked."

Why is there an Agent Builder? Because the potential for ChatGPT paid subscriptions seems to have plateaued. Given such huge investments, it must quickly prove its commercialization potential and story. Hence the so-called Sora 2 challenging "TikTok," the "shopping" challenging Amazon, and today's Agent Builder intended to tap into massive API payment potential.

However, right now, when a leading model company thinks primarily about "commercialization" in everything it does—while understandable—it only loses reputation. Not to mention, it isn't leading at all.

A while ago, many friends were saying OpenAI increasingly looks like just a product company. I actually spoke up in its defense: GPT-5 is not bad, the ChatGPT-Agent experience is decent, Codex is okay, and the GPT-5-powered Copilot has seen a noticeable increase in recognition and usage among enterprise customers.

After today, I will no longer speak up for OpenAI, unless it gets back to being "down-to-earth" and building better models.

I am increasingly convinced that the current massive investment is a huge bubble.

However, I'm willing to refine this conclusion: if you don't consider OpenAI, AI has no bubble. It is an unethical company, though few companies are truly ethical.

To refine it further: this is a company that is currently "doing evil."

By the way, for OpenAI, this is a self-justification trap.