After discovering that Kimi OK Computer can take a document of roughly dozens of pages as input, I started a "mini challenge": give OK Computer one document at a time and have it generate an interactive site:

It finishes a website every time with only "one life" (a single attempt);

The aesthetic appeal is excellent, with theme-appropriate color schemes, background images, and interactive animations;

On content, however, it often "freestyles" a bit too much, adding many points that are related to the document but not actually in it;

There are still many small errors. In short: ambition far exceeding ability.

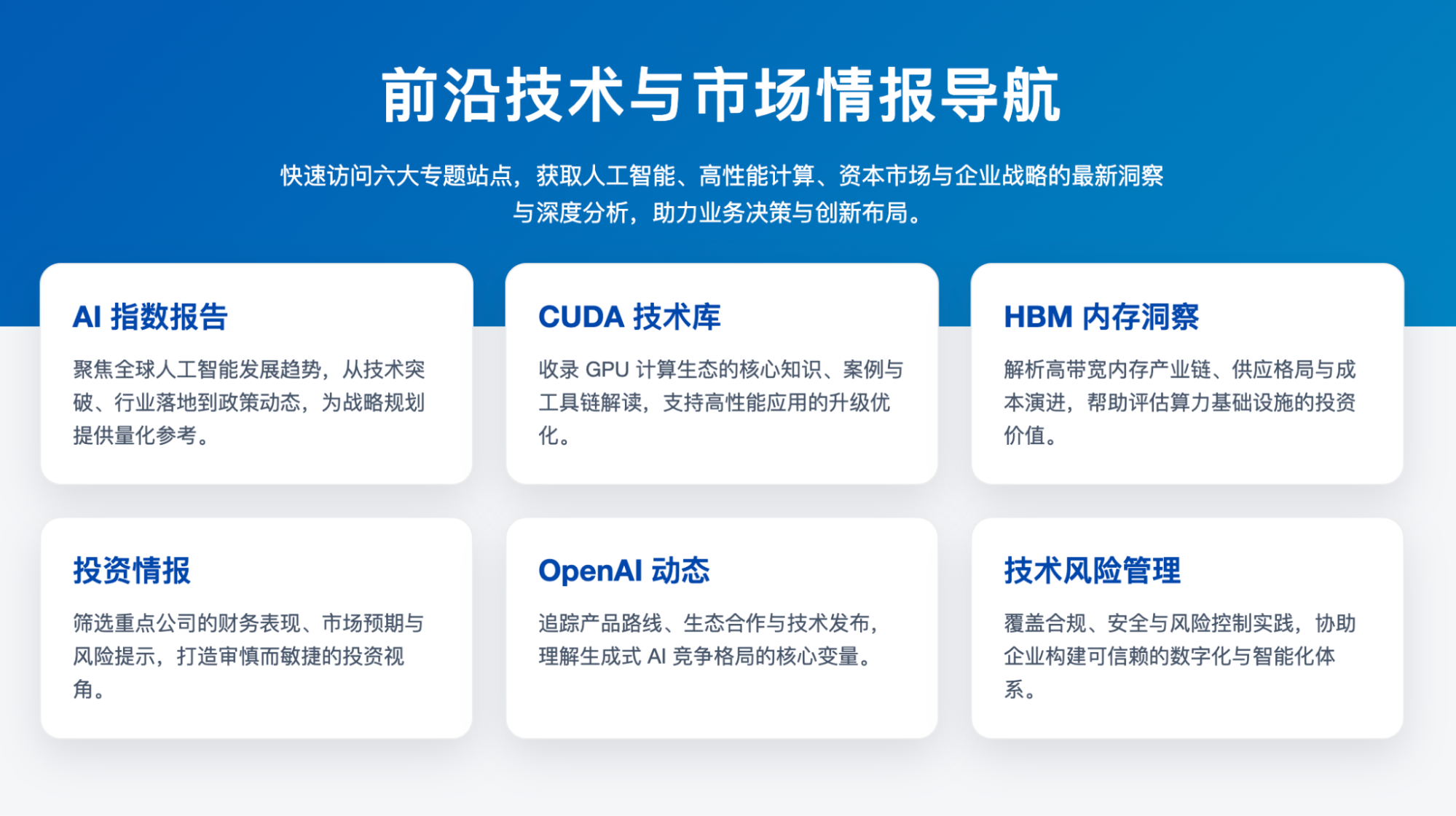

I used up my remaining usage for the month to create six pieces of content, then used Codex to generate a simple directory homepage and deployed it on Vercel:

I also recorded a short video:

Of course, the biggest issue with sites generated by OK Computer is that they are very hard to modify: it is difficult to get the AI to change them, and even harder for a human to edit them by hand. So just enjoy it for the novelty. That said, using it to make simple audiobooks that help children learn history or English should be quite useful.

The K2 model behind OK Computer naturally shares the same characteristics: the initial generation is decent, but making changes is difficult. So it clearly cannot yet serve as a reliable "vibe coding" model.

Recently, because I use it heavily and keep running into Claude Code's frustrating usage limits, I've had to use Codex more often.

Codex with GPT-5-codex-high is quite capable, and under the GPT Pro subscription I haven't hit any usage limits so far;

Codex "freestyles" far less than Claude Code (Claude-4.5), which means it needs manual input more often (the model stops to ask questions more frequently) and I constantly have to give it more details;

Therefore, in most scenarios, Claude Code is still more efficient, completes more of the task, and produces richer detail;

Of course, Codex collapses much of its output while it runs, so the screen looks more static, whereas Claude Code looks busier and feels more like "vibe coding".

PS: I actually started writing this article three days ago but got interrupted by other things. Since then, Anthropic has updated Claude Code's rate limit policy again in the last two days: the limits are lower overall, and the quality has noticeably worsened.

This is basically forcing me to stop using it. In contrast, Codex is still working diligently; it has been running for nearly two hours, since 8 PM.

So as long as the gap between models isn't enormous, switching models is always a way to get things done.

I know my behavior is rather that of an "early adopter," but as long as there is more than one top-tier model, selling tokens won't yield "obscene profits."

That said, while listening to an interview with an artist in my spare time today, I had a new insight: I think I misunderstood OpenAI and misread this rhythm. I had unconsciously kept a "financial mindset" of long-term optimism with short-term fluctuations. But in reality, for OpenAI, which is currently leveraging over a trillion dollars, it is either success or dragging everyone down together in "destruction"; there seems to be no middle ground left.