I've been using GPT-5.2 for a day and, naturally, I've run some comparisons, mainly by re-running cases I frequently use for testing.

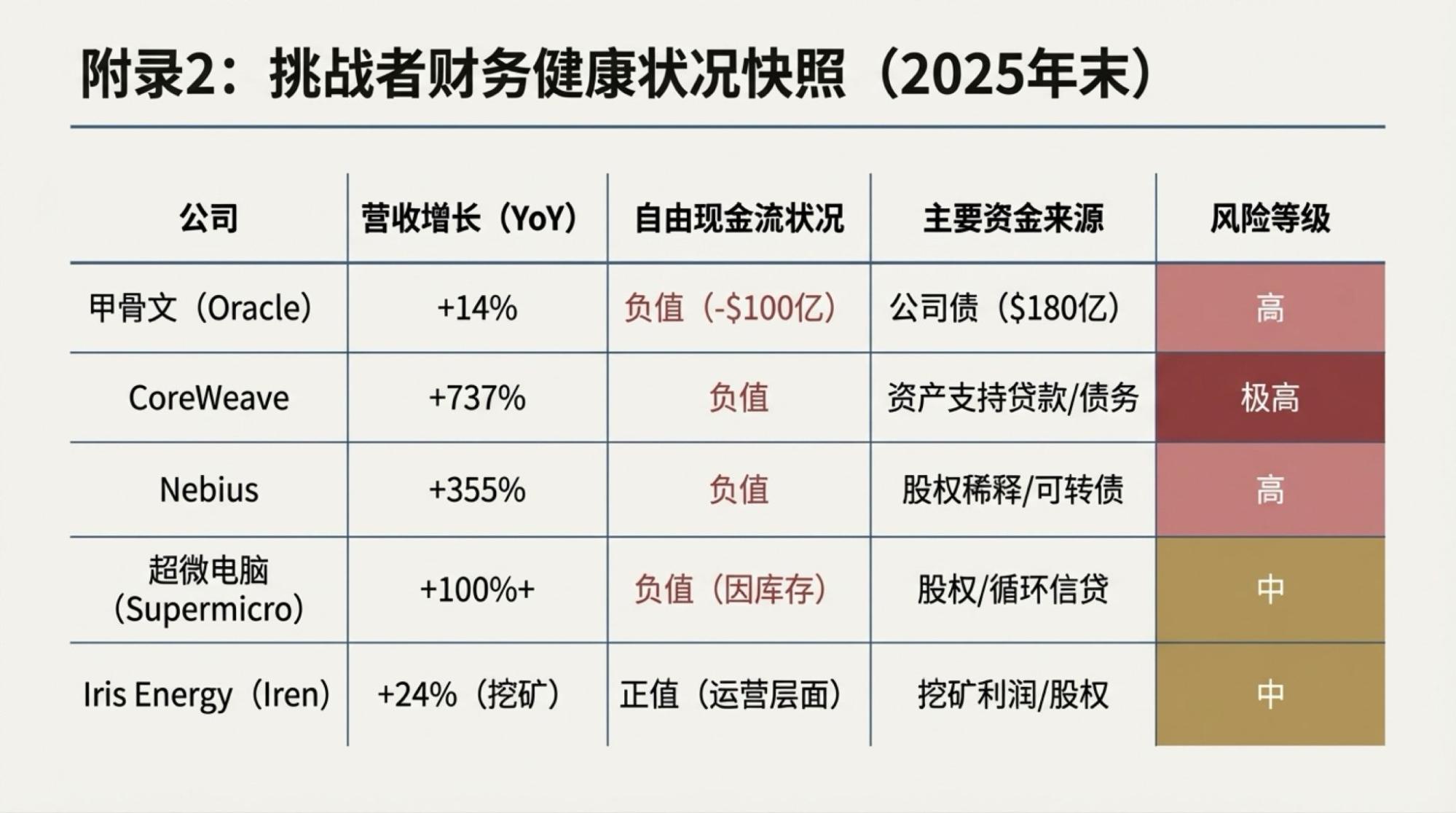

For example, I probably ran the comparison in the image below back in the GPT-5.1 era. The progress over 5.1 is obvious, though the style remains quite consistent. The output has become significantly flashier, especially in its use of chart controls, which is truly impressive.

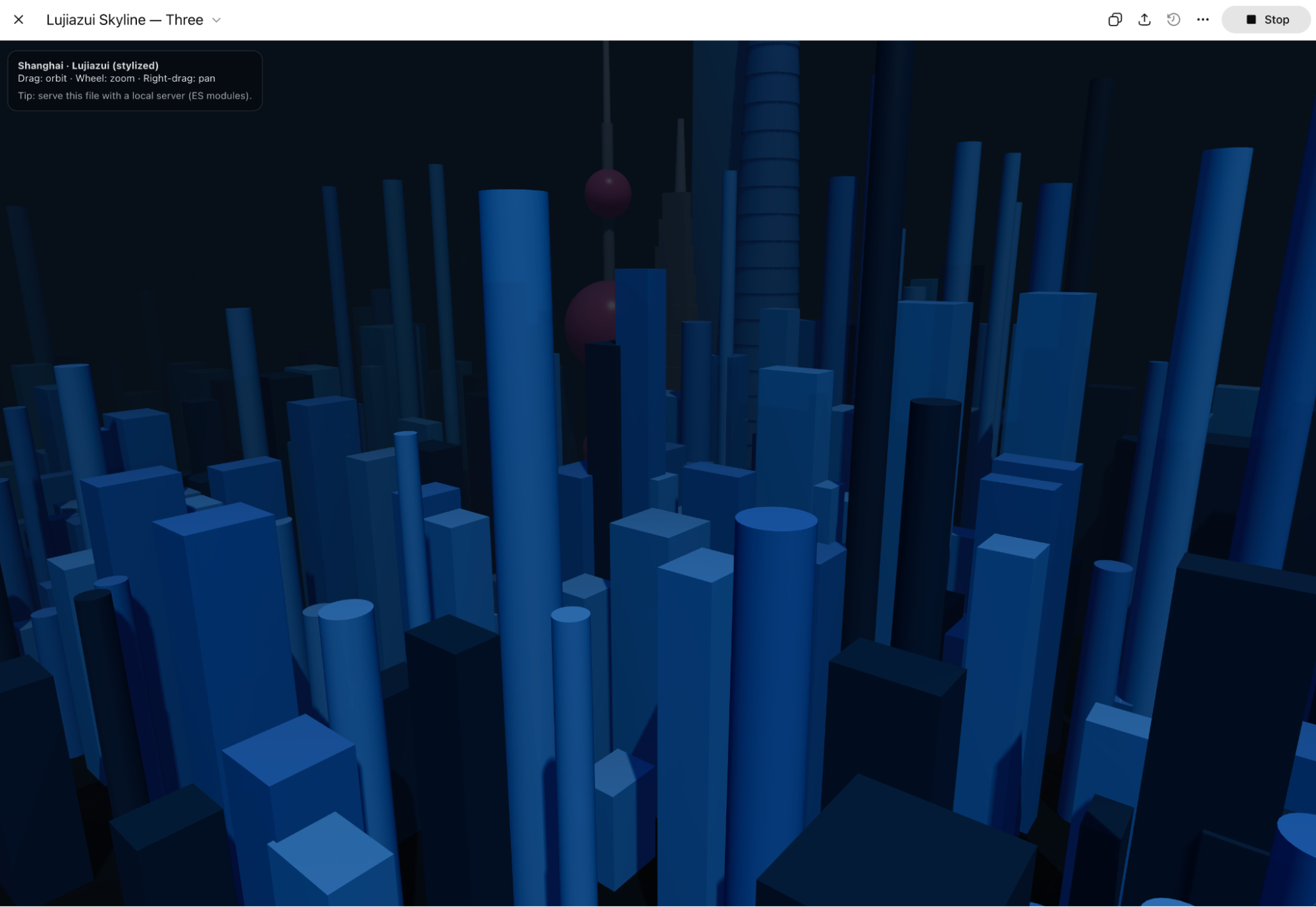

The Three.js model of Lujiazui has also improved a lot, and it now even includes changing shadows. The only drawback is that the code overestimates what Chrome can handle; the animation runs too slowly.

As for performance in Codex, it feels much faster; or rather, the execution efficiency is much higher.

OpenAI's promotion hasn't been overly exaggerated; the progress in results is clear, but something feels off.

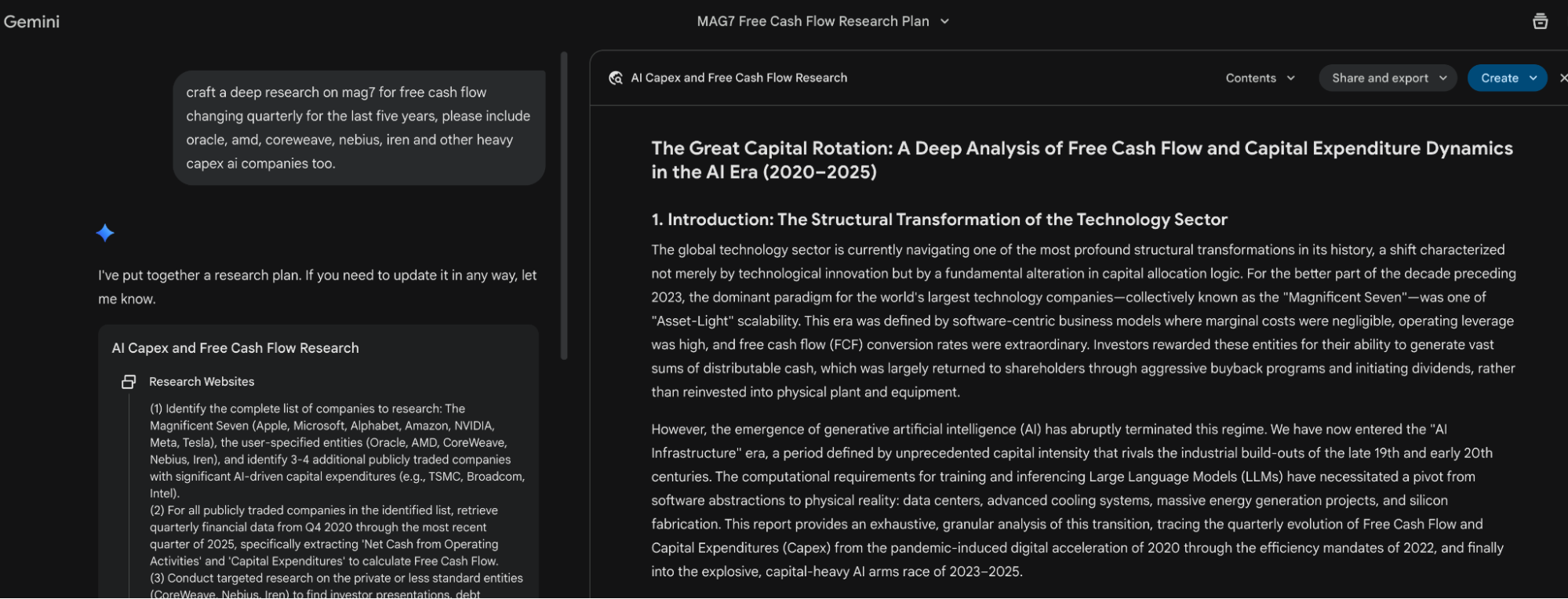

Compared to the Gemini ecosystem, GPT feels very thin. Nowadays, Gemini can easily complete a high-quality deep research report (the newly released Deep Research Agent shows significant improvements). From there, whether you output slides directly in Gemini, generate a video in one click via Vids, or use Nano Banana Pro in NotebookLM to generate illustrated slides, you get a complete and effective product loop. You can also do what I do: quickly integrate Gemini-3-Pro, Nano Banana, TTS, and other models in the Build app of AI Studio to achieve automated one-click video output.

Going from multimodal input to a finished, product-level output is the combination of models and tools that is more meaningful for users. A better user experience was once the greatest advantage of the GPT series, but as it is forced to optimize for coding and focuses only on this single ability, it has gradually begun to "abandon" a larger user base. Code generation can solve a vast number of problems, but the majority of users will not get used to it. For most users, who lack coding ability and experience, a website is not a format they can easily manage.

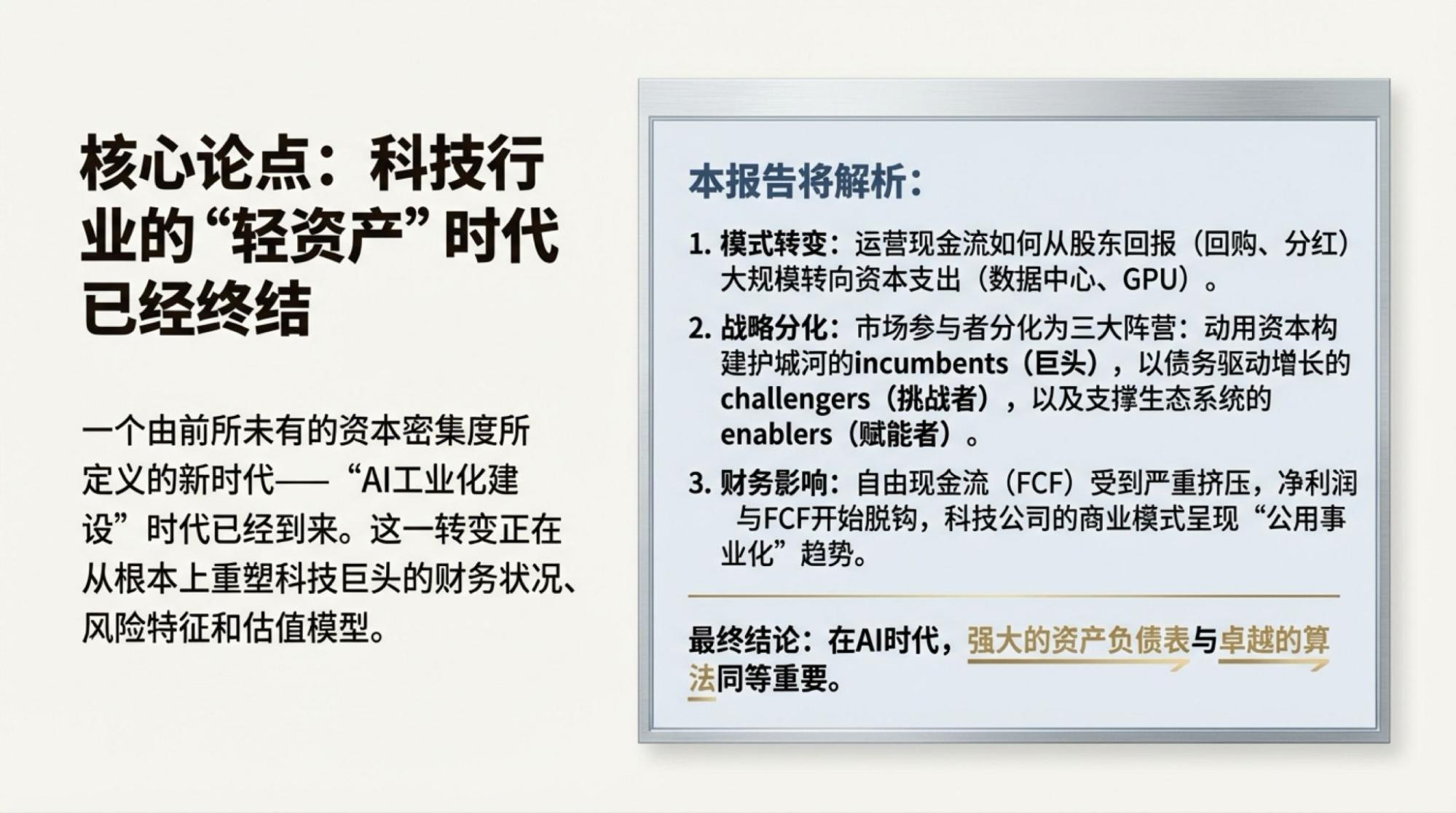

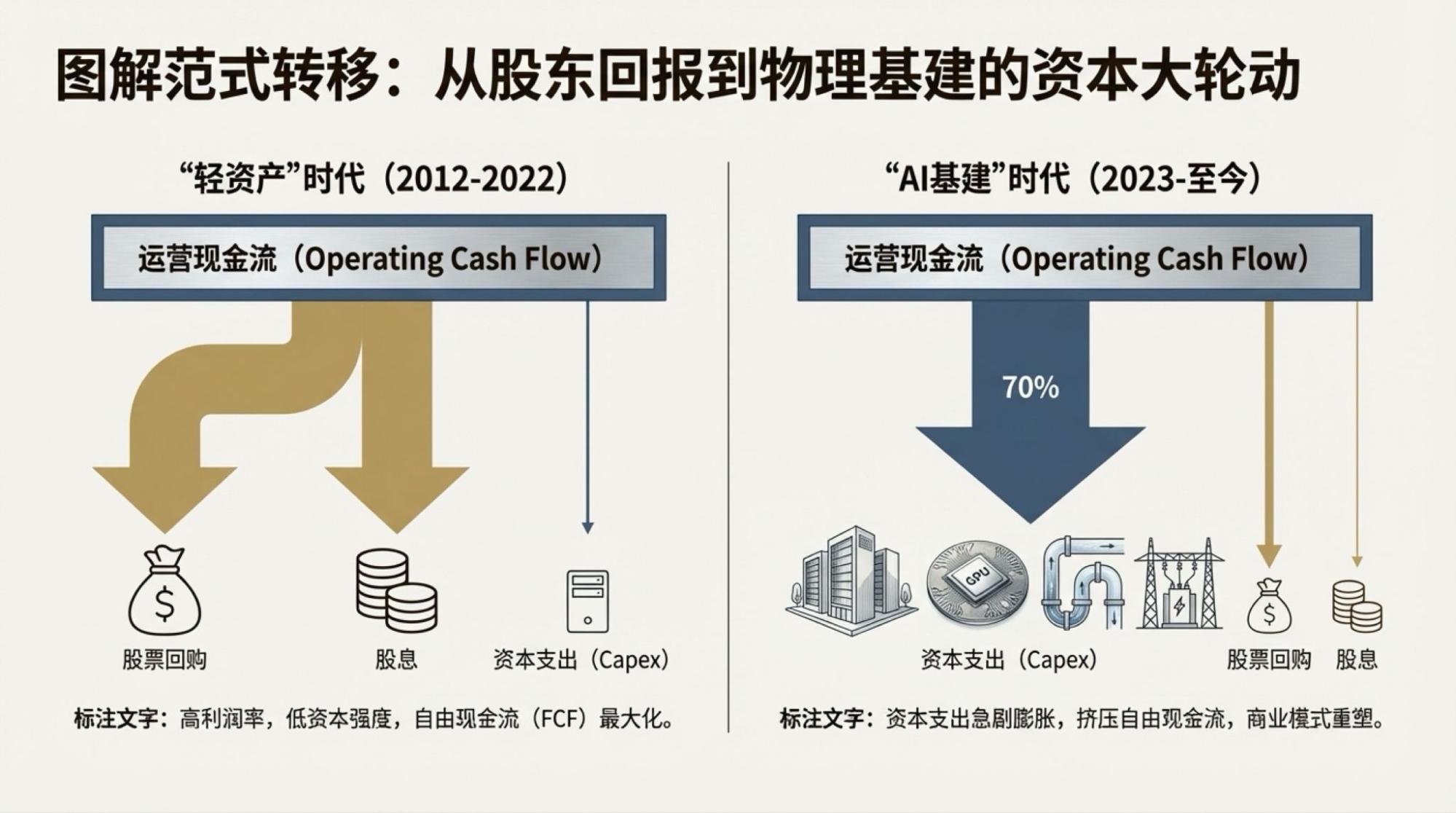

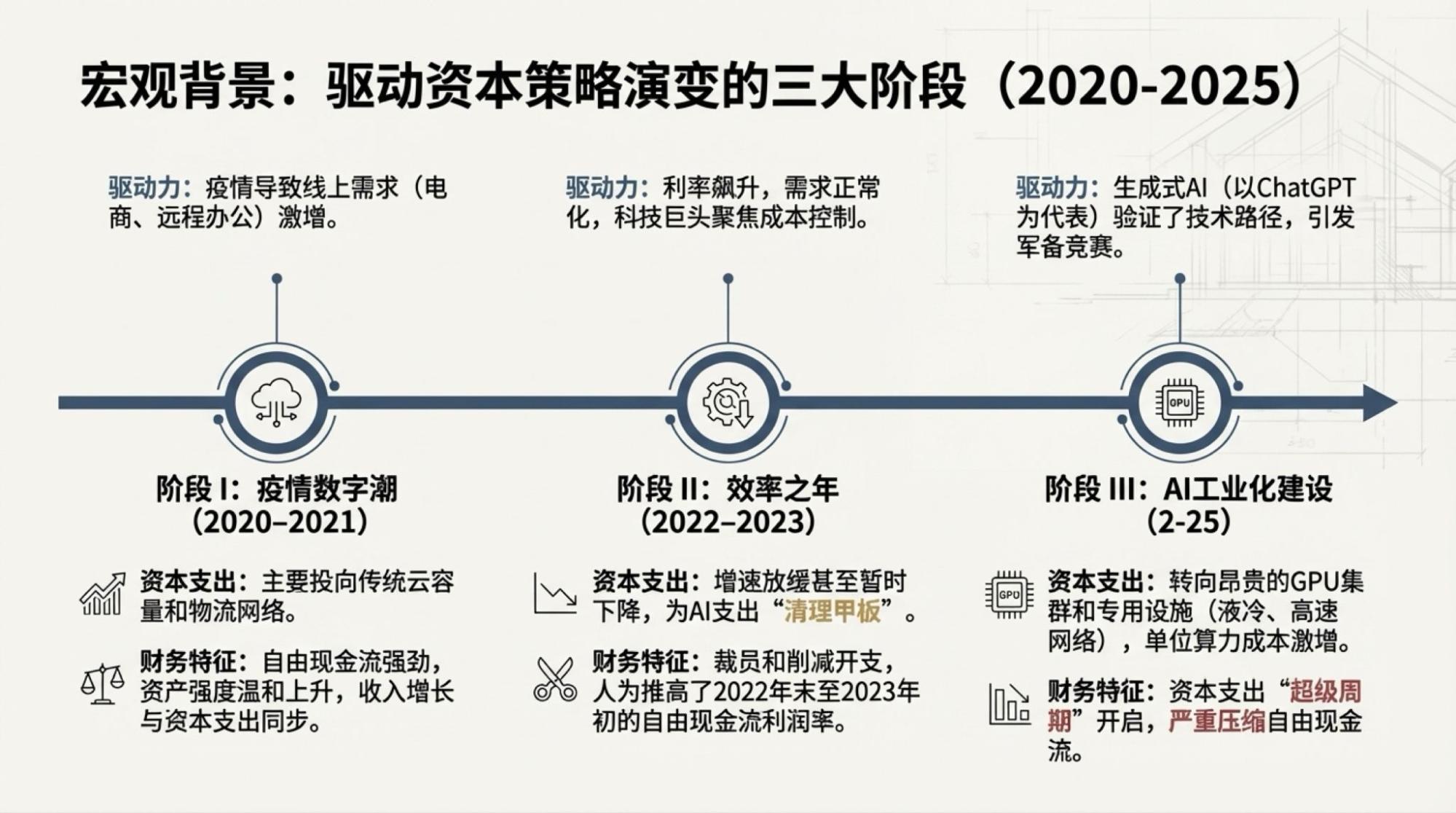

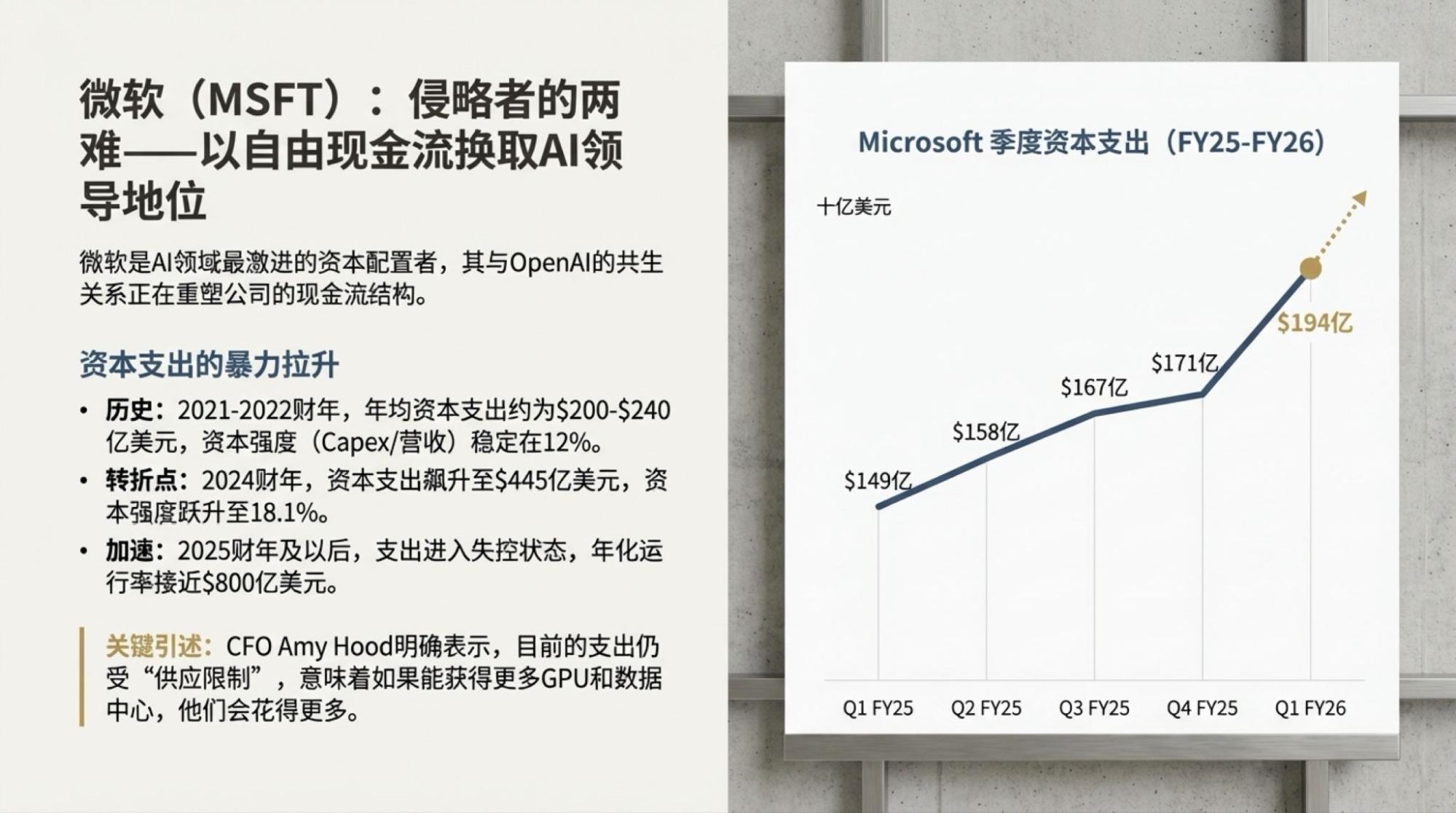

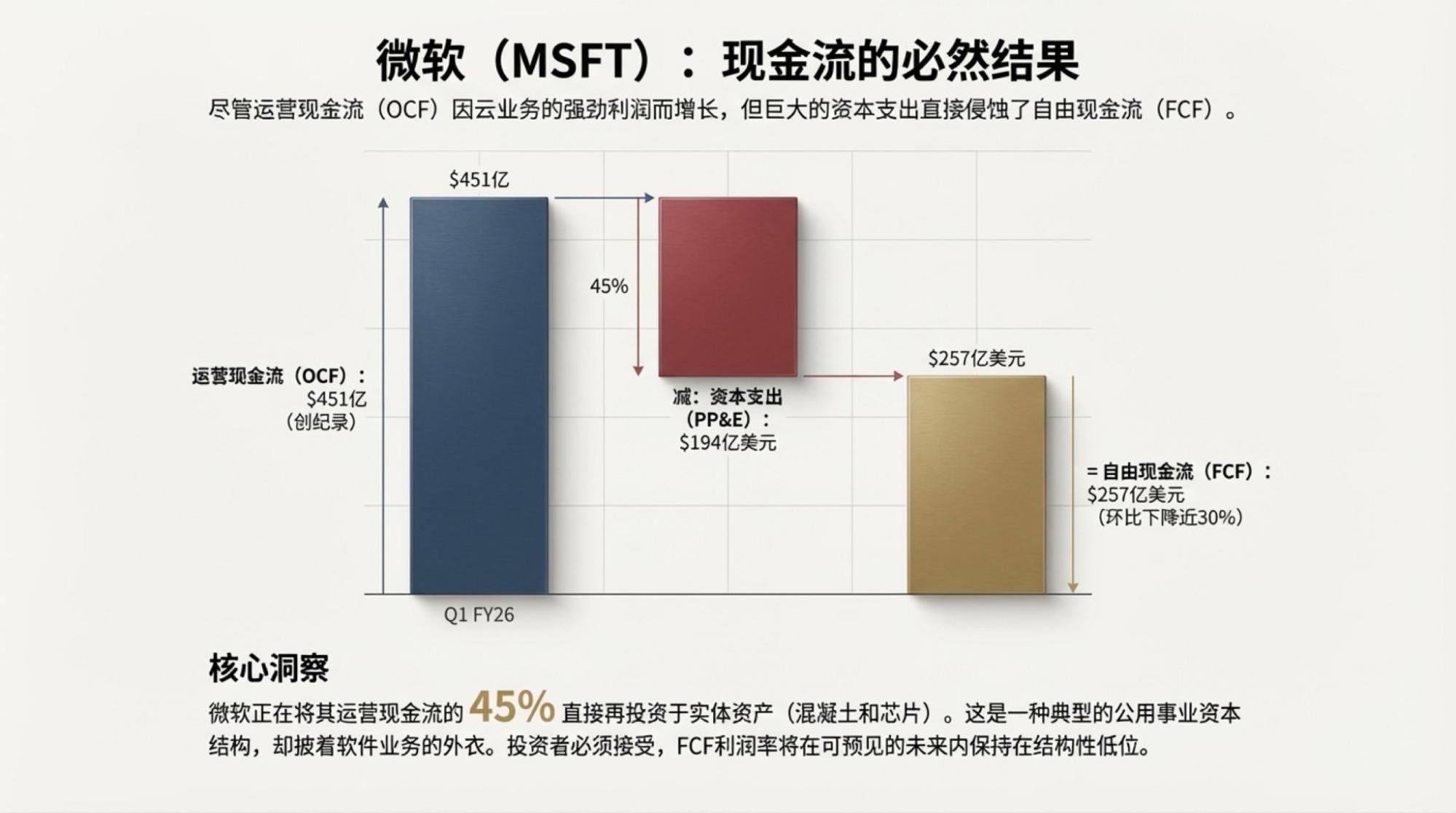

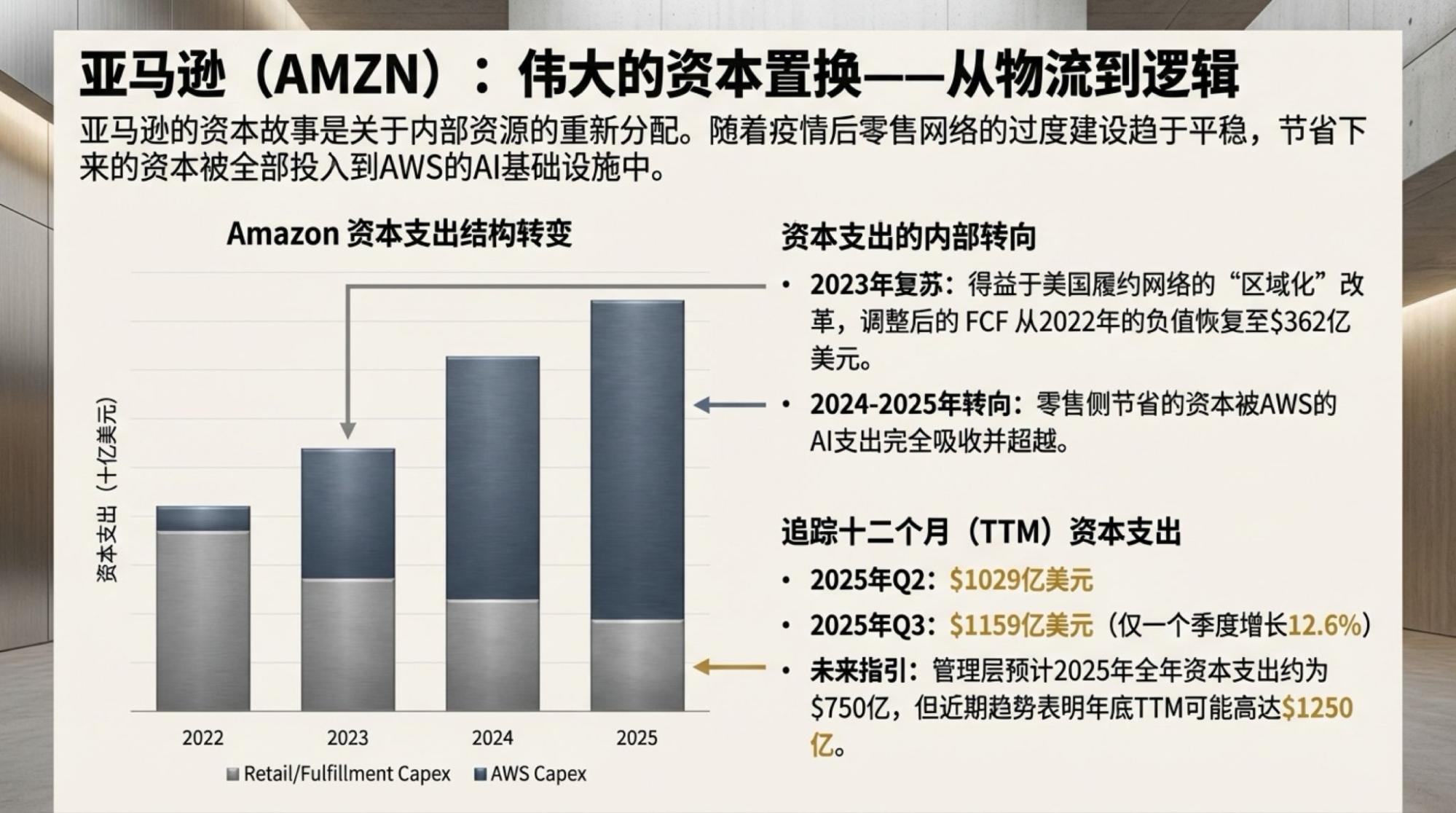

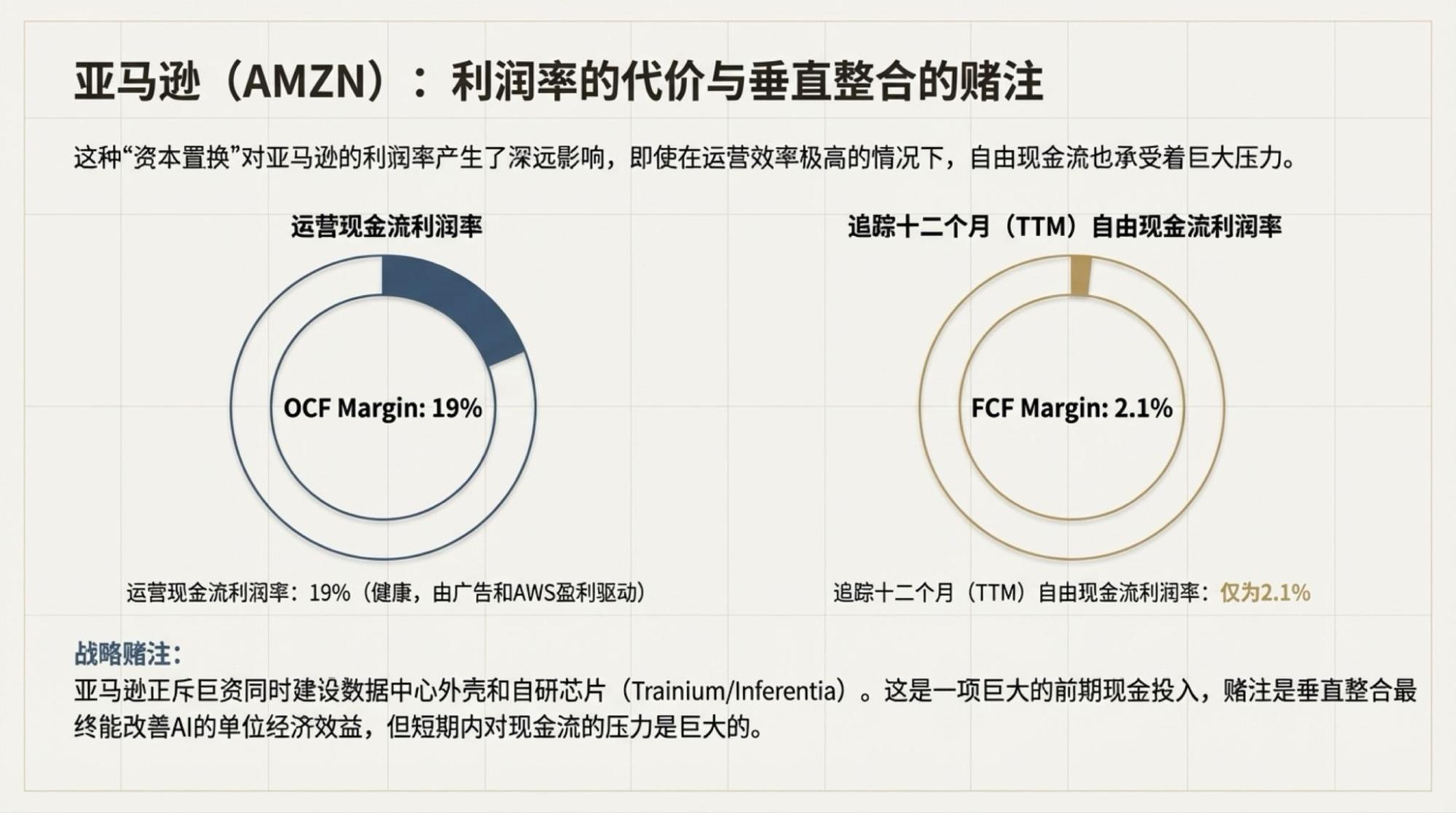

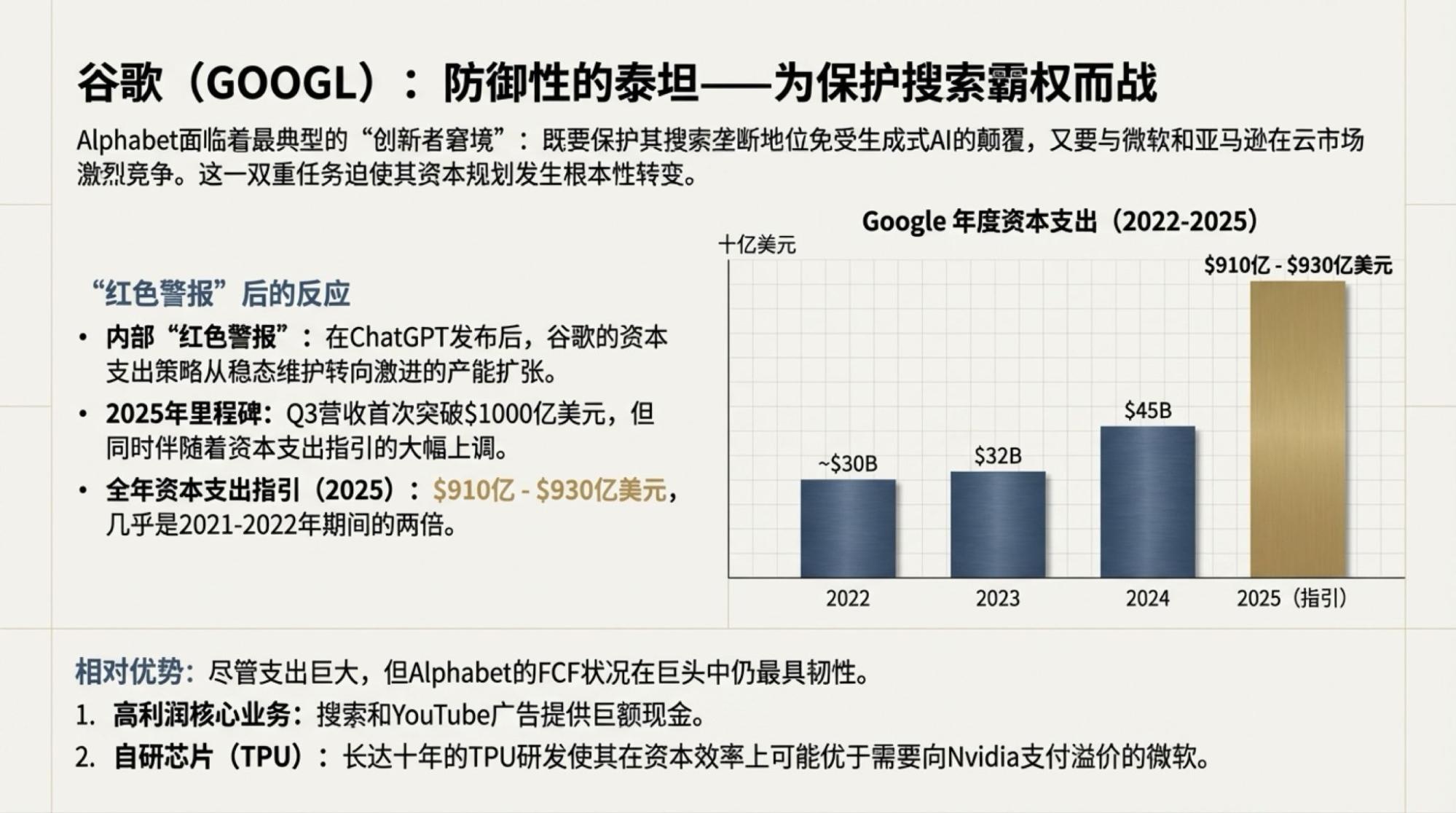

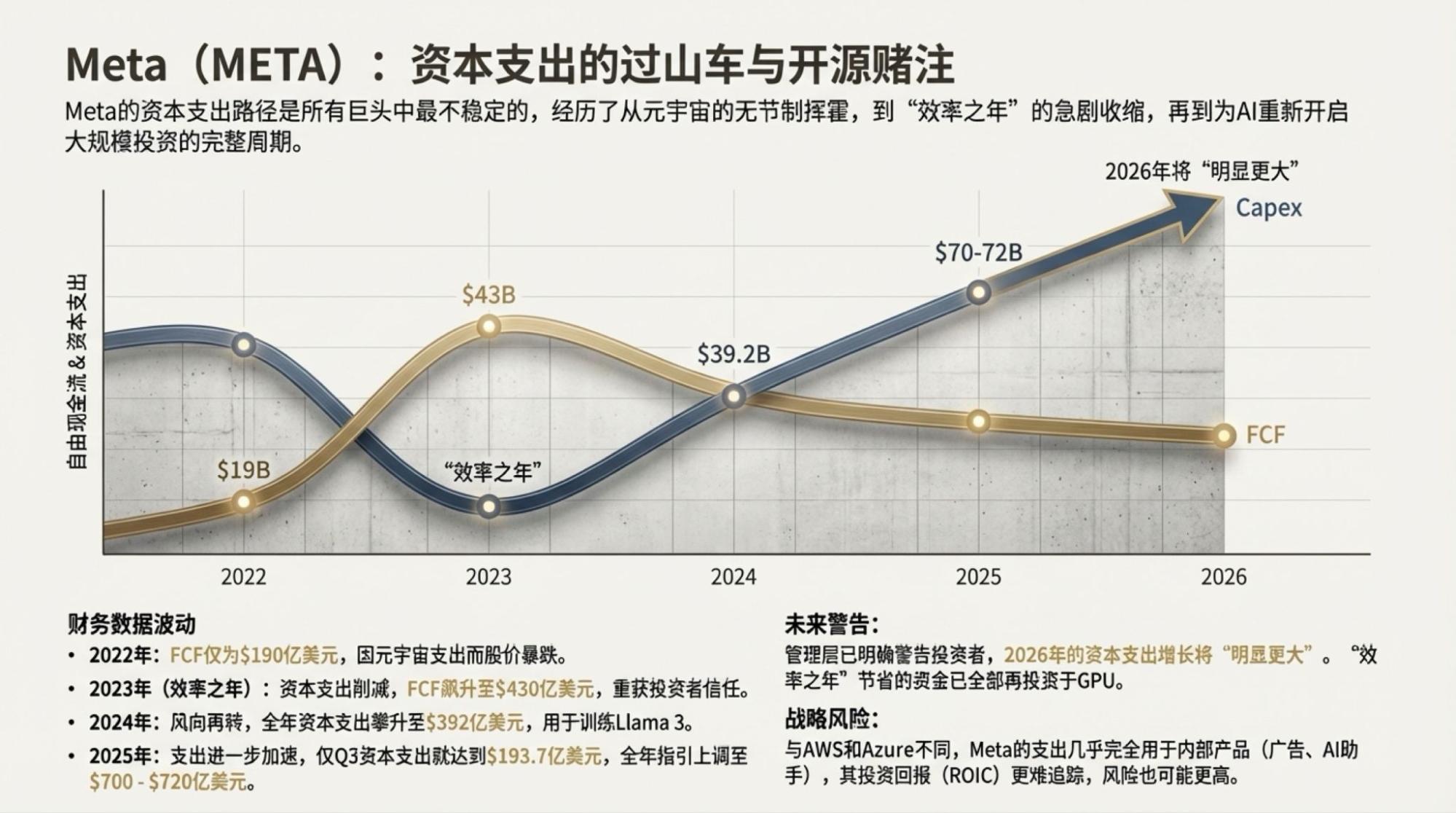

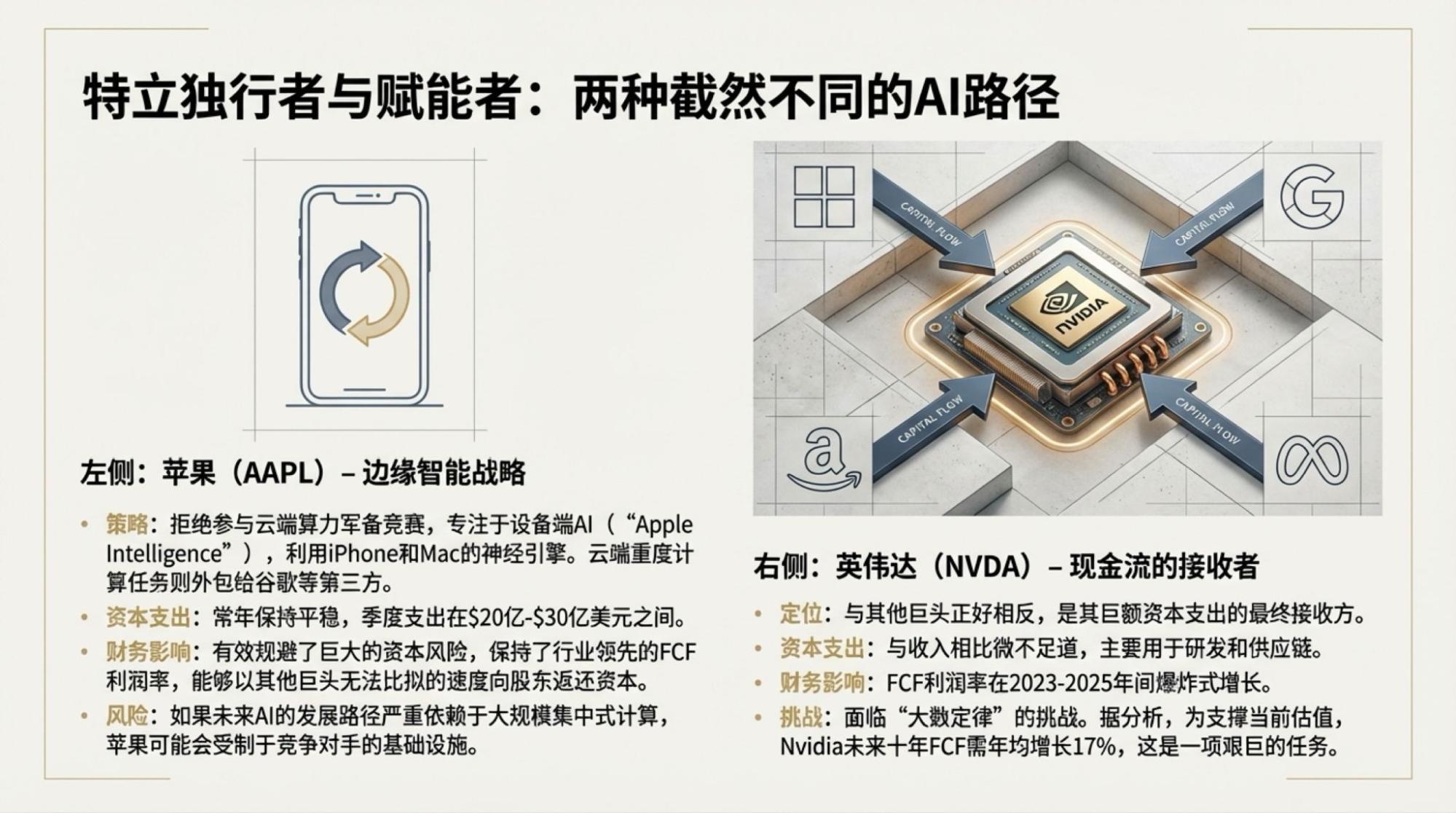

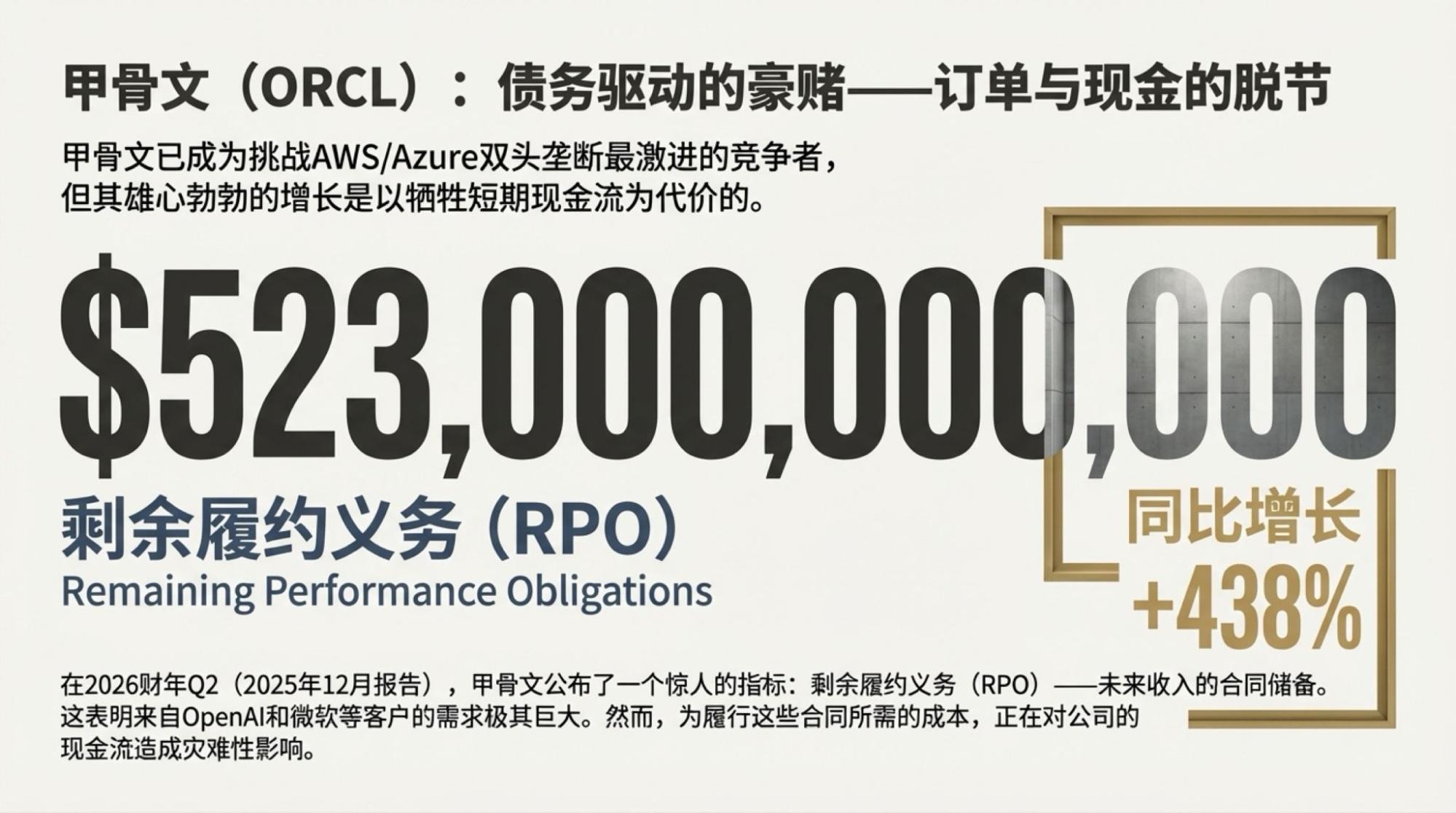

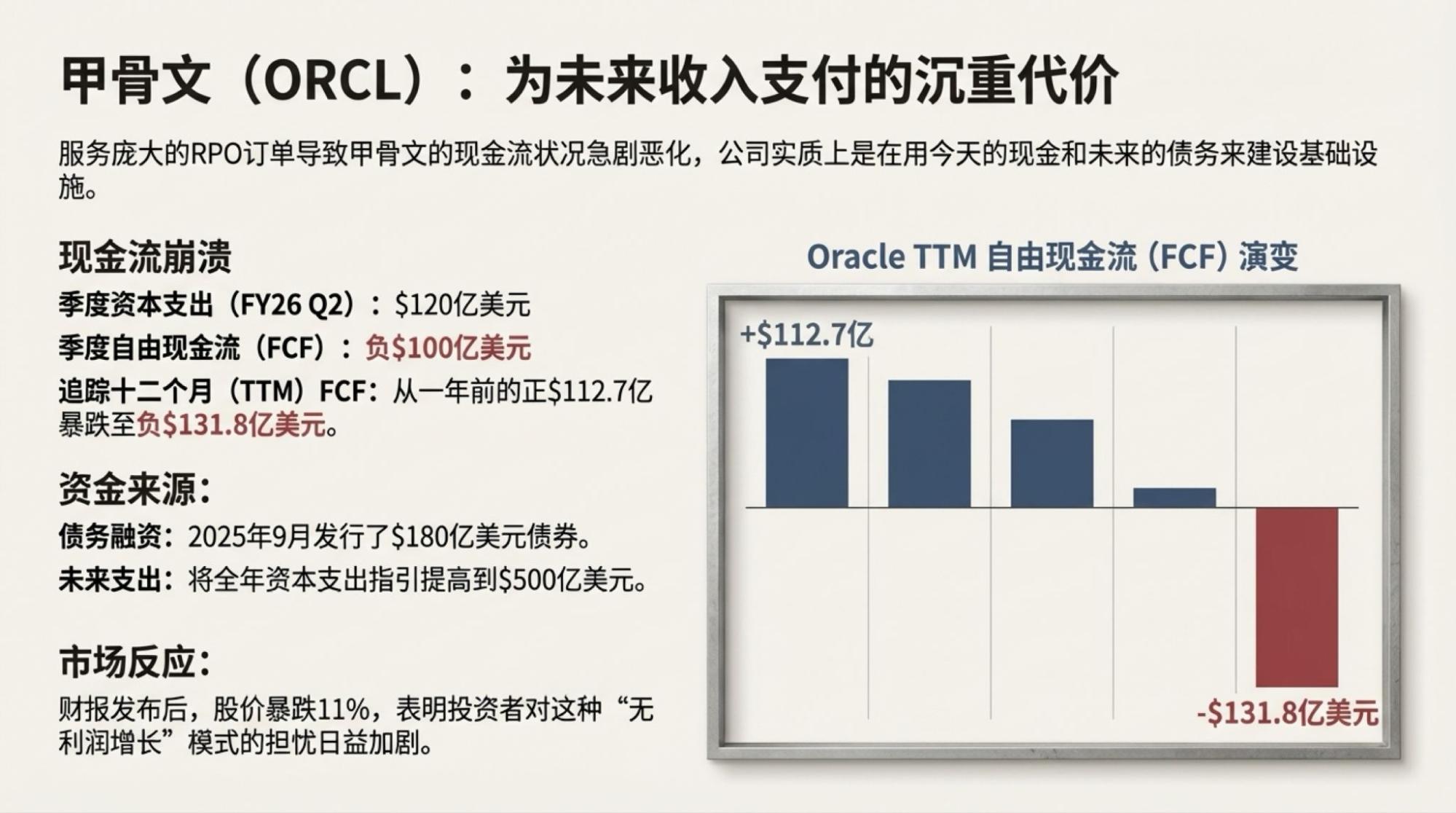

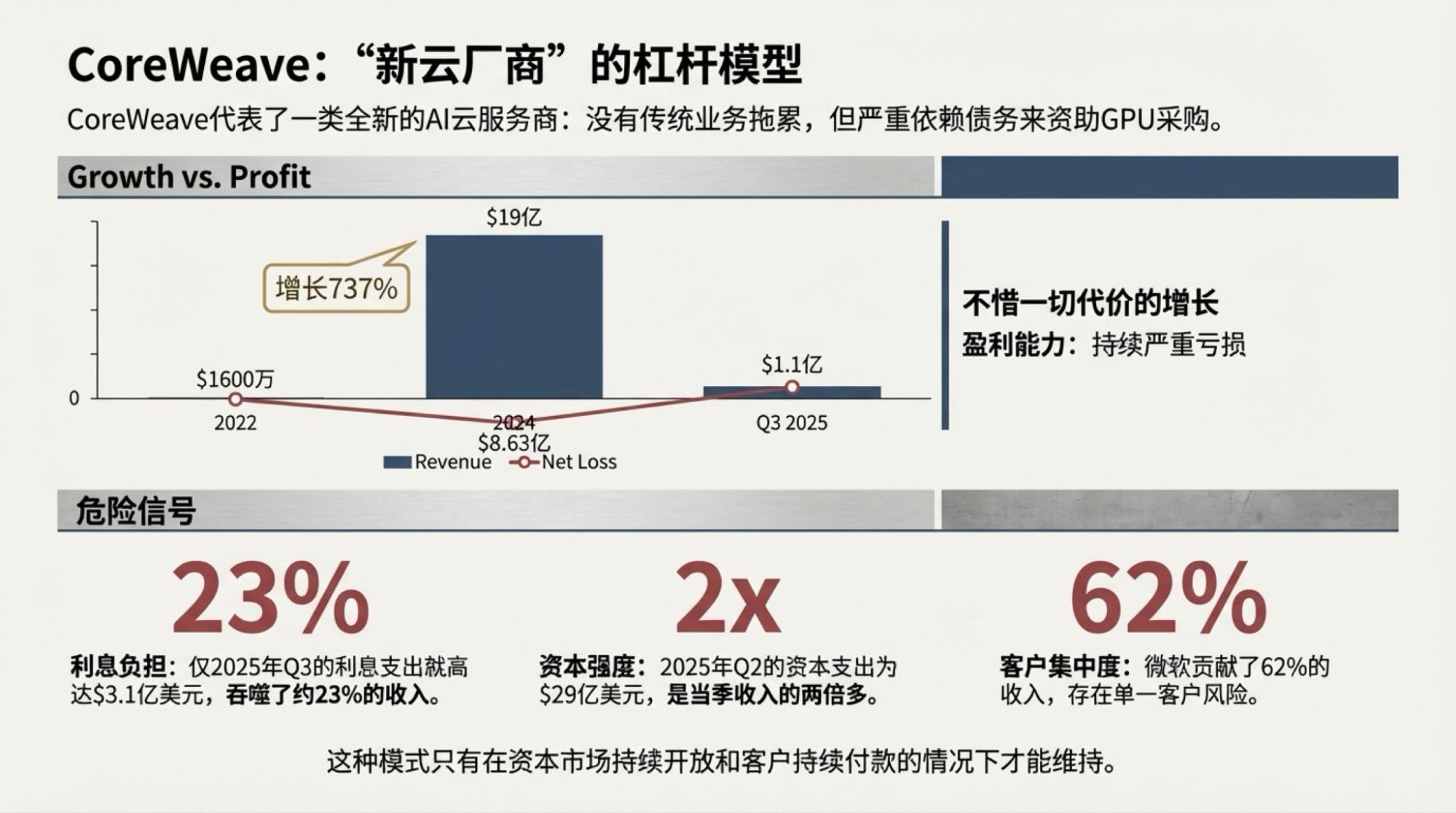

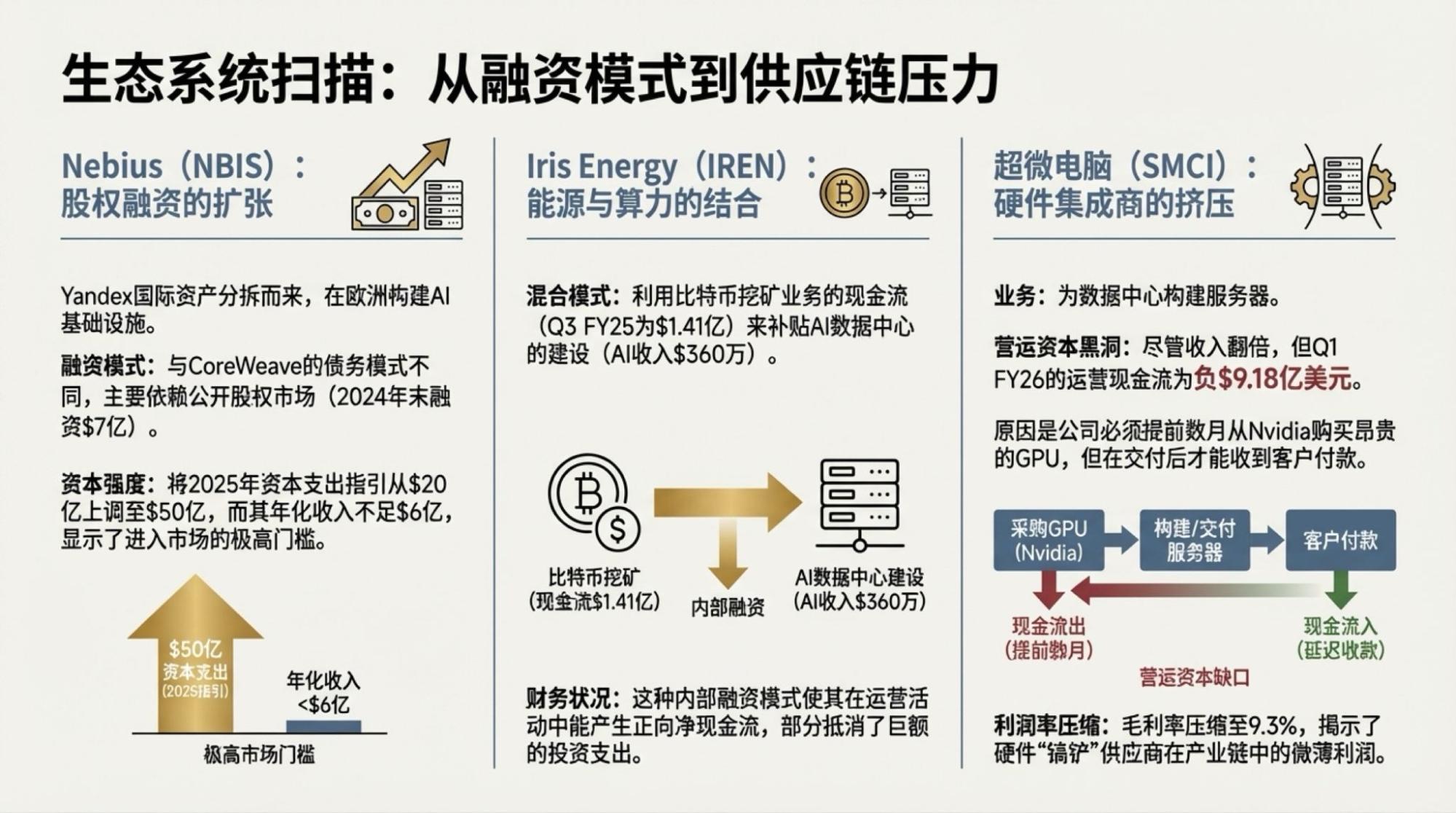

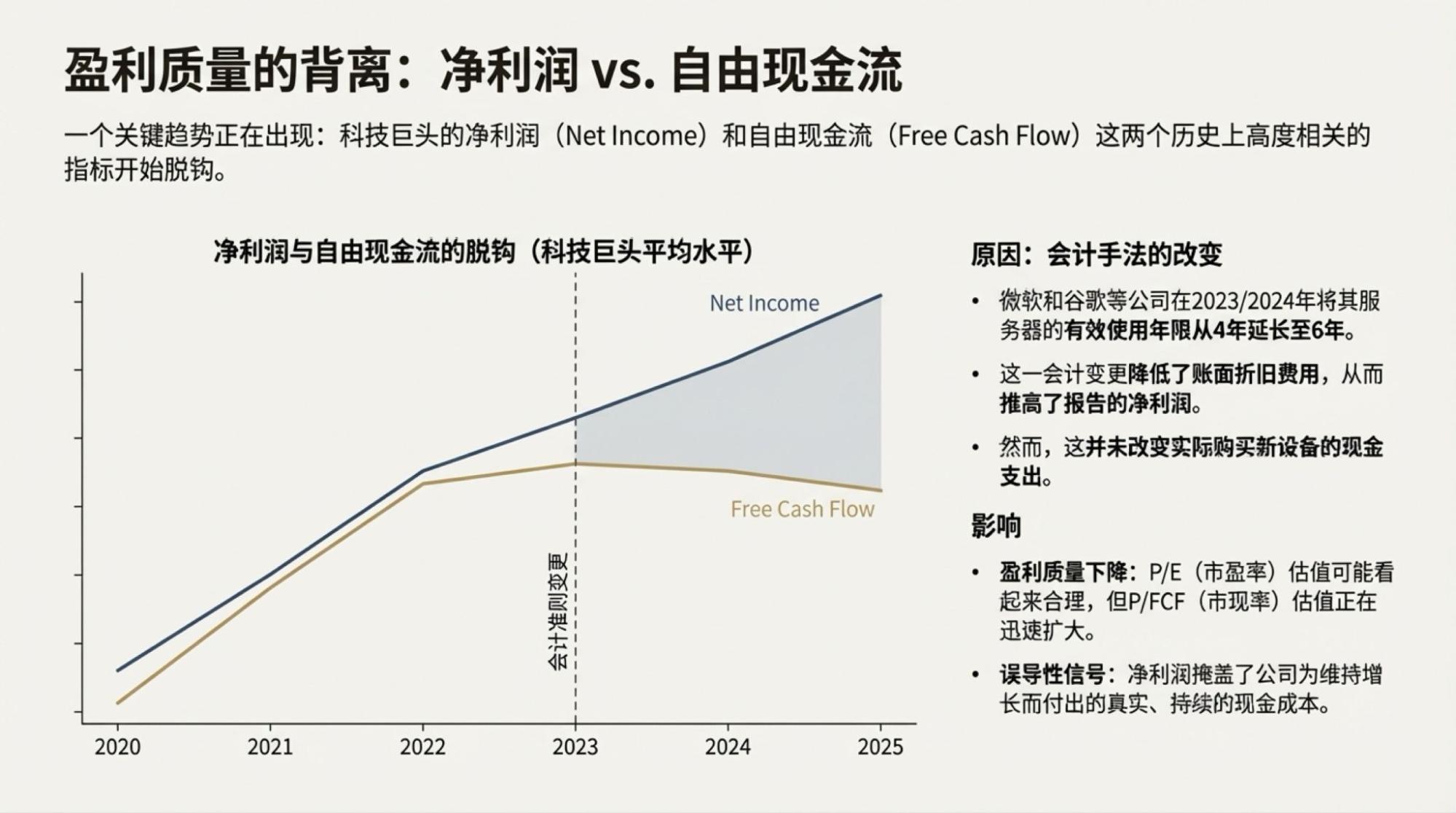

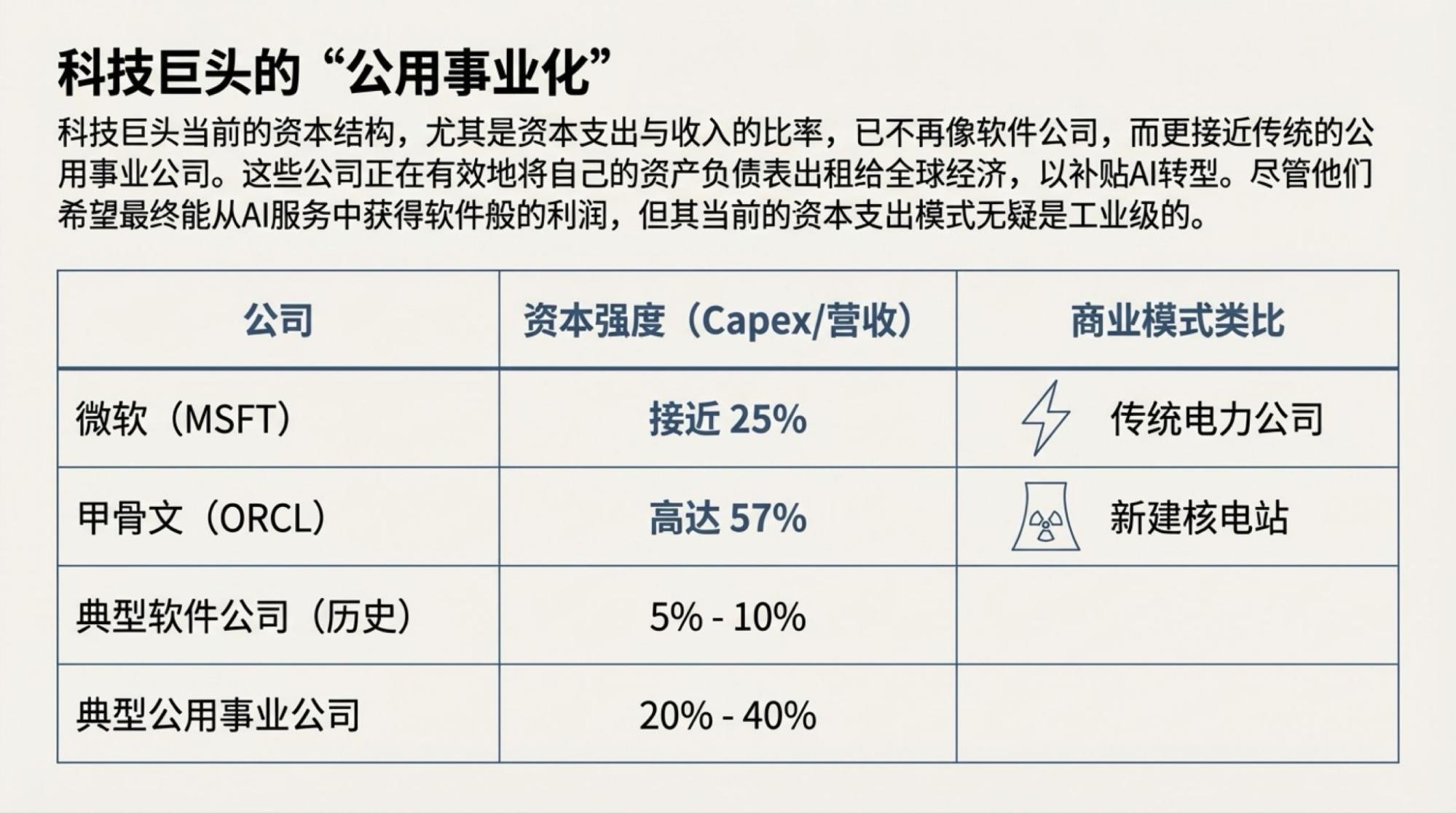

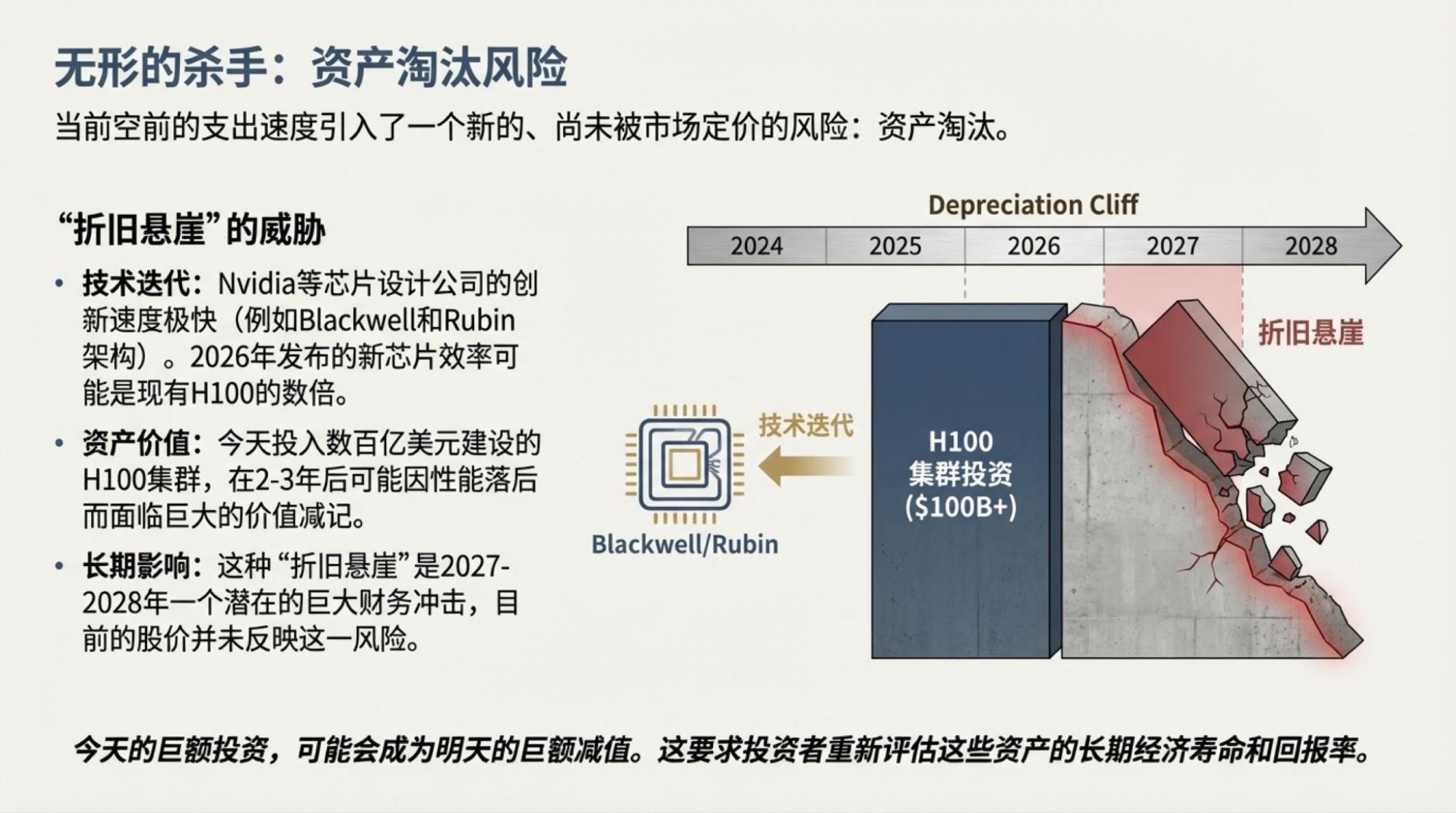

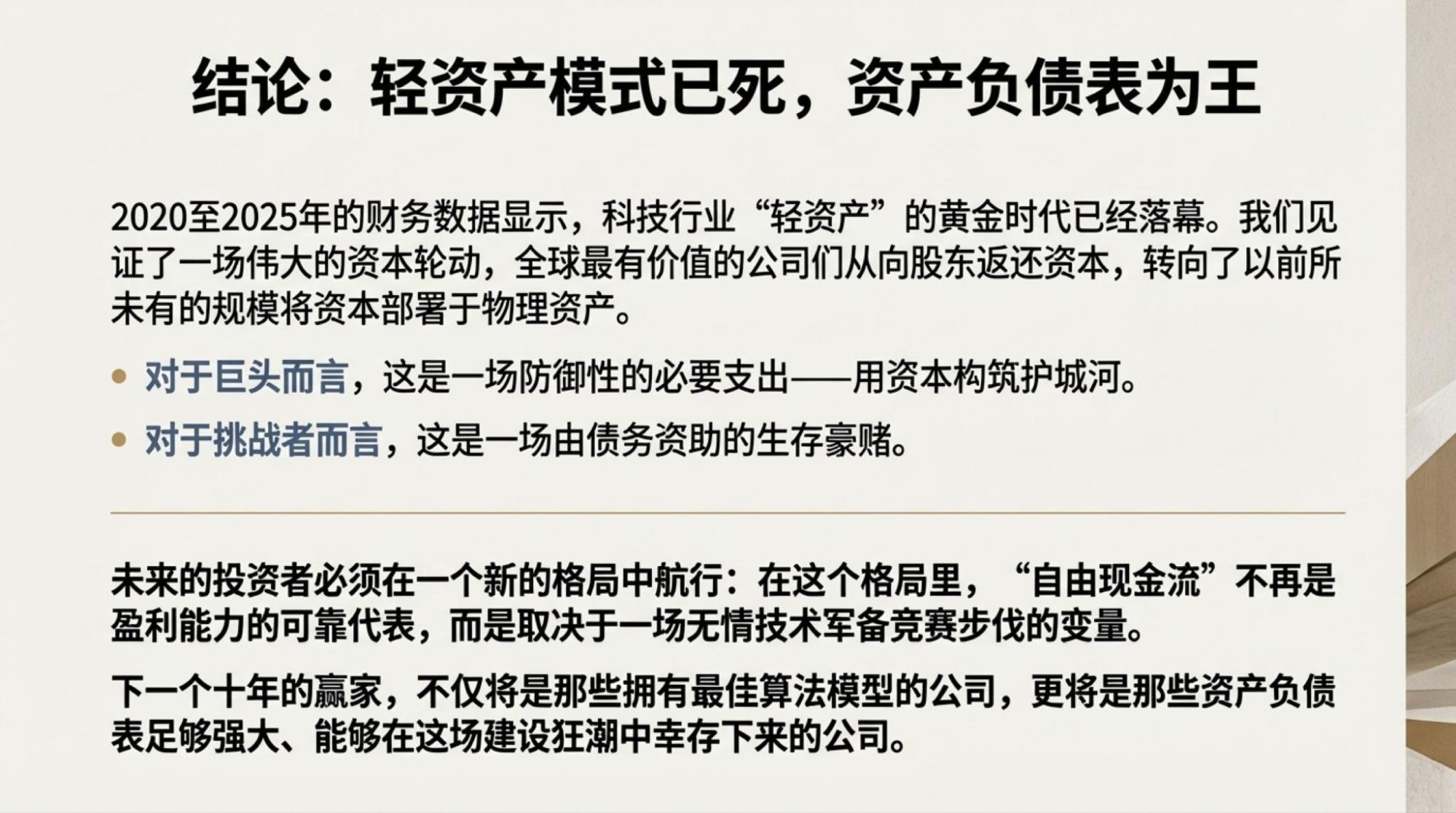

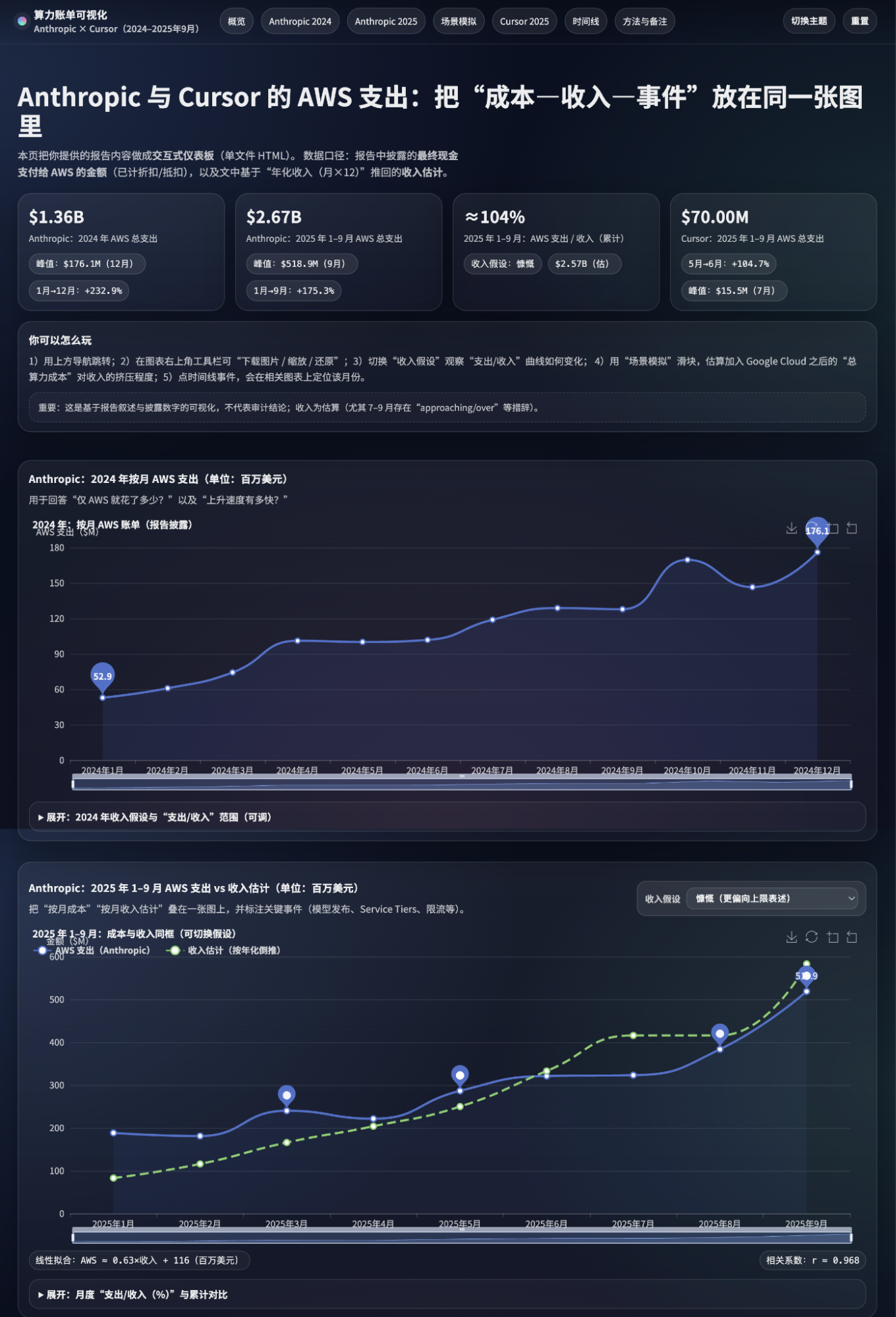

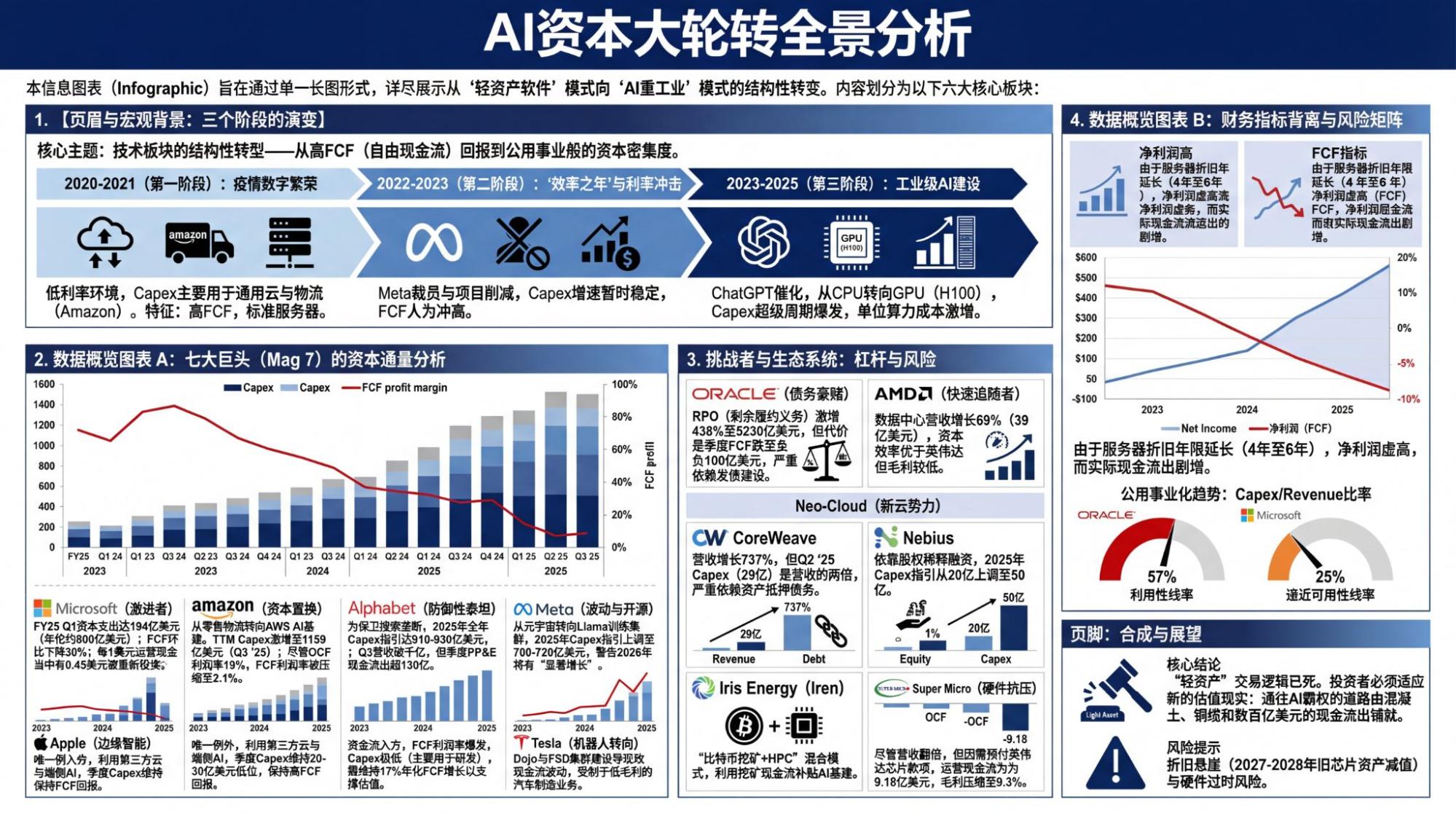

To illustrate this, I initiated a Deep Research into the "cash flow" issue that the market is increasingly concerned about, with a very simple prompt.

Of course, we all know that the resulting report can be exported as a Google Doc, stored in Google Drive, and then imported directly into NotebookLM. From there, you can produce audio blogs, video blogs, infographics, or slides.

When I tried it today, the "long mode" for slides was back.

With it, a 25-page slide deck could be generated.

The slides are attached page by page at the end of the article.

Of course, GPT-5.2's capabilities allow for building a great interactive website, for example:

But compared to what Gemini offers, this really isn't in the same dimension.

Using Nano Banana Pro, it's easy to compress this content into a single infographic.

Today, GPT has followed in Claude's footsteps, moving further away from ordinary users. That is the part that feels off. GPT was the first to offer real-time voice conversation models; Whisper remains the top choice among open-source speech models; last year, GPT went viral thanks to its image generation model; and GPT was the first to integrate ChatGPT-Agents, which, combined with scheduling, could handle complex workflows in a user-friendly way. At the model level, OpenAI has all the capabilities and reserves needed to achieve the stunning and practical product effects of the Gemini series.

However, perhaps Google's ecosystem is too strong, or perhaps OpenAI simply doesn't have enough resources to implement a set of output tools that are more valuable to the vast majority of users.

Nevertheless, the AI competition has already moved to a higher dimension, and so far there is only one player in that dimension: Google, starting from Gemini-2.0.