
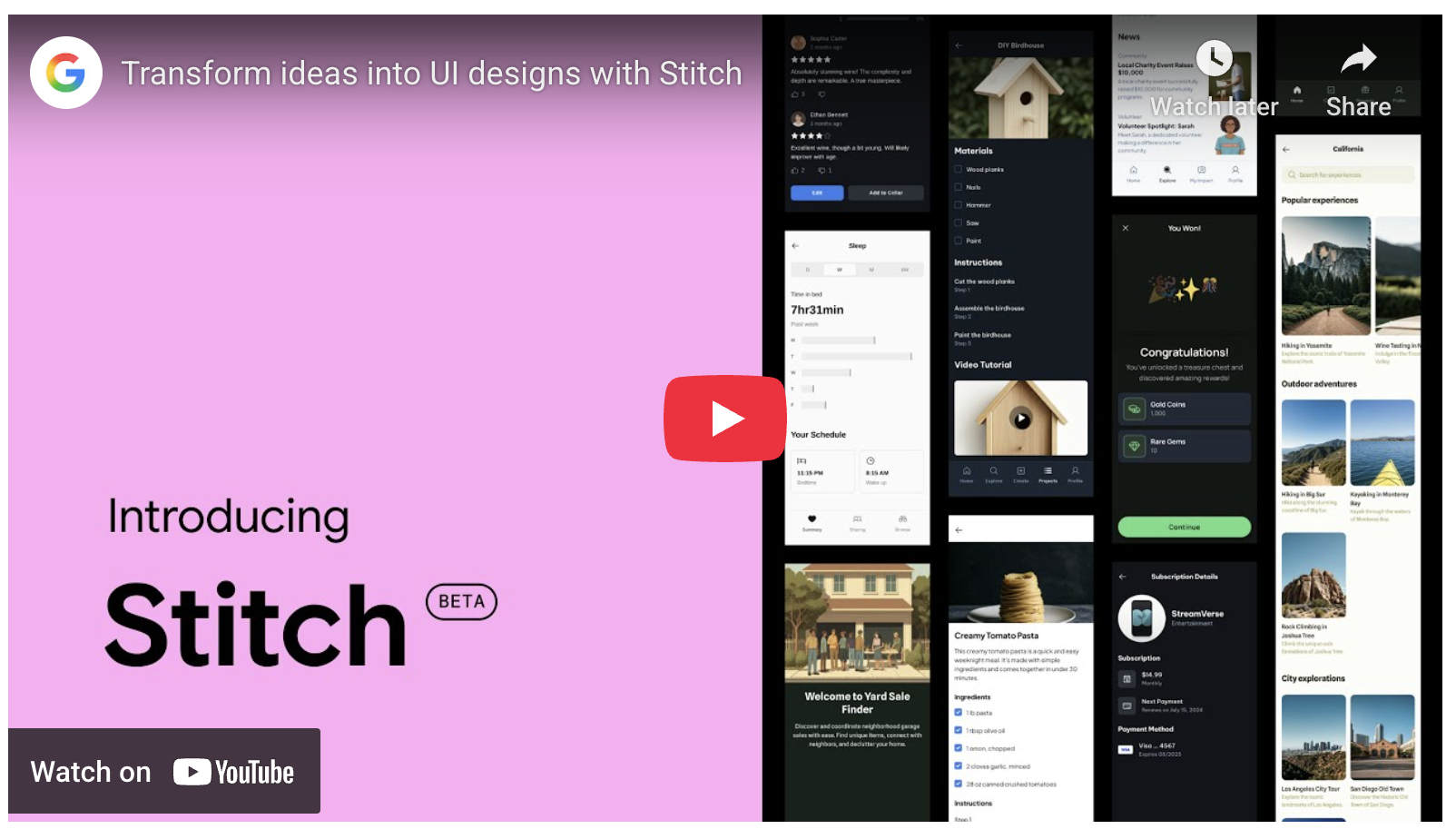

On the last day of 2025, I was blown away twice in a row by new features in two tools: Stitch and Mixboard. I have been relying more and more on both for design and brainstorming, yet they still manage to surprise me.

Thus, for the first post of 2026, here is perhaps a good topic: 2026, Design Beyond AI.

I have never studied design; in fact, "art" has eluded me since childhood. Across more than twenty years of jobs, design and UI were always the parts I simply "skipped." Over the past year or so, however, more and more of my workflows have required "design," or, more accurately, design with AI involved.

It is difficult for me to judge from a professional perspective whether the "designs" output by AI are aesthetically superior, but at the very least, they look much better than anything I could produce, and that is enough.

Consequently, they have naturally transformed my workflow, whether in tool development (I will continue to use the word "tool" rather than "app," as my primary goal is utility for myself) or material production (such as slides needed for communication, or demonstrating ideas, concepts, and phase results while writing).

As mentioned at the beginning, this is thanks to two tools that Google launched and kept improving throughout 2025: Stitch and Mixboard.

https://stitch.withgoogle.com/

https://labs.google.com/mixboard

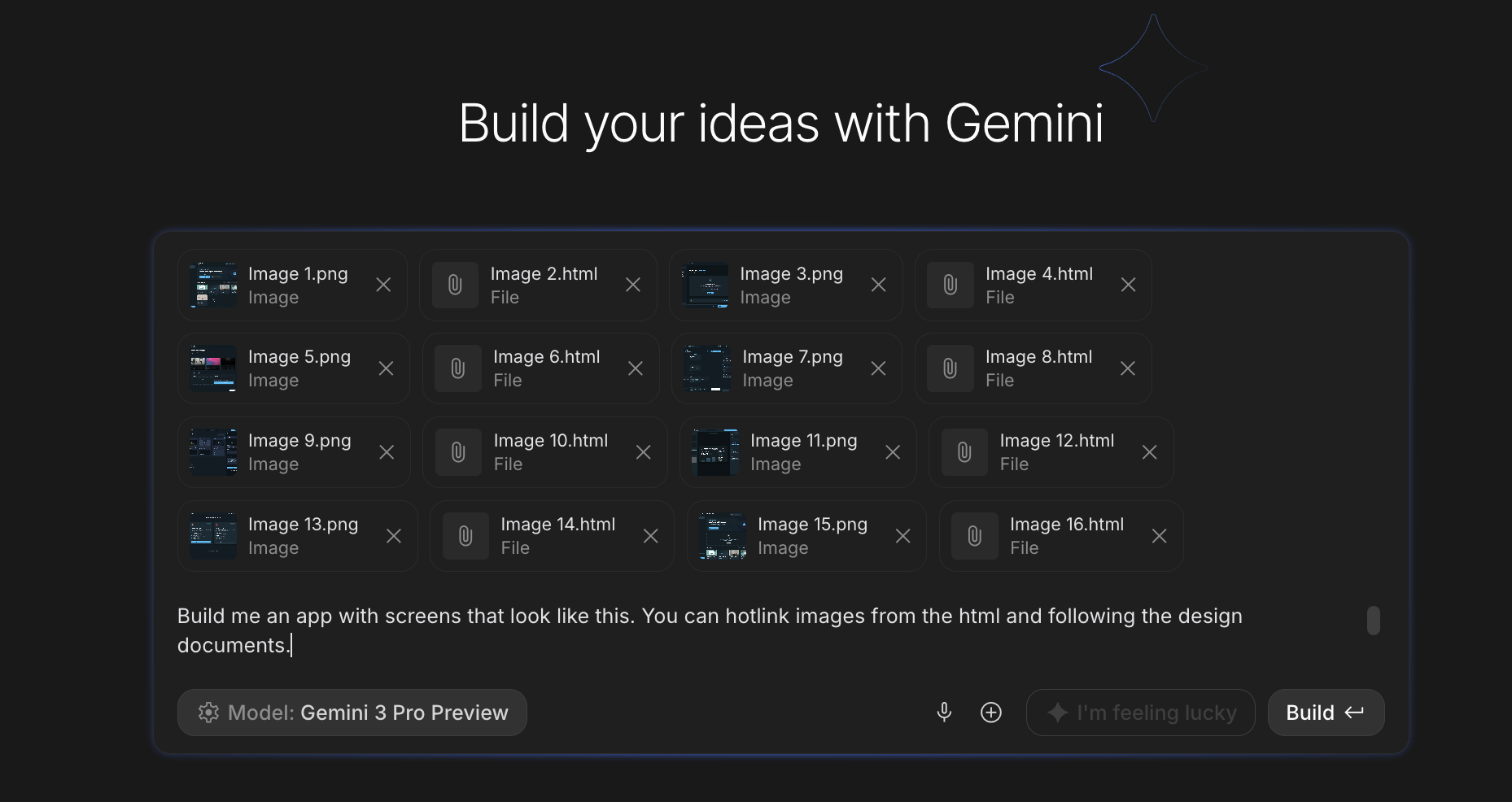

For tool development, I have long been accustomed to this workflow: Organize ideas --> Gemini Deep Think generates the documentation needed for tool development --> Hand the documentation to Stitch for UI design --> Hand the UI and documentation to AI Studio Build for generation --> Iterate.

Deep Think. After the release of Deep Think based on the 2.5 model, I wrote that, in my opinion, it is the most suitable model for project design: it offers sufficient depth and accurate detail, yet keeps architectural complexity in check, so the result is highly feasible. In contrast, ChatGPT-5 Pro tends to pursue excessive "perfection," which makes its designs difficult to implement. Now that Deep Think has been upgraded to Gemini-3, its capabilities are even stronger, so strong that it feels as if "humans are no longer worthy of it."

Handing the design documentation to Stitch for UI design. In the six months since its release, Stitch has seen massive upgrades. In particular, a recent update not only showcases the greater potential of Gemini-3 but also connects Stitch directly to the coding tool Jules and to AI Studio Build.

After I "threw" the Deep Think design document at Stitch, it directly produced the various functional pages and prompted me to generate more detailed ones, as shown below (please forgive the blurring, done with Nano Banana, as this tool is still in development):

Even with the blurring, the page design remains quite reasonable. More than that, the main features and elements mentioned in the design document are all reflected in the pages.

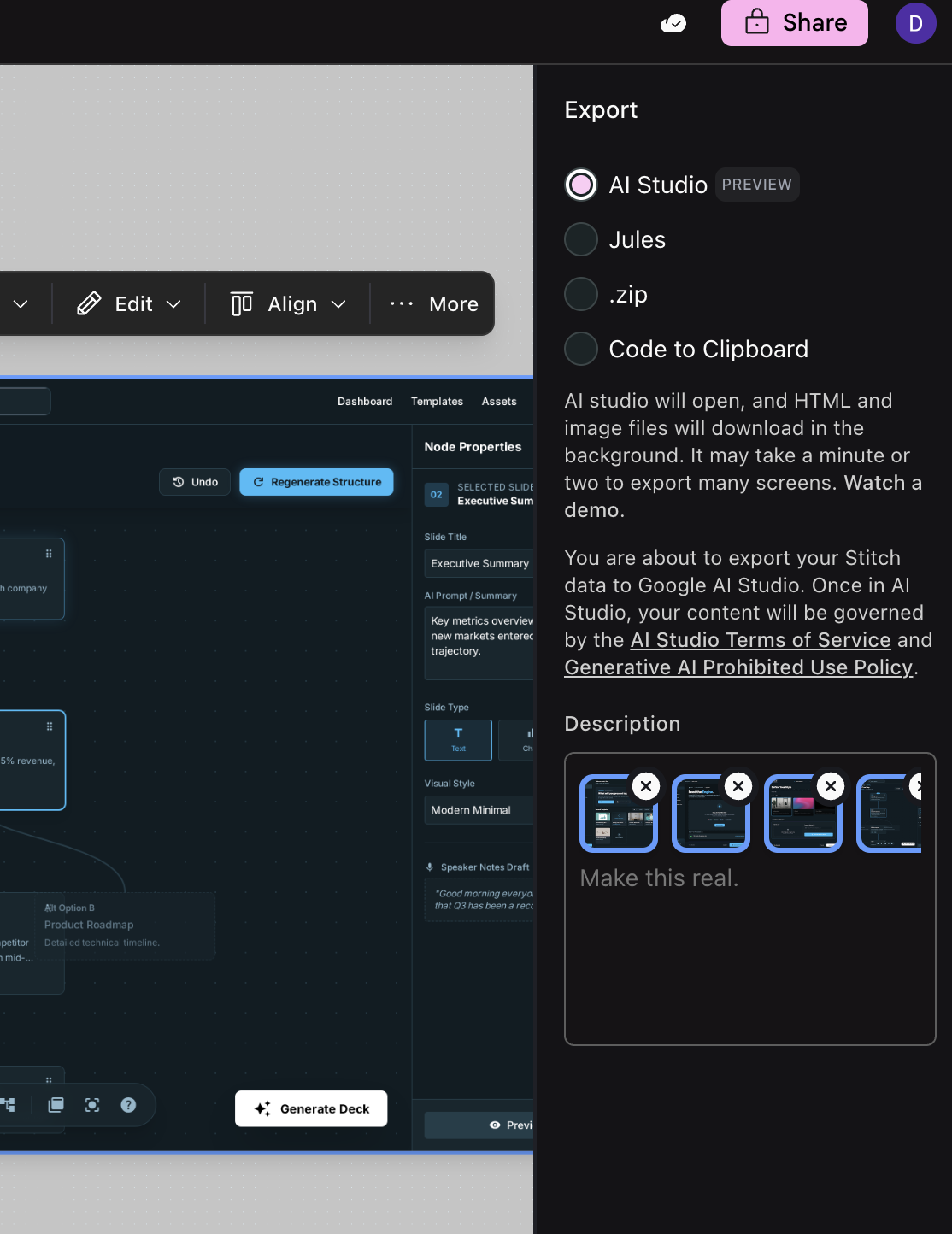

Prior to recent Stitch updates, these pages could be exported to Figma, as HTML code, or as images. If you wanted to use them for vibe coding, you still had to copy-paste and add some improvements.

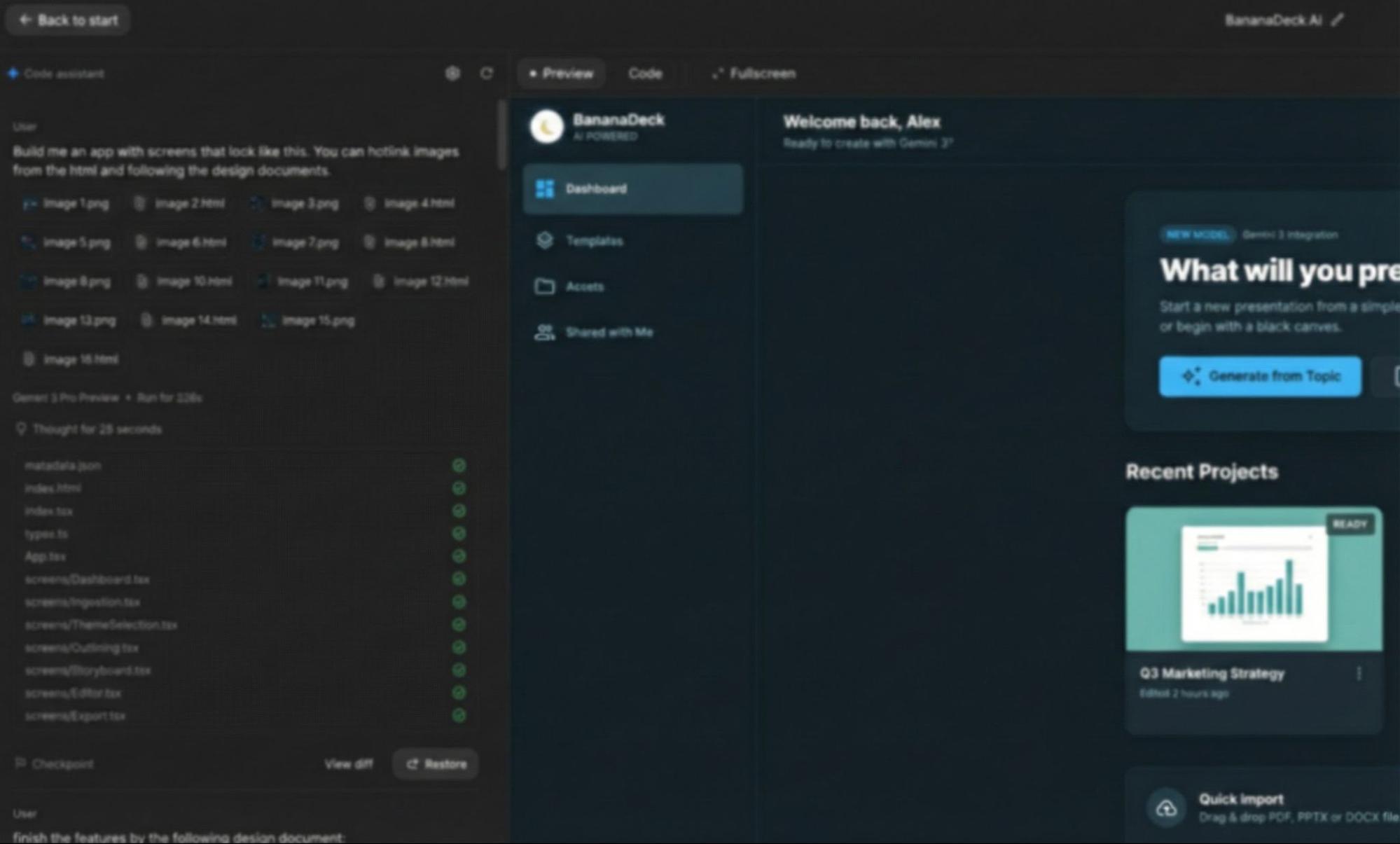

Now, it connects directly to AI Studio or Jules (another Vibe Coding IDE from Google Labs). For a while, this seamless ecosystem experience may remain something only Google can provide.

Then, in AI Studio Build, the tool is immediately ready.

Of course, given the scale of Build, many backends in the first version are mocked and need gradual refinement before real use. Even so, the seamless handoff from Stitch to Build is a massive leap in experience. I want to say it again: outside of Gemini, no other model has user stickiness.

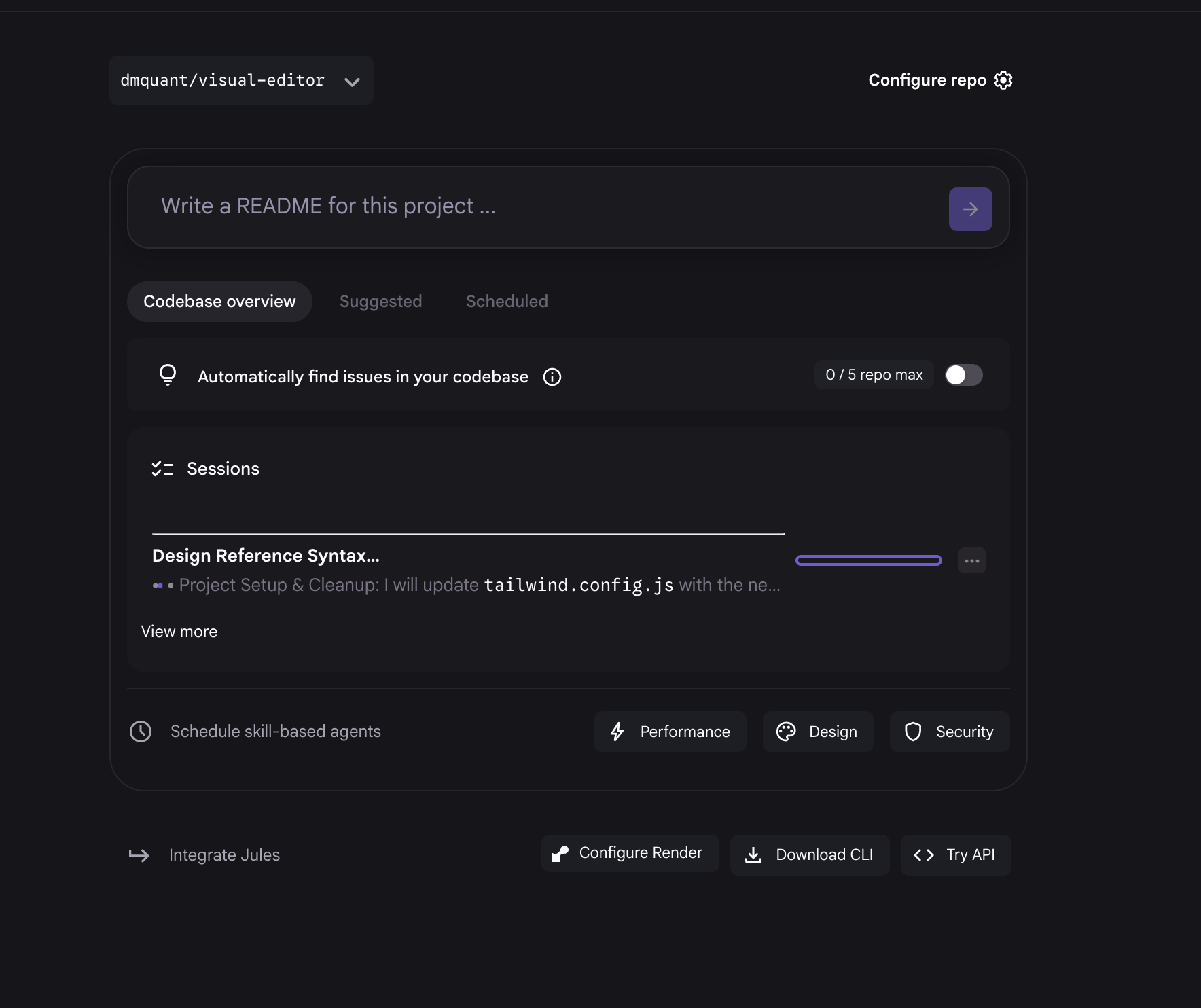

Of course, I also tried the connection with Jules. Jules is a unique tool that has improved significantly over the past few months. Although it doesn't quite fit my workflow, diversity should no longer be a luxury in the age of AI.

As seen above, after submitting to Jules from within Stitch, there is no immediate feedback on the current page. Once you open Jules, however, you can see the background tasks running, which is Jules' defining feature. As of this writing, I haven't had time to download and run the Jules code locally. Jules binds directly to GitHub repos, which means returning to traditional project development, and that is why it often feels less seamless in the AI era.

In summary, while AI Studio Build already handles over 90% of my tool development work, the current version of Stitch has become its "golden partner."

Of course, the purpose of building tools is to generate results. In my current state, where "I am my own client," design matters more to me in creating "deliverables" than in generating tools. And it is not only the final result that matters: the "brainstorming" in the middle is equally vital.

Currently, there is hardly a tool that seems better suited for this scenario than Mixboard.

If you throw the aforementioned Deep Think design document into Mixboard, the result looks more like a scatter of inspirational elements than something ready for production.

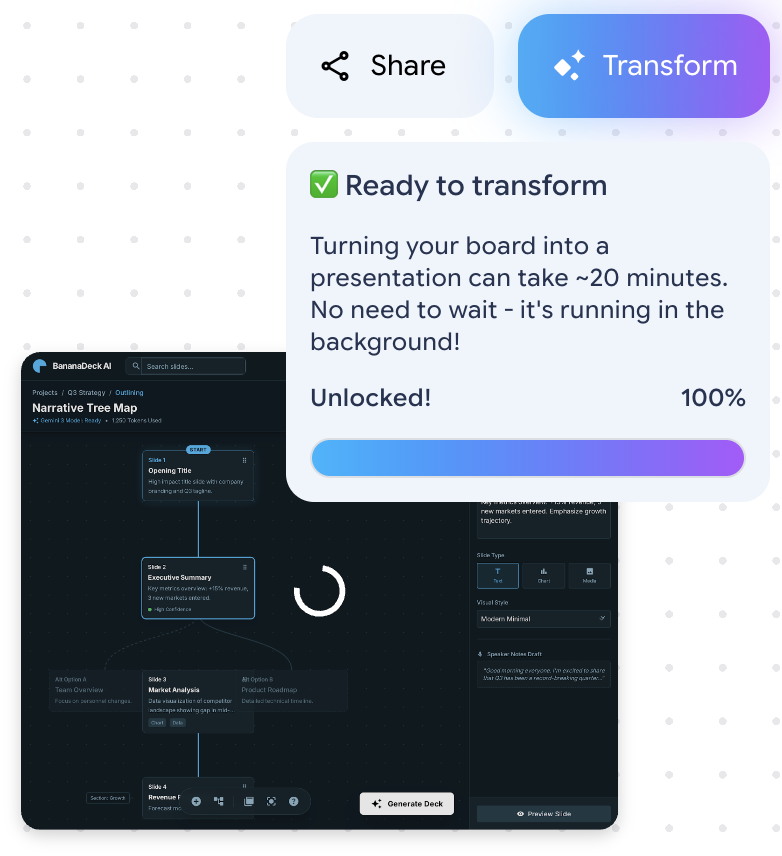

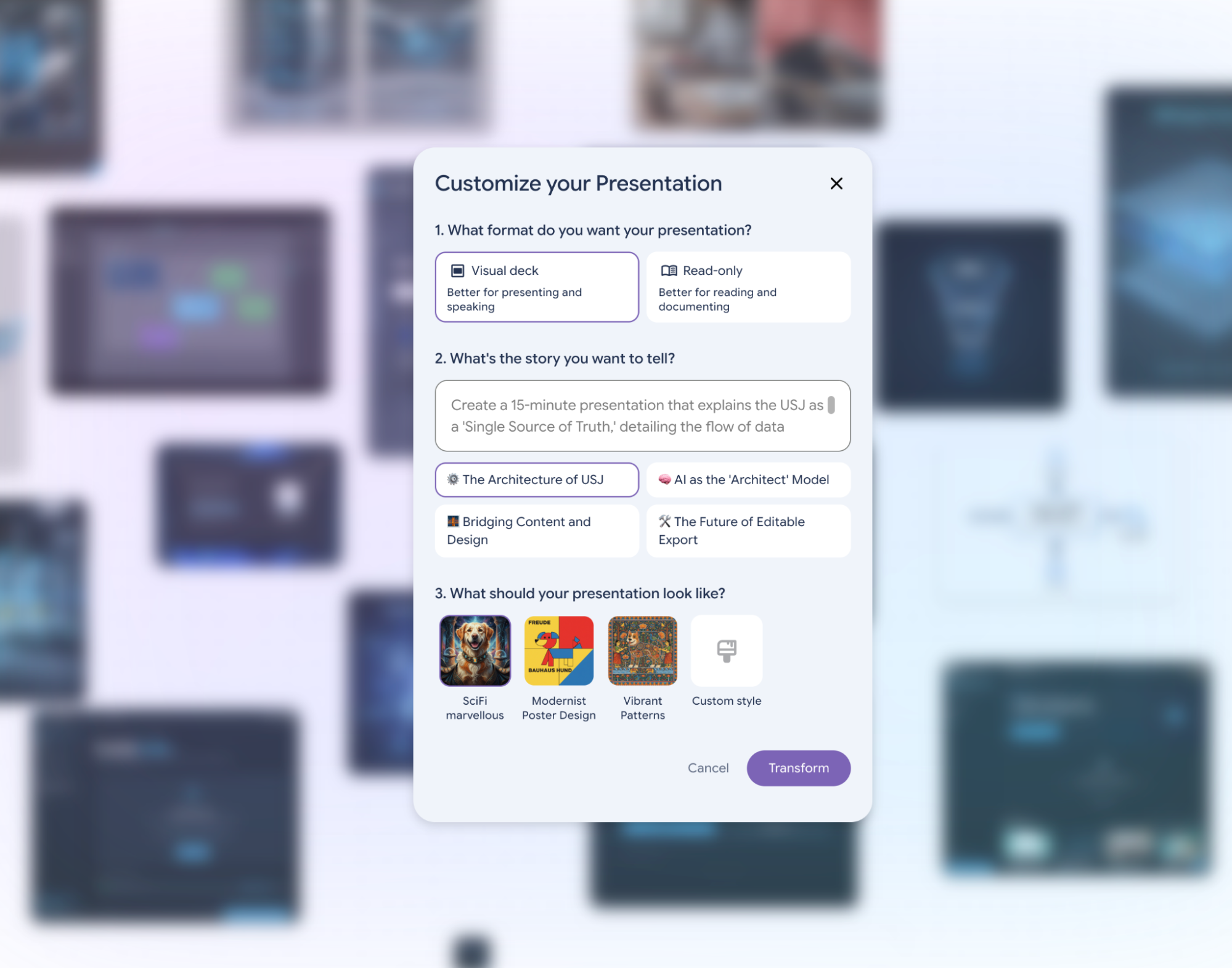

Yes, that was the impression Mixboard gave before this update. But a few days ago, Mixboard added a brand-new feature: Transform. It organizes scattered content into a Visual Deck. Does the name sound familiar? Yes, it's the slide feature that made NotebookLM go viral.

However, NotebookLM's slides are geared more towards knowledge and professional research with text-based inputs, while this Mixboard feature is more design-oriented and requires high-quality image inputs.

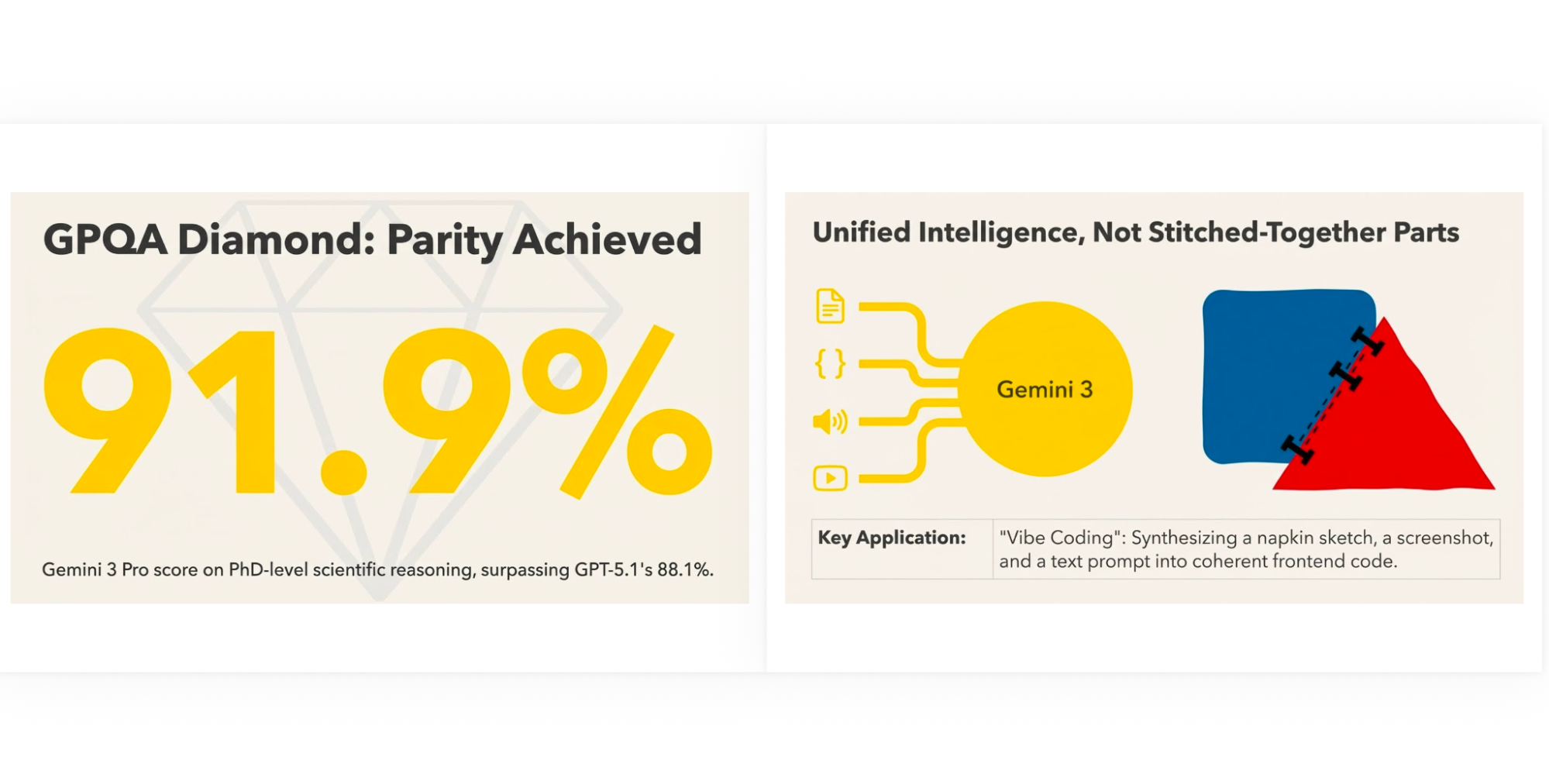

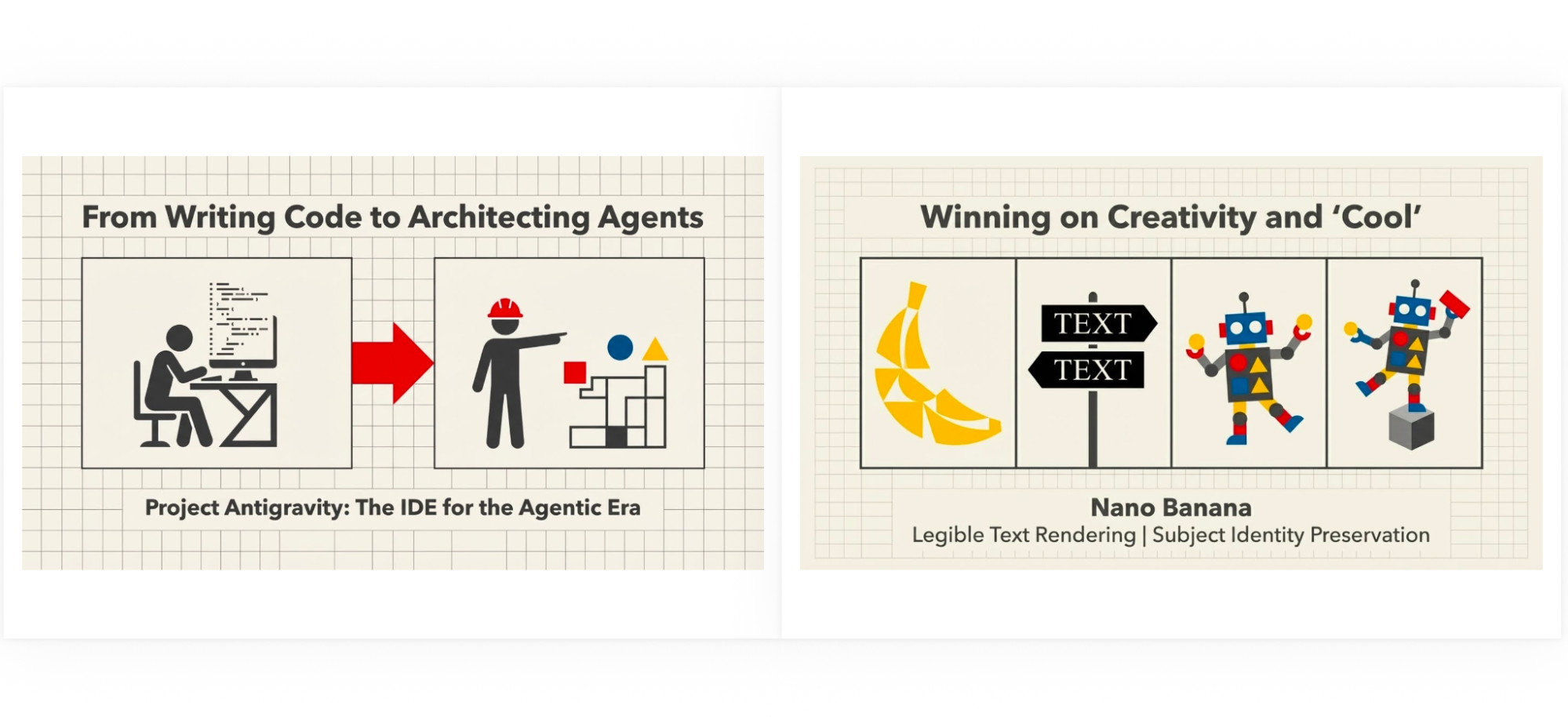

As an example, I put some content about Google's 2025 into Mixboard and selected Transform (this requires adding enough images and PDFs to reach the 100% unlock).

You can then choose styles and themes (I don't have a screenshot for this specific project, so the screenshot above is from another one).

Once finished, click "Presentation" to download a PDF or images directly.

The style is distinctive and has a stronger sense of design. Of course, the drawbacks are obvious: first, it doesn't support Chinese output yet; second, for professional-domain presentations, NotebookLM is clearly more suitable.

But 2026 has only just begun today. Why can't AI design drastically change workflows and habits in the same way "vibe coding" did last year, and in turn, transform even more industries?

It is exactly this thought that led me to write this title: From AI Design Back to AI Design.

Because our pursuit of "beauty" is endless and perhaps has no finish line.