For those interested in my conclusion, here is the TL;DR:

As models grow more capable, they can handle more everyday tasks, and new ideas will emerge even faster. Applications that rely heavily on a single model capability (such as coding or search) may gradually be absorbed by the models themselves and by the model vendors' native applications. Ideas that combine multiple models with various tools, however, remain beyond the reach of those native applications, and even of the model companies themselves.

Applications increasingly resemble AI data centers. They need not only accelerator cards (the models) but also interconnect (model-to-model, tool-to-model, tool-to-tool), storage (data, knowledge bases), automated workflows, and, above all, the ability to scale up.

Here is the detailed content:

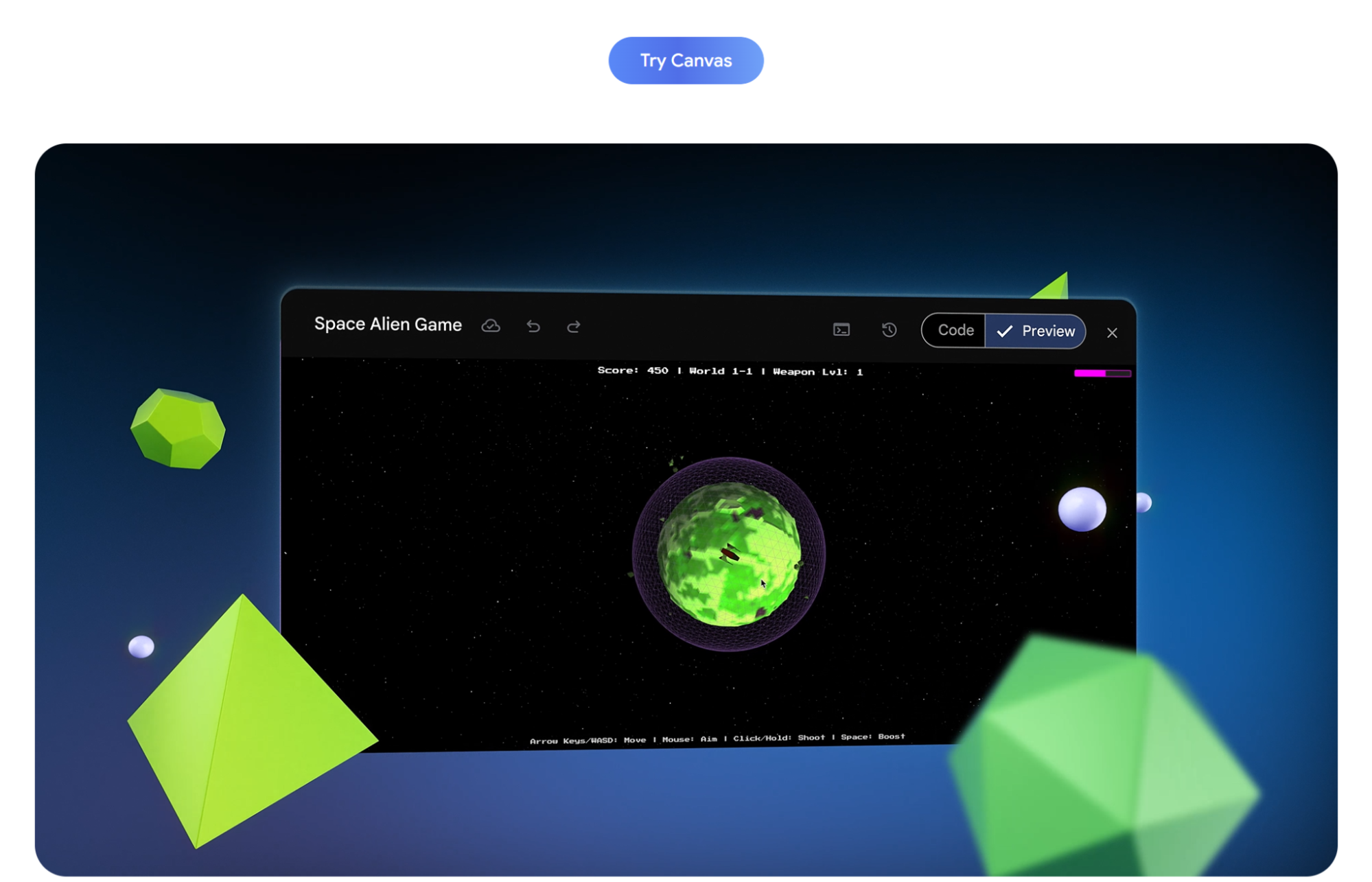

The Gemini team recently upgraded the Canvas feature in the Gemini app.

The promotional demos look great, but for someone like me who uses Canvas every day, they show fairly routine operations.

I am more interested in how it compares with Build in AI Studio, so I took a report-visualization tool I had built in Build and tested how far Canvas could get with the same requirements.

For a multi-page requirement, however, Canvas only got this far:

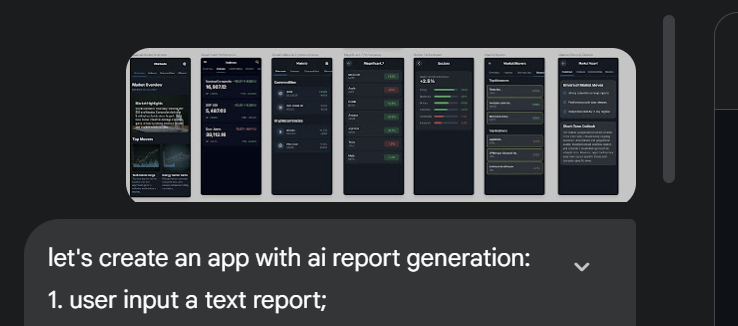

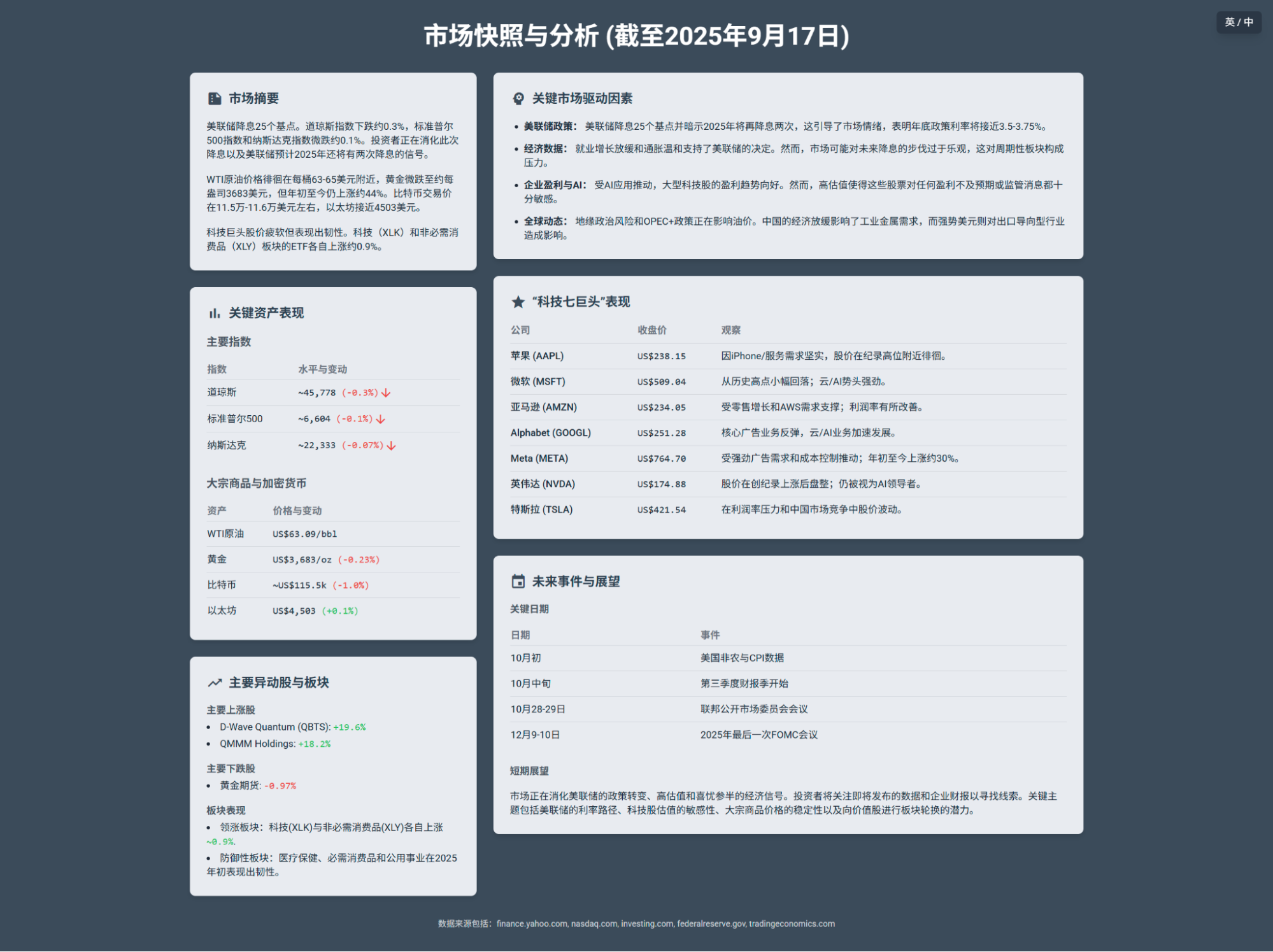

My actual requirement, by contrast, looks something like this (screenshots based on a design made with Stitch, another Gemini tool):

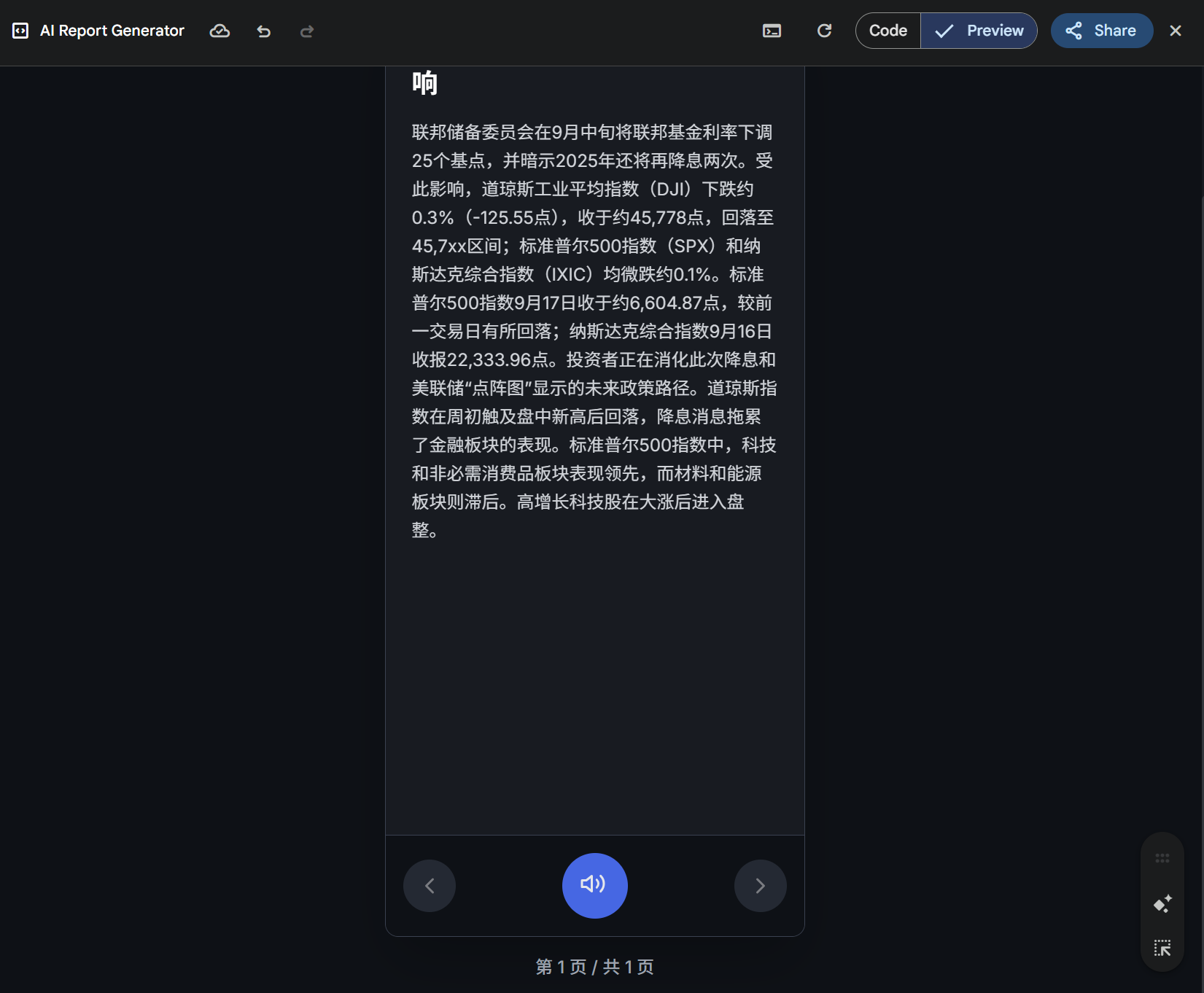

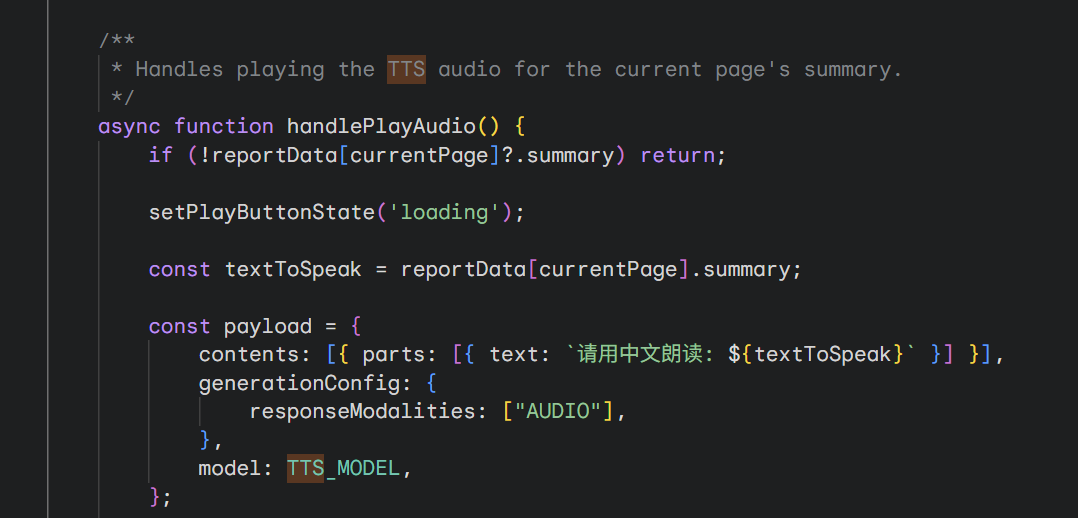

That said, Canvas can do one thing Build cannot: it can call Gemini's TTS (text-to-speech) model directly to generate speech.

The `TTS_MODEL` setting defaults to `gemini-2.5-flash-tts`, a Gemini model that cannot yet be called from within Build.

Then again, Canvas is positioned for generating single HTML pages, so this result is to be expected.

This Canvas update (although I didn't notice a huge difference in practice) makes the boundary between model companies and application companies increasingly clear:

As models grow more capable, they can handle more everyday tasks, and new ideas emerge faster. Applications built on a single model capability (coding, search) will likely be absorbed by the model companies. Specialized ideas that orchestrate multiple models and tools, however, are territory that native model applications, and even the model companies themselves, cannot easily reach.

Applications are becoming like AI data centers, requiring not just compute (the models) but also interconnect, storage, automated workflows, and the ability to scale.

AI Studio's Build remains one of my main platforms for application development. As noted above, Canvas is unlikely to replace it; the two differ in scale and capability. Build has its limits too, though: prototypes that work well there are useful for daily production, but they eventually run into the question of how to scale.

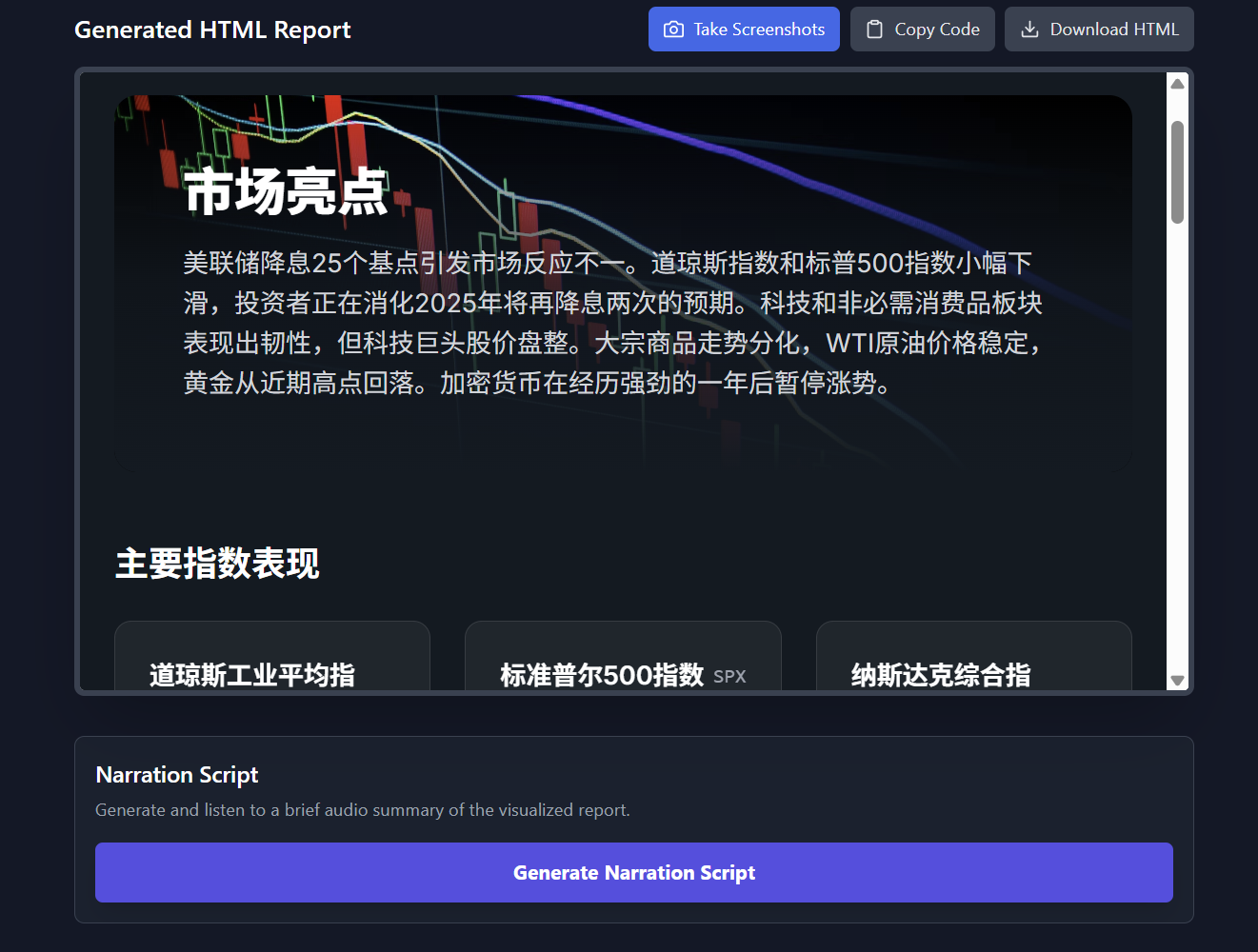

For the kind of tool I tested in Canvas above, I have finalized a pre-scaling version in Build and am sharing it here.

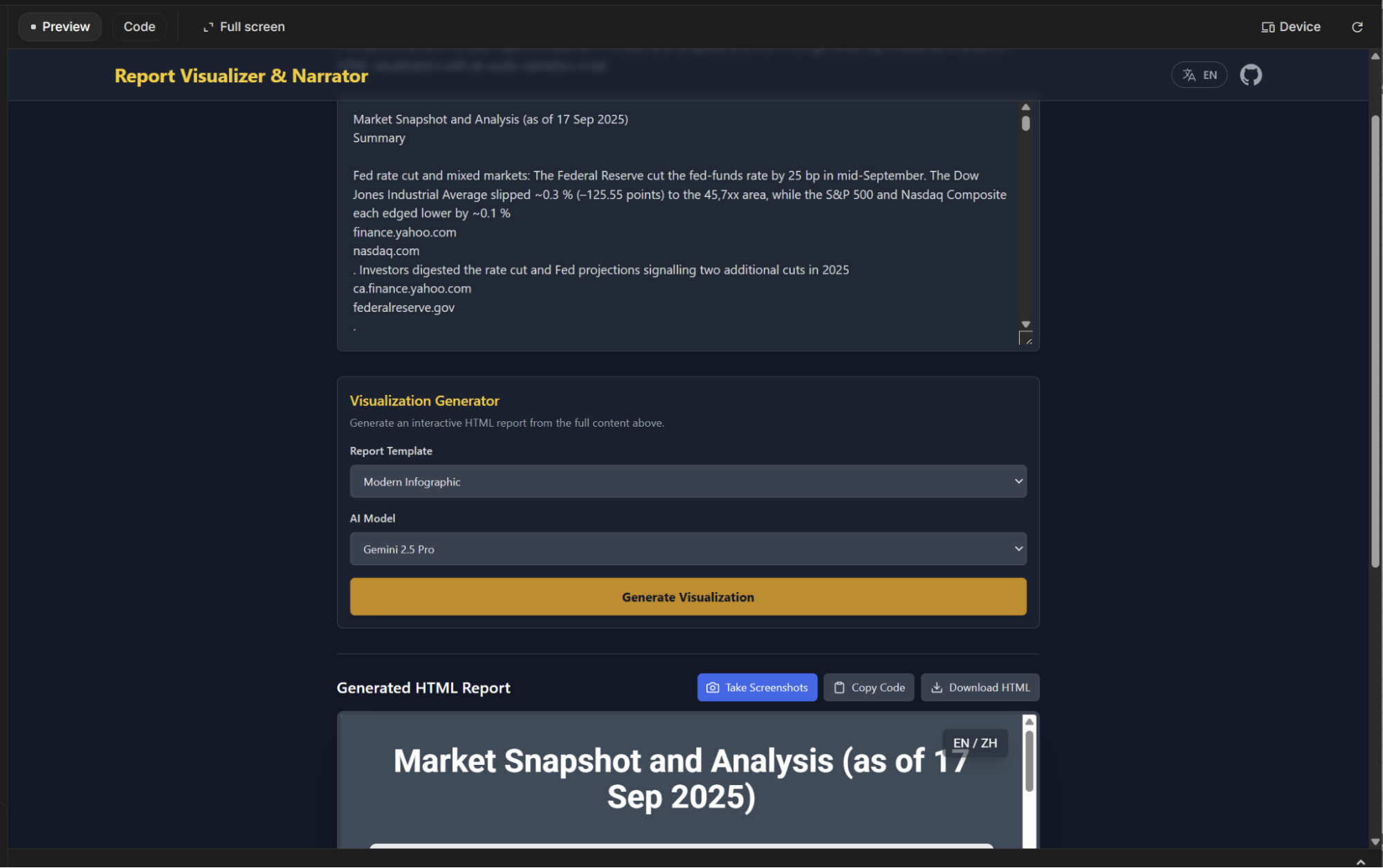

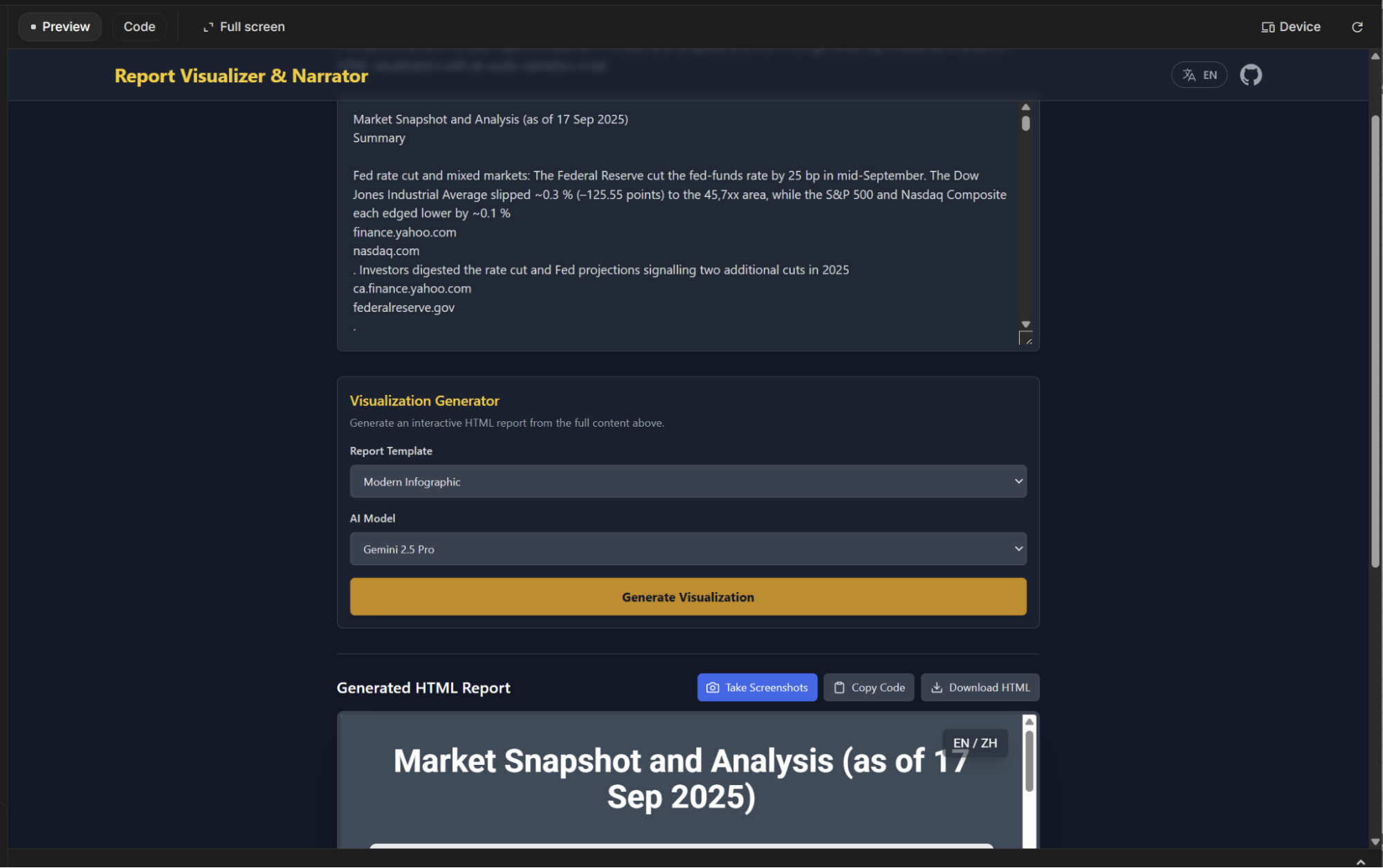

The tool is called "Report Visualizer & Narrator." Its AI Studio sharing link is below; anyone with an AI Studio account gets Viewer access.

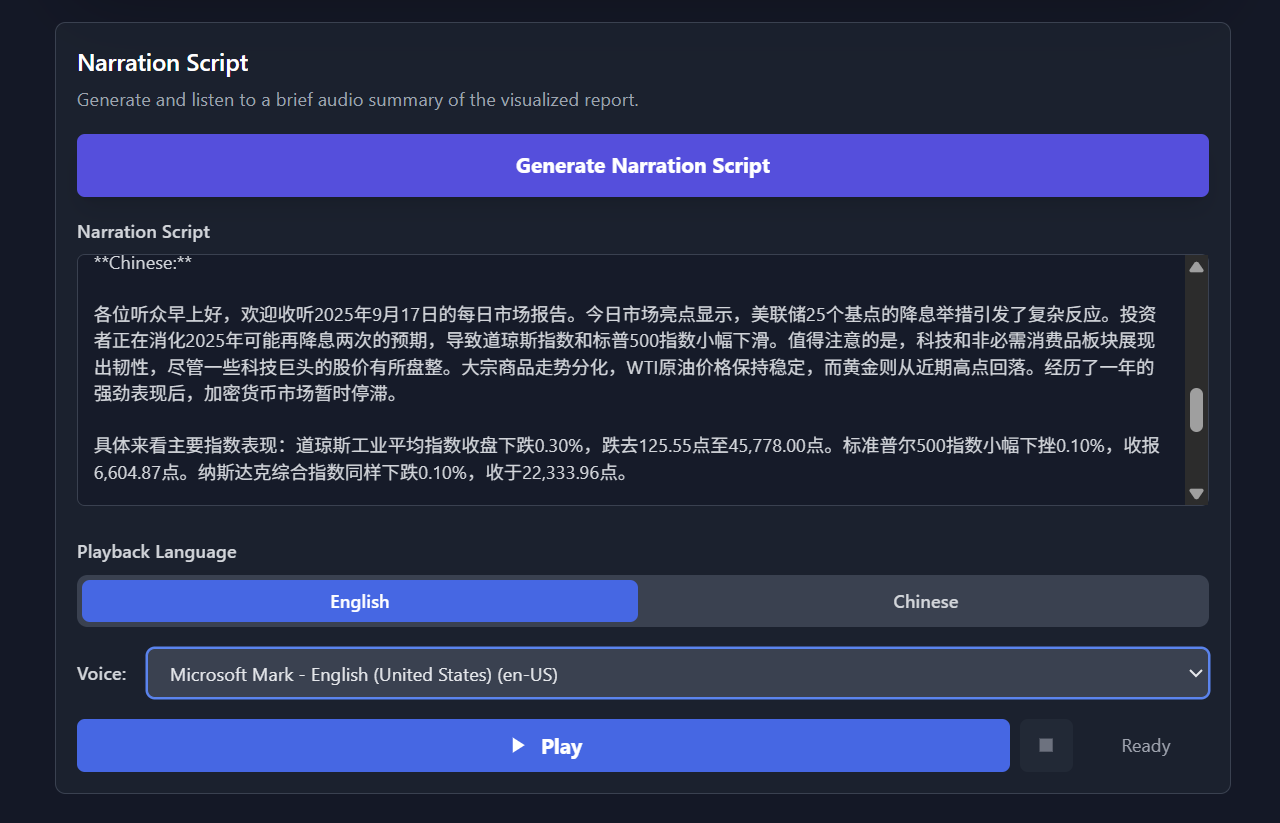

I originally built this tool to visualize and narrate automated daily reports and, eventually, to turn them into videos automatically. In Build, however, only about one and a half of those three steps could be completed: visualization and script generation worked, while narration was possible only with the operating system's built-in voices, with "interesting" results.

This is the most important reason for needing to scale beyond the Build tool.

The basic usage flow is as follows:

Input the report text;

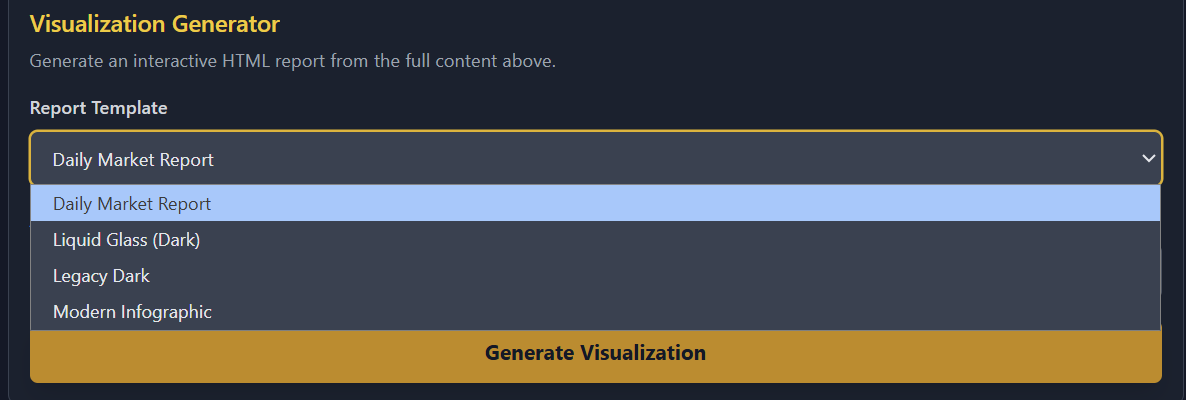

Select the style and model; I have configured various styles.

Review the generated results;

Generate the narration script and play it, though the voice sounds quite robotic;
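The four steps can be pictured as a small pipeline in which each step fills in one part of a shared job. This is a minimal sketch, not the tool's actual code: the names `ReportJob`, `visualize`, `write_script`, `narrate`, and `run_pipeline` are hypothetical, and each stub stands in for a model call (a Gemini model for the visual and the script, a TTS model or the system voice for narration).

```python
import html
from dataclasses import dataclass, field


@dataclass
class ReportJob:
    """One run of the tool: the user's inputs plus what each step produces."""
    report_text: str
    style: str
    model: str
    html: str = ""
    script: str = ""
    audio_segments: list[str] = field(default_factory=list)


def _lines(text: str) -> list[str]:
    # Non-empty, trimmed report lines.
    return [line.strip() for line in text.splitlines() if line.strip()]


def visualize(job: ReportJob) -> None:
    # Hypothetical stand-in for the model call that renders the report as HTML.
    items = "".join(f"<li>{html.escape(line)}</li>" for line in _lines(job.report_text))
    job.html = (
        f'<section data-style="{html.escape(job.style)}" '
        f'data-model="{html.escape(job.model)}"><ul>{items}</ul></section>'
    )


def write_script(job: ReportJob) -> None:
    # Hypothetical stand-in for the model call that writes the narration script.
    lines = [line.rstrip(".") for line in _lines(job.report_text)]
    job.script = " ".join(f"Item {i}: {line}." for i, line in enumerate(lines, start=1))


def narrate(job: ReportJob) -> None:
    # Hypothetical stand-in for TTS: split the script into sentence-sized chunks
    # that a speech engine would read in order (naive split on ".").
    job.audio_segments = [s.strip() + "." for s in job.script.split(".") if s.strip()]


def run_pipeline(report_text: str, style: str, model: str) -> ReportJob:
    job = ReportJob(report_text=report_text, style=style, model=model)
    for step in (visualize, write_script, narrate):
        step(job)
    return job


if __name__ == "__main__":
    job = run_pipeline("Revenue up 3%\nCosts flat", style="liquid-glass", model="gemini-2.5-flash")
    print(job.audio_segments)
```

Keeping each step as a separate function that writes one field makes it straightforward to replace a stub with a real model call, or to append a further step such as video generation.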

I have configured several styles, for example the Apple "liquid glass" style and the "Nano Banana" poster style. Because the tool is aimed mainly at daily reports, the content structure is fairly fixed, but that doesn't limit it much.
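Because the content structure of a daily report is fixed, one way to implement styles is as named bundles of visual instructions slotted into the same prompt. The sketch below illustrates that idea under assumptions: the preset keys, their descriptions, and the `build_prompt` helper are hypothetical, not the tool's actual configuration.

```python
# Hypothetical style presets: only the visual instructions differ per style.
STYLE_PRESETS: dict[str, str] = {
    "liquid-glass": "Apple-like 'liquid glass' look: translucent frosted panels, soft blur, rounded corners.",
    "nano-banana-poster": "'Nano Banana' poster look: saturated colors, large headline type, flat illustration.",
}

# Fixed structure shared by every style, since the input is always a daily report.
BASE_PROMPT = (
    "Turn the daily report below into a single-page visualization with a title, "
    "key metrics, and a short summary.\n"
    "Visual style: {style}\n\n"
    "Report:\n{report}"
)


def build_prompt(report: str, style_key: str) -> str:
    """Combine the fixed report structure with one style preset."""
    if style_key not in STYLE_PRESETS:
        raise ValueError(f"unknown style {style_key!r}; choose from {sorted(STYLE_PRESETS)}")
    # Values passed to format() are inserted literally, so braces in the report are safe.
    return BASE_PROMPT.format(style=STYLE_PRESETS[style_key], report=report.strip())


if __name__ == "__main__":
    print(build_prompt("Revenue up 3%", "liquid-glass"))
```

With this split, adding a new style means adding one dictionary entry, while the report structure stays in one place.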

Final thoughts: I am someone whose mind constantly overflows with "new ideas," far faster than my own skills and execution can keep up with. Six or seven years ago, I forced myself to stop generating them. The biggest change AI has brought me is that its capability and execution now far exceed my own, so my ideas and what can actually be built have finally begun to match.

In fact, I no longer see myself as a "person with many ideas"; these days I often feel like a "person with no ideas at all."