Actually, for a long time, the Vibe Coding tool I used most was Build in Google AI Studio. Strictly speaking, it's a pure application tool, not an IDE.

In practice, my usage went well beyond what the Google team envisioned: I connected a backend and loaded libraries from CDNs to get around the Sandbox limitations. For quite a while, it remained my first choice for building all kinds of tools. After all, many of our needs are relatively simple, and the core of any AI application essentially consists of only three parts: workflow, data, and prompts.
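As a rough illustration of that three-part split, here is a minimal Python sketch. Everything in it is hypothetical: `call_model` is a stand-in stub rather than any real SDK call, and the names `PROMPTS`, `DATA`, and `run_workflow` are invented for this example only.

```python
from typing import Callable

# Prompts: the instructions sent to the model (hypothetical templates).
PROMPTS = {
    "summarize": "Summarize the following note in one sentence:\n{text}",
    "tag": "Suggest one topic tag for this summary:\n{text}",
}

# Data: whatever the tool operates on (made-up sample records).
DATA = [
    {"id": 1, "text": "Meeting moved to Friday; agenda unchanged."},
    {"id": 2, "text": "Invoice 1042 is overdue and needs a reminder."},
]


def call_model(prompt: str) -> str:
    """Stand-in for a real model call; it only echoes the prompt's last line."""
    return "[model output for: " + prompt.splitlines()[-1] + "]"


def run_workflow(records, prompts, model: Callable[[str], str]):
    """Workflow: the fixed order of steps applied to each record."""
    results = []
    for record in records:
        # Step 1: summarize the raw record.
        summary = model(prompts["summarize"].format(text=record["text"]))
        # Step 2: feed the summary into the next prompt.
        tag = model(prompts["tag"].format(text=summary))
        results.append({"id": record["id"], "summary": summary, "tag": tag})
    return results


if __name__ == "__main__":
    for row in run_workflow(DATA, PROMPTS, call_model):
        print(row)
```

Swapping the stub for a real model client, or the list for a database query, would change one part without touching the other two, which is roughly why simple tools stay simple.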

However, in scenarios that require strong interactivity and close coordination with a desktop environment or one's own cloud environment, Build inevitably feels inadequate. This comes mainly from how Google has positioned it: because Build's framework is designed for small projects, you cannot add many third-party libraries or much functionality of your own.

As a result, returning to an IDE became my inevitable next step. Before going further, though, I would still suggest that most readers, especially those without extensive project development experience, choose a lightweight tool like Build for Vibe Coding rather than an IDE. Complexity often means a loss of control.

Thanks to a period of "unorthodox ventures," when I reopened the IDE, I could view it with an almost "blank slate" perspective.

Before this, my combination for over a year had been Cursor + Claude models. It changed to Cursor + the Claude Code plugin from the second half of last year, but the difference wasn't essential. During the same period, I would also use Gemini CLI, Claude Code, or OpenAI's Codex directly in the terminal, and use Codex (as a plugin) within Cursor.

Over the past few months, at the model level, we've seen upgrades like Gemini-3-Pro, GPT-5.2, and Claude-4.5. On the tool side, options like Google's Antigravity and Claude Cowork have emerged.

All of these, whether models, tools, or Agents, have without a doubt significantly improved the Vibe Coding experience.

However, if we return to the simplest question, namely which tool I would pick if I could only choose one, my answer after a period of use is unequivocal: Antigravity.

Perhaps many will ask why I can't simply use several at once. I think anything goes during testing, but for real "production," one must settle on the smallest toolset and the simplest workflow, and hone them through use.

This is the core reason why I would only choose Antigravity.

Correspondingly, there are several reasons at the technical and experiential levels, listed briefly below:

- I've always believed that at this stage of model, tool, and Agent development, the final result depends more and more on "human-machine synergy." In many of my use cases, comprehensive model capability matters far more than whether the model can solve one specific problem on its own (for most specific problems, I can rely on my experience to prompt the model). For that, Gemini is the best choice.

- Switching from Cursor to Antigravity was a bit jarring at first. Antigravity is derived from Windsurf, so its settings differ from Cursor's, and its interaction model emphasizes parallel multi-Agent work, which returns a significantly larger volume of information. Both take some time to adjust to.

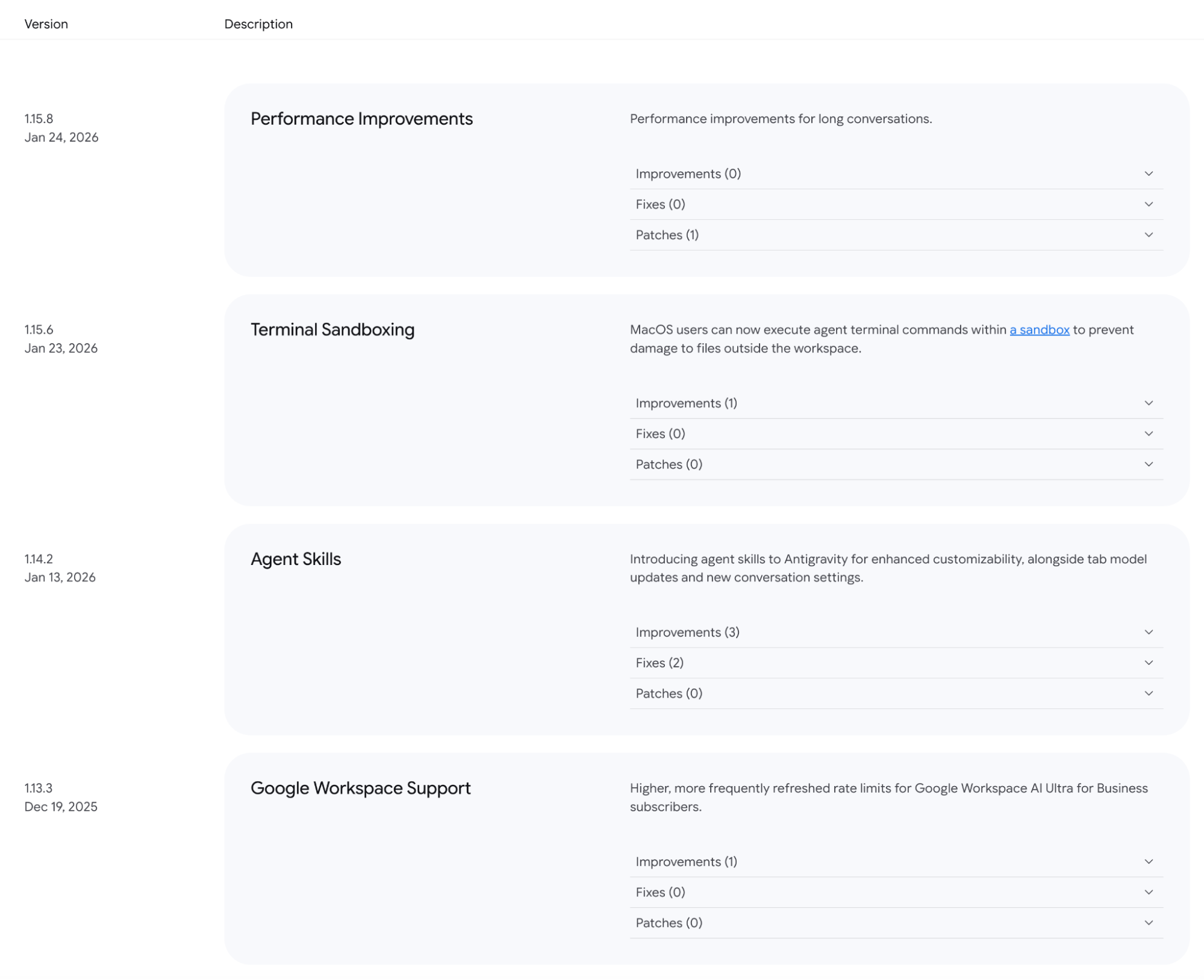

- In the two-plus months since Antigravity's release, it has received many major functional updates. The screenshot above shows just part of the latest changelog. Meanwhile, the way it presents multi-Agent interaction information has also been significantly optimized.

- This point is key: Antigravity is extremely capable in testing and debugging. While Claude Code has corresponding features, seeing Antigravity open a browser, simulate user clicks for testing, and locate errors after code completion feels much better.

- Antigravity is effectively Claude Code and Cowork combined. When Gemini CLI first came out, I said such tools aren't just for Vibe Coding, but for Vibe Working. Using the ability to run searches, operate on local files, and call models for generation solely to write code is a waste. In fact, although I've written two articles on the convenience of Cowork, when I let Antigravity implement the same functions, it performs better, because the Gemini-3 model is stronger and more comprehensive. Of course, Cowork's advantage is its simplicity; Antigravity is still a bit complex for users who don't know much about operating systems.

- Ecosystem: I've also said that, apart from Gemini, models lack user stickiness. The reason is simple: real production isn't as easy as "ask the model a question, and it delivers the finished product." It involves combining tools, and it involves cost. When Antigravity finally added Workspace support in the December update and formally folded its account system into Google One, the production chain became complete. This explains the different strategies of Google and its competitors. OpenAI and Anthropic both need to keep creating new concepts and tools, such as Skills or Cowork, which are essentially the same thing in different packaging, while also keeping their models competitive. Google only needs to say that Workspace has Gemini support, NotebookLM is integrated into the Gemini App, Google Drive is fully connected, and Antigravity supports Workspace. In the race to put frontier models to practical use, where "speed is the only core competitiveness," Google's advantage continues to expand.

- You never know if OpenAI or Anthropic will create a new concept or tool while abandoning previous achievements, forcing users to readapt. But you can be confident that in Gemini's toolkit, everything that should be there will eventually be there.

Actually, by now, most people can no longer distinguish whether it's the underlying model capability or Agent optimization that makes us feel the AI boundaries are "getting larger." For production, distinguishing these two is very important: the former determines the boundary of reliability, while the latter determines the role and direction of human effort.

In fact, when it comes to solving a specific problem, the differences between GPT, Gemini, and Claude may be small. They differ more in the breadth and depth of their knowledge (data) and in the capabilities they open up to users, and on both counts Gemini wins by a wide margin.

In terms of Agent optimization and synergy with training data in the coding field, Claude Code remains relatively ahead.

The baton is ultimately in human hands. My reason for choosing Antigravity is the same as why I've used Gemini-1.5 to assist my work since its release: it provides far more possibilities and potential than its competitors.

Whether it's Gemini or Antigravity, it has always been the one that coordinates better with humans.