I am starting a series of short commentaries on recent events in the AI field. To begin with, I plan to publish about three times every two weeks, and major news will be covered as soon as it breaks.

- CCTV launches the first AIGC animation "Qianqiu Shi Song" (Odes of a Thousand Autumns).

I have always believed that text-to-image and text-to-video are primarily B2B tools (though if you count independent content creators as consumers, there is a consumer side too). This implies that models must be deeply integrated with the content being produced. The model is a tool and one stage of content production, not a magic wand that turns everyone into an artist and replaces professionals. Content that anyone can come by effortlessly is almost invariably worthless.

CCTV's complete animation is a clear demonstration of this direction. Judging from the video, the result slightly exceeded my expectations. Technology is always a tool; the more advanced the technology, the higher the demands on imagination and aesthetics.

To sum it up: AIGC itself cannot be the "selling point." Only by using AIGC to achieve what was previously "impossible" will there be paying customers.

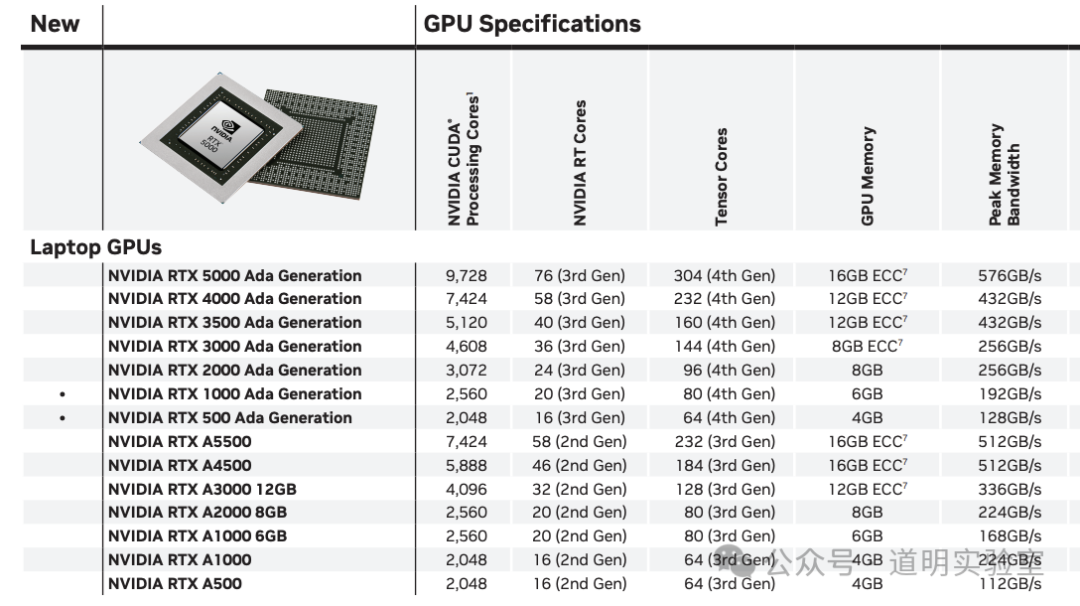

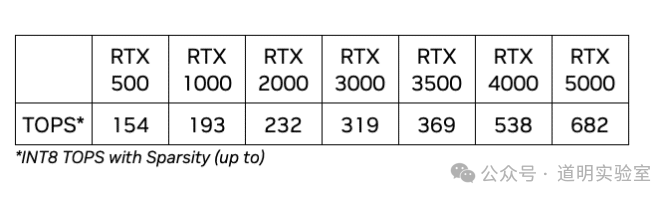

- NVIDIA launches RTX 500 and 1000 Ada GPUs.

These two graphics cards are primarily targeted at the laptop market and highlight "AI PC" features. Memory is 4 GB and 6 GB, INT8 compute is 154 and 193 TOPS, and power consumption is 35-60 W and 35-140 W, respectively.

While I don't believe AI PCs will become ubiquitous that quickly, the brutal competition among chipmakers has already begun, and the laptop market is their first major battlefield. The core issue is the balance between inference capability and power consumption. With Intel, AMD, and Qualcomm all settling on SoCs as their solution, NVIDIA's long-term plan is likely to launch its own SoC for laptops based on the Jetson product line. However, Windows on Arm still needs significant upgrades, and the exclusive agreement between Microsoft and Qualcomm has not yet expired (it is rumored to expire this year, though no specific date is set), so releasing two entry-level professional cards is a very swift response from NVIDIA. In particular, the RTX 500 draws only 35-60 W, yet its inference capability significantly outperforms Intel and AMD SoCs. Paired with a CPU, total laptop power consumption is expected to be slightly over 100 W, which is quite attractive to many users.
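To make the inference-versus-power trade-off concrete, here is a rough back-of-the-envelope sketch in Python using only the figures quoted above. It rests on a strong assumption of my own: that the quoted peak INT8 TOPS figure could be divided by either end of the power range. In practice throughput will drop at lower power limits, so treat the two numbers per card as loose bounds, not measurements. The names `CARDS` and `tops_per_watt` are made up for illustration.

```python
# Specs as quoted in the text: peak INT8 TOPS and configurable power range (watts).
CARDS = {
    "RTX 500 Ada": {"int8_tops": 154, "power_w": (35, 60)},
    "RTX 1000 Ada": {"int8_tops": 193, "power_w": (35, 140)},
}


def tops_per_watt(int8_tops, power_w):
    """Return (pessimistic, optimistic) TOPS-per-watt bounds.

    Assumption (not from the source): the peak TOPS figure is divided by the
    highest and the lowest power limit to get a loose range.
    """
    low_w, high_w = power_w
    return int8_tops / high_w, int8_tops / low_w


for name, spec in CARDS.items():
    worst, best = tops_per_watt(spec["int8_tops"], spec["power_w"])
    print(f"{name}: roughly {worst:.2f} to {best:.2f} INT8 TOPS per watt")
```

Even under this crude assumption, the point of the paragraph shows up: the smaller card keeps a comparatively narrow and favorable efficiency range, which is what matters in a thin laptop.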

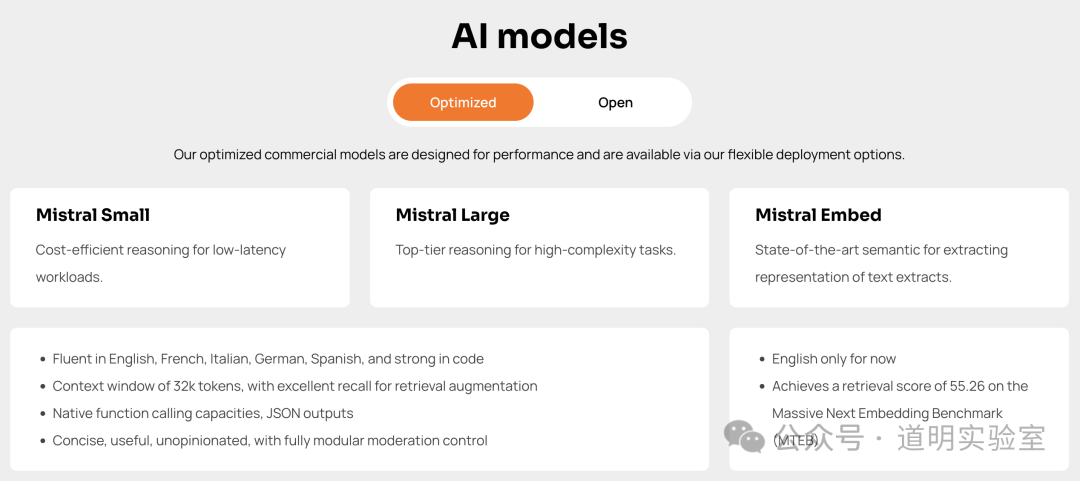

- Mistral AI officially releases its next-generation model.

A few days ago, Mistral quietly launched a model called "next." I tried it, and it indeed shows strong capabilities in mathematics and programming.

Last night, the company officially announced the new-generation model on its website. There is currently no link to download the model weights. The company also announced a partnership with Microsoft Azure, so whether the weights will be made available for download in the future remains uncertain.

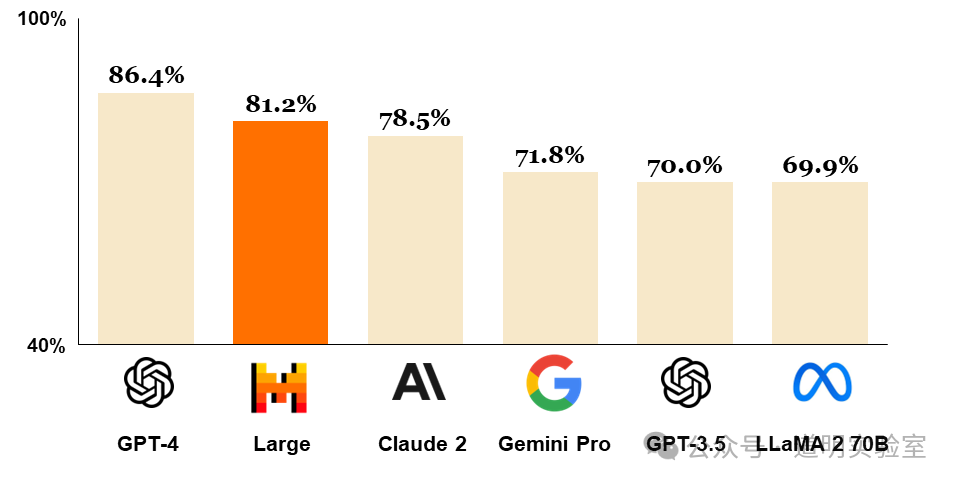

Judging by the MMLU scores published on Mistral's website, it is indeed currently second only to GPT-4. This year's model competition will mainly revolve around these players. Multimodality is a vital aspect of model evolution, but on the language model side alone, players are focusing on planning and search capabilities (that is, reasoning performance). Furthermore, in the debate between long context windows (such as Gemini 1.5 Pro's 1 million tokens, and the imminent 10 million tokens) and RAG, I am largely in the long-context camp. This may also mean that RAG, currently a major direction for AI startups, will slowly become "cannon fodder" in the near future.

- OpenAI to discontinue the Plugin feature.

Objectively speaking, the GPT Store can now completely replace the plugin feature, so the shutdown comes as no surprise. I originally wanted to write a longer piece on this, but some topics on social media are best left at this level. The move reflects several challenges OpenAI faces:

Relationship with developers: When plugins first launched, some developers earned significant income through plugins like "MyPDF." Then OpenAI added native file uploads. OpenAI was bound to do this eventually, but the way it was handled was somewhat ungraceful. Even as model capabilities improve, OpenAI cannot solve every "last mile" problem itself. To build an AI ecosystem, essentially becoming an AI operating system, it must draw clear boundaries with developers from the start so that they have a sense of security.

Positioning relative to Microsoft during commercialization: Unless Microsoft bets entirely on Azure cloud, the competition and conflict between an increasingly commercialized OpenAI and Microsoft's extensive product line will only intensify. A public outbreak of these conflicts in 2024 is far from unlikely.

Finally, I should mention that I now wear the Vision Pro for almost all my writing. My daily usage, including watching series, totals about three to four hours, with individual sessions kept to two hours or less, sometimes one.

Once you experience the feeling of a massive screen, there is no going back.

Putting on the headset makes the experience more immersive, and the productivity boost is significant.

You can't scan QR codes while wearing it yet, so as for the future of that scenario... hehe.

Browsing the App Store daily reveals new and fun things. They aren't mature yet, but they are sufficiently inspiring. Some pain points that I've struggled with as a programmer, photographer, or data analyst now seem solvable through custom-developed apps.

Every excellent product I have ever used shares this trait: once you accept its flaws, the remaining features become indispensable advantages.