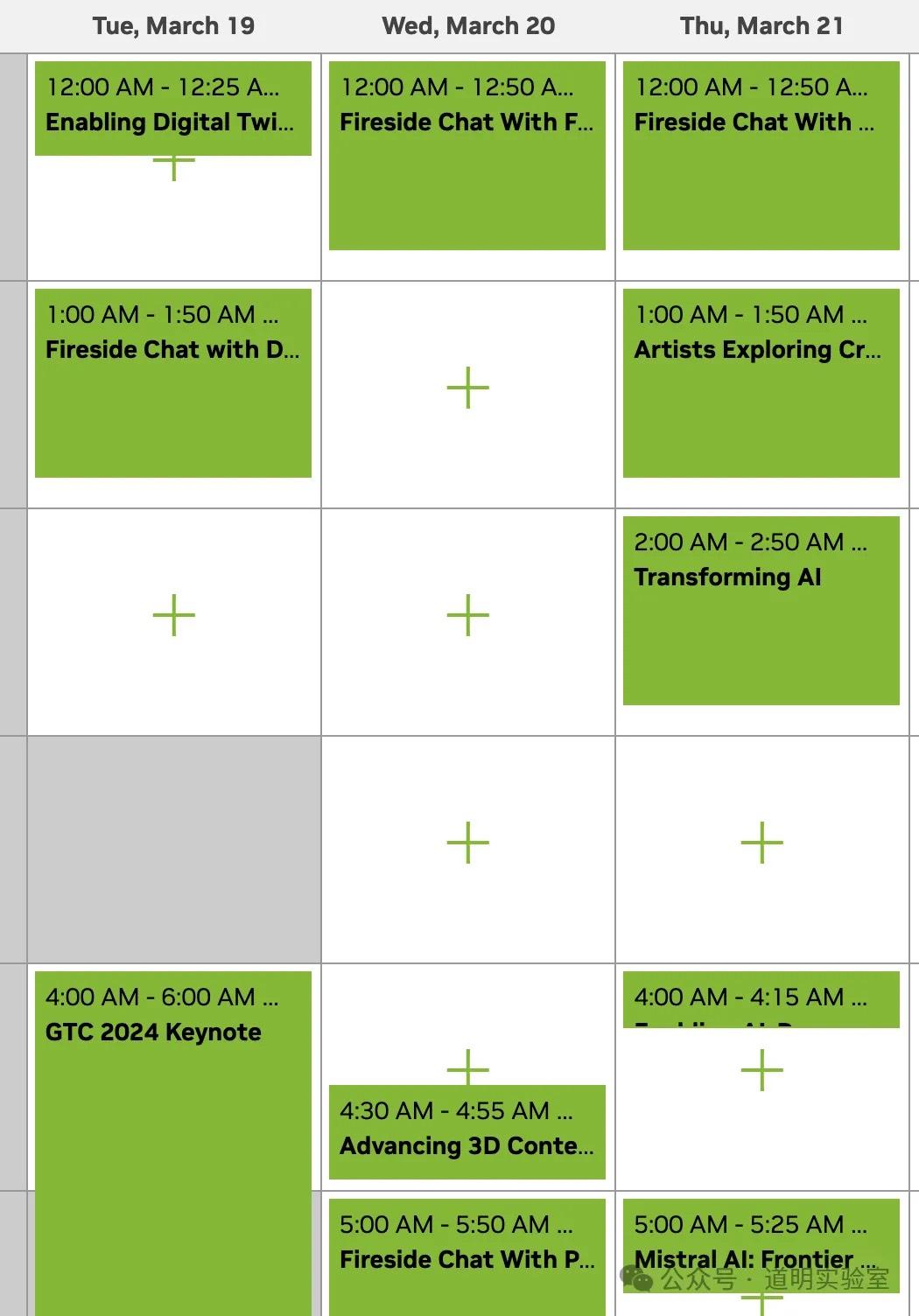

I spent half a day organizing my schedule for online participation in the NVIDIA GTC 2024 conference.

Regrettably, many sessions of interest have time conflicts, so I had to make some tough choices. I’ve listed some screenshots from the scheduling process below. (If you find this tedious, feel free to skip this section; the second part following the screenshots is the real "preview" from my perspective.)

The times shown are already converted to Beijing time, so it's essentially like staying up for late-night football matches.

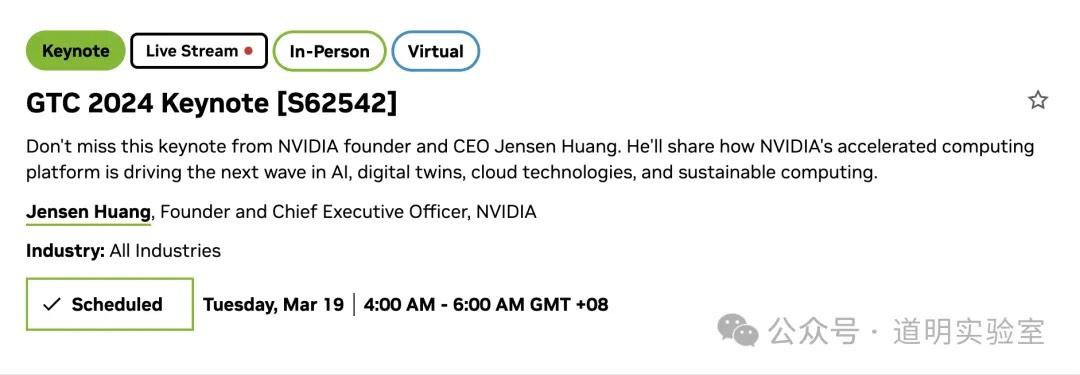

Jensen Huang’s Keynote is naturally the main event; everyone wants to hear what he has to say. However, objectively speaking, last year the hype for ChatGPT was just beginning, and Jensen's content was fresh to many. This year, with everyone following NVIDIA’s every move daily, that sense of "novelty" has definitely faded significantly.

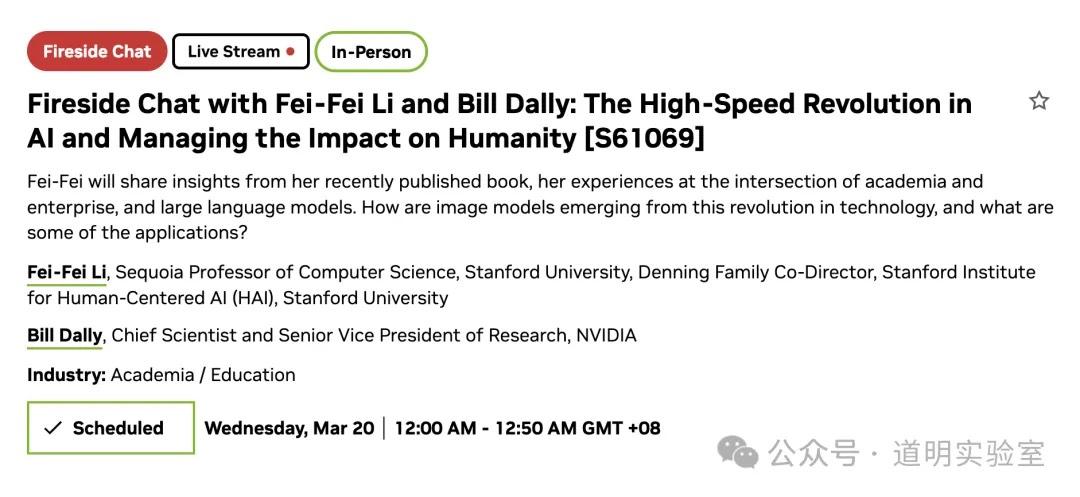

I believe Fei-Fei Li’s "Fireside Chat" ranks second. It's safe to say that more than half of the prominent figures in today’s vision model field originated from her lab.

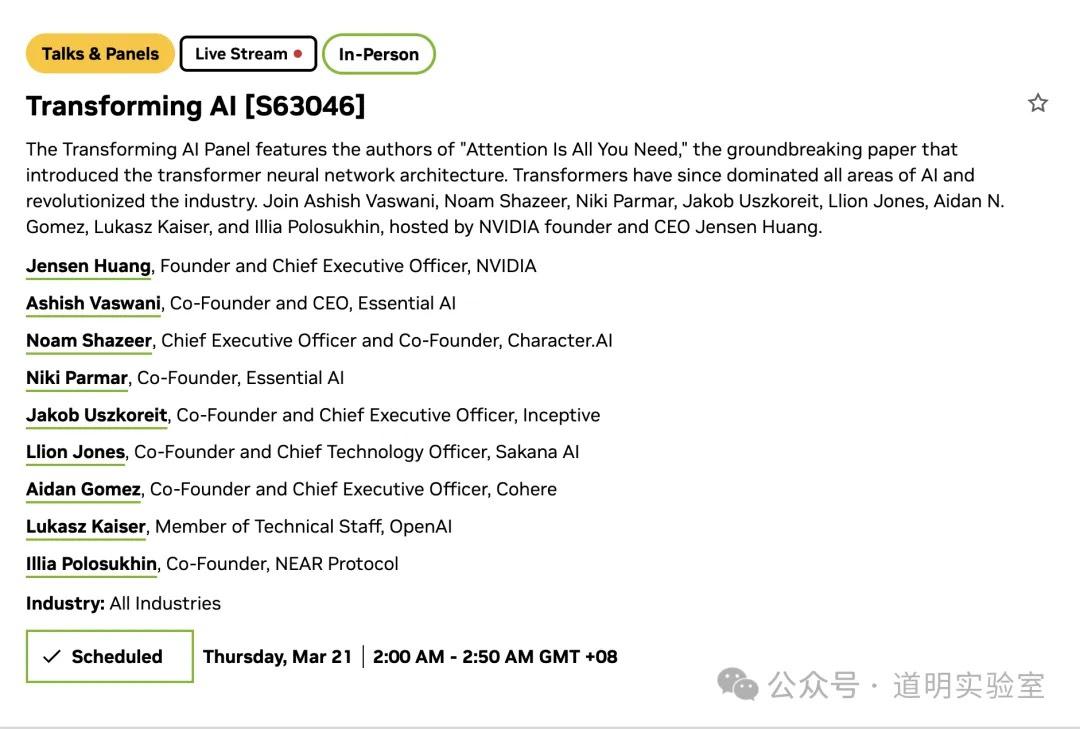

Of course, large roundtable discussions like this are also not to be missed.

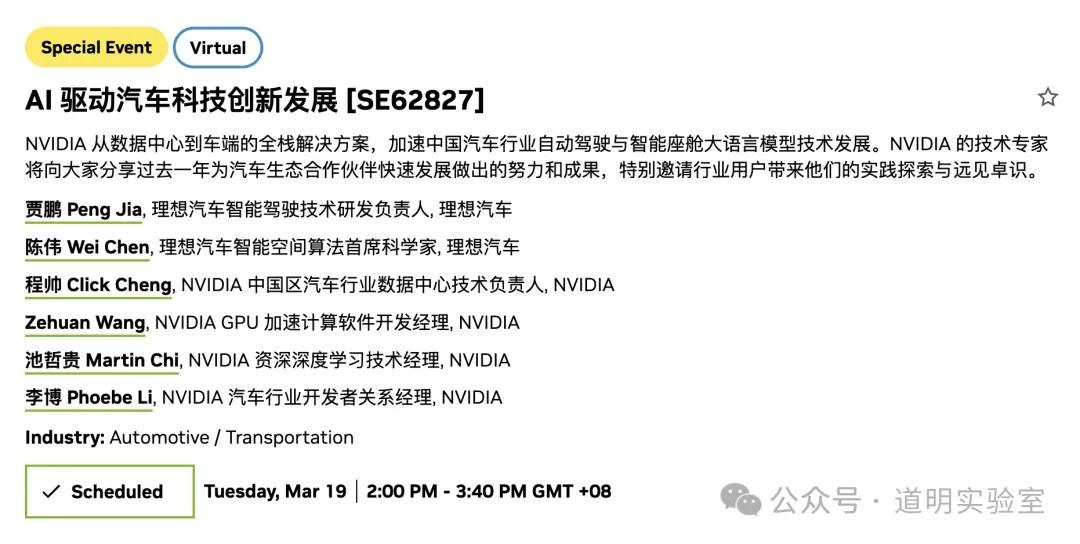

Smart driving actually has the highest relevance to us, and the timing is convenient.

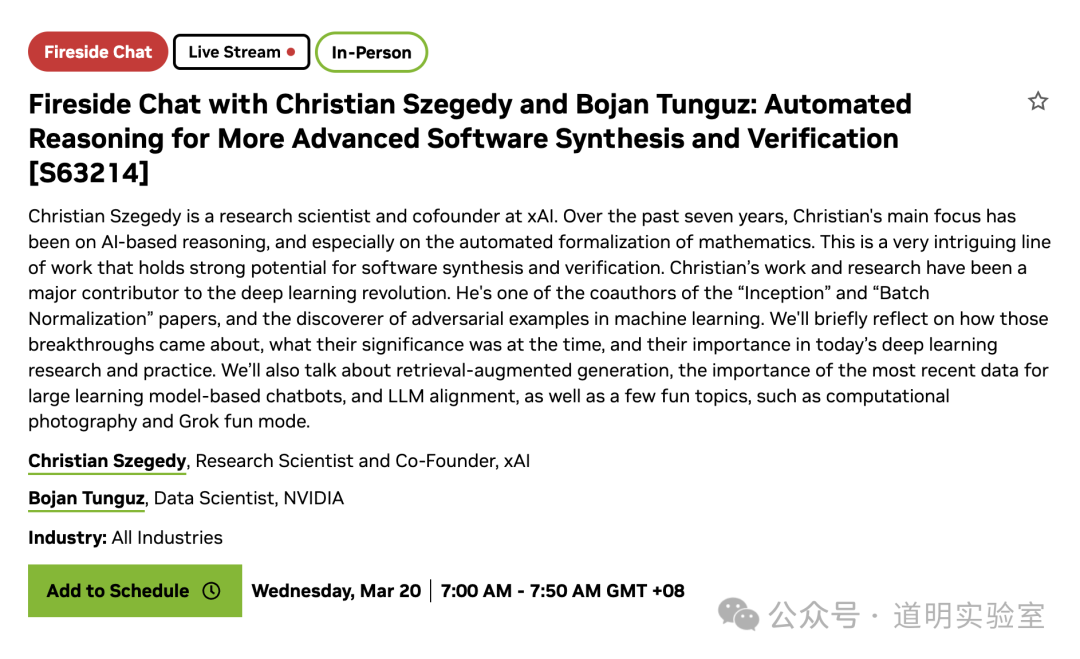

Since Grok is going open-source, then...

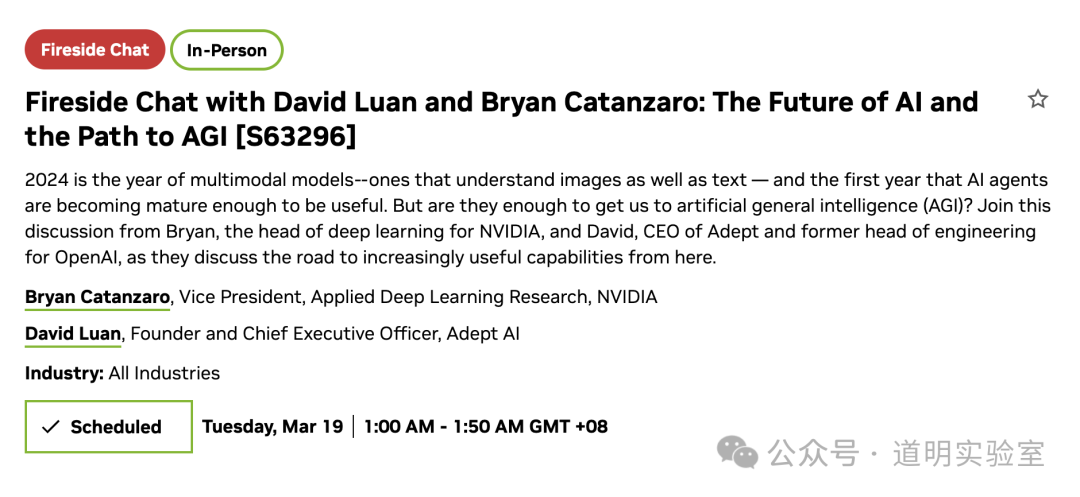

Path to AGI.

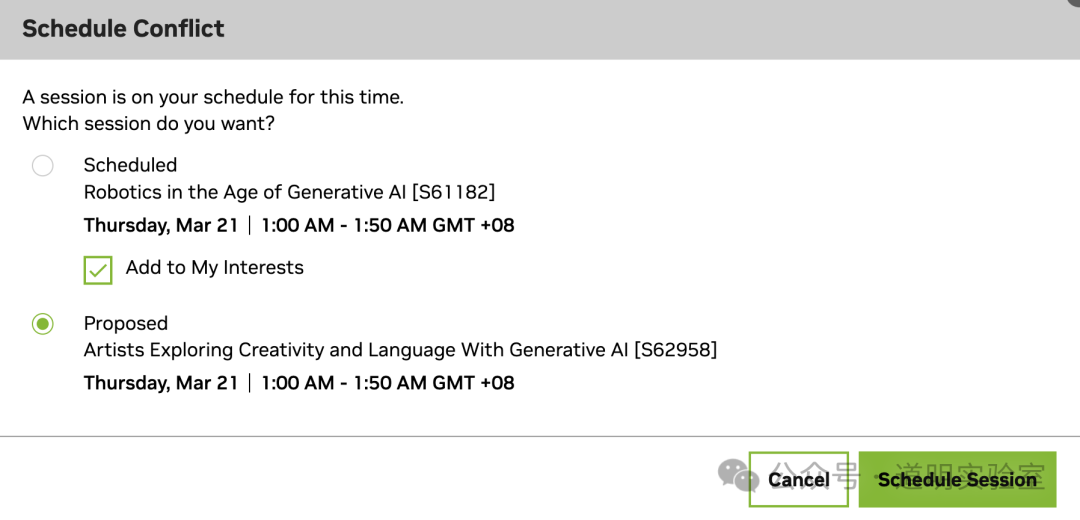

For the first conflict, I initially chose robotics, but art is more appealing to me, haha.

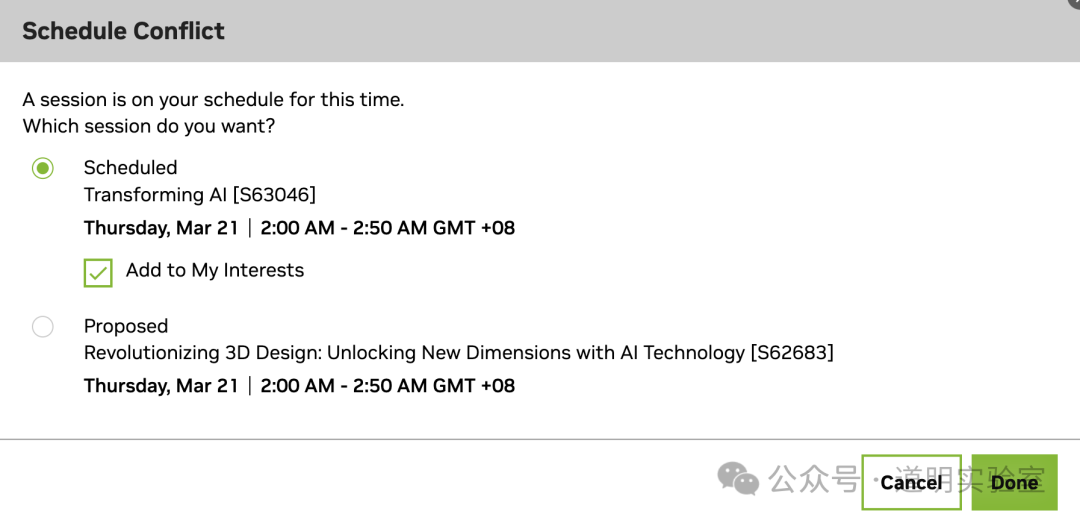

However, for the sake of the roundtable discussion, I had to give up the sessions related to "3D."

Those were some of my choices; below are my key focal points.

Preview: Hardware Section - B100?

It was at GTC 2022 (also in March) that NVIDIA first revealed the Hopper architecture and the H100, which started shipping in October of that year.

Two full years have passed, and the release of the Blackwell architecture and the disclosure of B100 information at this GTC have become almost a consensus market expectation. Therefore, conservatively, the probability of B100 information being disclosed is at least 95%.

As for the technical specifications, although much can be gleaned from public information, this is a public article, so I won't quote them here.

Other hardware? Some is expected. Honestly, anything released will be a significant improvement, but after a year of "frenzy," everyone has become somewhat desensitized, unless it's something never seen before.

Preview: Software Ecosystem

Beyond large-model pre-training and the consumer (to-C) market, NVIDIA has spent the past year building several ecosystems: smart driving and robot training/deployment based on Jetson edge chips; film and game production based on Omniverse; and, in the last six months, inference and model fine-tuning for individual users (Chat with RTX, Avatar, AI Workbench, etc.). Objectively speaking, thanks to the CUDA ecosystem, NVIDIA's only real rival in the on-device inference market is Apple. In the competition for AI desktop systems, however, Intel and AMD, which are NVIDIA's competitors in the server GPU market, actually benefit from NVIDIA's increased share. It's quite peculiar. Of course, the market for AI desktop systems is huge, and the relationship between NVIDIA and Apple is not a zero-sum game. (Recall that when the two split completely, Apple turned first to AMD graphics and then to its own Apple Silicon; major companies judge trends far better than we spectators do.)

In fact, NVIDIA's software ecosystem strategy is somewhat "chaotic," but with CUDA behind it, the company can afford to be a bit willful, at least until Apple's Vision Pro fully matures or the recently rumored "four M2 Ultra chips combined into one" product (nearly finished before the Apple Car team disbanded) is launched. Only products like these, combined with the Apple ecosystem, could make it possible to break away from CUDA entirely.

Preview: World Models

Following the launch of Sora, NVIDIA announced the formation of an "Embodied Intelligence" team. Objectively, NVIDIA is in the first tier of research on smart driving and robot algorithms. Put another way, so many research institutions cooperate with NVIDIA that their collective results place it in that top tier.

From a purely technical perspective, the common denominator of NVIDIA's achievements in smart driving, robotics, 3D reconstruction, and AI agents could be called a "World Model," which aligns with the original intentions and underlying logic of OpenAI and Google DeepMind. However, as with its software ecosystem strategy, NVIDIA's research outputs are often semi-finished products, and the company currently lacks sufficient staffing and productization capability.

Nevertheless, we can still gather plenty of information about "World Models" from the GTC sessions: the demand for computing power, the demand for data, and many interesting experiments.

Summary

Market sentiment is much like the sketch "Father's Funeral" performed by Toudou and Luyan on the Annual Comedy Island: only by relying on bubbles that look increasingly "absurd" can the audience be continuously satisfied.

It's another year of GTC, but unlike last year, everything has been fully anticipated. Unless a "completely new" thing suddenly appears, nothing seems capable of stimulating the market further.

This is true even though the progress of AI is moving upward at a nearly 90-degree slope.

Technically, that's the situation; the rest is up to the market. NVIDIA GTC 2024 officially begins in the early hours of March 19 (Beijing time). With 1,045 sessions in various formats (talks, discussions, and training), it is the largest and most comprehensive conference in the AI field. It concerns the future of technology, which is actually much more important than the market itself. If you can quiet your mind and enjoy it slowly, it will be truly beautiful.