If you clicked in because of the title, please forgive me for being a bit clickbaity. But it is also my conclusion. In the past month, I have generated no fewer than 100 "Deep Research" reports across four similar tools (OpenAI, Gemini, Perplexity, Grok-3). I am convinced that the popularity of such applications has actually increased the value of excellent analysts. But another concern has arisen: these applications are being used in ways that "destroy" the investment research system.

In fact, this conclusion has been on my mind for some time, but until today I hadn't found a good entry point for illustrating it concretely.

Here is what happened: I came across the following article regarding Wan Weigang's experience using "Deep Research."

Deep Research was used here.

Wan Weigang, Public Account: Logical Thinking (Luoji Siwei).

DeepSeek + Huawei: Can they surpass NVIDIA and OpenAI?

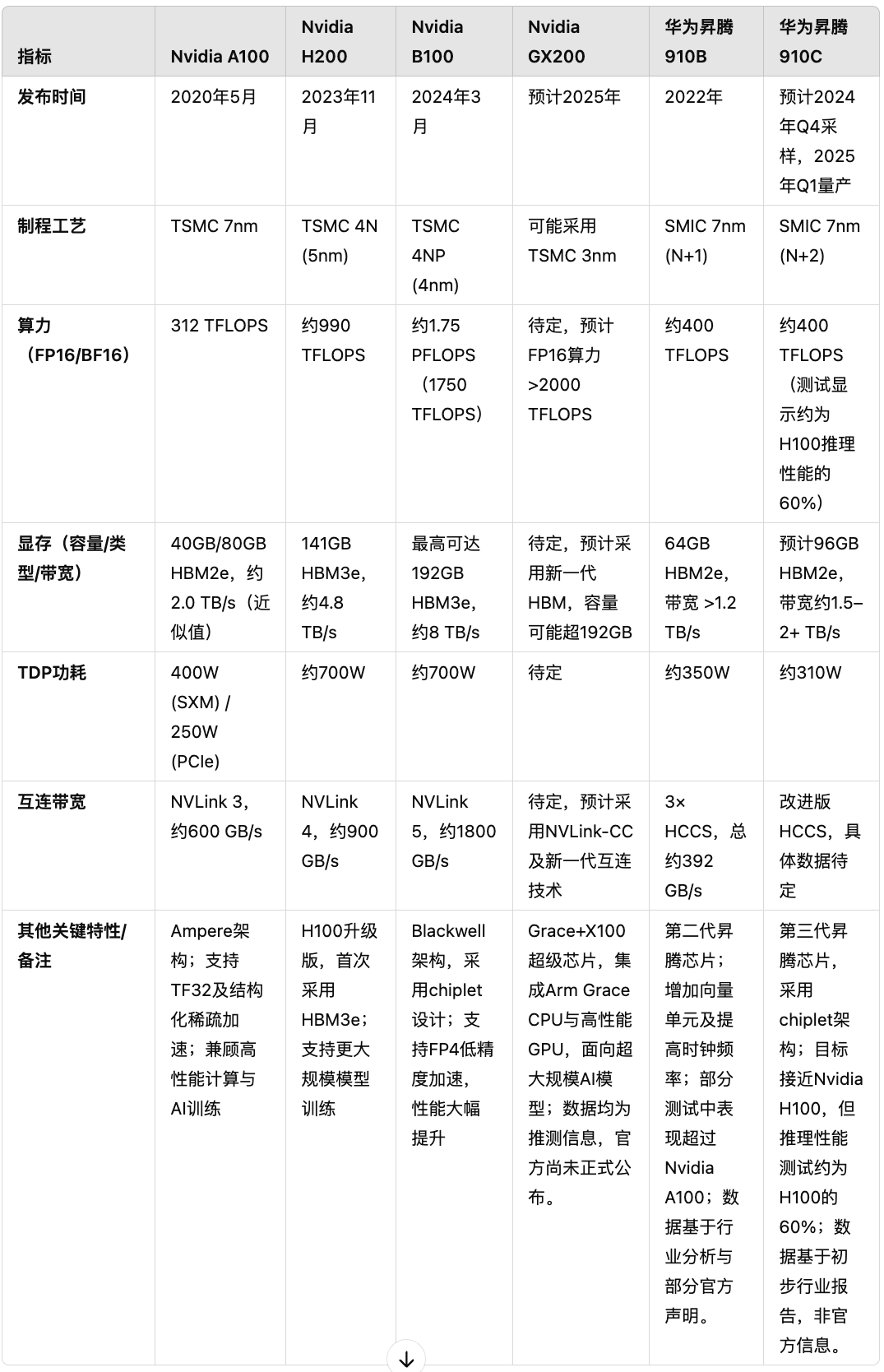

When I saw the following table in the article, generated by OpenAI's Deep Research, I immediately spotted some errors.

So I sent this image to my teammates, and how quickly they responded pleasantly surprised me.

This example is not meant to criticize "Dedao" or Wan Weigang. After all, he is not a front-line analyst, so he cannot be expected to "see at a glance that something is wrong" in very detailed industry specifics. Still, accepting the output wholesale without any fact-checking is open to question.

It is precisely this difference in rapid discernment that will keep products like Deep Research from replacing top-tier analysts for a long time. For those analysts, this discernment has become almost an instinctive reaction, processed rapidly through "System 1" (fast, intuitive thinking).

However, Deep Research can become a highly efficient assistant for excellent analysts if used properly.

This is the first aspect.

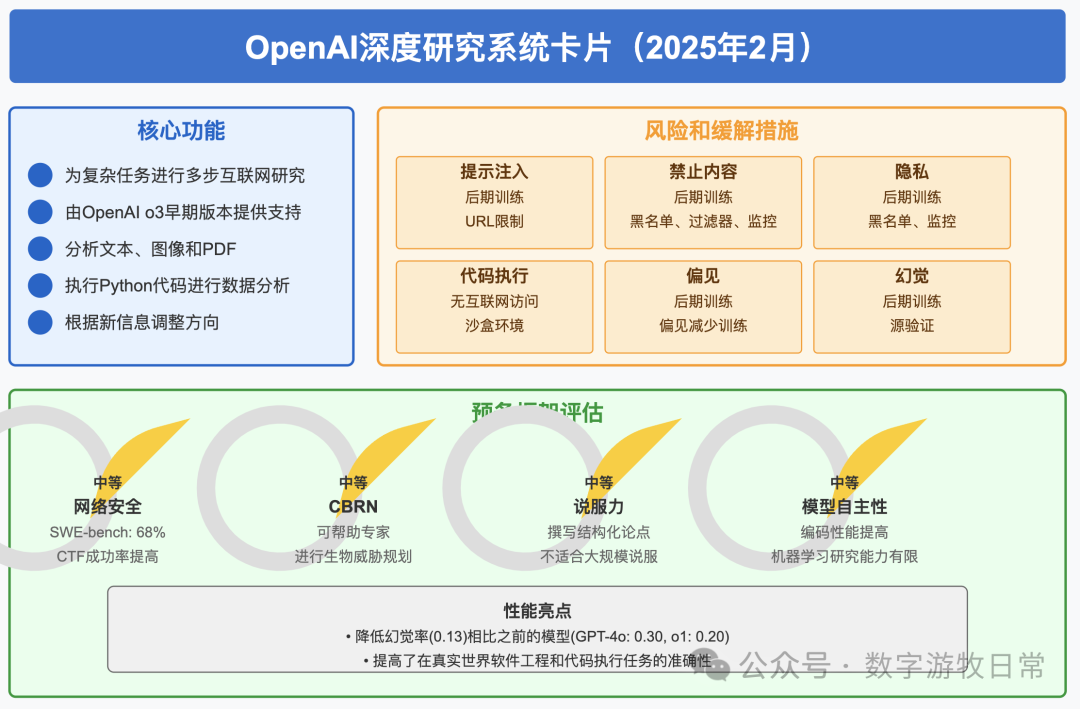

Look closely at the potential errors in the example above: if one were to fact-check Deep Research's results, I am almost certain those numbers and descriptions could be found in the information sources it cited. OpenAI just released the Deep Research system card, which I have summarized below:

One very important point is the extremely low hallucination rate: 0.13. (An aside: when the model was helping me generate this slide, it wrote 13%. Since I had already read the original report, I knew this figure was wrong, and I knew it was not a "hallucination" by the model itself. So I told the model not to treat it as a percentage and to fix it. It changed it to 0.13%, which matched my first instinct. But I went back and checked the original report again: OpenAI only stated it as a metric without units. So I told the model once more to output just the number: 0.13.)

Indeed, among all the numbers I have fact-checked across dozens of reports generated by OpenAI Deep Research, I have not found a single case of "hallucination," which is a clear difference from Perplexity and Grok-3.

However, the absence of hallucinations doesn't mean the numbers are correct: the model faithfully retrieves numbers from its information sources, but those sources themselves might be wrong. The example above is more likely a case of this.
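The distinction between "faithful to the source" and "correct" can be made concrete with a small sketch. Everything here is hypothetical: the `Claim` fields, the `classify` helper, and the numbers are made up for illustration and are not taken from any report or from OpenAI's system card.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    reported: float   # figure as it appears in the generated report
    in_source: float  # figure as it appears in the cited source
    verified: float   # figure confirmed independently (hypothetical ground truth)


def classify(claim: Claim, tol: float = 1e-9) -> str:
    """Separate 'the model made it up' from 'the source was wrong'."""
    faithful = abs(claim.reported - claim.in_source) <= tol
    correct = abs(claim.reported - claim.verified) <= tol
    if not faithful:
        return "hallucination"       # report disagrees with its own source
    if not correct:
        return "faithful but wrong"  # report copies an incorrect source
    return "faithful and correct"


# Illustrative numbers only.
print(classify(Claim(reported=100.0, in_source=100.0, verified=120.0)))
print(classify(Claim(reported=90.0, in_source=100.0, verified=100.0)))
print(classify(Claim(reported=100.0, in_source=100.0, verified=100.0)))
```

A low hallucination rate only bounds the first category. The second category can only be caught by someone who checks the source itself, which is exactly the analyst's job.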

Of course, within the industry it is quite common for three or four out of every ten numbers in the public specifications of "newly released new energy vehicles" to be wrong.

Fact-checking awareness and capability vary from person to person. But as tools like Deep Research proliferate rapidly, they will cause "analysts" who already lack that awareness and capability to lose this most important and fundamental skill completely.

So, back to the title: I was indeed being "clickbaity," because the rate of "errors and hallucinations" among human analysts is often even higher than that of the models. "Junk information" has already flooded the market; it is just that it is now polluting the models' information sources and coming back like a boomerang.

"Shoddy workmanship" is one reason.

Another important reason is that increasingly convenient tools effectively flatten the "information gap." The output of Deep Research is close to a "consensus" view, and in an efficient market a consensus no longer provides incremental information.

As a result, truly valuable information may exist only in the minds of a few people or in personal "private knowledge bases," and will flow into the "system" less and less often. This is because, for a long time to come, external tools will be more useful than internal ones.

If the "investment research system" refers to specific individuals, it should still exist. If it refers to an organization, I am not optimistic. It's not that AI is getting better, but that our own actions destroyed its foundations long ago.