Last night, Anthropic (the maker of Claude) briefly published an economic index report on its official website. I noticed that many self-media accounts reposted it uncritically, obvious and sensitive geopolitical errors included. It seems that even handling publicly released content has a threshold, and not only a technical one: it also takes long-term professional training.

I spent most of last night on video production. Even so, besides the obvious geopolitical errors, I noticed something odd: the article was dated September 16, at least 4 hours in the future at the time. When I checked Anthropic's website just now, the article had disappeared, so I suspect it was published prematurely by accident. Fortunately, I had already saved the full text and illustrations.

To be fair, although the report draws on a lot of data, its findings are mostly "common-sense" observations.

TL;DR: The conclusions are as follows:

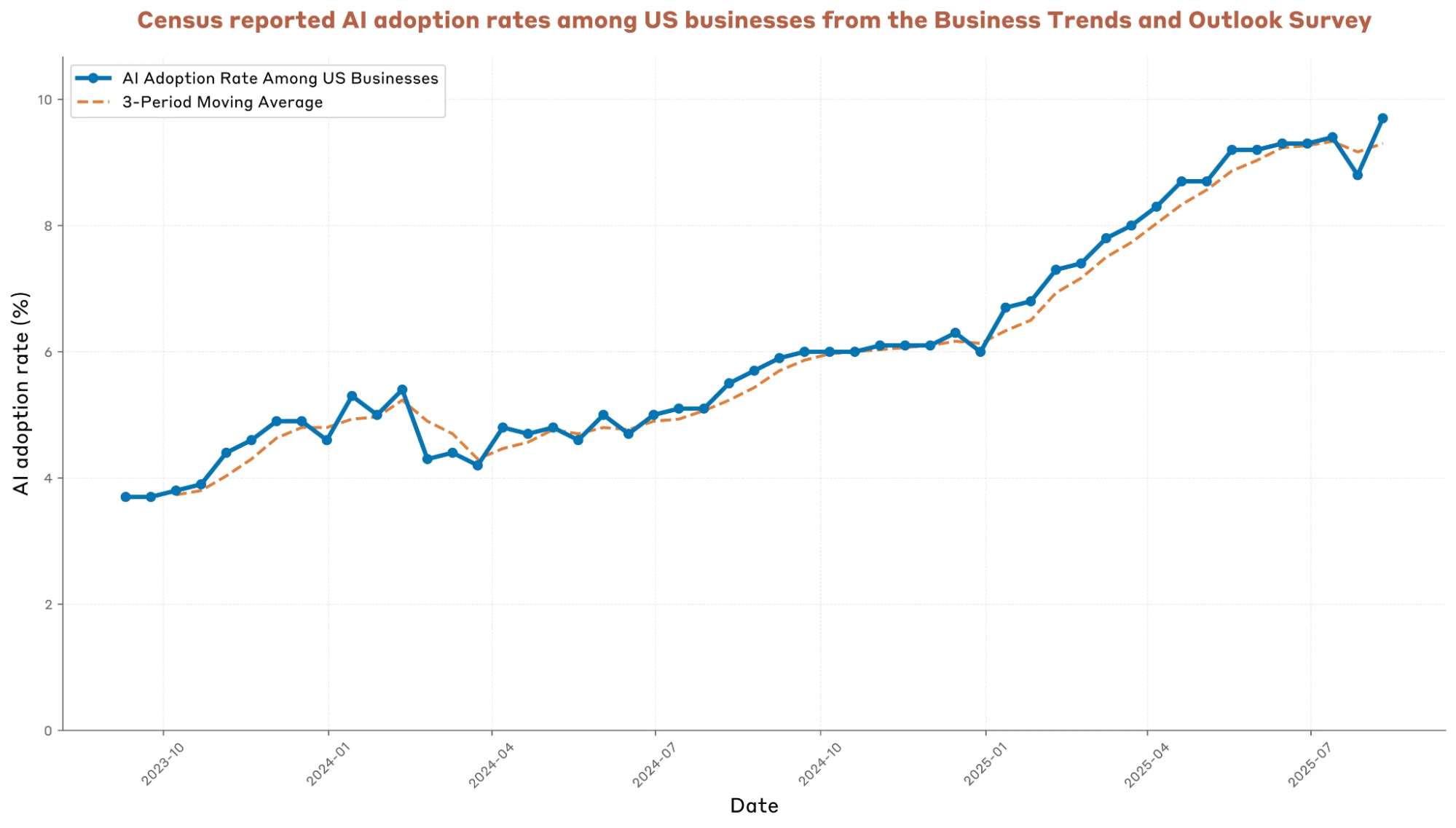

- According to the survey, AI adoption among the enterprises surveyed has grown by more than 50% this year, yet the absolute share is still only 10%, which is actually quite low.

- The most publicized finding is that usage is higher in wealthier countries and regions. But isn't that a rather common-sense observation?

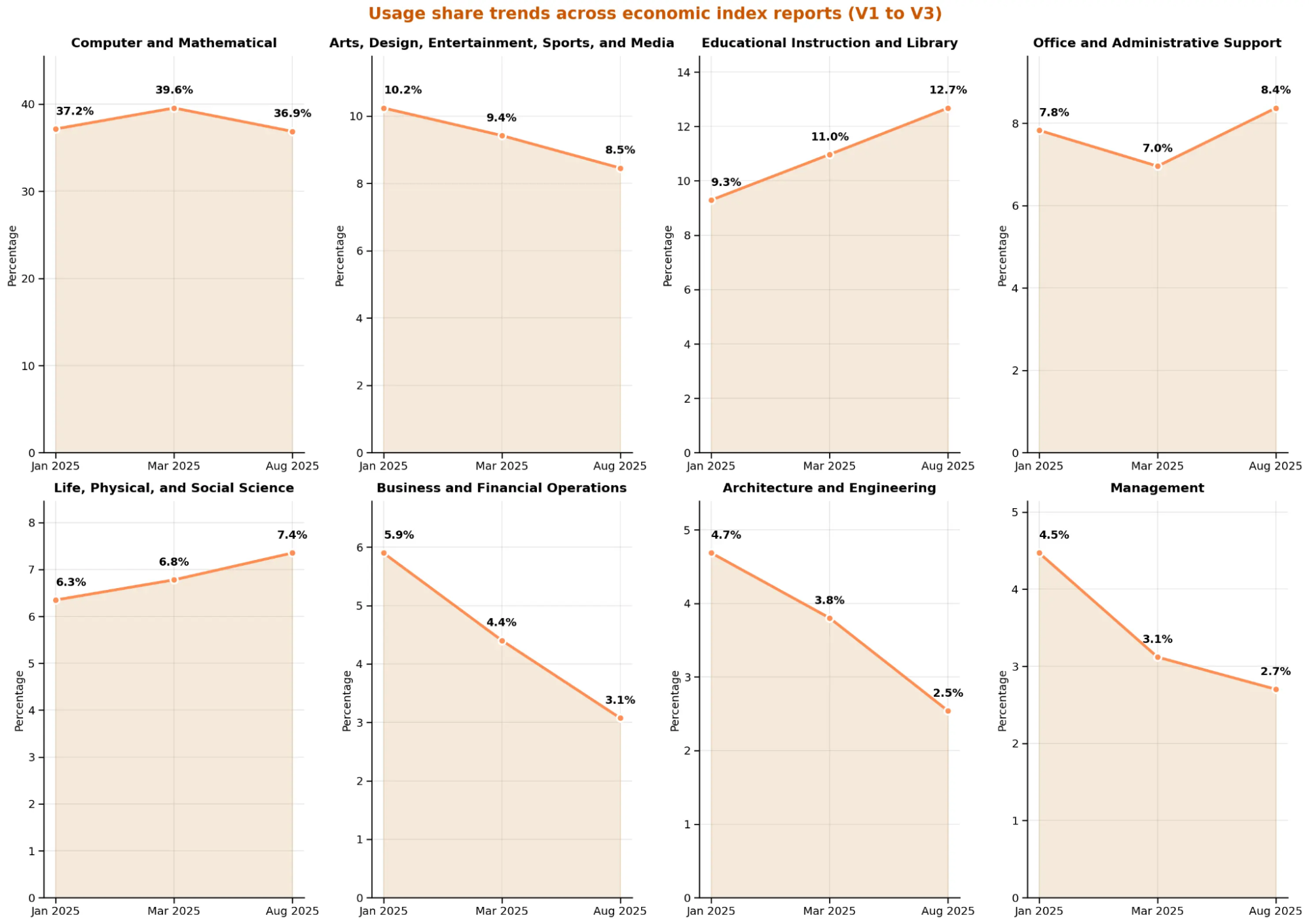

- Programming and mathematics tasks (36.9%) remain the dominant domain. However, education (rising from 9.3% to 12.7%) is growing rapidly, while the shares of business and finance, art and design, management, and architecture and engineering keep declining. This is likely due to the characteristics of the Claude model (it tends to over-think and is highly rational, but is prone to "high hallucination").

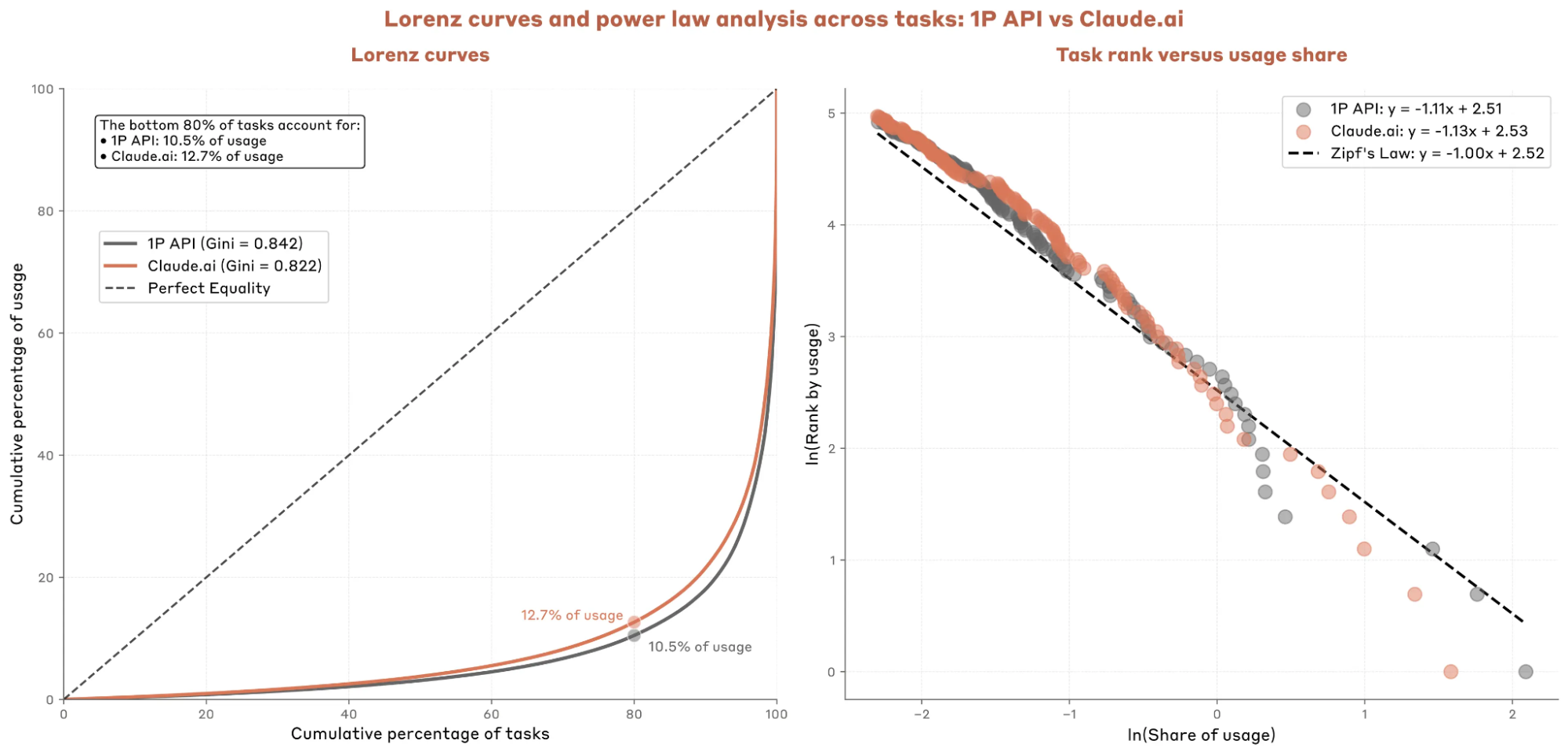

- The bottom 80% of tasks (ranked by usage) account for only 10.5%-12.7% of total token usage.

- AI usage is highly unbalanced across demographics and application domains. Although Claude's data likely "overestimates" this imbalance because of the model's specific characteristics, the pattern aligns well with common sense. It also suggests we still have a long way to go before reaching so-called Artificial General Intelligence (AGI).

Now, here are the charts and brief explanations.

AI adoption rate in US enterprises, Business Trends and Outlook Survey (Census Bureau). Note: AI adoption is calculated as the percentage of businesses answering "Yes" to the question: "In the last two weeks, did this business use Artificial Intelligence (AI) in producing goods or services? (Examples of AI: machine learning, natural language processing, virtual agents, voice recognition, etc.)".

Claude.ai usage over time. Each panel shows the share of sampled Claude.ai conversations associated with tasks from each major domain group. Usage for science and education tasks has increased significantly. Domains are ordered by their usage in the first report.

There is a significant positive correlation between AI adoption rates and a country's or region's GDP per capita (I omitted the maps because they contained the errors mentioned above). However, I believe this correlation is overestimated in Claude's case. The reason is simple: Claude offers a very small free allowance, so it is used more for tasks such as code generation, which previously had higher barriers to entry.

The Lorenz curve on the left shows that the bottom 80% of tasks account for only 10.5%-12.7% of total usage (covering both API calls and direct Claude app usage).
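
To make the Lorenz-curve reading concrete, here is a minimal Python sketch of how such a share could be computed from per-task usage counts. The function name `bottom_share` and the Pareto-distributed sample are my own illustrative assumptions; this is not Anthropic's data or method.

```python
import random


def bottom_share(usage, task_fraction=0.8):
    """Share of total usage contributed by the least-used `task_fraction` of tasks.

    This is one point on the Lorenz curve: sort tasks by usage (ascending),
    take the first `task_fraction` of them, and divide their usage by the total.
    """
    ordered = sorted(usage)
    cutoff = int(len(ordered) * task_fraction)
    return sum(ordered[:cutoff]) / sum(ordered)


random.seed(0)
# Hypothetical heavy-tailed usage per task (synthetic, NOT Anthropic's data).
usage = [random.paretovariate(1.1) for _ in range(10_000)]

print(f"Bottom 80% of tasks -> {bottom_share(usage):.1%} of total usage")
```

With perfectly even usage the function returns 80%; the more heavy-tailed the distribution, the further the result falls below that, which is the kind of gap the 10.5%-12.7% figure describes.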

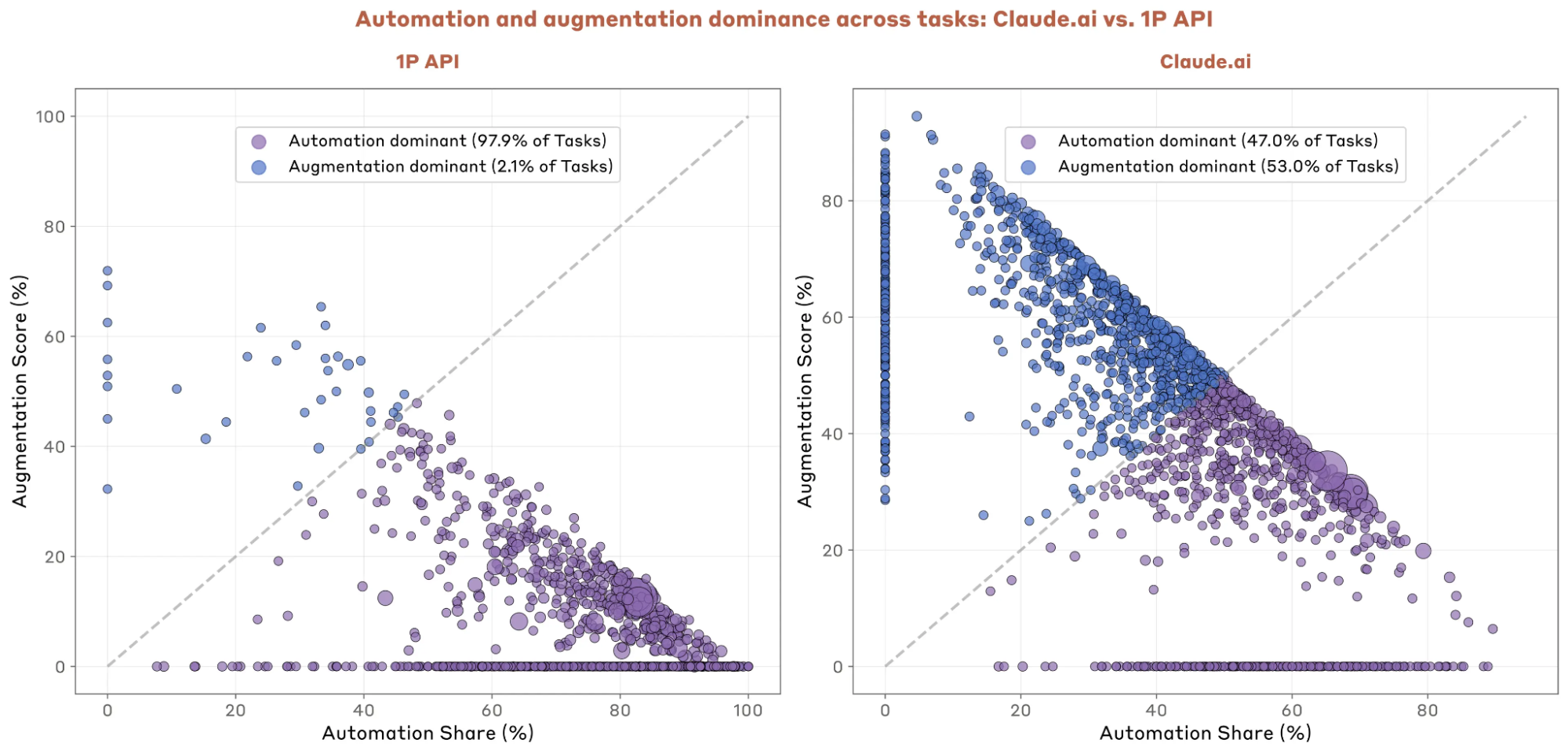

- 97% of API call tasks are automated, compared to only 47% in the Claude application.

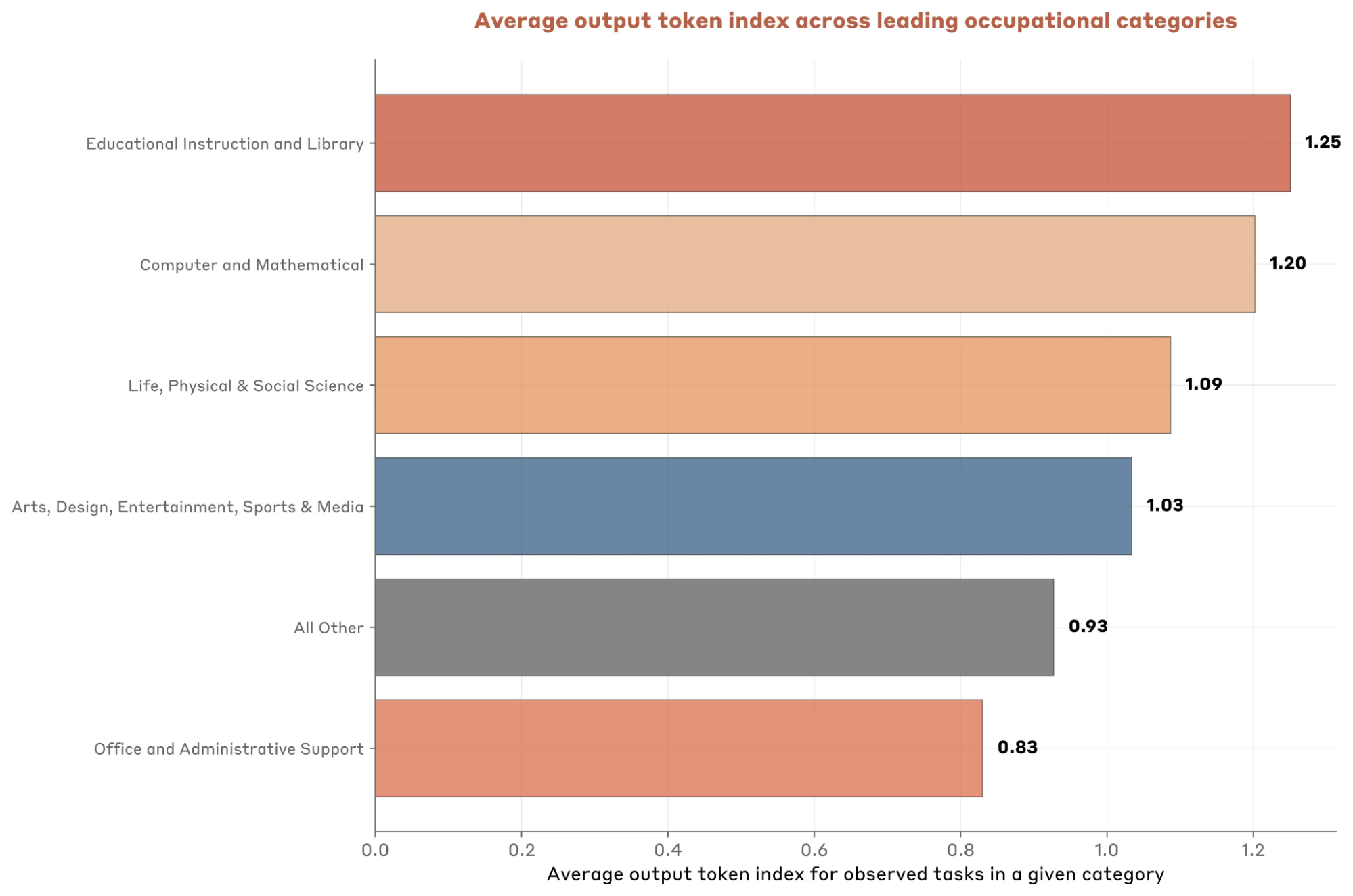

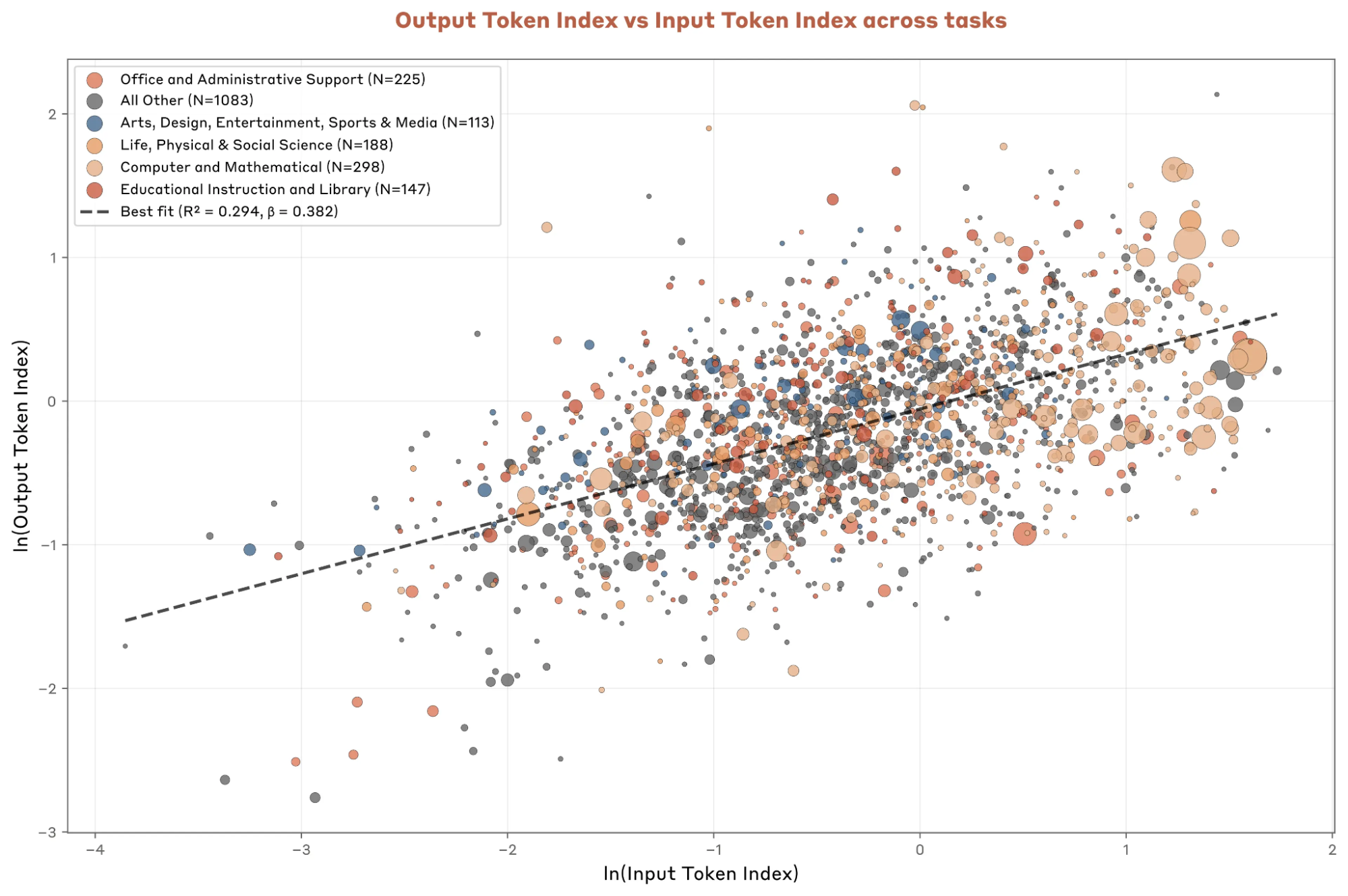

- Output token lengths differ significantly across domains. According to Anthropic's statistics, every 1% increase in input tokens comes with a 0.38% increase in output token length. Read loosely, this suggests an input (context) to output ratio of roughly 3:1. However, this statistic is problematic: remember that 20% of tasks contribute about 90% of usage. The top-right of the bubble chart makes it easy to see that many long-context tasks have input tokens higher than output tokens by an order of magnitude or more.

- In the original text, Anthropic mentioned that since it launched the "Search" feature in April, the proportion of tasks with "Internet Search" enabled rose from 0.003% to 0.27%. While the growth rate looks high, I believe the absolute volume is significantly smaller than that of ChatGPT or Gemini. This implies that Claude's data is more likely to overestimate output token lengths.
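
As a rough check on the 0.38 figure above, here is a small Python sketch. It assumes the number is a constant elasticity (the slope of a log-log fit), which is my reading rather than something the text confirms; the function name `output_scale` is hypothetical. Under that assumption the figure describes how output scales as input grows, not a fixed input-to-output ratio.

```python
def output_scale(input_scale, elasticity=0.38):
    """Multiplicative change in output length for a multiplicative change
    in input length, under an assumed constant-elasticity (log-log) model."""
    return input_scale ** elasticity


# How much output grows when input grows by 1%, 2x, 10x and 100x.
for k in (1.01, 2, 10, 100):
    print(f"input x{k:<6} -> output x{output_scale(k):.3f}")
```

Under this assumption, a tenfold increase in input comes with output growing by only about 2.4 times, so the gap between input and output widens as contexts get longer. That is consistent with the long-context tasks in the top-right of the bubble chart.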